The convergence of robotics and artificial intelligence has ushered in a paradigm shift, moving intelligence from abstract algorithms to physical entities that perceive, act, and learn within the real world. This is the domain of embodied intelligence, and its quintessential carrier is the embodied AI robot. As someone deeply immersed in this field, I observe that the fundamental tenet is clear: cognition is not a disembodied process but is constructed through an agent’s dynamic interactions with its environment. An embodied AI robot, therefore, is not merely a machine executing pre-programmed commands; it is an artificial agent that leverages its physical form—its sensors and actuators—to build a model of the world and execute tasks with a degree of autonomy and understanding. The significance of this pursuit is profound. From transforming manufacturing floors and assisting in complex surgical procedures to providing companionship and aid in domestic settings, embodied AI robots promise to redefine our interaction with technology, demanding more natural interfaces, robust safety, and adaptive intelligence.

The architecture of an embodied AI robot can be understood by analogy with a biological system. It consists of a “brain” unit and a physical “body.” The brain encompasses high-level cognition and decision-making (akin to the cerebral cortex), refined motion control and coordination (reminiscent of the cerebellum), and low-level signal routing and reflexive functions (similar to the brainstem). The body comprises the sensors (eyes, ears, and tactile skin) and the actuators (limbs, grippers, and wheels) that enable interaction. Tight coupling between these components allows the embodied AI robot to close the perception-cognition-action loop, which improves its ability to operate in unstructured, complex environments. With the advent of large foundation models, these robots are now poised to “understand” nuanced human language, decompose abstract instructions into actionable steps, and exhibit unprecedented morphological and functional versatility.

Core Technological Pillars of Embodied AI Robots

The realization of a competent embodied AI robot rests on several interdependent technological pillars. Each addresses a critical part of the intelligent pipeline, from sensing the world to physically altering it.

1. Multimodal Perception and Fusion

For an embodied AI robot, perception is the foundation. It employs a suite of heterogeneous sensors—RGB-D cameras, LiDAR, inertial measurement units (IMUs), microphones, and tactile sensors—to construct a comprehensive and redundant representation of its surroundings. The core challenge lies in fusion: integrating these disparate data streams (images, point clouds, inertial data, sound) into a coherent, temporally synchronized, and actionable world model.
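One small piece of the synchronization problem can be sketched in code: pairing each camera frame with the IMU sample closest to it in time. This is a minimal illustration, not a full fusion stack; the function names, the sample rates, and the `max_gap` threshold are assumptions chosen for the example.

```python
from bisect import bisect_left

def nearest_sample(timestamps, t):
    """Return the index of the timestamp closest to t (timestamps must be sorted)."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer in time.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1

def align(frame_times, imu_times, max_gap=0.01):
    """Pair each camera frame with its nearest IMU sample.

    Pairs whose time difference exceeds max_gap seconds are dropped,
    so a frame with no nearby IMU data is never fused with stale data.
    """
    pairs = []
    for frame_index, t in enumerate(frame_times):
        j = nearest_sample(imu_times, t)
        if abs(imu_times[j] - t) <= max_gap:
            pairs.append((frame_index, j))
    return pairs

# Hypothetical streams: a ~30 Hz camera and a 200 Hz IMU that stops at 0.195 s.
frame_times = [0.000, 0.033, 0.066, 0.500]
imu_times = [k * 0.005 for k in range(40)]
pairs = align(frame_times, imu_times)
```

The last frame (at 0.5 s) has no IMU sample within the allowed gap and is discarded rather than matched to stale data. Real systems often interpolate between neighbouring samples or rely on hardware triggering instead.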

Object detection, segmentation, and 3D reconstruction generate the foundational multimodal data. Modern approaches rely heavily on transformer-based architectures, popularized by language models like GPT and BERT, for their strength in cross-modal association learning. Models are trained on massive datasets to align visual, linguistic, and sometimes physical concepts. The learning objective for a vision-language model can be conceptualized as maximizing the alignment between the embedded representations of a visual scene \(V\) and its textual description \(T\):

$$ \max_{\theta} \sum_{(V, T) \in \mathcal{D}} \text{sim}(f_{\theta}(V), g_{\theta}(T)) $$

where \(f_{\theta}\) and \(g_{\theta}\) are deep neural network encoders for vision and text, respectively, \(\text{sim}\) is a similarity function (e.g., cosine similarity), and \(\mathcal{D}\) is the training dataset. “Embodied” variants like PaLM-E take this further by integrating such models with real-time sensor data and robotic state information to directly inform action sequences.
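To make the alignment objective concrete, here is a minimal sketch using cosine similarity as \(\text{sim}\). One caveat: maximizing similarity over matched pairs alone can be satisfied trivially by mapping everything to the same vector, so contrastive training typically also pushes mismatched pairs apart. The sketch therefore uses an InfoNCE-style loss, which is an assumption on my part and not something the formula above specifies. The embeddings are hand-written stand-ins for encoder outputs \(f_{\theta}(V)\) and \(g_{\theta}(T)\).

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def contrastive_loss(image_embs, text_embs, temperature=0.1):
    """Image-to-text InfoNCE loss; row i of each list is a matched pair."""
    n = len(image_embs)
    total = 0.0
    for i in range(n):
        logits = [cosine(image_embs[i], text_embs[j]) / temperature
                  for j in range(n)]
        # Numerically stable log-sum-exp over all candidate captions.
        m = max(logits)
        log_norm = m + math.log(sum(math.exp(l - m) for l in logits))
        # Cross-entropy with the matched caption as the correct class.
        total += log_norm - logits[i]
    return total / n

# Toy embeddings (illustrative values, not real encoder outputs).
image_embs = [[1.0, 0.0], [0.0, 1.0]]
text_embs = [[0.9, 0.1], [0.1, 0.9]]
swapped_text_embs = [text_embs[1], text_embs[0]]

loss_aligned = contrastive_loss(image_embs, text_embs)
loss_swapped = contrastive_loss(image_embs, swapped_text_embs)
```

The loss is small when each image is most similar to its own caption and large when the captions are swapped, which is the behaviour the maximization above is meant to encourage.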

The following table summarizes key sensor modalities and their fusion challenges:

| Sensor Modality | Data Type | Primary Role | Fusion Challenge |
|---|---|---|---|
| RGB Camera | 2D Pixel Array (Color) | Object recognition, scene understanding | Lack of depth information; sensitive to lighting |
| Depth Camera (e.g., ToF, Structured Light) | 2.5D Point Cloud/Depth Map | 3D geometry, distance measurement | Noise under bright light/transparent surfaces; limited range |
| LiDAR | 3D Point Cloud | High-precision 3D mapping, localization | Sparse data; difficulty with reflective surfaces; high cost |
| IMU | Linear Acceleration, Angular Velocity | Ego-motion estimation, orientation | Cumulative drift error over time |
| Microphone Array | Audio Signal | Sound source localization, speech recognition | Ambient noise interference; reverberation |

Fusion algorithms range from classical probabilistic methods (Kalman Filters, Particle Filters) for state estimation to deep learning models (Multimodal Transformers) for joint representation learning. The choice depends on the required latency, accuracy, and computational resources available on the embodied AI robot.
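As an illustration of the classical end of that range, the following is a minimal scalar Kalman filter that fuses two range readings of the same obstacle. The sensor values and noise variances are made-up numbers for the example; a real system would estimate a multi-dimensional state and calibrate the noise models.

```python
def kalman_predict(mean, var, motion, motion_var):
    """Propagate the estimate through a known motion; uncertainty grows."""
    return mean + motion, var + motion_var

def kalman_update(mean, var, measurement, meas_var):
    """Fuse a Gaussian estimate with one noisy scalar measurement."""
    gain = var / (var + meas_var)          # how much to trust the measurement
    new_mean = mean + gain * (measurement - mean)
    new_var = (1.0 - gain) * var           # uncertainty shrinks after fusion
    return new_mean, new_var

# Start from a vague prior on the distance to an obstacle (metres).
mean, var = 0.0, 1000.0
# Hypothetical depth-camera reading: 2.10 m with standard deviation 0.2 m.
mean, var = kalman_update(mean, var, 2.10, 0.2 ** 2)
# Hypothetical LiDAR reading: 2.00 m with standard deviation 0.1 m.
mean, var = kalman_update(mean, var, 2.00, 0.1 ** 2)
```

The fused estimate lands near 2.02 m, closer to the LiDAR reading because its variance is lower, and the fused variance is smaller than either sensor's on its own.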

2. Autonomous Decision-Making and Learning

This pillar constitutes the “mind” of the embodied AI robot. The decision-making system enables the robot to assess its state, evaluate potential actions, and choose behaviors that maximize task success. A formal framework for this is the Markov Decision Process (MDP), defined by the tuple \((S, A, P, R, \gamma)\):

- \(S\): Set of states (the perceived world).

- \(A\): Set of actions the robot can take.

- \(P(s_{t+1} | s_t, a_t)\): State transition probability.

- \(R(s_t, a_t)\): Reward received after taking action \(a_t\) in state \(s_t\).

- \(\gamma\): Discount factor for future rewards.

The robot’s goal is to learn a policy \(\pi(a_t | s_t)\) that maximizes the expected cumulative reward: \( \mathbb{E}_{\pi}[\sum_{t=0}^{\infty} \gamma^t R(s_t, a_t)] \).
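When \(P\) and \(R\) are known and the state space is small, an optimal policy can be computed directly with value iteration. The sketch below does this for a three-state toy task; the states, transition probabilities, and rewards are invented for illustration, and real robots rarely have such a compact, fully known model.

```python
def q_value(s, a, V, P, R, gamma):
    """Expected return of taking action a in state s, then following V."""
    return R[s][a] + gamma * sum(p * V[s2] for p, s2 in P[s][a])

def value_iteration(states, actions, P, R, gamma=0.9, tol=1e-8):
    """P[s][a] is a list of (probability, next_state); R[s][a] is a reward."""
    V = {s: 0.0 for s in states}
    while True:
        delta = 0.0
        for s in states:
            best = max(q_value(s, a, V, P, R, gamma) for a in actions)
            delta = max(delta, abs(best - V[s]))
            V[s] = best
        if delta < tol:
            break
    policy = {s: max(actions, key=lambda a: q_value(s, a, V, P, R, gamma))
              for s in states}
    return V, policy

# Toy task: approach an object, then grasp it. "done" is absorbing.
states = ["far", "near", "done"]
actions = ["move", "grasp"]
P = {
    "far":  {"move": [(0.9, "near"), (0.1, "far")], "grasp": [(1.0, "far")]},
    "near": {"move": [(1.0, "far")], "grasp": [(0.8, "done"), (0.2, "near")]},
    "done": {"move": [(1.0, "done")], "grasp": [(1.0, "done")]},
}
R = {
    "far":  {"move": -1.0, "grasp": -2.0},
    "near": {"move": -1.0, "grasp": 8.0},
    "done": {"move": 0.0, "grasp": 0.0},
}
V, policy = value_iteration(states, actions, P, R)
```

The resulting policy moves when far and grasps when near, and the value of `near` exceeds that of `far` because fewer discounted steps separate it from the reward.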

Three primary methodological strands are shaping how embodied AI robots learn to make decisions:

| Approach | Core Mechanism | Advantages | Limitations for Embodiment |
|---|---|---|---|
| Large Model-Based Planning | Uses LLMs/VLMs as knowledge bases and planners to decompose natural language instructions into action sequences or code. | Leverages vast prior knowledge; excellent for task parsing and high-level planning. | Lacks physical commonsense; plans may be physically infeasible or unsafe without grounding. |
| Perception-Action Planning | Connects perceived world state (e.g., object poses, scene graph) to symbolic planners (e.g., PDDL) or neural planners. | Physically grounded; can be verifiable and interpretable. | Requires accurate perception and predefined symbolic representations; struggles with novelty. |
| Reinforcement Learning (RL) | The robot (agent) learns optimal policy \(\pi^*\) through trial-and-error interaction with a simulated or real environment. | Can discover novel, high-performing strategies; adapts to complex dynamics. | Extremely sample inefficient; sim-to-real transfer is challenging; reward design is critical and difficult. |

In practice, the most promising path for advanced embodied AI robots involves hybrid systems. For example, an LLM might propose a high-level plan (“make a cup of coffee”), a perception module grounds this in the immediate scene (“coffee machine is on the counter”), and a trained RL policy executes the low-level visuomotor control (“approach, grasp handle, press button”).

3. Motion Control and Planning

This is the translation of decisions into physical motion. It is split into two interrelated problems: planning (what path to take) and control (how to accurately follow it). For an embodied AI robot, this must account for kinematics, dynamics, obstacles, and often, real-time constraints.

Motion Planning: The goal is to find a collision-free path from a start configuration \(q_{start}\) to a goal configuration \(q_{goal}\) in the robot’s configuration space \(C\). Sampling-based planners like Rapidly-exploring Random Trees (RRT) and its asymptotically optimal variant RRT* are widely used for high-dimensional spaces. The algorithm incrementally builds a tree \(T\) rooted at \(q_{start}\) by sampling a random point \(q_{rand}\) in \(C\), finding the nearest node \(q_{near}\) in \(T\), and extending from \(q_{near}\) towards \(q_{rand}\) by a step size \(\eta\) to create \(q_{new}\), which is added to the tree only if the motion is collision-free. The process continues until \(q_{new}\) is within a threshold of \(q_{goal}\).
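The RRT loop described above can be sketched for a 2D point robot as follows. This is a simplified illustration under several assumptions: the configuration space is a rectangle with one circular obstacle, only the new node (not the whole connecting segment) is collision-checked, and a small goal bias is added to the sampler, a common practical tweak that is not part of the description above. The function and parameter names are hypothetical.

```python
import math
import random

def rrt(start, goal, is_free, bounds, step=0.5, goal_tol=0.5,
        goal_bias=0.1, max_iters=3000, seed=0):
    """Grow a tree from start; return a path to the goal region or None."""
    rng = random.Random(seed)
    (xmin, xmax), (ymin, ymax) = bounds
    nodes = [start]      # tree vertices
    parent = [None]      # parent[i] is the index of node i's parent
    for _ in range(max_iters):
        # Sample q_rand (occasionally the goal itself, as a bias).
        if rng.random() < goal_bias:
            q_rand = goal
        else:
            q_rand = (rng.uniform(xmin, xmax), rng.uniform(ymin, ymax))
        # Find q_near by brute-force nearest-neighbour search.
        i_near = min(range(len(nodes)),
                     key=lambda i: math.dist(nodes[i], q_rand))
        q_near = nodes[i_near]
        d = math.dist(q_near, q_rand)
        if d == 0.0:
            continue
        # Extend from q_near towards q_rand by at most one step (eta).
        t = min(step, d) / d
        q_new = (q_near[0] + t * (q_rand[0] - q_near[0]),
                 q_near[1] + t * (q_rand[1] - q_near[1]))
        if not is_free(q_new):   # simplified: checks the node only
            continue
        nodes.append(q_new)
        parent.append(i_near)
        if math.dist(q_new, goal) <= goal_tol:
            # Walk parent links back to the root to recover the path.
            path, i = [], len(nodes) - 1
            while i is not None:
                path.append(nodes[i])
                i = parent[i]
            return path[::-1]
    return None

def is_free(q):
    """Free space is everything outside a disc of radius 1.5 at (5, 5)."""
    return math.dist(q, (5.0, 5.0)) > 1.5

path = rrt((1.0, 1.0), (9.0, 9.0), is_free,
           bounds=((0.0, 10.0), (0.0, 10.0)))
```

The returned path is feasible but usually jagged and far from the shortest. RRT* addresses this by rewiring the tree as it grows, and a production planner would use a spatial index such as a k-d tree in place of the linear nearest-neighbour scan.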

Motion Control: This involves generating the precise torque commands \(\tau\) for the robot’s actuators to achieve a desired trajectory \(q_d(t)\). A classic and foundational method is PID control:

$$ \tau = K_p e(t) + K_i \int_0^t e(t') \, dt' + K_d \frac{de(t)}{dt} $$

where \(e(t) = q_d(t) - q(t)\) is the tracking error. While effective for many applications, complex embodied AI robots operating in contact-rich environments require more advanced strategies:

- Impedance/Admittance Control: Regulates the dynamic relationship between force and motion, crucial for safe human-robot interaction.

- Model Predictive Control (MPC): Solves an optimization problem over a finite time horizon to determine control actions, explicitly handling constraints.

- Learning-Based Control: Uses neural networks, often trained via RL, to learn direct or improved control policies \(\pi_{\theta}(a_t | s_t)\), bypassing the need for an explicit dynamic model.
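As a baseline for comparison with these advanced strategies, the PID law given earlier can be discretized in a few lines. The sketch below tracks a constant setpoint on a single simulated joint; the plant (unit inertia, viscous friction, a constant disturbance torque standing in for gravity) and the gains are assumptions picked so that the loop is stable, not tuned values from any real robot.

```python
class PID:
    """Discrete PID controller: tau = Kp*e + Ki*integral(e) + Kd*de/dt."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, error, dt):
        self.integral += error * dt
        # No derivative on the first call, which avoids an initial kick.
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return (self.kp * error
                + self.ki * self.integral
                + self.kd * derivative)

def simulate(q_desired=1.0, steps=5000, dt=0.001):
    """Simulate 5 s of a hypothetical single joint under PID control."""
    pid = PID(kp=100.0, ki=200.0, kd=20.0)
    q, q_dot = 0.0, 0.0
    for _ in range(steps):
        tau = pid.update(q_desired - q, dt)
        # Plant: unit inertia, damping 0.5, constant disturbance torque -2.
        q_ddot = tau - 0.5 * q_dot - 2.0
        q_dot += q_ddot * dt
        q += q_dot * dt
    return q

final_position = simulate()
```

The integral term is what removes the steady-state offset caused by the constant disturbance; a proportional-derivative controller with the same gains would settle about 0.02 rad short of the target.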

4. Natural and Intuitive Human-Robot Interaction (HRI)

For an embodied AI robot to be truly useful in human spaces, it must communicate and collaborate effectively. This extends beyond simple voice commands to include understanding intent, context, and even affect.

Modern HRI leverages the power of large models. For instance, a system can use a speech recognizer to convert audio to text, an LLM to interpret the instruction and its context, and a vision model to ground the request in the current scene. The robot’s response can be multimodal: verbal reply, expressive gesture, and physical action. Research in affective computing aims to equip robots with the ability to recognize human emotional states from facial expressions, vocal prosody, and body language, and to respond with appropriate empathy; empathic voice interfaces are one example of this line of work.

The technical pipeline for a natural language-driven task might be:

1. Speech & Language Understanding: \( \text{Audio Signal} \xrightarrow{\text{ASR}} \text{Text} \xrightarrow{\text{LLM}} \text{Task Semantics/Intent} \)

2. Visual Grounding: \( \text{Camera Image} \xrightarrow{\text{VLM}} \text{Object Labels/Poses} \)

3. Action Generation: \( \text{(Intent, Scene Context)} \xrightarrow{\text{Planner}} \text{Action Sequence} \xrightarrow{\text{Controller}} \text{Motor Commands} \)
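The three stages above can be wired together as in the following sketch. Every component here is a hypothetical stub: `transcribe` stands in for an ASR system, `parse_intent` for an LLM, and the `detections` dictionary for the output of a VLM. The point is only to show how data flows between stages and where a grounding failure surfaces.

```python
from dataclasses import dataclass

@dataclass
class Intent:
    verb: str      # what to do, e.g. "pick"
    target: str    # what to do it to, e.g. "cup"

def transcribe(audio):
    """ASR stub: a real system would decode a waveform here."""
    return audio["transcript"]

def parse_intent(text):
    """LLM stub: naive parse taking the first word as verb, last as target."""
    words = text.lower().rstrip(".!?").split()
    return Intent(verb=words[0], target=words[-1])

def ground(intent, detections):
    """Visual grounding stub: look up the target's pose among detections."""
    return detections.get(intent.target)

def plan(intent, pose):
    """Planner stub: turn a grounded intent into a symbolic action sequence."""
    if pose is None:
        return [("report_failure", intent.target)]
    return [("move_to", pose), (intent.verb, intent.target)]

def run_pipeline(audio, detections):
    text = transcribe(audio)
    intent = parse_intent(text)
    pose = ground(intent, detections)
    return plan(intent, pose)

# Hypothetical scene: the vision model reports one cup at (x, y, z) in metres.
detections = {"cup": (0.4, 0.1, 0.02)}
actions = run_pipeline({"transcript": "Pick up the cup."}, detections)
missing = run_pipeline({"transcript": "Pick up the spoon."}, detections)
```

Keeping the stages behind narrow function boundaries like this makes it possible to swap a stub for a real model without touching the rest of the pipeline, and gives an explicit place to handle the case where the requested object is not in view.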

Persistent Challenges and Technical Bottlenecks

Despite rapid progress, the development of robust and versatile embodied AI robots is constrained by significant, interconnected challenges.

1. Perception and Understanding Under Uncertainty. Real-world perception is noisy and incomplete. Depth sensors (e.g., ToF) suffer from errors on the order of centimeters under challenging lighting or with specular surfaces. Multimodal fusion must handle asynchronous data streams and occlusions. While deep learning models offer robustness, they are data-hungry and can fail catastrophically on out-of-distribution inputs, a serious safety concern for an embodied AI robot operating in the open world.

2. The Sample Efficiency and Generalization Crisis in Learning. Reinforcement learning, while powerful, is notoriously sample-inefficient. Training a complex robot policy can require millions of simulated episodes, equivalent to years of real-time experience. Furthermore, policies trained in simulation often fail to transfer to reality—the “sim-to-real gap”—due to unmodeled dynamics, perception differences, and latency. Generalizing learned skills across different objects, environments, and tasks remains a fundamental hurdle.

3. The Complexity of Dexterous Control and Long-Horizon Planning. Planning in dynamic environments with multiple agents (like humans) is computationally hard: the joint state space grows exponentially with the number of agents and the planning horizon. Controlling a high-degree-of-freedom humanoid robot for dexterous manipulation (e.g., in-hand rotation, tool use) requires managing contact forces, slippage, and object compliance, problems that are still at the forefront of robotics research. Achieving human-like precision (e.g., sub-millimeter accuracy for surgical robots) demands tight sensorimotor integration.

4. Achieving Truly Natural and Context-Aware Interaction. Understanding human intent goes beyond literal speech. It requires a deep understanding of context, social norms, and non-verbal cues. Current models struggle with sarcasm, implied tasks, and multi-turn dialogue where intent evolves. Creating robots that can proactively assist without being intrusive, or explain their failures in an understandable way, is a major HRI challenge.

5. The Tangled Web of Ethics, Safety, and Privacy. An embodied AI robot in a home or workplace collects vast amounts of sensitive visual, audio, and spatial data. Ensuring this data is encrypted (e.g., using AES-256 for storage, TLS for transmission) and processed with privacy-by-design principles is paramount. Algorithmic bias in decision-making, safety assurance in unpredictable environments, and clear legal frameworks for liability in case of malfunction or harm are unresolved societal and technical questions that must be addressed alongside technological advancement.

Future Trajectories and Strategic Imperatives

Looking forward, the evolution of the embodied AI robot will be characterized by deeper integration, bio-inspiration, and systemic innovation.

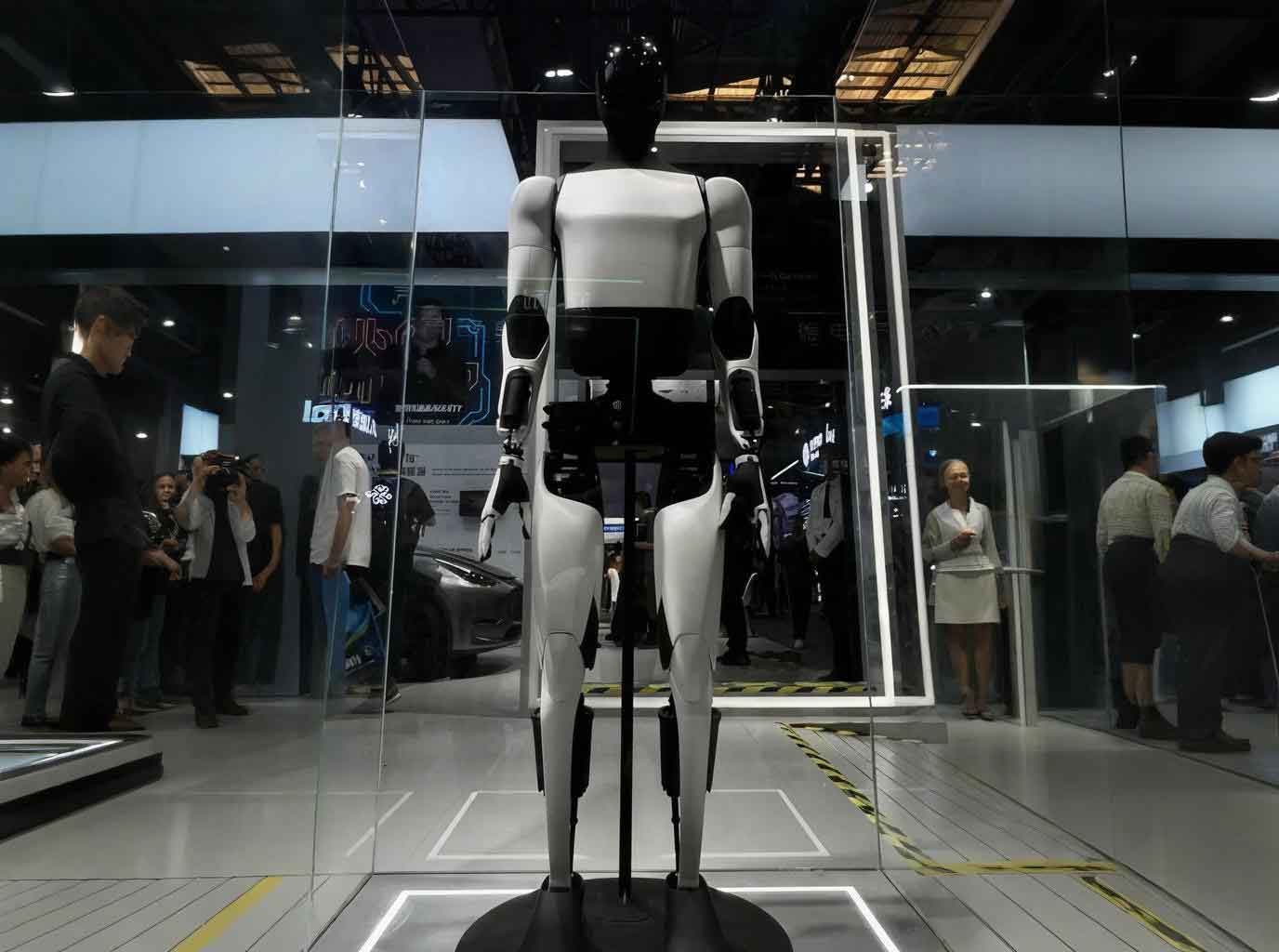

Convergent Design and Bio-Inspiration: The future embodied AI robot will likely see tighter co-design of hardware and software. Biomimetic principles will inspire new actuator technologies (like artificial muscles), compliant and tactile-sensing skins, and energy-efficient locomotion. Humanoid form factors will be pursued not for vanity, but because our world is built for human bodies; this morphology may be optimal for general-purpose embodied AI robots.

Ubiquitous Fusion with Advanced AI: The boundary between the robot and its AI “brain” will blur. We will see:

- Foundation Models for Embodiment: Large models pre-trained on internet-scale data plus physical interaction data will serve as universal priors for robot reasoning and skill acquisition.

- Edge-Cloud Hybrid Architectures: Time-critical perception and control will run on optimized hardware onboard the embodied AI robot, while complex reasoning, learning, and model updates will leverage cloud computing.

- Causal and World Models: Moving beyond pattern recognition, robots will need to learn causal models of physics and interaction to predict outcomes and plan more robustly.

Cultivating an Interdisciplinary Ecosystem: Progress hinges on talent that bridges robotics, computer science, cognitive science, materials engineering, and ethics. Educational programs must foster this cross-pollination. Furthermore, a healthy ecosystem requires open platforms (for simulation, benchmark tasks, and software), shared datasets, and collaboration across industry and academia to avoid fragmentation and accelerate the path from lab to real-world application.

Responsible Deployment and Societal Integration: Strategic guidance should prioritize the development and application of embodied AI robots in sectors with high social impact and clear need, such as healthcare (rehabilitation, elder care), hazardous environments (disaster response, nuclear decommissioning), and personalized education. Proactive engagement with policymakers, ethicists, and the public is essential to build trust and establish sensible governance frameworks for this transformative technology.

In conclusion, embodied intelligence represents a fundamental advance in our quest to create machines that understand and act in our world. The embodied AI robot is the vessel for this ambition. Its journey is fraught with formidable technical obstacles spanning perception, learning, control, and interaction, compounded by profound ethical considerations. However, the trajectory is clear: through continued research in multimodal fusion, sample-efficient and grounded learning, dexterous control, and natural HRI—all supported by interdisciplinary collaboration and responsible innovation—the embodied AI robot will evolve from a sophisticated tool into a capable, collaborative partner, unlocking new possibilities across the spectrum of human endeavor.