The evolution of artificial intelligence is marked by a profound paradigm shift from disembodied software agents to intelligent entities that physically interact with the world. This paradigm is embodied intelligence, which posits that true intelligence arises not merely from abstract computation but from the dynamic coupling of a brain (control algorithms), a body (physical morphology and sensors), and the environment. The ultimate manifestation of this concept is the embodied AI robot: a synergistic integration of hardware and software capable of autonomous perception, decision-making, and action through continuous environmental interaction and self-evolution. Unlike traditional AI models deployed on static hardware, an embodied AI robot learns and adapts through a closed-loop “perception-action” cycle, acquiring commonsense reasoning and robust skills essential for operating in the open-ended, unpredictable physical world.

The development of sophisticated embodied AI robot systems is propelled by converging advancements in machine learning, robotics, computer vision, and cognitive science. However, transitioning from conceptual frameworks to practical, reliable systems presents immense challenges. This article delves into the core research problems of embodied intelligence, analyzing its general framework, key technologies for perception and execution, mechanisms for learning and evolution, and its application across various robotic platforms, concluding with future perspectives shaped by digital twins and parallel intelligence.

The General Framework for Embodied Intelligence Research

The design and research of an embodied AI robot must transcend isolated component optimization and embrace a holistic, interactive systems perspective. While specific implementations vary with the robot’s form and function, a general framework typically revolves around three core, interconnected modules: Embodied Perception, Embodied Execution, and Embodied Learning & Evolution. These modules form a cohesive, recursive loop where perception drives execution, the outcomes of execution provide feedback to refine perception and models, and continuous learning optimizes the entire system for long-term autonomy.

1. Embodied Perception and Execution: This is the immediate “sense-think-act” cycle. Perception is not a passive reception of data but an active, task-driven process. An embodied AI robot must fuse multi-sensory data (e.g., vision, LiDAR, tactile, audio) to construct a comprehensive and actionable understanding of its surroundings. Crucially, this understanding must be robust across different domains (e.g., lighting, weather). Following perception, the execution module translates cognitive understanding into physical action. This involves trajectory planning under complex constraints (obstacles, dynamics) and precise motion control for locomotion or manipulation, all while considering the predicted consequences and risks of actions.

2. Embodied Learning and Evolution: This module encapsulates the embodied AI robot’s capacity for lifelong adaptation. It involves mechanisms for incremental knowledge acquisition, where the robot can learn new object categories or skills from novel experiences without catastrophically forgetting previous knowledge. Furthermore, through reinforcement learning and other trial-and-error methods, the robot optimizes both its behavioral policies (how to act) and, in advanced systems, its morphological strategies (how its body could be better configured). This learning enables the robot to evolve from performing simple, pre-programmed tasks to mastering complex, long-horizon activities in dynamic environments.

The interplay between these modules is continuous. Execution provides the experiential data (successes and failures) that fuels learning. The evolved knowledge and policies, in turn, enhance the efficiency and accuracy of perception and the optimality of execution strategies, creating a virtuous cycle of self-improvement for the embodied AI robot.

Core Challenges in Embodied Perception and Execution

The physical interaction of an embodied AI robot with the world demands a level of perceptual robustness and executive reliability far beyond that required for virtual agents. A promising integrated approach is the “Perception-Simulation-Execution” loop.

The Perception-Simulation-Execution Loop

This mechanism refines the classic perception-action cycle by incorporating an internal “imagination” or simulation phase. First, embodied perception algorithms process multi-modal sensor streams to create a scene representation. This representation is then fed into a simulation or world model, often powered by generative AI or physics engines. Here, the embodied AI robot can predict the outcomes of potential actions, assess risks, and reason about future states before committing to a physical act. The insights from simulation, such as identifying high-risk zones, can provide feedback to dynamically adjust the focus and parameters of the perception system. Finally, the validated action plan is sent to the execution module for physical enactment. This closed-loop integration allows for safer, more forward-looking, and computationally efficient behavior.
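The loop described above can be sketched in a few lines. This is only an illustration under strong simplifying assumptions: the world is a 1-D corridor, a hand-written `simulate` function stands in for a learned world model or physics engine, and the names `Scene`, `perceive`, `simulate`, `choose_action`, and `run` are hypothetical rather than taken from any framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scene:
    position: int         # robot cell index along a 1-D corridor
    obstacles: frozenset  # cells the robot must not enter
    goal: int             # target cell


def perceive(raw_position, raw_obstacles, goal):
    """Stand-in for multi-modal perception: build a scene representation."""
    return Scene(raw_position, frozenset(raw_obstacles), goal)


def simulate(scene, action):
    """Toy world model: predict the next cell and whether it is a collision."""
    nxt = scene.position + action
    return nxt, nxt in scene.obstacles


def choose_action(scene, actions=(-1, 0, 1)):
    """Imagine each candidate action, drop risky ones, keep the one nearest the goal."""
    safe = []
    for a in actions:
        nxt, collides = simulate(scene, a)
        if not collides:
            safe.append((abs(scene.goal - nxt), a))
    # Fall back to standing still if every imagined outcome is a collision.
    return min(safe)[1] if safe else 0


def run(start, obstacles, goal, max_steps=20):
    """Perceive, simulate, then execute, until the goal or the step budget is reached."""
    position, trace = start, [start]
    for _ in range(max_steps):
        scene = perceive(position, obstacles, goal)
        action = choose_action(scene)
        position += action  # "execution": apply the validated action
        trace.append(position)
        if position == goal:
            break
    return trace
```

In this toy setting the robot never steps into a cell that the simulation flags as a collision; it waits instead, which is the simplest form of risk-aware behavior.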

Key Technologies for Embodied Perception

1. Multi-Modal Fusion Perception: An embodied AI robot perceives the world through heterogeneous sensors. Effective fusion is critical. Methods can be categorized by their feature representation strategy.

| Fusion Method | Core Principle | Advantages | Challenges |
|---|---|---|---|
| Point-based Fusion | Projects 2D image features into 3D space as pseudo-point clouds for fusion with LiDAR data. | Leverages mature point cloud processing networks (e.g., PointNet++). | Depth estimation from images can be noisy; pseudo-point clouds may be dense and computationally heavy. |
| BEV-based Fusion | Lifts all sensor modalities (cameras, LiDAR, radar) into a unified Bird’s-Eye-View (BEV) representation. | Provides a consistent spatial layout ideal for planning; simplifies multi-modal and temporal fusion. | Transformation from perspective view to BEV can lose detailed semantic or height information. |
| Heterogeneous Fusion | Processes different modalities in their native feature spaces and fuses them at various network stages (early, late, or hybrid). | Flexible architecture that can preserve the unique strengths of each modality’s representation. | Designing effective cross-modal interaction layers (e.g., attention mechanisms) is complex. |
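To make the BEV row more concrete, here is a minimal sketch of only the rasterization step for LiDAR-like points: each `(x, y, z)` point is dropped into a ground-plane grid cell that keeps the maximum height seen. The function name `points_to_bev`, the ranges, and the cell size are illustrative assumptions; real BEV pipelines lift learned camera and LiDAR features rather than raw heights.

```python
def points_to_bev(points, x_range=(0.0, 4.0), y_range=(-2.0, 2.0), cell=1.0):
    """Rasterize (x, y, z) points into a BEV grid storing the max height per cell.

    Cells that receive no point stay None. Points outside the ranges are dropped.
    """
    cols = int((x_range[1] - x_range[0]) / cell)
    rows = int((y_range[1] - y_range[0]) / cell)
    grid = [[None] * cols for _ in range(rows)]
    for x, y, z in points:
        if not (x_range[0] <= x < x_range[1] and y_range[0] <= y < y_range[1]):
            continue
        c = int((x - x_range[0]) / cell)
        r = int((y - y_range[0]) / cell)
        # Several points can fall into one cell; only the tallest survives.
        if grid[r][c] is None or z > grid[r][c]:
            grid[r][c] = z
    return grid
```

Because two points at different heights collapse into a single cell value, the sketch also shows in miniature why the perspective-to-BEV step can discard height detail.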

2. Domain Adaptation: A practical embodied AI robot must operate in unseen environments. Domain adaptation techniques bridge the gap between training (source) and deployment (target) domains. Key approaches include:

- Data Optimization: Using techniques like domain randomization (varying textures, lighting in simulation) or image-to-image translation (e.g., CycleGAN) to create a diverse, robust training set.

- Model Optimization: Learning domain-invariant features through adversarial training, where a domain discriminator tries to distinguish source from target features, forcing the feature extractor to become domain-agnostic. The loss for this adversarial component can be formulated as part of a minimax game:

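$$ \min_{G} \max_{D} V(D, G) = \mathbb{E}_{x \sim p_{s}(x)}[\log D(G(x))] + \mathbb{E}_{x \sim p_{t}(x)}[\log(1 - D(G(x)))] $$

where $G$ is the feature extractor (playing the role of the generator in a GAN), $D$ is the domain discriminator, and $p_s$ and $p_t$ are the source and target data distributions.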
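As a rough numerical illustration of the minimax objective above, the value $V$ can be estimated from discriminator outputs alone. In this sketch `d_pos` holds the discriminator's outputs on the samples of the first expectation and `d_neg` its outputs on the samples of the second; both names, and the clipping constant `eps`, are assumptions made for the example.

```python
import math


def adversarial_value(d_pos, d_neg, eps=1e-12):
    """Empirical V(D, G): mean log D over d_pos plus mean log(1 - D) over d_neg.

    Inputs are discriminator probabilities in [0, 1]; eps guards against log(0).
    """
    first = sum(math.log(max(p, eps)) for p in d_pos) / len(d_pos)
    second = sum(math.log(max(1.0 - p, eps)) for p in d_neg) / len(d_neg)
    return first + second
```

A discriminator that separates the two sample sets well pushes the value toward 0, while one that outputs 0.5 everywhere yields $2 \log 0.5 \approx -1.386$; the feature extractor is trained to drive the discriminator toward that confused regime.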

Key Technologies for Embodied Execution

1. Trajectory Planning: Planning a safe and feasible path for an embodied AI robot is a core challenge. Methods fall broadly into model-based and learning-based families.

- Model-based Planning: Relies on explicit models of the environment and/or robot dynamics.

    - Environmental Models: Algorithms like A*, RRT, and D* search geometric or topological maps. For example, A* finds the shortest path by minimizing a cost function $f(n) = g(n) + h(n)$, where $g(n)$ is the cost from start to node $n$, and $h(n)$ is a heuristic estimate to the goal.

    - Kinodynamic Models: Algorithms like Kinodynamic RRT* consider the robot’s differential constraints (e.g., acceleration limits, non-holonomic constraints) directly in the planning loop, ensuring the resulting trajectory is dynamically feasible.

- Learning-based Planning: Uses reinforcement learning (RL) to learn optimal policies directly from interaction.

    - Model-Free RL: Algorithms like Deep Q-Networks (DQN) or Soft Actor-Critic (SAC) learn a policy $\pi(a|s)$ or a value function $Q(s,a)$ without an explicit world model. The Q-learning update rule is: $$Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha [r_{t+1} + \gamma \max_a Q(s_{t+1}, a) - Q(s_t, a_t)]$$

    - Model-Based RL: Learns or uses a model of the environment dynamics $P(s_{t+1}|s_t, a_t)$ to perform internal simulation (planning) for more data-efficient policy improvement.
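The A* cost function $f(n) = g(n) + h(n)$ mentioned above can be illustrated on a small occupancy grid. The sketch below assumes a 4-connected grid with unit step cost and uses Manhattan distance as the heuristic, which never overestimates in that setting; the function name `astar` and the grid encoding (1 for obstacle, 0 for free) are choices made for this example.

```python
import heapq


def astar(grid, start, goal):
    """A* on a 4-connected grid; grid[r][c] == 1 marks an obstacle.

    Returns the path as a list of (row, col) cells, or None if the goal is unreachable.
    """
    def h(cell):
        # Manhattan distance to the goal.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f, g, cell)
    g_cost = {start: 0}
    parent = {start: None}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale entry: a cheaper route to this cell was found later
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < len(grid) and 0 <= nc < len(grid[0]) and grid[nr][nc] == 0:
                ng = g + 1
                if ng < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = ng
                    parent[(nr, nc)] = cell
                    heapq.heappush(open_heap, (ng + h((nr, nc)), ng, (nr, nc)))
    return None
```

A kinodynamic planner would replace the four grid moves with motions that respect the robot's dynamics; the priority-queue structure stays the same.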

2. Motion Control: This translates planned trajectories into low-level actuator commands. Control strategies range from classical PID and Linear-Quadratic Regulator (LQR) for stable, linear systems to modern adaptive and intelligent control for complex, nonlinear embodied AI robot dynamics.

- Intelligent Control: Incorporates AI techniques. Neural network controllers can approximate complex inverse dynamics models. Fuzzy logic handles imprecise sensor inputs. Most notably, Deep Reinforcement Learning (DRL) Control enables an embodied AI robot to learn highly agile and adaptive motion skills, such as bipedal locomotion or dexterous manipulation, through extensive practice in simulation.
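At the classical end of this spectrum, a PID loop is short enough to sketch in full. The example below is a toy under stated assumptions: the plant is a first-order system $\dot{x} = -x + u$ integrated with forward Euler, and the gains and the names `PID` and `track` are picked for illustration, not tuned for any real actuator.

```python
class PID:
    """Textbook discrete PID controller."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def step(self, setpoint, measurement, dt):
        error = setpoint - measurement
        self.integral += error * dt
        # No derivative term on the very first sample (no previous error yet).
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def track(setpoint=1.0, steps=2000, dt=0.01):
    """Drive the toy plant dx/dt = -x + u toward the setpoint; return the final state."""
    pid, x = PID(kp=2.0, ki=1.0, kd=0.0), 0.0
    for _ in range(steps):
        u = pid.step(setpoint, x, dt)
        x += dt * (-x + u)  # forward-Euler integration of the plant
    return x
```

The integral term is what removes the steady-state error here; learned controllers are typically layered on top of, or trained to replace, loops of this kind when the dynamics are too nonlinear for fixed gains.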

Core Challenges in Embodied Learning and Evolution

For an embodied AI robot to be truly autonomous, it must possess a lifelong learning capability, continuously integrating new experiences into its existing knowledge base without degradation—a capability inspired by human cognitive development.

The “Impression-Memory-Knowledge” Cognitive Model

This theoretical model describes the hierarchical learning process of an embodied AI robot.

- Impression: Upon encountering a novel object or situation, the robot extracts and caches its raw sensory features and tentative semantic label (e.g., from a lightweight vision-language model). This forms a transient “impression.”

- Memory: When similar impressions accumulate beyond a threshold, they are consolidated into long-term memory through incremental learning. The robot’s perceptual model is updated to reliably recognize this new category, and its behavioral policies are refined to interact with it.

- Knowledge: Through reinforcement learning and cross-robot collaboration, highly generalized and robust behavioral and morphological strategies are distilled from memories. This distilled knowledge can be stored as policy network parameters or skill primitives, serving as transferable prior knowledge for other embodied AI robot instances or for tackling more complex future tasks.
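The first two stages of this model can be sketched as a small buffer that promotes a label from impression to memory once enough similar observations accumulate. Everything here is a hypothetical simplification: the class name `ImpressionBuffer`, the count-based threshold, and the use of a mean feature vector as a stand-in for a real incremental model update.

```python
from collections import defaultdict


class ImpressionBuffer:
    """Caches transient impressions and consolidates a label into memory
    once enough impressions with that tentative label have accumulated."""

    def __init__(self, threshold=3):
        self.threshold = threshold
        self.impressions = defaultdict(list)  # tentative label -> cached feature vectors
        self.memory = {}                      # consolidated label -> prototype vector

    def observe(self, label, features):
        if label in self.memory:
            return "known"
        self.impressions[label].append(features)
        if len(self.impressions[label]) >= self.threshold:
            self._consolidate(label)
            return "consolidated"
        return "impression"

    def _consolidate(self, label):
        samples = self.impressions.pop(label)
        dim = len(samples[0])
        # Prototype = element-wise mean; a placeholder for incremental learning.
        self.memory[label] = [sum(s[i] for s in samples) / len(samples) for i in range(dim)]
```

The Knowledge stage would then distill policies from many such memories, which is beyond what a buffer like this can show.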

Key Technologies for Incremental Learning (From Impression to Memory)

The primary challenge in incremental learning is catastrophic forgetting—the tendency of a neural network to overwrite previously learned knowledge when trained on new data. Strategies to mitigate this are compared below.

| Method Category | Core Mechanism | Representative Algorithm | Pros & Cons |
|---|---|---|---|
| Parameter Regularization | Estimates importance of network parameters for old tasks and penalizes their change during new training. | Elastic Weight Consolidation (EWC) | Pros: Simple, effective for task-incremental learning. Cons: Can struggle with class-incremental learning where discrimination between old and new classes is needed. |
| Knowledge Distillation | Uses outputs or intermediate features of the old model as soft targets to guide training of the new model, preserving old class relationships. | Learning without Forgetting (LwF) | Pros: More flexible, generally better for class-incremental learning. Cons: Performance depends on the similarity between old and new tasks. |
| Replay-Based | Stores a subset of old data samples or their features, or generates them via a Generative Adversarial Network (GAN), and rehearses them alongside new data. | iCaRL, Generative Replay | Pros: Often achieves high performance. Cons: Storage overhead or complexity of training a generative model. |
| Architectural | Dynamically expands the network architecture (adds new neurons or branches) to accommodate new knowledge. | Progressive Neural Networks | Pros: Naturally avoids forgetting. Cons: Model size grows indefinitely, may not be suitable for long-term deployment on an embodied AI robot. |
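As one concrete instance of the parameter-regularization row, the EWC penalty adds a quadratic term $\frac{\lambda}{2} \sum_i F_i (\theta_i - \theta^{*}_{i})^2$ to the new-task loss, where $F_i$ is a diagonal Fisher information estimate of how much parameter $i$ mattered for the old task. The sketch below works on plain Python lists; the function names and the way `fisher` is supplied are assumptions for illustration, and estimating the Fisher values is left out.

```python
def ewc_penalty(params, old_params, fisher, lam):
    """(lam / 2) * sum_i F_i * (theta_i - theta_i_old)^2."""
    return 0.5 * lam * sum(
        f * (p - q) ** 2 for p, q, f in zip(params, old_params, fisher)
    )


def total_loss(new_task_loss, params, old_params, fisher, lam=1.0):
    """New-task loss plus the EWC term that anchors important old parameters."""
    return new_task_loss + ewc_penalty(params, old_params, fisher, lam)
```

Parameters with a large Fisher value are held close to their old values, while unimportant ones remain free to move toward the new task.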

Key Technologies for Policy Evolution and Knowledge Transfer

1. Reinforcement Learning for Embodied Optimization: RL is a powerful framework for an embodied AI robot to learn optimal policies through trial and error. The process is formalized as a Markov Decision Process (MDP) defined by the tuple $(S, A, P, R, \gamma)$, where an agent in state $s_t \in S$ takes action $a_t \in A$, transitions to state $s_{t+1}$ with probability $P(s_{t+1}|s_t, a_t)$, and receives reward $r_{t+1} = R(s_t, a_t)$. The goal is to learn a policy $\pi(a|s)$ that maximizes the expected discounted return $G_t = \sum_{k=0}^{\infty} \gamma^k r_{t+k+1}$.

In the context of an embodied AI robot, RL can optimize:

- Behavioral Policies: Standard application, e.g., learning locomotion or grasping.

- Morphological Policies: Co-optimizing the design or control parameters of the robot’s body itself, often using graph neural networks (GNNs) to represent modular structures for transfer across morphologies.
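The MDP formalism above can be made concrete with tabular Q-learning on a toy problem. The sketch assumes a deterministic chain of states where moving right eventually reaches a rewarding terminal state; the environment, the hyperparameters, and the function name `q_learning` are all invented for illustration and are far simpler than any real robot task.

```python
import random


def q_learning(n_states=5, episodes=500, alpha=0.1, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning on a chain: states 0..n-1, actions 0 (left) / 1 (right).

    Entering the last state yields reward 1 and ends the episode.
    """
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]
    for _ in range(episodes):
        s = 0
        while s != n_states - 1:
            if rng.random() < epsilon:
                a = rng.randrange(2)                 # explore
            else:
                a = 0 if q[s][0] > q[s][1] else 1    # exploit; ties go right
            s_next = max(s - 1, 0) if a == 0 else s + 1
            r = 1.0 if s_next == n_states - 1 else 0.0
            # Temporal-difference target; the terminal row is never updated and stays 0.
            target = r + gamma * max(q[s_next])
            q[s][a] += alpha * (target - q[s][a])
            s = s_next
    return q
```

After training, the greedy policy prefers moving right in every non-terminal state, and the learned values decay with distance from the goal by roughly a factor of $\gamma$ per step.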

2. Multi-Agent Collaborative Optimization: In real-world settings like warehouses or smart cities, multiple embodied AI robot units must cooperate. Multi-Agent Reinforcement Learning (MARL) frameworks extend RL to environments with multiple interacting agents. Key paradigms include:

- Cooperative: Agents share a common reward, working towards a unified goal (e.g., collaborative transport). Algorithms like QMIX learn factored value functions.

- Competitive: Agents have opposing rewards (zero-sum games), as seen in adversarial robotics.

- Mixed: Environments with both cooperative and competitive elements, modeled as general-sum stochastic games.

MARL enables the emergence of complex coordinated behaviors and efficient division of labor, which is essential for scaling up the capabilities of embodied AI robot systems.

Embodied AI Robot Systems: From Mobility to Parallel Worlds

The principles of embodied intelligence are being integrated into diverse robotic platforms, each with unique challenges and opportunities.

1. Mobile Robot Systems: These include autonomous vehicles and mobile manipulators. An embodied AI robot like a self-driving car leverages the “Perception-Simulation-Execution” loop for real-time scene understanding, trajectory prediction, and risk-aware planning. The trend is towards end-to-end systems where a single foundation model, trained on massive multi-modal data, directly maps sensory input to driving commands, enhancing generalization and simplifying the stack.

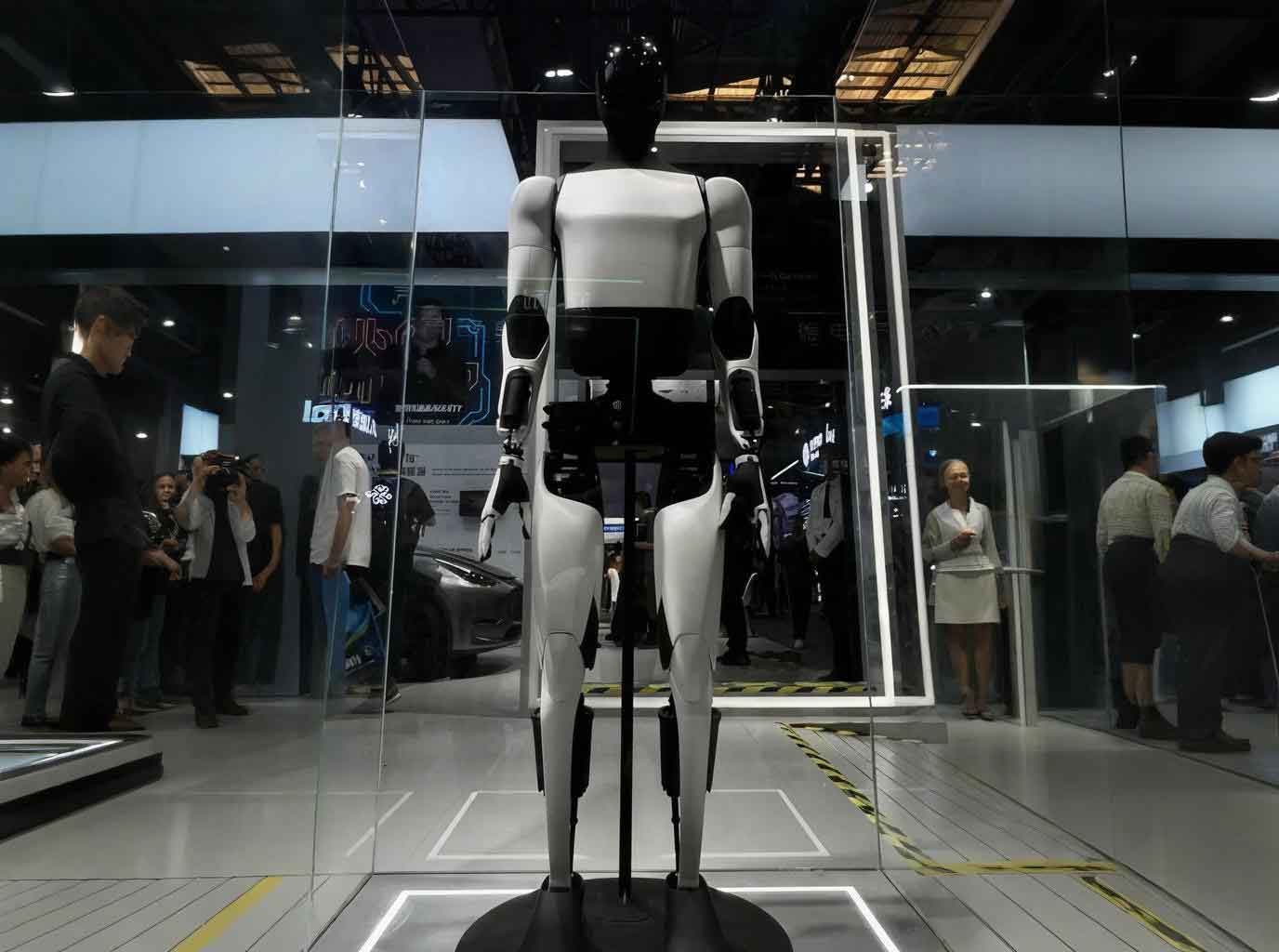

2. Bionic Robot Systems: This category includes humanoids, quadrupeds, and soft robots. Here, embodied intelligence is crucial for mastering stable, dynamic locomotion and whole-body manipulation in human-centric environments. For instance, a humanoid embodied AI robot uses deep RL to learn agile movements like walking or running, while integrating large language models for natural task understanding and instruction following (“pick up the cup next to the laptop”).

3. Parallel Robot Systems: This visionary framework, closely aligned with the concept of Digital Twins, proposes a triad: a Physical Robot in the real world, a Software Robot (AI mind) in cyberspace, and a Knowledge Robot for automated reasoning. An embodied AI robot in this system operates in a closed loop with its digital twin. The virtual counterpart can run millions of computational experiments (planning, testing failure scenarios, exploring new skills) at high speed. The optimal policies or insights are then deployed to the physical robot. This parallel execution (ACP method: Artificial systems, Computational experiments, Parallel execution) allows for safe, rapid learning, robust validation, and intelligent prescriptive control, fundamentally accelerating the development and deployment of intelligent embodied AI robot systems.

Conclusion and Future Outlook

The journey toward truly intelligent embodied AI robot systems is a grand challenge at the intersection of hardware engineering, algorithms, and cognitive science. Key future directions include:

1. Virtual-Real Fusion Data Intelligence: Developing high-fidelity, interactive simulators and efficient sim-to-real transfer techniques is paramount. These digital playgrounds will allow embodied AI robot algorithms to learn safely and at scale before real-world deployment.

2. Foundation Models and Embodied Foundation Intelligence: Large pre-trained vision-language-action models will serve as the “common sense brain” for robots. The future lies in grounding these models in physical embodiment—enabling an embodied AI robot to understand “fragile” not just from text, but from the tactile sensation and visual context of handling a glass, and to plan actions that respect this physics-based understanding.

3. Digital Twins and Parallel Intelligence: The fusion of embodied intelligence with the parallel intelligence framework represents a powerful paradigm. By creating and interacting with digital twins in a metaverse of scenarios, an embodied AI robot can achieve continuous testing, learning, and adaptation. This symbiotic relationship between the physical and artificial systems will be key to managing the complexity of real-world operations, ensuring safety, and ultimately enabling the emergence of adaptive, trustworthy, and truly autonomous embodied AI robot societies.

The evolution from rigid automation to flexible, learning-enabled embodied AI robots marks a fundamental shift. By solving the intertwined problems of perception, execution, and lifelong learning within interactive environments, we pave the way for machines that can not only perform tasks but also understand, adapt to, and collaboratively shape the physical world alongside humans.