The field of robotics stands at a transformative juncture, propelled by the convergence of embodied intelligence and large foundation models. For decades, the central challenge has been enabling a robot to navigate and act autonomously in a complex, unstructured world. Traditional paradigms, reliant on precise mapping and hand-crafted rules, often falter outside controlled environments. The advent of large language models (LLMs) and multimodal large language models (MLLMs) has started a paradigm shift, offering a path toward robots that understand, reason, and navigate with a degree of generalization and interactivity that earlier systems could not reach. This article surveys the rapidly evolving landscape of large-model-driven navigation for embodied robots: it traces the conceptual journey from symbolic AI to embodied interaction, dissects the current architectural paradigms, and charts the likely future trajectory of the technology.

From Symbolic Logic to Embodied Interaction: The Evolution of Robotic Navigation

The quest for intelligent navigation is closely tied to the broader history of artificial intelligence. The classical approach, rooted in symbolic AI, treated the robot as a geometric planner in a perfectly known world. This “sense-plan-act” pipeline involved constructing a precise metric map, computing a shortest path with a graph-search algorithm such as A* or Dijkstra's algorithm, and executing it with low-level controllers. The intelligence was hard-coded into the rules and maps. While effective in structured settings like factories, the approach proved fragile in dynamic, unknown environments where maps were unavailable or quickly became obsolete.
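To make the planning step of the classical pipeline concrete, the sketch below runs A* on a small occupancy grid. It is a minimal illustration only: the grid encoding (0 = free, 1 = obstacle), the 4-connected unit-cost motion model, and the names `astar` and `GRID` are assumptions made for this example, not part of any system described here.

```python
import heapq

def astar(grid, start, goal):
    """Shortest 4-connected path on an occupancy grid (0 = free, 1 = obstacle).

    Returns a list of (row, col) cells from start to goal, or None if
    the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance never overestimates unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # entries: (f = g + h, g, cell)
    came_from = {start: None}
    best_cost = {start: 0}

    while open_heap:
        _, cost, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:  # walk parent pointers back to start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if cost > best_cost[cell]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (new_cost + heuristic(nxt), new_cost, nxt))
    return None

# Toy map: a wall on the middle row with a single gap at column 2.
GRID = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
]
PATH = astar(GRID, (0, 0), (2, 0))
```

The planner is only as good as its map: if the gap in the wall closes after the map was built, the returned path is invalid, which is the fragility the paragraph above describes.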

The rise of deep reinforcement learning (DRL) marked the first major leap toward embodied learning. Here, the robot learns a navigation policy through trial-and-error interaction, usually in simulation, guided by a reward function $$R(s_t, a_t)$$. This paradigm enabled robots to develop complex behaviors such as dynamic obstacle avoidance and traversal of challenging terrain directly from high-dimensional sensory inputs (e.g., images, lidar). The policy $$π_θ(a_t | s_t)$$, parameterized by a deep neural network with weights θ, is trained to maximize the expected cumulative discounted reward:

$$J(θ) = \mathbb{E}_{τ \sim π_θ}\left[\sum_{t=0}^{T} γ^t R(s_t, a_t)\right]$$

where τ is a trajectory of states and actions sampled by running the policy, $$T$$ is the episode horizon, and $$γ \in [0, 1]$$ is the discount factor. However, DRL-based navigation is notoriously sample-inefficient and often struggles with catastrophic forgetting and poor generalization to new, unseen physical environments, which limits its practical deployment.
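As a small worked illustration of the objective above, the following sketch computes the discounted return of one reward sequence and a Monte Carlo estimate of $$J(θ)$$ from several sampled trajectories. The function names and the reward values are made up for this example; a real DRL pipeline would obtain the rewards by rolling out the policy in a simulator.

```python
def discounted_return(rewards, gamma):
    """Return sum_t gamma^t * r_t for one trajectory's reward sequence."""
    total = 0.0
    # Accumulate from the last step backwards: G_t = r_t + gamma * G_{t+1}.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total

def estimate_objective(reward_sequences, gamma):
    """Monte Carlo estimate of J(theta): mean discounted return over rollouts."""
    returns = [discounted_return(rewards, gamma) for rewards in reward_sequences]
    return sum(returns) / len(returns)

# Two hypothetical rollouts of the same policy.
ROLLOUTS = [
    [1.0, 1.0, 1.0],  # steady reward at every step
    [0.0, 0.0, 4.0],  # sparse reward only on reaching the goal
]
J_HAT = estimate_objective(ROLLOUTS, gamma=0.5)
```

With $$γ = 0.5$$ the first rollout returns 1 + 0.5 + 0.25 = 1.75 and the second returns 0.25 × 4 = 1.0, so the estimate is 1.375. The sparse-reward rollout shows how discounting shrinks rewards that arrive late, one reason long-horizon navigation is hard to learn from reward alone.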

The emergence of the Vision-and-Language Navigation (VLN) task further bridged the gap between perception and human instruction. VLN requires an agent to follow natural language directives (e.g., “Go to the kitchen and wait near the brown table”) using only visual observations, without a prior map. Although VLN models rely on sophisticated cross-modal learning, they still require extensive task-specific training on limited datasets and lack the broad, commonsense reasoning needed for robust real-world operation.

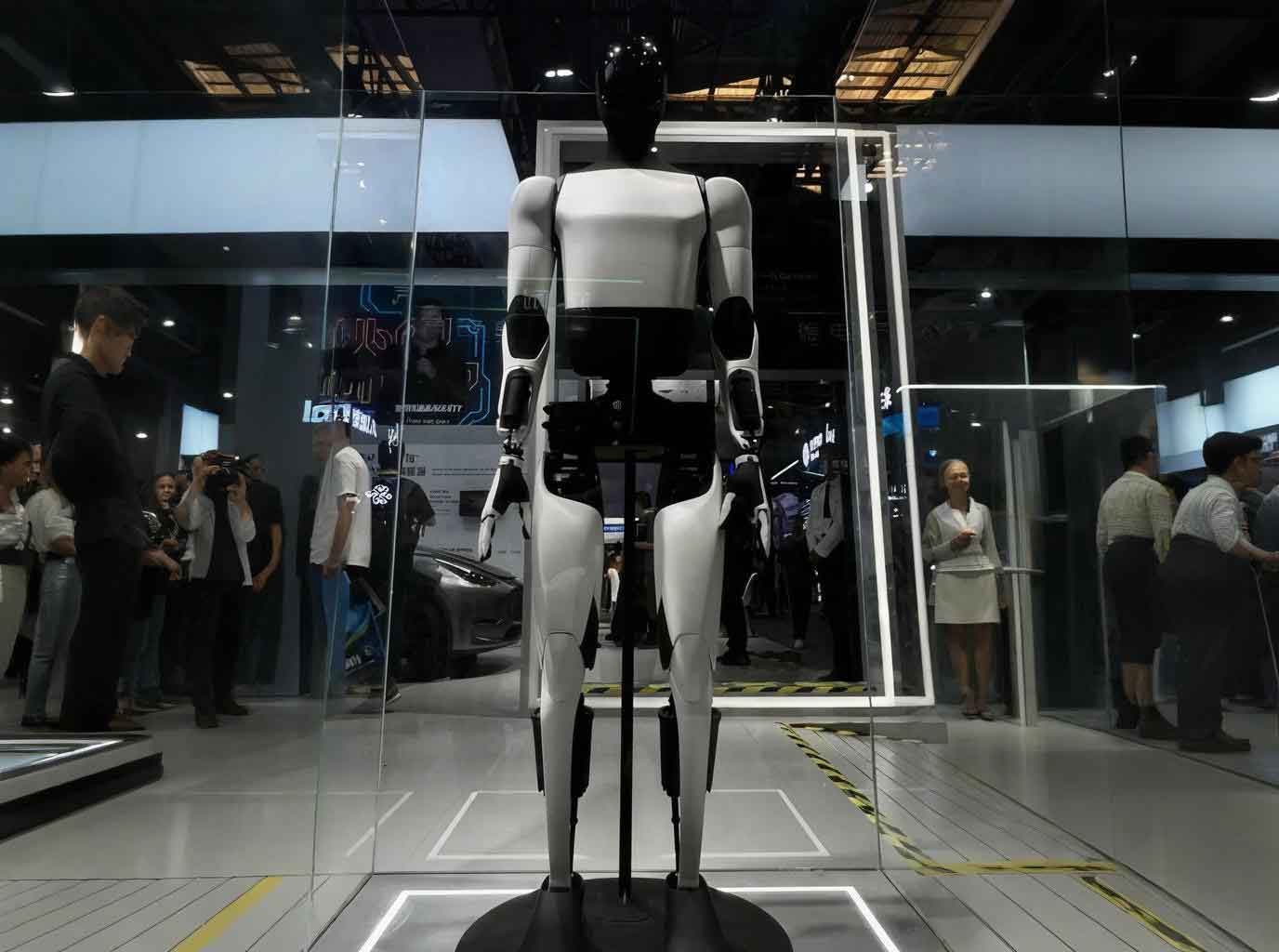

The physical form of the robot is a critical factor in its navigational capabilities. The choice of mobility platform determines which terrain the robot can traverse, and therefore which routes a planner can consider at all. The table below summarizes common embodiments.

| Embodiment Type | Key Characteristics | Navigation Advantages | Limitations |
|---|---|---|---|
| Wheeled Mobile Robot | Differential drive, Ackermann steering, omnidirectional wheels. | High efficiency and stability on flat, structured surfaces. Simple control. | Cannot traverse stairs, significant obstacles, or very rough terrain. |
| Legged Mobile Robot | Bipedal, quadrupedal, or hexapod designs with articulated limbs. | Extreme terrain adaptability. Can climb stairs, step over gaps, and navigate highly unstructured environments. | High mechanical complexity, energy consumption, and challenging motion planning/control. |
| Wheel-Legged Hybrid Robot | Combines wheels for efficiency with legs for obstacle negotiation. | Versatility. Uses wheels on flat ground for speed and deploys legs for obstacles. | Design complexity and potential compromises in both wheeled and legged performance modes. |

The Large Model Revolution: Core Architectural Paradigms

Breakthroughs in foundation models such as GPT-4, CLIP, and PaLM have redefined what is possible in embodied navigation. These models provide two key capabilities: general-purpose reasoning and multimodal grounding. An LLM can decompose a high-level command (“Bring me the coffee mug from the living room”) into a logical sequence of sub-tasks, drawing on world knowledge. An MLLM can ground this reasoning in visual perception, linking the word “mug” to pixel regions in a camera feed. This fusion enables a new class of navigation systems that operate zero-shot or few-shot, with minimal task-specific training. Current research has crystallized around two dominant architectural paradigms for integrating these models into the robot's control loop.

End-to-End Embodied Navigation Models

The end-to-end paradigm aspires to a fully integrated cognitive engine. Here, a single, massive multimodal model (e.g., PaLM-E) is trained to consume all raw or lightly processed sensor data—RGB images, lidar point clouds, proprioceptive state, and natural language instructions—and directly output low-level motor commands or high-level action primitives. The model’s internal representations seamlessly blend perception, language, and control.

The core advantage is the elimination of hand-designed modular pipelines and their associated error propagation. Information flows through a unified latent space, potentially allowing the model to discover optimal representations for the navigation task. The training objective often combines multiple losses: a language modeling loss for instruction following, a vision-language contrastive loss for grounding, and a policy loss for action generation. The action $$a_t$$ at time $$t$$ is generated directly by the model $$M$$:

$$a_t = M(τ_{0:t-1}, I, O_{0:t})$$

where $$τ_{0:t-1}$$ is the history of previous actions and states up to step $$t-1$$, $$I$$ is the natural language instruction, and $$O_{0:t}$$ is the sequence of multimodal observations up to and including the current step.

However, this paradigm faces significant hurdles. Training such models requires very large, diverse datasets of robot experience (e.g., Open X-Embodiment, the collection used to train the RT-X models), which are scarce and expensive to collect. The models are often opaque “black boxes,” making it difficult to diagnose failures or ensure safety. Furthermore, their computational cost can hinder real-time operation on onboard hardware, and they can behave unpredictably in out-of-distribution scenarios. This paradigm pursues a general-purpose robot brain, but its practical deployment remains challenging.

Hierarchical (Modular) Embodied Navigation Models

In contrast, the hierarchical paradigm adopts a divide-and-conquer strategy, leveraging large models as powerful, specialized modules within a structured pipeline. This design philosophy prioritizes interpretability, reliability, and ease of integration. The navigation process is decomposed into stages like perception, scene understanding, task planning, and motion control, with LLMs or MLLMs injected at the strategic planning level. A typical three-layer hierarchy includes:

- Perception & Grounding Layer: Vision-language models (e.g., CLIP, BLIP-2) parse visual scenes into semantic descriptions or ground language instructions onto specific objects and locations in the environment. For example, the layer may generate a textual caption of the scene or score image patches by their relevance to the query “front door.”

- Task Planning & Reasoning Layer: This is the core reasoning engine. An LLM (e.g., GPT-4) acts as a high-level planner. It receives the grounded perceptual information, the user’s instruction, and a history of actions. Using prompt engineering techniques like Chain-of-Thought, it decomposes the mission into a sequence of actionable sub-goals (e.g., “1. Exit the current room. 2. Turn left into the corridor. 3. Locate the door with a mat.”).

- Action Execution Layer: The symbolic plan from the LLM is translated into executable commands for the robot. This often involves a traditional, robust navigation stack (e.g., ROS navigation with SLAM and a local planner) or a learned policy. The LLM’s sub-goals (“go to the kitchen doorway”) are converted into costmaps or target coordinates for the lower-level controller.

The interaction can be formalized. Let the LLM-based planner $$P_{LLM}$$ produce a plan $$Π$$ as a sequence of $$K$$ symbolic actions $$σ_k$$:

$$Π = [σ_1, σ_2, \ldots, σ_K] = P_{LLM}(I, G(Φ(O_t), I), H)$$

where $$G$$ is the grounding module (MLLM) that relates observation features $$Φ(O_t)$$ to the instruction $$I$$, and $$H$$ is the history. A deterministic or learned translation function $$T$$ then maps each symbolic action to a controller command:

$$a_t \sim T(σ_k, s_t)$$

This modularity is the key strength. Each component can be independently improved, validated, or replaced. The system’s reasoning is more transparent, because the LLM’s plan can be inspected before it is executed. The design also allows reuse of reliable lower-level navigation modules that have already been tested in real time. The primary challenges are designing robust interfaces between modules to prevent error accumulation and managing the latency of sequential LLM calls.
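The formalization above can be sketched as three small functions wired together. Everything in this sketch is hypothetical and deliberately simplified: `ground` stands in for $$G$$ and matches landmark names by substring, `stub_planner` stands in for $$P_{LLM}$$ without calling any language model, and `translate` stands in for $$T$$ but ignores the robot state $$s_t$$. The observation is assumed to be already reduced to a dictionary of detected landmarks and their map coordinates, playing the role of $$Φ(O_t)$$.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Pose = Tuple[float, float]

@dataclass
class SymbolicAction:
    verb: str    # only "goto" is supported in this sketch
    target: str  # name of a grounded landmark

def ground(landmarks: Dict[str, Pose], instruction: str) -> Dict[str, Pose]:
    """G: keep only the detected landmarks that the instruction mentions."""
    text = instruction.lower()
    return {name: pose for name, pose in landmarks.items() if name in text}

def stub_planner(instruction: str, grounded: Dict[str, Pose],
                 history: List[str]) -> List[SymbolicAction]:
    """Stand-in for P_LLM: visit grounded landmarks in order of mention,
    skipping any that the history marks as already visited."""
    text = instruction.lower()
    ordered = sorted(grounded, key=text.index)
    return [SymbolicAction("goto", name) for name in ordered if name not in history]

def translate(action: SymbolicAction, grounded: Dict[str, Pose]) -> Pose:
    """T: map a symbolic action to a target coordinate for a local controller."""
    if action.verb != "goto":
        raise ValueError(f"unsupported verb: {action.verb}")
    return grounded[action.target]

def run_pipeline(instruction: str, landmarks: Dict[str, Pose], history: List[str],
                 planner: Callable = stub_planner) -> List[Pose]:
    grounded = ground(landmarks, instruction)
    plan = planner(instruction, grounded, history)  # the plan Pi, inspectable
    return [translate(step, grounded) for step in plan]

LANDMARKS = {"kitchen": (4.0, 1.5), "table": (5.0, 2.0), "sofa": (0.5, 3.0)}
INSTRUCTION = "Go to the kitchen and wait near the brown table"
GOALS = run_pipeline(INSTRUCTION, LANDMARKS, history=[])
```

Because `planner` is passed in as an argument, the stub can be swapped for a real LLM-backed planner without touching the grounding or translation code, which is the kind of independent replacement the modular design is meant to allow.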

The following table summarizes and contrasts representative works in both paradigms, highlighting the diversity of approaches within large model-driven embodied AI robot navigation.

| Model | Core Large Model(s) | Paradigm | Key Innovation | Primary Challenge Addressed |
|---|---|---|---|---|
| PaLM-E | PaLM, ViT | End-to-End | Embeds continuous sensor data directly into LLM’s token space for multimodal, embodied reasoning. | Integrating vision, language, and control into a single, generative model. |
| SayNav | LLM (e.g., GPT) | Hierarchical | Incrementally builds a 3D scene graph during exploration and uses LLM for dynamic, long-horizon planning. | Long-term planning and exploration in large, unknown indoor spaces. |
| LM-Nav | GPT-3, CLIP, ViNG | Hierarchical | Composes off-the-shelf models for zero-shot outdoor navigation: LLM for instruction parsing, CLIP for visual grounding, ViNG for local policy. | Zero-shot generalization to novel outdoor environments. |
| VLM Frontier Map (VLFM) | VLM (e.g., BLIP-2) | Hierarchical | Uses a VLM to score frontier exploration points against a language description of the target, creating a semantic value map. | Improving efficiency of exploration in object goal navigation. |
| ESC | GLIP, LLM | Hierarchical | Uses Probabilistic Soft Logic to incorporate commonsense knowledge (e.g., “mugs are likely in kitchens”) as soft constraints on exploration. | Leveraging commonsense for efficient search in unseen environments. |

Fueling the Models: Datasets and Evaluation

The development of large model-driven navigation is critically dependent on data for training and benchmarks for evaluation. Unlike classic robotics, these systems often learn from passive internet-scale data (for pre-training) and specialized embodied datasets (for fine-tuning).

Key Datasets and Simulators:

* Matterport3D (MP3D): A large-scale dataset of 3D indoor scans, providing RGB-D images, surface reconstructions, and semantic annotations. It is the foundation for many VLN benchmarks like Room-to-Room (R2R).

* ProcTHOR: A procedural framework for generating large numbers of diverse, interactive indoor scenes for training and testing embodied agents at scale.

* Habitat / iGibson: High-performance simulators that support configurable indoor environments, physics, and sensor models, allowing fast, parallelized training of navigation policies.

* Open X-Embodiment: A massive, curated collection of robotic trajectory data from many different robots and labs, aiming to train generalist “Robotics Transformer” models that can transfer across embodiments.

Evaluation Metrics: Assessing a navigation agent requires more than a binary success flag, because two agents with the same success rate can differ widely in efficiency and in how far they stop from the goal. The table below outlines the standard suite.

| Metric | Formula | Description |
|---|---|---|
| Success Rate (SR) | $$SR = \frac{1}{N} \sum_{i=1}^{N} S_i$$, where $$S_i \in \{0,1\}$$ | The primary metric. Fraction of the $$N$$ episodes in which the agent stops within a threshold distance (e.g., 3 m) of the goal. |
| Path Length (PL) | $$PL = \sum_{k=1}^{K-1} d(p_k, p_{k+1})$$ | The total length (in meters) of the trajectory $$\mathcal{P} = (p_1, \ldots, p_K)$$ executed by the agent. |
| Success weighted by Path Length (SPL) | $$SPL = \frac{1}{N} \sum_{i=1}^{N} S_i \frac{L_i}{\max(P_i, L_i)}$$ | The most holistic metric. Balances success with efficiency. $$L_i$$ is the shortest possible path length, $$P_i$$ is the agent’s path length. Penalizes long, meandering paths even if successful. |
| Navigation Error (NE) | $$NE = d(p_{goal}, p_{stop})$$ | The shortest distance (in meters) from the agent’s stopping point to the true goal location. |
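The metrics in the table can be computed in a few lines. The sketch below is a simplified illustration: the episode dictionary layout (`trajectory`, `goal`, `shortest`) is a hypothetical format chosen for this example, distances are straight-line Euclidean rather than the shortest-path distances a benchmark would typically take from its simulator, and NE and PL are averaged over episodes.

```python
import math

def path_length(points):
    """PL: sum of distances between consecutive trajectory points."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))

def evaluate(episodes, threshold=3.0):
    """Return SR, SPL, mean NE and mean PL over a list of episodes.

    Each episode is a dict with:
      "trajectory": list of (x, y) points visited, ending at the stop point
      "goal":       (x, y) goal location
      "shortest":   shortest possible path length L_i from start to goal
    """
    n = len(episodes)
    sr = spl = ne = pl = 0.0
    for episode in episodes:
        agent_length = path_length(episode["trajectory"])               # P_i
        error = math.dist(episode["trajectory"][-1], episode["goal"])   # NE_i
        success = 1.0 if error <= threshold else 0.0                    # S_i
        longest = max(agent_length, episode["shortest"])
        # Guard the degenerate case where start and goal coincide.
        efficiency = episode["shortest"] / longest if longest > 0 else 1.0
        sr += success
        spl += success * efficiency
        ne += error
        pl += agent_length
    return {"SR": sr / n, "SPL": spl / n, "NE": ne / n, "PL": pl / n}

EPISODES = [
    # Reaches the goal, but along an L-shaped path of 7 m instead of 5 m.
    {"trajectory": [(0, 0), (3, 0), (3, 4)], "goal": (3, 4), "shortest": 5.0},
    # Stops 4 m short of the goal: counted as a failure at a 3 m threshold.
    {"trajectory": [(0, 0), (0, 6)], "goal": (0, 10), "shortest": 10.0},
]
METRICS = evaluate(EPISODES)
```

In this toy example SR is 0.5 but SPL is only about 0.36, because the single successful episode is weighted by its efficiency ratio of 5/7. The gap between the two numbers is exactly the meandering penalty the table describes.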

Challenges and Future Trajectories

Despite remarkable progress, robust and ubiquitous large-model-driven navigation still faces open challenges. The first is the tension between real-time performance and safety. LLM inference is slow and computationally expensive, which makes it difficult to sustain the high-frequency control loops (10-100 Hz) required for stable, reactive navigation in dynamic environments. Future work must focus on model distillation, specialized efficient architectures, and hybrid systems in which fast, reactive controllers are guided by slower, deliberative LLM planners.
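One way to picture such a hybrid system is a two-rate loop: a slow planner that only occasionally updates the current sub-goal, and a fast controller that runs on every tick. The sketch below simulates this with a point robot and no real timing. The rates, the speed limit, and all function names are assumptions for illustration, and the "planner" is a plain waypoint selector standing in for an LLM call.

```python
import math

def reactive_step(position, subgoal, max_step):
    """Fast loop: move straight toward the sub-goal, at most max_step per tick."""
    dx, dy = subgoal[0] - position[0], subgoal[1] - position[1]
    distance = math.hypot(dx, dy)
    if distance <= max_step:
        return subgoal  # close enough to land exactly on the sub-goal
    scale = max_step / distance
    return (position[0] + dx * scale, position[1] + dy * scale)

def run(waypoints, start, control_hz=50, planner_hz=1, max_speed=1.0, max_ticks=2000):
    """Simulate a slow planner guiding a fast controller.

    Returns (final_position, control_ticks_used, planner_calls).
    """
    ticks_per_plan = control_hz // planner_hz
    max_step = max_speed / control_hz  # distance covered in one control tick
    position, subgoal = start, start
    remaining = list(waypoints)
    planner_calls = 0
    for tick in range(max_ticks):
        if tick % ticks_per_plan == 0:
            # Slow loop (stand-in for an LLM call): drop reached waypoints
            # and hand the next one to the controller.
            planner_calls += 1
            while remaining and math.dist(position, remaining[0]) < 1e-6:
                remaining.pop(0)
            if not remaining:
                return position, tick, planner_calls
            subgoal = remaining[0]
        position = reactive_step(position, subgoal, max_step)
    return position, max_ticks, planner_calls

FINAL, TICKS, CALLS = run([(1.0, 0.0), (1.0, 0.5)], start=(0.0, 0.0))
```

In this run the controller executes 100 ticks while the planner is consulted only three times. The cost of the design is also visible: the robot reaches the second waypoint well before the next planner call and then idles until the planner notices, which is why planner latency is listed among the open challenges.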

The second challenge is generalization and embodiment alignment. Models trained in simulation or on specific robot platforms struggle to transfer to different physical embodiments (a different wheelbase, camera height, or dynamics) or to unseen, cluttered real-world conditions. Research into domain-agnostic representations, robust sim-to-real transfer techniques, and meta-learning for quick adaptation is crucial. The embodiment, characterized by its dynamics $$s_{t+1} = f(s_t, a_t)$$, must be explicitly accounted for in the reasoning process.

Third, there is the critical issue of grounding and long-horizon consistency. LLMs are prone to “hallucination”—generating plausible but incorrect plans based on textual priors that don’t match the current visual scene. Ensuring that the robot’s actions remain firmly grounded in its ongoing perceptual stream over long tasks is essential. Techniques like iterative verification through vision, maintaining persistent spatial-semantic memory (e.g., scene graphs), and fine-tuning on failure cases are promising directions.

Finally, the field must address learning from interaction and human feedback. The ultimate teachers for an embodied robot are its environment and its human users. Frameworks in which robots learn from a few demonstrations, correct their mistakes after human intervention, and align their objectives with human values (constitutional AI for robotics) will be key to moving from impressive demos to trustworthy collaborators in homes, hospitals, and public spaces.

In conclusion, the integration of large foundation models into embodied navigation is not merely an incremental improvement but a fundamental re-architecting of how robots perceive, reason, and move. By endowing machines with language understanding, commonsense reasoning, and multimodal fusion, the field is moving from robots that navigate maps to robots that navigate meaning. The interplay between the end-to-end and hierarchical paradigms will likely define the next generation of systems, balancing the power of unified models with the safety and clarity of structured reasoning. As these technologies mature, the vision of an intelligent, helpful, and adaptive robot operating in daily life moves from science fiction toward practical reality, provided the open challenges above are addressed.