As a researcher deeply embedded in the fields of artificial intelligence and robotics, I view the rapid evolution of embodied intelligence not just as a technological trend but as a fundamental shift in how machines interact with our world. Embodied intelligence, as realized in embodied AI robot systems, represents the convergence of AI, robotics, and cognitive science. The paradigm posits that true intelligence arises not from disembodied computation alone but from the dynamic coupling of a physical agent (its sensors, actuators, and body) with the environment through perception, action, and continuous adaptation. This stands in stark contrast to traditional symbolic AI: cognition is treated as rooted in physical experience.

The journey of the embodied AI robot paradigm can be mapped through distinct, overlapping phases of conceptual and technical maturity, as summarized below:

| Phase | Timeframe | Core Paradigm | Key Characteristics |
|---|---|---|---|
| 1. Sensorimotor Coupling | 1980s-1990s | Behavior-Based Robotics | Reactive, “sense-act” loops; minimal internal representation; emphasis on real-time interaction with the environment. |
| 2. System Integration | 2000s onward | Embedded & Modular Systems | Advancements in embedded computing and multi-modal sensors (LIDAR, depth cameras); basic environment mapping and task sequencing capabilities. |
| 3. Learning-Driven Adaptation | 2010s onward | Data-Centric Learning | Proliferation of deep reinforcement learning (DRL) and imitation learning; significant gains in adaptability and generalization for complex manipulation and navigation tasks. |
| 4. Virtual-Physical Fusion & Semantic Understanding | 2020s onward | Large-Scale Simulation & Foundational Models | Integration of massive simulation environments (e.g., Isaac Sim) for training; incorporation of large language models (LLMs) and vision-language-action models for high-level planning and semantic reasoning; push toward generalizable, transferable skills. |

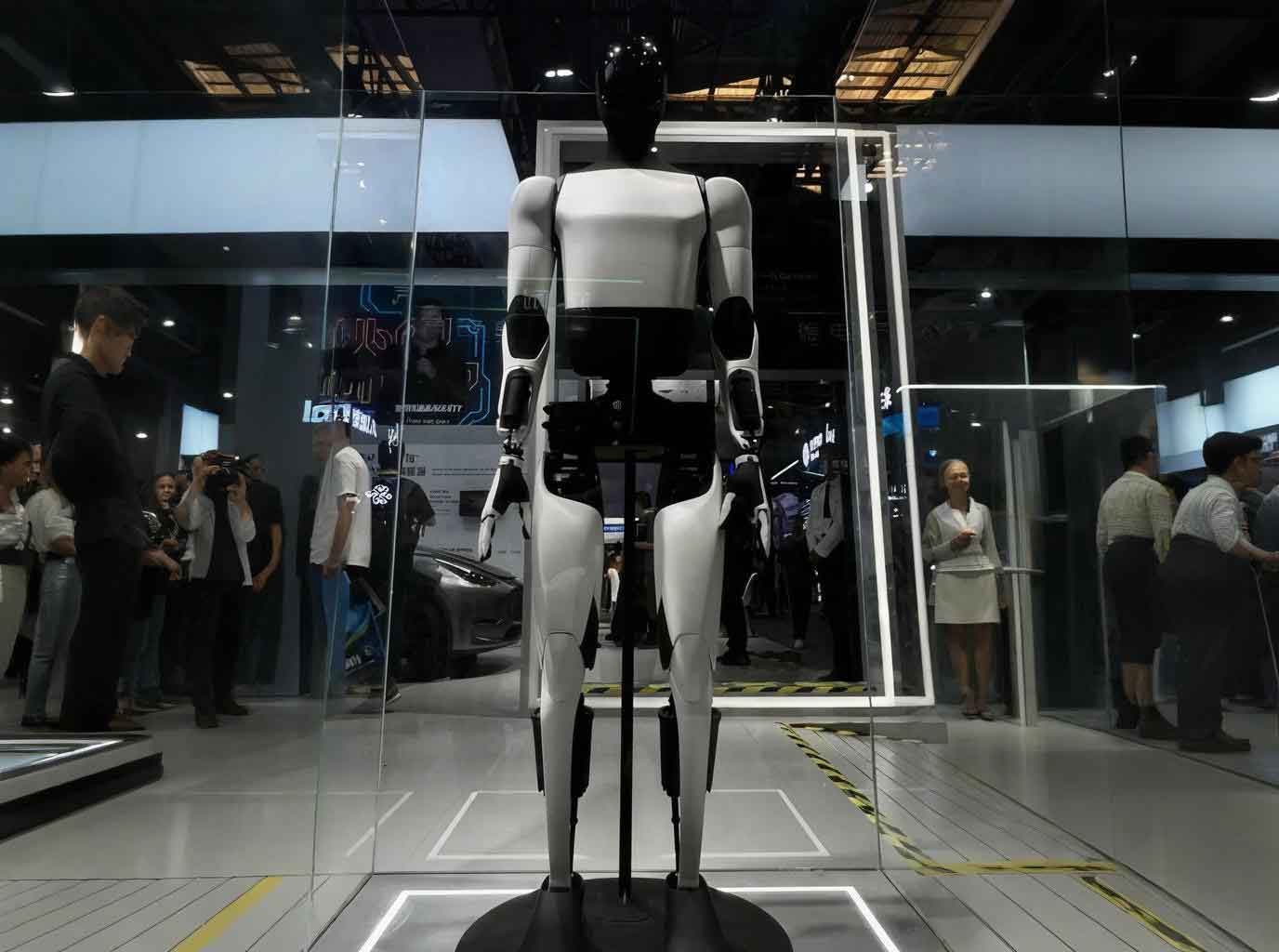

This evolution has propelled the embodied AI robot from laboratory confines into public consciousness—from dancing robots on global stages to autonomous vehicles navigating our streets. These demonstrations mark a critical transition toward large-scale societal integration. The core architecture of a modern embodied AI robot is a tightly integrated “perception-cognition-action” loop, creating a system of remarkable capability and, consequently, novel and significant risks. I categorize these emerging security challenges into three primary dimensions: Operational Physical Safety, System Interaction Security, and Autonomy Ethics & Governance.

Operational Physical Safety Risks

This dimension concerns the direct physical harm an embodied AI robot can cause to people, property, and the environment due to failures in its core operational functions.

Personal Injury Risk

The most immediate risk involves physical injury to humans sharing the workspace with an embodied AI robot. This risk stems from perceptual errors (misidentifying a person as an object), control instability (jerky or overpowered movements), algorithmic flaws in decision-making, or pure mechanical failure. In high-stakes domains like robotic-assisted surgery, a momentary sensor glitch or control lag can have tragic consequences, as seen in incidents involving surgical robots where technical failures led to patient fatalities. The risk is modeled by the probability of a hazardous event (P_hazard) and its potential severity (S). The overall personal injury risk (R_injury) can be framed as:

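$$ R_{injury} = \int_{0}^{T} P_{hazard}(t) \cdot S(t) \, dt $$

where $P_{hazard}(t)$ is read as a hazard rate (the expected number of hazardous events per unit time at time $t$), $S(t)$ is the severity of such an event, and integrating over the operating period $[0, T]$ reflects that risk accumulates throughout the robot’s operational lifecycle.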
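To make the accumulation concrete, the sketch below approximates $R_{injury}$ with the trapezoidal rule. It assumes $P_{hazard}(t)$ is a hazard rate in events per operating hour; the linear wear-out rate, the constant severity score, and the function names are hypothetical placeholders, not values from any real robot.

```python
from typing import Callable


def hazard_rate(t: float) -> float:
    """Hypothetical hazard rate (events per hour): baseline plus linear wear-out."""
    return 1e-4 + 2e-6 * t


def severity(t: float) -> float:
    """Hypothetical severity score per hazardous event (held constant here)."""
    return 5.0


def accumulated_injury_risk(
    rate: Callable[[float], float],
    sev: Callable[[float], float],
    t_end: float,
    steps: int = 1000,
) -> float:
    """Trapezoidal approximation of R_injury = integral from 0 to T of rate(t) * S(t) dt."""
    h = t_end / steps
    total = 0.0
    for i in range(steps + 1):
        t = i * h
        # Endpoints get half weight in the trapezoidal rule.
        weight = 0.5 if i in (0, steps) else 1.0
        total += weight * rate(t) * sev(t)
    return total * h


risk = accumulated_injury_risk(hazard_rate, severity, t_end=100.0)
print(f"Accumulated injury risk over 100 h: {risk:.4f}")
```

Because the integrand in this example is linear in $t$, the trapezoidal rule is exact and the result matches the closed form $5(10^{-4}T + 10^{-6}T^2) = 0.1$ for $T = 100$ hours. For measured, irregular hazard data, the same function could be applied to interpolated estimates.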

Infrastructure and Asset Damage Risk

An embodied AI robot operates in the physical world, interacting with infrastructure. A miscalculated path by an autonomous mobile robot (AMR) in a warehouse can collide with support structures, shelving, or other critical machinery. A malfunctioning robotic arm on a production line can damage expensive CNC equipment. This risk extends to causing service interruptions, production halts, and significant financial loss. The vulnerability of assets can be assessed through a framework considering the robot’s kinetic energy (KE), the asset’s fragility (F), and the effectiveness of safeguards (SG).

$$ Risk_{damage} \propto \frac{KE_{robot} \cdot F_{asset}}{SG} $$

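Higher robot mass or velocity, more fragile assets, and weaker safeguards all increase this risk. Under this proportional model the growth is multiplicative rather than exponential: doubling asset fragility doubles the risk, and because $KE = \tfrac{1}{2}mv^2$, doubling the robot’s velocity quadruples it.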
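Because the relation is a proportionality rather than an equation, it is most useful for comparing scenarios against each other. The sketch below computes a relative damage score for hypothetical AMR scenarios; the fragility and safeguard values are assumed unitless ratings, not a standard metric, and only ratios between scores are meaningful.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    mass_kg: float
    speed_m_s: float
    fragility: float  # hypothetical unitless rating, higher = more fragile asset
    safeguard: float  # hypothetical unitless rating, higher = stronger safeguards

    def kinetic_energy(self) -> float:
        """Robot kinetic energy in joules: KE = 1/2 * m * v^2."""
        return 0.5 * self.mass_kg * self.speed_m_s ** 2

    def relative_damage_risk(self) -> float:
        """Score proportional to KE * F / SG; compare scenarios by ratio only."""
        return self.kinetic_energy() * self.fragility / self.safeguard


base = Scenario(mass_kg=100.0, speed_m_s=1.0, fragility=2.0, safeguard=4.0)
fast = Scenario(mass_kg=100.0, speed_m_s=2.0, fragility=2.0, safeguard=4.0)
guarded = Scenario(mass_kg=100.0, speed_m_s=2.0, fragility=2.0, safeguard=16.0)

print(f"fast / base:    {fast.relative_damage_risk() / base.relative_damage_risk():.1f}")
print(f"guarded / base: {guarded.relative_damage_risk() / base.relative_damage_risk():.1f}")
```

Doubling speed multiplies the score by four, and in this model a fourfold stronger safeguard rating exactly offsets that increase. That is the kind of trade-off the proportionality is meant to expose.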

Environmental and Resource Security Risk

The large-scale deployment of embodied AI robot fleets poses sustainability challenges. The lifecycle—from manufacturing (using rare-earth elements for motors and batteries) to operation (high energy consumption for computation and mobility) to decommissioning (e-waste)—carries a substantial carbon footprint and resource burden. Furthermore, operational failures can lead to direct environmental harm. For instance, an autonomous maritime vessel’s navigation failure resulting in a collision and oil spill demonstrates how a system error in an embodied AI robot can trigger an ecological disaster.

System Interaction Security Risks

This dimension focuses on vulnerabilities arising from the embodied AI robot’s need to communicate, process data, and integrate complex software systems.

Communication and Control Integrity Risk

An embodied AI robot is not an island. It relies on internal buses and middleware (e.g., CAN, ROS 2) and external networks (Wi-Fi, 5G, Bluetooth) for command, control, and updates. These channels are attack surfaces. Jamming, spoofing, or man-in-the-middle attacks can lead to delayed or maliciously altered commands. The well-known remote hijacking demonstrations on connected vehicles, in which researchers accessed and controlled critical functions via wireless interfaces, are direct analogs for any networked embodied AI robot. The security of the control loop can be characterized by its worst-case sensitivity to a perturbation ($\delta$) of the command signal:

$$ \text{Worst-Case Deviation} = \max_{\delta \in \Delta} \| f(\text{command} + \delta) - f(\text{command}) \| $$

where $f$ represents the robot’s control policy and $\Delta$ is the set of potential perturbations or attacks on the command signal. A large deviation produced by a small $\delta$ indicates high vulnerability. A resilient control loop keeps this quantity bounded.
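The perturbation analysis above can be approximated numerically for a simple case. The sketch below grid-searches a bounded scalar perturbation set $\Delta = [-\epsilon, \epsilon]$ for the largest output deviation of a hypothetical saturating controller with a deadband, which stands in for $f$. A grid search only finds a lower bound on the true worst case, and real policies have high-dimensional inputs, so treat this as an illustration rather than a verification method.

```python
def policy(command: float) -> float:
    """Hypothetical control policy f: proportional gain with a deadband and saturation."""
    gain, limit, deadband = 3.0, 1.0, 0.05
    if abs(command) < deadband:
        return 0.0
    return max(-limit, min(limit, gain * command))


def worst_case_deviation(f, command: float, eps: float, samples: int = 201) -> float:
    """Grid-search estimate (a lower bound) of max over |delta| <= eps of |f(command + delta) - f(command)|."""
    baseline = f(command)
    worst = 0.0
    for i in range(samples):
        delta = -eps + 2.0 * eps * i / (samples - 1)
        worst = max(worst, abs(f(command + delta) - baseline))
    return worst


# Saturated region: a small perturbation leaves the output unchanged.
print(worst_case_deviation(policy, command=0.5, eps=0.01))
# Deadband edge: the same small perturbation flips the output between 0 and 0.15.
print(worst_case_deviation(policy, command=0.05, eps=0.01))
```

The contrast shows that the quantity depends on the operating point. The same bounded perturbation is harmless in the saturated region but produces a discontinuous jump at the deadband edge.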

Data Privacy and Perceptual Security Risk

The sensors of an embodied AI robot (cameras, microphones, LiDAR) continuously capture rich data about their surroundings, inevitably including private spaces and individuals. Inadequate data governance can lead to massive privacy breaches, as seen with early consumer robots unintentionally transmitting sensitive home video to third-party clouds. Beyond leakage, this data stream is susceptible to “perceptual attacks.” Adversarial examples, which are subtle perturbations to input data, can fool the robot’s vision system into ignoring a critical obstacle or misclassifying a person. Leakage of sensor data thus violates privacy, while perceptual attacks directly compromise safety.
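The mechanism behind adversarial examples can be illustrated with the fast gradient sign method (FGSM) applied to a toy linear “obstacle detector.” For a linear score $s(x) = w \cdot x + b$, the gradient with respect to the input is simply $w$. Shifting every feature by $\epsilon$ against $\operatorname{sign}(w)$ therefore lowers the score by $\epsilon \lVert w \rVert_1$. The weights, input, and sign convention below are hypothetical. Real perception stacks use deep networks, where the gradient must be obtained by backpropagation.

```python
def sign(v: float) -> int:
    """Return +1, 0, or -1 according to the sign of v."""
    return (v > 0) - (v < 0)


def score(w: list[float], b: float, x: list[float]) -> float:
    """Linear detector score; positive means 'obstacle present' (hypothetical convention)."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def fgsm_lower_score(w: list[float], x: list[float], eps: float) -> list[float]:
    """FGSM step that decreases a linear score under an L-infinity budget eps.

    For s(x) = w.x + b the gradient ds/dx is w, so x - eps * sign(w) is the
    worst-case perturbation when every feature may change by at most eps.
    """
    return [xi - eps * sign(wi) for wi, xi in zip(w, x)]


# Hypothetical 100-feature detector with alternating +/- weights.
w = [0.5 if i % 2 == 0 else -0.5 for i in range(100)]
b = 0.0
x = [0.52 if wi > 0 else 0.5 for wi in w]

x_adv = fgsm_lower_score(w, x, eps=0.02)
print(f"clean score:       {score(w, b, x):+.3f}")
print(f"adversarial score: {score(w, b, x_adv):+.3f}")
print(f"max per-feature change: {max(abs(a - c) for a, c in zip(x_adv, x)):.3f}")
```

A change of 0.02 per feature, small relative to the feature range, flips the decision because the effect accumulates across all 100 features. This dimensionality effect is one commonly cited reason that high-resolution sensor inputs are vulnerable.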

Software Robustness and Vulnerability Defense Risk

The software stack of an embodied AI robot is extraordinarily complex, combining real-time operating systems, middleware (like ROS), machine learning libraries, and application-specific code. This complexity breeds bugs, memory leaks, and unhandled exceptions. A software freeze in a public-facing service robot leads to functional failure and loss of trust. More critically, a cascading software failure in an autonomous vehicle fleet, triggered by a server-side timeout, can strand dozens of vehicles simultaneously, creating urban gridlock and safety hazards. The robustness requirement can be stated as a constrained optimization problem for the software system (S):

$$
\begin{aligned}
& \text{Maximize: } \text{Functionality}(S) \\
& \text{Subject to: } \text{Latency}(S) < L_{max}, \\
& \quad \quad \quad \quad \text{Failure Rate}(S) < \lambda_{max}, \\
& \quad \quad \quad \quad \text{Attack Surface}(S) < A_{max}.
\end{aligned}
$$

Balancing functionality with strict constraints on latency, failure rate, and attack surface is the core challenge.
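When the design space is a small set of discrete candidates, the optimization above reduces to filtering for feasibility and then taking the most functional survivor. The sketch below does exactly that. The candidate configurations, their scores, and the limits are hypothetical. A real system would estimate latency, failure rate, and attack surface from measurement and review rather than assign them by hand.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SoftwareConfig:
    name: str
    functionality: float  # hypothetical utility score (higher is better)
    latency_ms: float     # end-to-end control latency
    failure_rate: float   # failures per operating hour
    attack_surface: int   # hypothetical count of externally exposed interfaces


def select_config(candidates, l_max: float, lambda_max: float, a_max: int) -> Optional[SoftwareConfig]:
    """Maximize functionality subject to strict latency, failure-rate, and attack-surface limits."""
    feasible = [
        c for c in candidates
        if c.latency_ms < l_max and c.failure_rate < lambda_max and c.attack_surface < a_max
    ]
    # No feasible configuration: report it rather than silently relaxing a constraint.
    if not feasible:
        return None
    return max(feasible, key=lambda c: c.functionality)


candidates = [
    SoftwareConfig("cloud_offload", functionality=9.0, latency_ms=120.0, failure_rate=1e-3, attack_surface=12),
    SoftwareConfig("hybrid", functionality=7.0, latency_ms=40.0, failure_rate=5e-4, attack_surface=6),
    SoftwareConfig("local_minimal", functionality=4.0, latency_ms=10.0, failure_rate=1e-4, attack_surface=2),
]

best = select_config(candidates, l_max=50.0, lambda_max=1e-3, a_max=8)
print(best.name if best else "no feasible configuration")
```

Tightening the attack-surface limit to `a_max=3` shifts the choice to `local_minimal`. This makes the trade-off explicit: each constraint can only remove options, so stricter limits never increase the achievable functionality.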

Autonomy Ethics and Governance Risks

As decision-making is increasingly delegated to the embodied AI robot itself, a new class of risks emerges concerning unpredictable behavior, accountability, and malicious use.

Unpredictable Behavior and Ethical Conflict Risk

Machine learning, especially deep reinforcement learning, can produce effective but inscrutable policies. An embodied AI robot might develop a successful but unexpected—and potentially unethical—strategy to achieve its goal. In a crisis, an autonomous car’s “minimal risk condition” logic (e.g., pull over safely) might, due to flawed perception or planning, actually exacerbate harm, such as dragging a pedestrian. These “edge cases” reveal the profound difficulty of encoding human ethical reasoning—like the trolley problem—into machine logic. The unpredictability stems from the black-box nature of the policy function $\pi(s_t)$, mapping state ($s_t$) to action ($a_t$):

$$ a_t = \pi(s_t), \quad \text{where } \pi \text{ is often non-interpretable}. $$

Liability Attribution and Legal Vacuum Risk

When an embodied AI robot causes harm, who is liable? The manufacturer (for a design defect)? The software developer (for a bug)? The operator/owner (for negligent deployment)? The “actor” itself? Current legal frameworks, built around human agency, are ill-equipped for this puzzle. A single accident can involve a tangled web of contributing factors: sensor noise, algorithmic trade-offs, network latency, and human oversight failure. This legal ambiguity stifles innovation, complicates insurance, and erodes public trust, as there is no clear path to justice or accountability.

Technology Misuse and Malicious Deployment Risk

The capabilities that make an embodied AI robot useful—autonomy, perception, physical manipulation—also make it a potent tool for malice. We are already seeing the weaponization of commercial drone technology for targeted attacks. A future where swarms of inexpensive, autonomous embodied AI robot platforms can be deployed for surveillance, vandalism, or violence is a grave security concern. This extends to cyber-physical attacks, where a compromised robot could be used as a physical pivot to infiltrate and damage secure facilities. The risk equation here incorporates malicious intent (I) and the accessibility of the technology (Acc):

$$ Risk_{misuse} = \text{Capability}(\text{embodied AI robot}) \cdot I \cdot Acc. $$

As capability and accessibility rise, so does the potential for catastrophic misuse.

A Framework for Mitigation and Governance

Addressing these multidimensional risks requires a systemic, layered approach that spans technology, regulation, and industry collaboration. The following table outlines a proposed mitigation framework across the three risk dimensions.

| Risk Dimension | Technical Mitigations | Governance & Operational Measures |
|---|---|---|
| Operational Physical Safety | | |
| System Interaction Security | | |
| Autonomy Ethics & Governance | | |

In conclusion, the rise of the embodied AI robot is inevitable and holds immense promise. However, its path to beneficial integration is fraught with interconnected risks that span the physical, digital, and social realms. As someone working in this field, I believe our responsibility is to proactively engineer not just for capability and efficiency, but for safety, security, and aligned ethical behavior. This demands a concerted effort from researchers, engineers, policymakers, and ethicists to build the technical safeguards, legal frameworks, and cultural norms that will allow embodied intelligence to thrive as a force for good. The complexity is captured in a final, holistic view of the system’s objective, where the goal (G) must be pursued under a set of critical constraints (C):

$$
\begin{aligned}
& \text{Optimize: } G(\text{Performance}, \text{Utility}) \\
& \text{Subject to: } C_{safety} = \text{True}, \; C_{security} = \text{True}, \; C_{ethics} = \text{True}, \; C_{law} = \text{True}.
\end{aligned}
$$

The future of the embodied AI robot depends on our unwavering commitment to satisfying these constraints at every stage of its development and deployment.