The pursuit of more precise, minimally invasive, efficient, and cost-effective healthcare has pushed medical robotics and convergence engineering into the global spotlight. The deep integration of medical science with engineering disciplines, often termed “medical-engineering convergence,” is reshaping surgical procedures, rehabilitation paradigms, and patient care. Driven by advances in artificial intelligence, sensing, and high-fidelity human-machine interaction, this convergence is no longer a futuristic concept but a present reality that is producing both theoretical innovations and technological breakthroughs. This article explores the current research landscape and future trajectories of medical robot systems and the technologies that empower them.

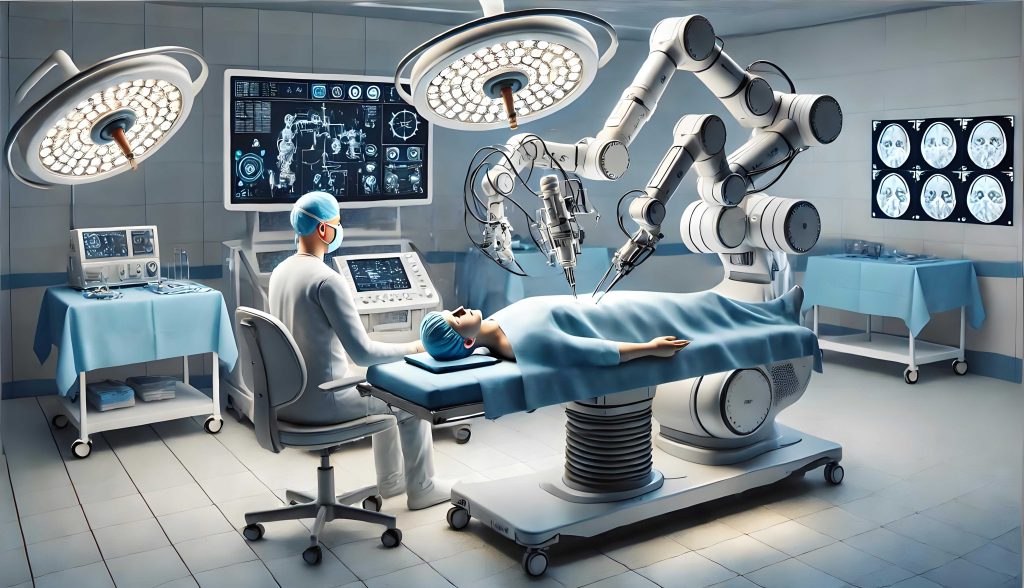

At its core, a medical robot is an intelligent service robot designed to assist in or autonomously execute medical tasks within clinical or care-giving environments. These systems follow pre-programmed or real-time commanded action plans, translating them into coordinated motions of their manipulators. The most prominent example is robotic-assisted surgery, where a surgeon controls robotic arms from a console to perform procedures with enhanced precision and minimal invasiveness. The global market for medical robotics, particularly surgical robots, is experiencing rapid growth, stimulated by technological advances and clinical demand, with projections indicating a multi-billion dollar valuation in the coming years.

Remote Telesurgery Systems

The vision of telesurgery is to enable a surgeon to perform procedures on a patient located at a distant site. This capability holds immense promise for democratizing high-quality care, allowing expert surgeons to operate on patients in remote, underserved, or hazardous locations without physical travel. The foundational principle involves a master-slave architecture, where the surgeon’s motions at a master console are transmitted, often over specialized networks, to guide the instruments of a slave medical robot at the patient’s side. Key challenges include managing communication latency, ensuring system stability, and providing the surgeon with sufficient sensory feedback (visual, force, tactile) to maintain situational awareness and operative skill.

Early and landmark experiments, such as those conducted under NASA’s NEEMO projects, demonstrated the feasibility of complex surgical tasks like gallbladder removal over long distances with significant time delays, using systems like AESOP and later the M7 and RAVEN I robots. These trials simulated extreme environments like underwater habitats, paving the way for more advanced systems. A quintessential example of a system designed for austere environments is the Trauma Pod, a conceptual robotic medical system intended for forward battlefield use. Its design philosophy revolves around modularity and autonomy, comprising several integrated units:

| Trauma Pod Module | Primary Function |
|---|---|
| Surgical Robot Manipulator | Executes precise surgical maneuvers (cutting, suturing) as directed by the remote surgeon. |
| Instrument Changer | Automates the exchange of surgical tools on the robot arm, mimicking a human scrub nurse. |
| Tool Cache | Stores a variety of sterile instruments for the procedure. |
| Pharmacological Dosing System | Administers medications and fluids intravenously as required. |
| Caregiver Robot | Assists with patient positioning, sterile draping, and other supportive tasks. |

The advent of 5G communication technology marks a major step forward for telesurgery. Its high bandwidth, low latency, and massive device connectivity directly address critical limitations of previous networks. Successful animal and human trials have been conducted, including a multi-point collaborative surgery in which experts in different cities jointly operated a medical robot system to perform complex procedures. The low latency (often below 150 ms) supports near-real-time responsiveness, making delicate remote manipulation feasible. The fundamental kinematic relationship for a surgical robot arm in such a system can be described by:

$$ \mathbf{x} = f(\mathbf{q}) $$

where $\mathbf{x}$ is the position and orientation (pose) of the surgical tool tip in Cartesian space, $\mathbf{q}$ is the vector of joint angles, and $f$ represents the forward kinematics function of the medical robot. The control system must solve the inverse kinematics to find the joint angles needed to achieve a desired tool pose commanded by the remote surgeon.
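To make the forward and inverse kinematics relationship concrete, the following is a minimal sketch for a planar two-link arm, which is far simpler than a real surgical manipulator. The link lengths `l1` and `l2` and the function names are illustrative assumptions, not parameters of any system discussed here.

```python
import math

def forward_kinematics(q1, q2, l1=0.3, l2=0.25):
    """x = f(q): tool-tip position of a planar two-link arm (angles in radians).

    l1 and l2 are hypothetical link lengths in metres.
    """
    x = l1 * math.cos(q1) + l2 * math.cos(q1 + q2)
    y = l1 * math.sin(q1) + l2 * math.sin(q1 + q2)
    return x, y

def inverse_kinematics(x, y, l1=0.3, l2=0.25):
    """Return one joint solution (elbow angle in [0, pi]) that reaches (x, y).

    Raises ValueError if the target lies outside the reachable workspace.
    """
    c2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(c2) > 1.0 + 1e-12:
        raise ValueError("target outside the arm's workspace")
    c2 = max(-1.0, min(1.0, c2))  # guard against rounding at the workspace edge
    q2 = math.acos(c2)
    q1 = math.atan2(y, x) - math.atan2(l2 * math.sin(q2), l1 + l2 * math.cos(q2))
    return q1, q2

# Round trip: a commanded tool position is converted back into joint angles.
target = forward_kinematics(0.4, 0.9)
joints = inverse_kinematics(*target)
print(target, joints)
```

A planar arm has a closed-form inverse; manipulators with more joints generally need numerical or redundancy-resolving solvers, which this sketch does not cover.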

Surgical Navigation and Augmented Reality

Precision in surgery often hinges on the accurate localization of pathological targets and critical anatomical structures. Surgical navigation systems provide a “GPS for the body,” integrating pre-operative and intra-operative imaging with the real-time position of surgical instruments. These systems establish a common coordinate system, allowing for precise surgical planning and guidance.

Spatial tracking, the core of navigation, can be achieved through various contact and non-contact methods. The choice of technology involves trade-offs between accuracy, line-of-sight requirements, and interference.

| Tracking Technology | Principle | Advantages | Disadvantages |
|---|---|---|---|
| Optical Tracking | Uses infrared cameras to detect reflective or active markers. | Very high accuracy and update rate. | Requires line-of-sight; markers can be obscured. |
| Electromagnetic (EM) Tracking | Measures the position of a small sensor within a generated EM field. | No line-of-sight needed; tracks flexible tools. | Sensitive to metallic objects and field distortions. |
| Mechanical Tracking | Uses encoded joints on a physical arm linked to the instrument. | High intrinsic accuracy; robust. | Bulky; restricts free movement. |
| Ultrasound-Based Tracking | Uses time-of-flight of ultrasonic waves. | Low cost; no radiation. | Lower accuracy; sensitive to temperature/airflow. |

Augmented Reality (AR) represents a transformative leap in surgical navigation. By superimposing computer-generated images (e.g., 3D models of tumors, vessels, or planned resection paths) onto the surgeon’s real-world view of the operative field, AR creates an integrated, enhanced visual environment. This “x-ray vision” capability allows surgeons to see beyond the surface, improving spatial understanding and targeting accuracy. The technical pipeline for AR in surgery involves:

- Calibration: Precisely aligning the coordinate systems of the imaging device (e.g., CT scanner), the tracking system, the patient’s anatomy, and the AR display. This is often the most critical step for accuracy.

- Registration: Mapping the pre-operative 3D model onto the live patient anatomy. This can be based on anatomical landmarks (point-based) or surface matching.

- Visualization: Rendering the virtual models with correct perspective, occlusion, and depth cues onto the surgeon’s display, which can be a head-mounted display, a surgical microscope, or a screen overlay.
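The point-based registration step in the pipeline above can be illustrated with a small least-squares rigid fit. This sketch is deliberately simplified to 2D so that it needs only the standard library; clinical systems register in 3D, typically with an SVD-based solution. The function names and landmark coordinates are hypothetical.

```python
import math

def register_points_2d(model_pts, patient_pts):
    """Least-squares rigid fit (angle, translation) mapping model_pts onto patient_pts.

    Both arguments are equal-length lists of paired (x, y) landmarks.
    """
    n = len(model_pts)
    if n < 2 or n != len(patient_pts):
        raise ValueError("need at least two paired landmarks")
    mcx = sum(p[0] for p in model_pts) / n
    mcy = sum(p[1] for p in model_pts) / n
    pcx = sum(p[0] for p in patient_pts) / n
    pcy = sum(p[1] for p in patient_pts) / n
    s_cos = s_sin = 0.0
    for (mx, my), (px, py) in zip(model_pts, patient_pts):
        ax, ay = mx - mcx, my - mcy      # centred model landmark
        bx, by = px - pcx, py - pcy      # centred patient landmark
        s_cos += ax * bx + ay * by
        s_sin += ax * by - ay * bx
    theta = math.atan2(s_sin, s_cos)     # optimal rotation angle
    c, s = math.cos(theta), math.sin(theta)
    tx = pcx - (c * mcx - s * mcy)       # translation aligns the centroids
    ty = pcy - (s * mcx + c * mcy)
    return theta, (tx, ty)

def apply_transform(theta, t, pt):
    """Rotate pt by theta about the origin, then translate by t."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * pt[0] - s * pt[1] + t[0], s * pt[0] + c * pt[1] + t[1])

def fiducial_registration_error(theta, t, model_pts, patient_pts):
    """RMS distance between transformed model landmarks and patient landmarks."""
    sq = 0.0
    for m, p in zip(model_pts, patient_pts):
        mx, my = apply_transform(theta, t, m)
        sq += (mx - p[0]) ** 2 + (my - p[1]) ** 2
    return math.sqrt(sq / len(model_pts))

# Hypothetical landmarks: the "patient" set is the model rotated and shifted.
model = [(0.0, 0.0), (10.0, 0.0), (0.0, 5.0), (7.0, 3.0)]
patient = [apply_transform(0.3, (5.0, -2.0), p) for p in model]
theta, t = register_points_2d(model, patient)
print(theta, t, fiducial_registration_error(theta, t, model, patient))
```

The residual returned by `fiducial_registration_error` measures only how well the landmarks themselves align; error at the surgical target can differ from it.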

Applications are vast, spanning from neurosurgery, where tumor margins are visualized in situ, to orthopedic surgery for implant placement, and laparoscopic surgery for visualizing underlying structures. The integration of AR with medical robot systems is a powerful trend, where the robot can use the AR-derived spatial information for semi-autonomous actions or enhanced guidance.

Rehabilitation Robotics

Rehabilitation robotics is a vital branch of medical robotics focused on restoring, augmenting, or assisting motor functions lost due to stroke, spinal cord injury, neurological disorders, or aging. These systems can deliver high-dosage, repetitive, and consistent therapy, alleviating the physical burden on therapists and enabling objective quantification of patient progress through embedded sensors.

A prominent category is robotic exoskeletons. These are wearable, powered orthoses that move in parallel with the user’s limbs. For lower-limb rehabilitation, exoskeletons can provide gait training for paralyzed patients, facilitating neuroplasticity and functional recovery. Control strategies are paramount and can be categorized as:

- Pre-programmed Trajectory Control: The exoskeleton moves the limb along a fixed, physiologically correct path.

- Admittance/Impedance Control: The robot adjusts its motion based on the interaction forces from the user, allowing for assist-as-needed paradigms. The dynamic equation often involves:

$$ M\ddot{x} + B\dot{x} + Kx = F_{human} - F_{robot} $$

where $M$, $B$, $K$ represent inertia, damping, and stiffness matrices, $x$ is position, and $F$ denotes force.

- Intention-Driven Control: Using signals from residual muscle activity (EMG), brain waves (EEG), or mechanical sensors to trigger and modulate assistance, making the therapy more engaging and adaptive.
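As a rough illustration of the admittance/impedance relation in the list above, the sketch below integrates the scalar (single-joint) form of the equation for constant forces. The parameter values, time step, and function name are assumptions chosen for readability, not values from any particular exoskeleton.

```python
def simulate_admittance(f_human, f_robot, m=2.0, b=20.0, k=100.0,
                        dt=0.001, steps=5000):
    """Integrate m*x'' + b*x' + k*x = f_human - f_robot from rest.

    Uses semi-implicit Euler integration and returns the list of positions.
    All parameter values are hypothetical (SI units assumed).
    """
    x, v = 0.0, 0.0
    trajectory = []
    for _ in range(steps):
        a = (f_human - f_robot - b * v - k * x) / m  # acceleration from the model
        v += a * dt
        x += v * dt
        trajectory.append(x)
    return trajectory

# A constant 10 N net interaction force deflects the virtual spring-damper.
path = simulate_admittance(f_human=12.0, f_robot=2.0)
print(path[-1])
```

With constant forces the position settles toward $(F_{human} - F_{robot})/K$, so lowering the virtual stiffness $K$ makes the device yield more to the user for the same force.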

The evolution of exoskeleton technology points toward several key trends: Modularity for customizable therapy; Lightweight and Soft Robotics using compliant materials for improved comfort and safety; Enhanced Intelligence through AI that adapts to user performance and intent; and Home-Based Systems for accessible, long-term use.

Convergence with Wearable Devices and Virtual Reality

The synergy between medical robotics, wearable sensors, and Virtual Reality (VR) is creating powerful platforms for rehabilitation and motor skill training. Wearable devices, equipped with inertial measurement units (IMUs), force sensors, and surface electromyography (sEMG), provide rich, continuous data on a patient’s movement kinematics and muscle activation outside the lab or clinic.

When combined with VR, these systems create immersive, task-oriented training environments. For instance, a stroke patient wearing a sensor-laden glove can practice virtual activities of daily living, like picking up a cup. The VR environment provides engaging visual feedback and can adapt task difficulty in real-time based on performance metrics derived from the wearable data. This convergence offers significant advantages:

| Advantage | Description |
|---|---|
| Enhanced Motivation & Engagement | Game-like VR environments make repetitive exercises more enjoyable, improving adherence. |
| Quantitative Assessment | Wearable data provides objective measures of range of motion, speed, smoothness, and force, replacing subjective therapist evaluations. |
| Safe Training Environment | Patients can practice challenging tasks in VR without risk of real-world injury. |
| Telerehabilitation Potential | Data can be transmitted to therapists for remote monitoring and program adjustment, extending care access. |

The fusion of wearable technology with medical robot-assisted therapy is a logical next step, where a lightweight robotic exoskeleton provides physical guidance and assistance while the VR-wearable combo manages engagement and assessment, creating a comprehensive bio-cooperative rehabilitation system.

Future Trajectories and Convergent Technologies

The future of medical robotics and convergence engineering is being shaped by several interdependent technological waves. The push for true integration faces challenges, including the translational gap between engineering prototypes and clinical adoption, the need for interdisciplinary education, and the establishment of robust regulatory pathways for increasingly intelligent systems. Future directions are likely to focus on:

- Intelligent, Modular, and Miniaturized Surgical Robots: Development of multi-functional, smart micro-surgical platforms capable of autonomous sub-tasks (e.g., suturing, knot-tying) and able to navigate complex, deformable anatomy with greater autonomy under surgeon supervision.

- Nanoscale Medical Robots and Targeted Delivery: Convergence with micro/nano-fabrication to create microscopic robots for targeted drug delivery, microsurgery at the cellular level, or precision sensing within the body.

- AI-Driven Predictive Analytics and Personalization: Using machine learning on large multimodal datasets (imaging, genomics, wearable sensor data) to predict surgical outcomes, optimize rehabilitation protocols for individuals, and enable predictive maintenance of medical robot systems themselves.

- 5G/6G and Cloud-Enabled Distributed Medical Ecosystems: Leveraging next-generation networks for seamless real-time data sharing, enabling true collaborative surgery across multiple experts, expansive telemedicine, and the operation of medical robot fleets in smart hospitals.

- Advanced Human-Machine Interfaces (HMIs): Moving beyond joysticks and consoles to include brain-computer interfaces (BCIs), advanced haptics that simulate tissue texture, and gaze-tracking for more intuitive control of medical robot systems.

The accuracy of a medical robot system in a dynamic biological environment is a composite function of multiple error sources. A simplified model can be expressed as:

$$ E_{total} = \sqrt{E_{system}^2 + E_{registration}^2 + E_{tissue\_deformation}^2 + E_{dynamics}^2} $$

where $E_{system}$ is the inherent mechanical/encoder error of the robot, $E_{registration}$ is the error in aligning the planning data to the patient, $E_{tissue\_deformation}$ accounts for organ movement or soft tissue shift during the procedure, and $E_{dynamics}$ includes errors from latency and control loop delays. Future research aims to minimize each component through better sensors, real-time imaging, predictive modeling, and adaptive control.
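A short numerical sketch of the error model above shows why the largest component tends to dominate the total. The millimetre values are invented purely for illustration, and the root-sum-square form itself assumes the error sources are independent.

```python
import math

def total_error(system, registration, tissue_deformation, dynamics):
    """Root-sum-square combination of the four error components (same units)."""
    return math.sqrt(system ** 2 + registration ** 2
                     + tissue_deformation ** 2 + dynamics ** 2)

# Hypothetical error budget in millimetres.
baseline = total_error(0.2, 0.4, 0.8, 0.4)
halve_largest = total_error(0.2, 0.4, 0.4, 0.4)   # tissue deformation halved
halve_smallest = total_error(0.1, 0.4, 0.8, 0.4)  # system error halved

print(f"baseline:        {baseline:.3f} mm")
print(f"halve largest:   {halve_largest:.3f} mm")
print(f"halve smallest:  {halve_smallest:.3f} mm")
```

In this made-up budget the total is about 1.0 mm; halving the tissue-deformation term brings it to roughly 0.72 mm, while halving the already small system term only reaches about 0.98 mm.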

In conclusion, the field of medical robotics and convergence engineering stands at a pivotal juncture. Driven by unmet clinical needs and powered by rapid advancements in AI, sensing, and communication, it is transitioning from assisting in well-defined procedures to enabling entirely new paradigms of diagnosis, treatment, and continuous care. The successful realization of this future hinges on deep, sustained collaboration between engineers, clinicians, and scientists—a true convergence of disciplines dedicated to advancing human health.