The integration of artificial intelligence into healthcare represents one of the most transformative technological shifts of our time. As a researcher deeply invested in the confluence of law and technology, I observe that medical robots, evolving from simple assistive tools to potentially autonomous diagnostic and surgical agents, promise to revolutionize patient care by enhancing precision, efficiency, and access. However, this profound evolution brings forth equally complex legal and ethical challenges. The core dilemma I aim to explore revolves around a critical question: when a medical robot causes harm, who is to be held accountable? The traditional frameworks of tort liability, built upon clear distinctions between human actors and inert tools, are strained by the “quasi-autonomous” nature of these intelligent systems. This article delves into the intricate problem of attributing liability for harms caused by medical robots, analyzing the inadequacies of current legal paradigms and proposing a structured, phase-sensitive approach to navigate this uncharted territory.

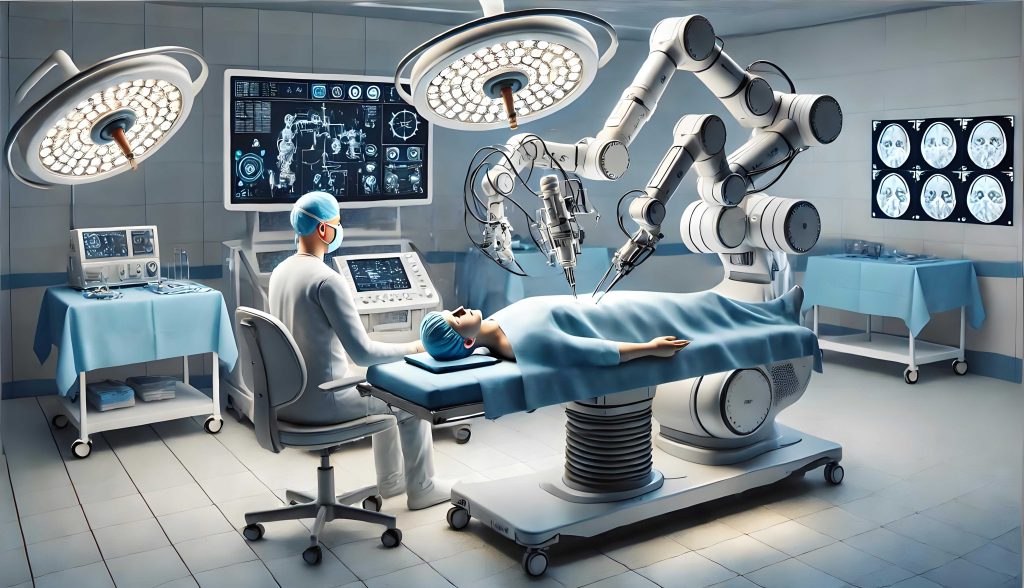

The current landscape of medical robotics encompasses a wide spectrum. At one end are telemanipulative systems such as the da Vinci Surgical System, in which a surgeon controls robotic arms with enhanced precision through a direct master-slave interface. At the other are increasingly sophisticated diagnostic AIs and robotic systems capable of learning from vast datasets. These systems introduce what is often termed the “algorithmic black box”: a situation in which the decision-making process of the medical robot is opaque, even to its designers. The input (patient data) and the output (a diagnosis or surgical action) are observable, but the internal pathway from one to the other is complex, non-linear, and difficult to interpret fully. This opacity, which can be expressed as $y = f(x)$ where the mapping $f$ is non-transparent, fundamentally complicates the establishment of causation and fault, which are cornerstones of tort law.
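To illustrate that an opaque $f$ can still be probed from the outside, the sketch below applies occlusion (perturbation-based) attribution, a generic explainability technique rather than a method prescribed in this article. The function `opaque_model`, its weights, and the patient features are hypothetical. The probe sees only inputs and outputs, exactly as an outside litigant would.

```python
import math
from typing import Callable, Sequence


def opaque_model(x: Sequence[float]) -> float:
    """Hypothetical stand-in for a black-box diagnostic model f.
    An outside observer sees only the inputs and the returned risk score."""
    # x[2] matters only through its interaction with x[0] (a non-linear effect).
    z = 1.8 * x[0] - 0.6 * x[1] + 0.9 * x[0] * x[2] - 1.0
    return 1.0 / (1.0 + math.exp(-z))


def occlusion_attribution(f: Callable[[Sequence[float]], float],
                          x: Sequence[float],
                          baseline: Sequence[float]) -> list[float]:
    """Score each input by how much f's output drops when that single input
    is replaced by its baseline value (a post-hoc probe; internals unused)."""
    y = f(x)
    scores = []
    for i in range(len(x)):
        probe = list(x)
        probe[i] = baseline[i]
        scores.append(y - f(probe))
    return scores


patient = [1.2, 0.5, 1.0]   # hypothetical normalized features
baseline = [0.0, 0.0, 0.0]  # hypothetical "feature absent" reference values
print(occlusion_attribution(opaque_model, patient, baseline))
```

Because the third feature acts only through its interaction with the first, one-at-a-time occlusion yields a local rationale for a single output, not a view of the internal pathway. That gap between a plausible explanation and the actual mechanism is precisely the evidentiary problem described above.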

Before we can intelligently assign liability, we must first confront a foundational jurisprudential question: what is the legal status of an advanced medical robot? This is not a mere philosophical exercise but a practical necessity for constructing a coherent liability framework. The prevailing global consensus, which I support for the current technological epoch, is to deny legal personhood to contemporary medical robots. They are sophisticated products, instruments in the hands of healthcare providers. However, as we project into a future where a medical robot may possess strong AI – capable of independent learning, adaptation, and decision-making without real-time human oversight – the question becomes more pressing. In such a scenario, I argue that conferring a sui generis (of its own kind) legal status, perhaps an “electronic person” model with specifically delineated rights and responsibilities, becomes a plausible and necessary step to manage liability effectively. This status would not equate the robot with a human but would recognize it as a distinct entity within the legal ecosystem for the pragmatic purpose of responsibility assignment and redress.

The liability challenge manifests differently across the developmental continuum of medical robotics. Therefore, a bifurcated analysis is essential: one for the era of “weak” or “narrow” AI (where we currently reside) and another for a prospective era of “strong” or “general” AI in medical robots.

Liability in the Era of Weak AI Medical Robots

Today, most medical robots are highly advanced tools. They operate under the direct or supervisory control of healthcare professionals. When harm occurs, it typically arises from a confluence of potential factors: a product defect (in hardware or software), professional negligence by the user (the surgeon or hospital), or a patient’s unique physiological condition. The legal response, consequently, involves intertwining two traditional strands of tort law: medical malpractice (based on fault) and product liability (often strict).

The primary hurdle in applying these frameworks is the “black box” problem. In a malpractice suit, the patient bears the burden of proving that the healthcare provider breached the standard of care. How does one prove that a surgeon operated a robotic arm negligently when the system’s own feedback logs are indecipherable? Similarly, in a product liability claim, proving a “defect” in the robot’s complex, self-adjusting algorithm is a formidable technical challenge. The standard legal tests seem inadequate: a risk-utility analysis for a design defect, for instance, becomes speculative when the algorithm’s behavior is emergent and unpredictable.
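The speculative character of risk-utility balancing can be made concrete with a simplified, Hand-formula-style comparison of the burden of a precaution $B$ against the expected loss $P \cdot L$, used here only as a stand-in for the multi-factor risk-utility test. All figures are hypothetical, and the sketch assumes the safer design would eliminate the risk entirely.

```python
def precaution_justified(burden: float, p_harm: float, loss: float) -> bool:
    """Hand-formula-style test: a precaution is warranted when its burden B
    is less than the expected loss P * L it would avert."""
    return burden < p_harm * loss


# All figures are hypothetical.
burden = 200_000    # cost of an alternative, safer design (B)
loss = 5_000_000    # magnitude of the harm if it occurs (L)
# Emergent algorithmic behavior leaves P known only within a wide band.
p_bounds = (0.01, 0.10)

verdicts = [precaution_justified(burden, p, loss) for p in p_bounds]
print(verdicts)  # [False, True]
```

When $P$ is known only within a band, the verdict flips depending on which bound the court assumes, so the analysis settles nothing until the probability itself can be established.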

To adapt, I propose calibrated shifts within existing legal principles. For medical malpractice, the doctrine of res ipsa loquitur (“the thing speaks for itself”) or statutory presumptions of fault could be judiciously applied to cases involving opaque medical robot errors. If a robot performs an action clearly outside any intended surgical or diagnostic path, the burden could shift to the hospital to prove that its personnel were not negligent in their oversight, selection, or maintenance of the system. For product liability, courts might adopt a form of “enterprise liability” or a relaxed causation standard for complex, algorithm-driven devices. The inquiry could then focus on whether the manufacturer provided adequate warnings, training, and system transparency logs, rather than demanding impossible proof of a specific algorithmic flaw. The liability nexus in this phase can be expressed as the following decomposition of the probability of harm, whose individual terms are difficult to estimate separately in practice:

$$P(\text{Harm}) = P(\text{Harm} | \text{Product Defect}) \cdot P(\text{Defect}) + P(\text{Harm} | \text{Professional Error}) \cdot P(\text{Error}) + \epsilon$$

where $\epsilon$ represents the irreducible uncertainty from the black box.
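A worked instance of the decomposition, using invented probabilities, shows how its terms could be used to apportion responsibility. The sketch treats product defect and professional error as disjoint pathways and `epsilon` as harm attributable to neither; the class name `HarmModel` and every value are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class HarmModel:
    """Hypothetical inputs to the decomposition of P(Harm).
    Defect and professional error are treated as disjoint pathways;
    epsilon absorbs harm attributable to neither (black-box residue)."""
    p_defect: float             # P(Defect)
    p_harm_given_defect: float  # P(Harm | Product Defect)
    p_error: float              # P(Error)
    p_harm_given_error: float   # P(Harm | Professional Error)
    epsilon: float              # irreducible, unexplained component

    def p_harm(self) -> float:
        return (self.p_harm_given_defect * self.p_defect
                + self.p_harm_given_error * self.p_error
                + self.epsilon)

    def attribution(self) -> dict[str, float]:
        """Share of P(Harm) contributed by each term."""
        total = self.p_harm()
        return {
            "product_defect": self.p_harm_given_defect * self.p_defect / total,
            "professional_error": self.p_harm_given_error * self.p_error / total,
            "unexplained": self.epsilon / total,
        }


model = HarmModel(p_defect=0.01, p_harm_given_defect=0.30,
                  p_error=0.02, p_harm_given_error=0.10, epsilon=0.001)
print(round(model.p_harm(), 6))
print({k: round(v, 3) for k, v in model.attribution().items()})
```

With these inputs, $P(\text{Harm}) = 0.006$, and the defect, error, and residual terms account for one half, one third, and one sixth of it respectively. As $\epsilon$ grows relative to the other terms, neither identified cause may reach the more-likely-than-not threshold that civil claims generally require.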

Liability in the Prospective Era of Strong AI Medical Robots

Envision a future where a medical robot can autonomously conduct a diagnostic consultation, formulate a treatment plan, and execute a complex surgical procedure with only minimal human initiation. In this scenario, the human professional transitions from an operator to a supervisor or a mere “deployer.” The core of the liability inquiry then shifts decisively towards the autonomous agent itself and its creators. This necessitates a more fundamental legal innovation.

First, as argued, granting a specific legal status to such autonomous medical robots becomes pragmatic. This “electronic personhood” would allow the robot to be a direct holder of liabilities. Second, a new, multi-layered liability framework must be constructed. I propose a tiered model:

| Tier | Liable Party | Basis for Liability | Example Scenarios |
|---|---|---|---|
| 1. Robot Entity | The Autonomous Medical Robot | Direct fault for actions taken during autonomous operation. Liability funded by mandatory insurance or a compensation fund. | The robot makes an independent, erroneous anatomical decision during surgery leading to injury. |
| 2. Creator Liability | Designers, Programmers, Manufacturers | Negligence in core design, flawed foundational algorithms, inadequate safety constraints (“frame problem”), or intentional misuse of technology. | A latent bias in the training data causes the robot to systematically misdiagnose a patient demographic. |
| 3. Deployer Liability | Hospital / Healthcare Institution | Negligence in selecting unfit robots, failure to maintain systems, ignoring safety updates, or deploying the robot outside its certified scope. | A hospital uses an autonomous surgical robot for a procedure it was not validated for, without proper oversight. |
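The tiers are cumulative rather than mutually exclusive: a single incident can implicate several parties at once. The minimal sketch below assumes a hypothetical set of boolean findings produced by an incident investigation and maps them onto the table's tiers; the field names are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Incident:
    """Hypothetical findings from an incident investigation (flags illustrative)."""
    autonomous_error: bool = False         # erroneous decision during autonomous operation
    design_flaw: bool = False              # e.g. biased training data, missing safety constraint
    unfit_selection: bool = False          # institution chose a robot unfit for the task
    maintenance_lapsed: bool = False       # system not maintained
    updates_ignored: bool = False          # safety updates not applied
    outside_certified_scope: bool = False  # used for an unvalidated procedure


def implicated_tiers(incident: Incident) -> list[str]:
    """Return every tier the findings implicate; tiers can co-occur."""
    tiers = []
    if incident.autonomous_error:
        tiers.append("1. Robot Entity")
    if incident.design_flaw:
        tiers.append("2. Creator Liability")
    if (incident.unfit_selection or incident.maintenance_lapsed
            or incident.updates_ignored or incident.outside_certified_scope):
        tiers.append("3. Deployer Liability")
    return tiers


# The table's Tier 3 scenario, assuming the robot also erred on its own.
case = Incident(autonomous_error=True, outside_certified_scope=True)
print(implicated_tiers(case))
```

How liability is then divided among the implicated tiers (joint and several, proportional, or otherwise) is a separate policy choice that this mapping deliberately leaves open.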

The attribution of fault would still rely on a principle of wrongfulness, but the standards would need redefinition. For the robot, “fault” could be defined as a deviation from its programmed ethical guidelines or operational parameters, provable through comprehensive and mandated “black box” recorders. The duty of care for designers would expand to encompass rigorous simulation testing for edge cases and the implementation of “explainable AI” (XAI) principles to mitigate opacity. The relationship between human oversight ($O$), robot autonomy ($A$), and expected harm ($H$) could be modeled as:
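Under this definition, robot “fault” becomes checkable against recorder data. The sketch below flags every logged value that falls outside an operational envelope. The parameter names and bounds are invented for illustration and are not clinical thresholds; deviations from ethical guidelines are far harder to encode and are not modeled.

```python
from dataclasses import dataclass

# Hypothetical operational envelope for a surgical robot: parameter -> (min, max).
ENVELOPE = {
    "tool_force_newtons": (0.0, 8.0),
    "tool_speed_mm_s": (0.0, 25.0),
    "distance_to_critical_structure_mm": (2.0, float("inf")),
}


@dataclass
class LoggedAction:
    t_ms: int                 # timestamp from the recorder
    params: dict[str, float]  # parameter values logged at that moment


def deviations(log: list[LoggedAction],
               envelope: dict[str, tuple[float, float]]) -> list[tuple[int, str, float]]:
    """Return (time, parameter, value) for every logged value outside its bounds.
    Parameters absent from the envelope are ignored in this sketch."""
    out = []
    for action in log:
        for name, value in action.params.items():
            if name in envelope:
                lo, hi = envelope[name]
                if not (lo <= value <= hi):
                    out.append((action.t_ms, name, value))
    return out


recorded = [
    LoggedAction(0, {"tool_force_newtons": 3.1, "tool_speed_mm_s": 12.0}),
    LoggedAction(40, {"tool_force_newtons": 9.4,
                      "distance_to_critical_structure_mm": 1.5}),
]
print(deviations(recorded, ENVELOPE))
```

A flagged deviation would be evidence of fault under the proposed standard, not a legal conclusion in itself; whether the envelope was set correctly would remain a question of creator liability.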

$$H = g(A, O, \Theta)$$

where $g$ is a function modeling risk, and $\Theta$ represents the inherent uncertainty and complexity of the medical environment. The legal goal is to define the optimal level of $O$ required as $A$ increases.
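The goal of fixing the required $O$ as $A$ rises can be illustrated by assuming a specific form for $g$. Purely for illustration, take $H = \Theta \cdot A \cdot (1 - O)$ with $A, O \in [0, 1]$, $\Theta$ reduced to a positive scalar, and a tolerated harm ceiling $H_{\max} \ge 0$. The required oversight is then $O_{\min} = \max\left(0,\ 1 - H_{\max} / (\Theta A)\right)$. The functional form and the numbers below are hypothetical.

```python
def expected_harm(autonomy: float, oversight: float, theta: float) -> float:
    """Hypothetical instance of g(A, O, Theta): harm grows with autonomy,
    shrinks linearly with oversight, and scales with environmental risk theta."""
    return theta * autonomy * (1.0 - oversight)


def required_oversight(autonomy: float, theta: float, max_harm: float) -> float:
    """Smallest O in [0, 1] keeping expected_harm <= max_harm (assumes max_harm >= 0)."""
    if autonomy <= 0 or theta * autonomy <= max_harm:
        return 0.0  # the ceiling is met even without oversight
    return 1.0 - max_harm / (theta * autonomy)


for a in (0.2, 0.5, 0.8, 1.0):
    print(a, round(required_oversight(a, theta=0.05, max_harm=0.02), 3))
```

With $\Theta = 0.05$ and $H_{\max} = 0.02$, no oversight is required at $A = 0.2$, while the required level rises to 0.2, 0.5, and 0.6 at $A = 0.5$, $0.8$, and $1.0$. Under any such model, the legal question becomes who sets $H_{\max}$ and how $\Theta$ is estimated for a given clinical setting.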

Central to managing liability for advanced medical robots is the mitigation of the “black box” problem. Legally mandating transparency and auditability is no longer a luxury but a prerequisite for accountability. This involves two key requirements:

- Explainability Mandates: Developers of medical AI should be legally required to integrate XAI techniques that provide post-hoc rationales for decisions, even if the core process remains complex.

- Secure Logging (“Black Box” Recorder): Every advanced medical robot must be equipped with an immutable data recorder that logs all sensor inputs, internal decision-making milestones (to the greatest extent possible), and actuator outputs. This data would be crucial for forensic analysis after an adverse event, serving a similar role to flight data recorders in aviation.
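A truly immutable recorder is an engineering ideal. A common approximation is a hash-chained, append-only log in which every entry commits to the hash of its predecessor, so that any later alteration is detectable. The minimal sketch below, using Python's standard `hashlib` and `json` modules, illustrates the idea; the class name `ChainedLog` and the record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON (sorted keys, fixed separators) so equal records hash identically.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class ChainedLog:
    """Append-only, tamper-evident log: each entry commits to the previous hash."""

    def __init__(self) -> None:
        self.entries: list[tuple[dict, str]] = []

    def append(self, record: dict) -> str:
        prev = self.entries[-1][1] if self.entries else GENESIS
        h = _digest(prev, record)
        self.entries.append((record, h))
        return h

    def verify(self) -> bool:
        """Recompute the chain; any edited record breaks every hash after it."""
        prev = GENESIS
        for record, h in self.entries:
            if _digest(prev, record) != h:
                return False
            prev = h
        return True


recorder = ChainedLog()
recorder.append({"t_ms": 0, "kind": "sensor", "force_n": 3.1})
recorder.append({"t_ms": 5, "kind": "decision", "plan": "retract_tool"})
recorder.append({"t_ms": 9, "kind": "actuator", "joint_3_deg": -12.5})
print(recorder.verify())  # True
recorder.entries[1][0]["plan"] = "advance_tool"  # simulate after-the-fact tampering
print(recorder.verify())  # False
```

Chaining makes tampering evident rather than impossible: whoever controls the whole log could recompute every hash. A deployed recorder would therefore also need its latest hash anchored somewhere the operator cannot rewrite, such as write-once storage or a signature held by an independent party.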

Furthermore, financial responsibility mechanisms must evolve in tandem. An autonomous medical robot, even if recognized as a legal entity, has no assets of its own from which to pay damages. Therefore, a system of mandatory insurance for the operators or deployers of such robots, coupled with a potential industry-wide compensation fund (similar to oil spill funds), is essential to ensure that victims have a viable path to redress. The European Parliament’s 2017 resolution on Civil Law Rules on Robotics, which called for considering a specific legal status for robots together with compulsory insurance and a supplementary compensation fund, provides a valuable template for this.
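How mandatory insurance and a compensation fund might interact can be sketched as a simple payout waterfall. The ordering (insurance first, then the fund) and all figures are assumptions for illustration, not features of any existing scheme.

```python
def allocate_compensation(damages: float, insurance_cap: float,
                          fund_balance: float) -> dict[str, float]:
    """Hypothetical payout order: mandatory insurance first (up to its cap),
    then the industry compensation fund, with any remainder left unpaid."""
    from_insurance = min(damages, insurance_cap)
    from_fund = min(damages - from_insurance, fund_balance)
    unpaid = damages - from_insurance - from_fund
    return {"insurance": from_insurance, "fund": from_fund, "unpaid": unpaid}


# Hypothetical award exceeding the insurance cap; the fund covers the rest.
print(allocate_compensation(damages=1_500_000, insurance_cap=1_000_000,
                            fund_balance=2_000_000))
# Same award with a depleted fund leaves part of the claim unpaid.
print(allocate_compensation(damages=1_500_000, insurance_cap=1_000_000,
                            fund_balance=200_000))
```

A nonzero unpaid remainder signals that insurance caps and fund capitalization would need to be calibrated against realistic damage awards for the scheme to deliver viable redress.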

In conclusion, the journey toward resolving medical robot liability is iterative and must mirror the technology’s own evolution. In the present phase of weak AI, we can and should stretch the existing doctrines of medical malpractice and product liability through strategic adaptations such as burden-shifting and a focus on enterprise responsibility. This pragmatic approach addresses current realities without premature legislation. However, we must simultaneously look forward. The prospect of strongly autonomous medical robots demands proactive legal conceptualization. This involves seriously considering novel legal statuses, constructing layered liability models that clearly distribute risk among robots, creators, and deployers, and enforcing rigorous technical standards for transparency and data logging. The overarching principle must be to foster an ecosystem in which innovation in medical robotics flourishes, but not at the expense of patient safety or justice. By developing a clear, predictable, and fair liability framework, we can build the trust needed to harness the potential of medical robots to improve and save lives, ensuring that the legal architecture evolves as deftly as the technology it seeks to govern.