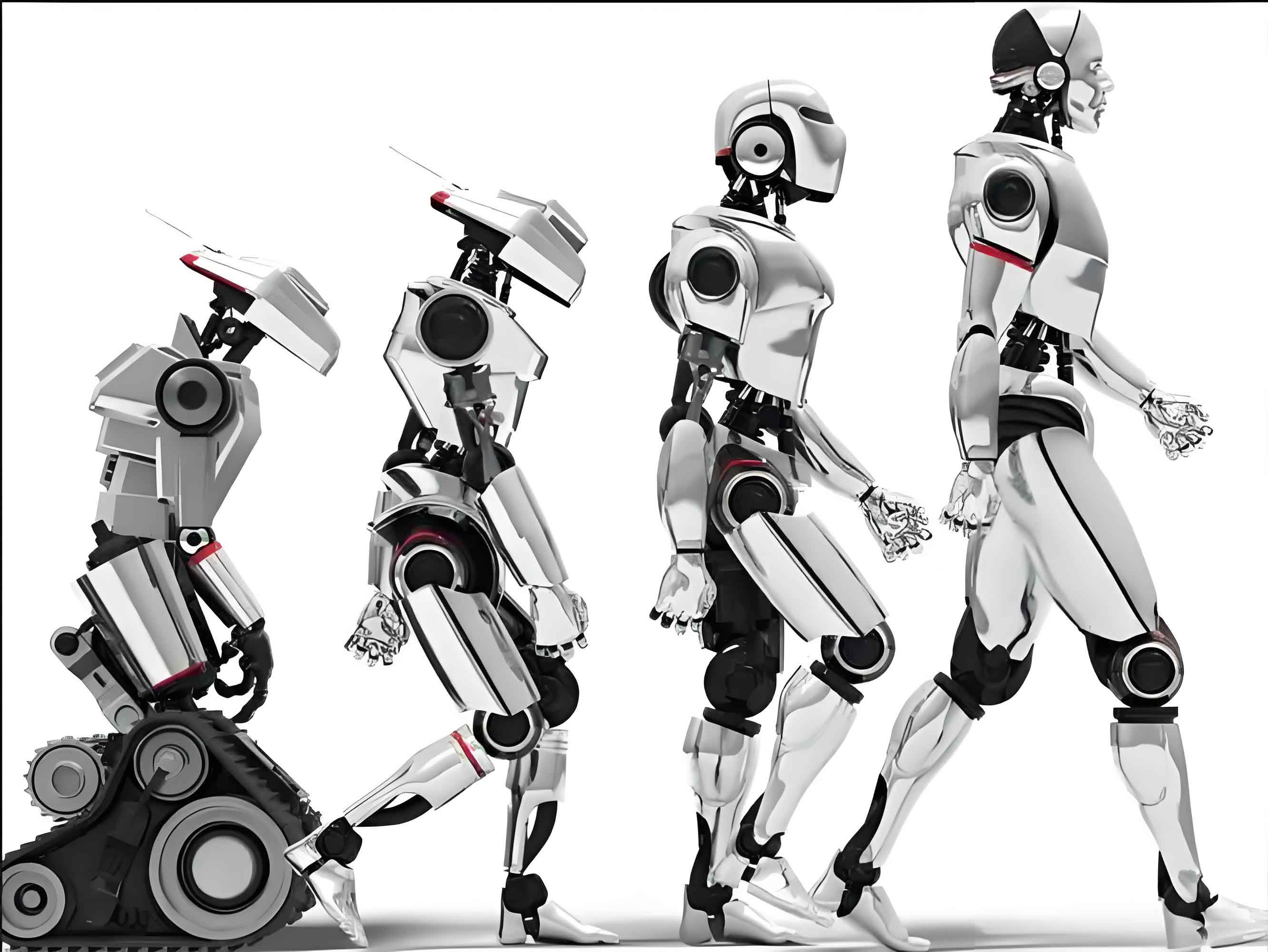

In this comprehensive review, I explore the evolutionary journey from Cyber-Physical Systems (CPS) to embodied intelligent robots, focusing on technological fusion and practical applications. The integration of control theory, artificial intelligence, and robotics has paved the way for advanced intelligent robots that interact seamlessly with their environments. This article delves into the theoretical foundations, key technological advancements, and real-world implementations, emphasizing the role of embodied intelligence in shaping the future of automation.

The concept of cybernetics, introduced by Norbert Wiener in 1948, laid the groundwork for understanding control and communication in animals and machines. This evolved into CPS, which merges computation, communication, and control to bridge physical and cyber domains. Today, embodied intelligent robots represent a paradigm shift, where intelligence emerges from the interaction between body, environment, and cognition. I will analyze this progression, highlighting how technologies like large models, reinforcement learning, and digital twins contribute to the development of intelligent robots.

CPS serves as a foundational framework for intelligent robots, enabling real-time perception, analysis, decision-making, and execution. The core principles of CPS involve the 3C integration: Computation, Communication, and Control. This can be expressed mathematically through state-space representations. For an intelligent robot, the dynamics might be modeled as:

$$ \dot{\mathbf{x}}(t) = f(\mathbf{x}(t), \mathbf{u}(t), t) $$

where $\mathbf{x}(t)$ is the state vector (e.g., position, velocity of the intelligent robot), $\mathbf{u}(t)$ is the control input, and $f$ represents the system dynamics. The communication aspect ensures data flow between sensors, controllers, and actuators in the intelligent robot, often governed by protocols like OPC UA or real-time Ethernet. Control strategies, such as PID or model predictive control, are essential for stabilizing the intelligent robot’s movements. For instance, a PID controller for joint control in an intelligent robot can be formulated as:

$$ u(t) = K_p e(t) + K_i \int_0^t e(\tau) d\tau + K_d \frac{de(t)}{dt} $$

where $e(t)$ is the error between desired and actual joint angles, and $K_p$, $K_i$, $K_d$ are gains tuned for the intelligent robot’s dynamics. This integration facilitates the seamless operation of intelligent robots in complex environments.
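The PID law above can be sketched in discrete time as follows. This is a minimal illustration, not a production controller: the `PID` class, the `simulate_joint` helper, the gain values, and the unit-inertia joint model with viscous damping are all assumptions made for the example, and the integral and derivative are approximated with a rectangular sum and a backward difference.

```python
from dataclasses import dataclass


@dataclass
class PID:
    """Discrete-time PID: rectangular integration, backward-difference derivative."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: float = 0.0

    def step(self, error: float, dt: float) -> float:
        """Return u = Kp*e + Ki*integral(e) + Kd*de/dt for one sample."""
        self.integral += error * dt
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate_joint(target: float, steps: int = 5000, dt: float = 0.001) -> float:
    """Drive a hypothetical joint (theta'' = u - b*theta') toward `target` radians."""
    pid = PID(kp=50.0, ki=5.0, kd=10.0)   # illustrative gains, not tuned for real hardware
    theta, omega, damping = 0.0, 0.0, 0.5
    for _ in range(steps):
        u = pid.step(target - theta, dt)
        omega += (u - damping * omega) * dt   # semi-implicit Euler integration
        theta += omega * dt
    return theta
```

Calling `simulate_joint(1.0)` runs five simulated seconds and returns the final joint angle, which should settle close to the 1 rad target for these gains. A real joint controller would typically also need output saturation and anti-windup, which are omitted here.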

The evolution from control theory to CPS has been driven by the need for higher-level system integration. CPS emphasizes a holistic approach where physical processes and computational algorithms are deeply intertwined. This is crucial for intelligent robots, which must adapt to dynamic conditions. Table 1 summarizes the key differences between traditional automation, CPS, and embodied intelligent robots, highlighting the progression towards autonomy.

| System Type | Core Features | Role of Intelligent Robots | Technological Enablers |
|---|---|---|---|
| Traditional Automation | Fixed programming, isolated control loops | Limited to repetitive tasks as basic intelligent robots | PLC, SCADA |
| Cyber-Physical Systems (CPS) | 3C integration, real-time data flow, virtual-physical coupling | Intelligent robots as integrated nodes with sensing and control | IoT, cloud computing, real-time OS |
| Embodied Intelligent Robots | Body-environment interaction, multimodal perception, cognitive decision-making | Intelligent robots as autonomous agents learning from interactions | AI models, reinforcement learning, digital twins |

In the context of intelligent robots, CPS provides a scalable architecture. For example, the perception-action cycle can be described as a feedback loop in which sensor data $\mathbf{s}(t)$ is processed to generate actions $\mathbf{a}(t)$. This aligns with Wiener’s cybernetic principles, now enhanced with AI. Because such robots operate in real time, loop performance depends on latency and bandwidth. The end-to-end latency of one pass through the loop can be decomposed as:

$$ \tau_{total} = \tau_{sensing} + \tau_{processing} + \tau_{actuation} $$

where each component must be minimized to ensure the intelligent robot responds promptly to environmental changes.

Industrial robot control operating systems have undergone significant evolution, directly impacting the capabilities of intelligent robots. Early systems like ROS (Robot Operating System) offered flexibility but lacked real-time guarantees, limiting their use in industrial intelligent robots. In contrast, iROS (industrial Robot Operating System) was developed to address these gaps, providing a unified platform for controlling intelligent robots with millisecond-level precision. The architecture of iROS supports modular design, allowing for the integration of various intelligent robot functionalities. Key algorithms in iROS include kinematics and dynamics computations. For an intelligent robot with $n$ joints, forward kinematics calculates the end-effector pose $\mathbf{T}$:

$$ \mathbf{T} = \prod_{i=1}^{n} A_i(\theta_i) $$

where $A_i$ is the transformation matrix for joint $i$ with angle $\theta_i$. Inverse kinematics, essential for path planning in intelligent robots, solves for $\theta_i$ given $\mathbf{T}$, often using numerical methods like:

$$ \min_{\theta} \lVert f(\theta) - \mathbf{T} \rVert^2 $$

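Here $f(\theta)$ denotes the forward-kinematics map from joint angles to end-effector pose, so the minimization searches for joint angles whose predicted pose matches the target $\mathbf{T}$. This enables precise control of intelligent robots in tasks such as welding or assembly. Table 2 compares iROS and ROS in the context of intelligent robot applications.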

| Feature | iROS (Industrial Focus) | ROS (Research Focus) |
|---|---|---|
| Real-time Performance | Guaranteed, suitable for high-speed intelligent robots | Best-effort, limited for critical intelligent robot tasks |
| Modularity | High, with plug-and-play modules for intelligent robots | High, but relies on community packages |
| Standard Compliance | Supports IEC standards for intelligent robot integration | Lacks industrial standards |
| Application Scope | Optimized for manufacturing intelligent robots | Broad, including mobile intelligent robots |
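Returning to the kinematics formulas given before Table 2, the following sketch shows forward kinematics as a chain of per-joint transforms and inverse kinematics as a least-squares minimization. It is a simplified illustration under stated assumptions: the arm is planar, only the end-effector position (not the full pose $\mathbf{T}$) is matched, and the minimization uses plain gradient descent with a finite-difference gradient. The function names and step sizes are hypothetical and do not describe how iROS solves the problem.

```python
import math


def forward_kinematics(thetas, lengths):
    """End-effector (x, y) of a planar serial arm.

    Each joint contributes a rotation followed by a link offset, which is
    the planar analogue of multiplying the per-joint transforms A_i(theta_i).
    """
    x = y = angle = 0.0
    for theta, length in zip(thetas, lengths):
        angle += theta
        x += length * math.cos(angle)
        y += length * math.sin(angle)
    return x, y


def inverse_kinematics(target, lengths, thetas, rate=0.1, iters=5000, eps=1e-6):
    """Minimise ||f(theta) - target||^2 by gradient descent (numerical gradient)."""
    thetas = list(thetas)

    def cost(q):
        x, y = forward_kinematics(q, lengths)
        return (x - target[0]) ** 2 + (y - target[1]) ** 2

    for _ in range(iters):
        base = cost(thetas)
        grad = []
        for i in range(len(thetas)):
            bumped = thetas[:]
            bumped[i] += eps                      # forward finite difference
            grad.append((cost(bumped) - base) / eps)
        thetas = [q - rate * g for q, g in zip(thetas, grad)]
    return thetas
```

For a two-link arm with unit link lengths, `inverse_kinematics((1.0, 1.0), [1.0, 1.0], [0.3, 0.3])` returns joint angles whose forward kinematics land on the target point. Industrial solvers more commonly use analytic solutions or Jacobian-based iterations, which converge faster than this sketch.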

The fusion of artificial intelligence and control theory has been pivotal in advancing intelligent robots. AI techniques, from symbolic logic to deep learning, enhance the cognitive abilities of intelligent robots. For instance, deep neural networks enable intelligent robots to recognize objects or understand language. A convolutional neural network (CNN) for vision in an intelligent robot can be expressed as:

$$ \mathbf{y} = \sigma(\mathbf{W} * \mathbf{x} + \mathbf{b}) $$

where $\mathbf{x}$ is the input image from the intelligent robot’s camera, $\mathbf{W}$ are weights, $\mathbf{b}$ biases, $*$ denotes convolution, and $\sigma$ is an activation function. Reinforcement learning (RL) further allows intelligent robots to learn optimal policies through interaction. The Q-learning update rule for an intelligent robot is:

$$ Q(s,a) \leftarrow Q(s,a) + \alpha [r + \gamma \max_{a'} Q(s',a') - Q(s,a)] $$

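where $s$ is the current state, $a$ the action taken, $r$ the reward received, $s'$ the resulting next state, $a'$ ranges over the actions available in $s'$, $\alpha$ is the learning rate, and $\gamma$ is the discount factor. This empowers intelligent robots to adapt to new tasks without explicit programming.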
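To make the update rule concrete, here is a minimal tabular Q-learning sketch. The corridor task, the `train_corridor` helper, the epsilon-greedy exploration, and all hyperparameter values are assumptions chosen for illustration; a real robot would face a far larger state space and usually needs function approximation instead of a table.

```python
import random
from collections import defaultdict


def q_update(Q, s, a, r, s_next, actions, alpha=0.1, gamma=0.9):
    """Apply one Q-learning update in place and return the new Q(s, a)."""
    best_next = max(Q[(s_next, a2)] for a2 in actions)
    Q[(s, a)] += alpha * (r + gamma * best_next - Q[(s, a)])
    return Q[(s, a)]


def train_corridor(episodes=500, length=5, epsilon=0.2, seed=0):
    """Hypothetical toy task: walk a 1-D corridor; reward 1 on reaching the right end."""
    rng = random.Random(seed)
    actions = (-1, +1)
    Q = defaultdict(float)          # unseen (state, action) pairs start at 0
    for _ in range(episodes):
        s = 0
        while s != length - 1:
            if rng.random() < epsilon:
                a = rng.choice(actions)           # explore
            else:
                # exploit, breaking ties between equal Q-values at random
                a = max(actions, key=lambda act: (Q[(s, act)], rng.random()))
            s_next = min(max(s + a, 0), length - 1)
            r = 1.0 if s_next == length - 1 else 0.0
            q_update(Q, s, a, r, s_next, actions)
            s = s_next
    return Q
```

After training, `Q[(s, +1)]` exceeds `Q[(s, -1)]` in every non-terminal state, so the greedy policy moves toward the rewarded end of the corridor.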

Generative AI and large language models (LLMs) have opened new frontiers for intelligent robots. Models like ChatGPT demonstrate how natural language processing can be leveraged for human-robot interaction. In embodied intelligent robots, LLMs serve as “brains” that process instructions and generate plans. The training of such models involves minimizing a loss function over a dataset $\mathcal{D}$:

$$ \mathcal{L} = -\sum_{(x,y) \in \mathcal{D}} \log P(y|x;\theta) $$

where $x$ are inputs (e.g., sensor data from intelligent robots), $y$ are outputs (e.g., control commands), and $\theta$ are model parameters. This enables intelligent robots to understand and execute complex commands, making them more versatile.
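The loss above is a negative log-likelihood summed over the dataset. The sketch below evaluates it for a toy classifier whose outputs are raw scores (logits); the `softmax` and `nll_loss` names and the batch layout of `(logits, target_index)` pairs are assumptions for this example, not the interface of any particular LLM framework.

```python
import math


def softmax(logits):
    """Convert raw scores to probabilities, shifting by the max for numerical stability."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def nll_loss(batch):
    """L = -sum over (x, y) of log P(y | x; theta).

    `batch` is an iterable of (logits, target_index) pairs, where `logits`
    stands in for the model's output on input x and `target_index` for y.
    """
    return -sum(math.log(softmax(logits)[target]) for logits, target in batch)
```

For a single example with two equally scored outputs, `nll_loss([([0.0, 0.0], 0)])` equals $\log 2$, and the loss shrinks as the model assigns more probability to the correct output.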

RobotCPS represents a conceptual fusion where intelligent robots are embedded within a CPS framework. This approach integrates robot control, information flow, and AI into a cohesive platform. The architecture of RobotCPS for intelligent robots includes four layers: physical, perception, cognition, and execution. Mathematically, this can be modeled as a hierarchical system. The physical layer involves dynamics of the intelligent robot, while the perception layer processes multimodal inputs $\mathbf{I}$ (e.g., vision, force):

$$ \mathbf{F} = g(\mathbf{I}) $$

where $g$ is a fusion function, perhaps using attention mechanisms: $\mathbf{F} = \sum_i \alpha_i \mathbf{I}_i$, with $\alpha_i$ learned weights. The cognition layer, powered by AI, makes decisions for the intelligent robot, and the execution layer implements control commands. This closed-loop system enhances the autonomy of intelligent robots in industrial settings.
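The attention-style fusion $\mathbf{F} = \sum_i \alpha_i \mathbf{I}_i$ can be sketched as below. Two assumptions are worth stating: each modality has already been embedded into a feature vector of the same dimension, and the per-modality relevance scores are passed in by hand, whereas in a trained system they would be produced by a learned network. The `fuse` function is a hypothetical name for this illustration.

```python
import math


def softmax(scores):
    """Normalise scores into weights alpha_i that are positive and sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse(features, scores):
    """Return F = sum_i alpha_i * I_i with alpha = softmax(scores).

    `features` is a list of equal-length vectors, one per modality
    (for example a vision embedding and a force embedding).
    """
    alphas = softmax(scores)
    dim = len(features[0])
    return [sum(a * f[d] for a, f in zip(alphas, features)) for d in range(dim)]
```

With equal scores the modalities are simply averaged, so `fuse([[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0])` gives `[0.5, 0.5]`; raising one score shifts the fused vector toward that modality.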

Embodied intelligent robots leverage this fusion to achieve higher-level intelligence. The concept of “embodiment” stresses that intelligence in robots arises from physical interaction. For an intelligent robot, this means integrating sensors and actuators to form a perception-action cycle. The dynamics can be extended to include environmental feedback:

$$ \dot{\mathbf{x}} = f(\mathbf{x}, \mathbf{u}, \mathbf{e}) $$

where $\mathbf{e}$ represents environmental variables. This makes the intelligent robot responsive to changes, such as obstacles or varying payloads. The role of digital twins is crucial here, creating virtual models of intelligent robots for simulation and optimization. A digital twin might predict the intelligent robot’s performance using differential equations, reducing real-world testing.
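As a rough illustration of how a digital twin might roll the model $\dot{\mathbf{x}} = f(\mathbf{x}, \mathbf{u}, \mathbf{e})$ forward in time, the sketch below integrates it with forward Euler. The `simulate` and `cart_dynamics` functions, the unit-mass cart, and the idea of representing the environment as an external force are all assumptions for this example; a real twin would use a validated physics model and a more accurate integrator.

```python
def simulate(f, x0, controller, environment, dt=0.01, steps=1000):
    """Forward-Euler rollout of x' = f(x, u, e).

    `controller(x)` returns the control input u and `environment(t)`
    returns the environmental variable e at time t.
    """
    x = list(x0)
    t = 0.0
    for _ in range(steps):
        e = environment(t)
        u = controller(x)
        dx = f(x, u, e)
        x = [xi + dt * dxi for xi, dxi in zip(x, dx)]
        t += dt
    return x


def cart_dynamics(x, u, e):
    """Hypothetical 1-D cart with unit mass: state is (position, velocity)."""
    position, velocity = x
    return [velocity, u + e]   # acceleration = control force + external force
```

For example, `simulate(cart_dynamics, [0.0, 0.0], lambda x: -20.0 * x[0] - 5.0 * x[1], lambda t: 2.0)` applies a proportional-derivative controller against a constant external push. The cart settles near position 0.1, where the control force balances the disturbance, which is the kind of steady-state offset a twin can reveal before hardware testing.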

The integration of large models with embodied intelligent robots is exemplified by platforms like RUDA (Robot Unified Device Architecture). RUDA combines the “cerebellum” (iROS for low-level control of intelligent robots) with the “cerebrum” (LLMs for high-level reasoning). This allows for the development of specialized intelligent robots tailored to specific domains. For instance, in manufacturing, an intelligent robot can use a proprietary LLM to interpret natural language instructions. The training process for such an intelligent robot involves fine-tuning a base model on domain-specific data, optimizing parameters $\theta$ via gradient descent:

$$ \theta \leftarrow \theta - \eta \nabla_\theta \mathcal{L} $$

where $\eta$ is the learning rate. This results in intelligent robots that are both adaptable and efficient. Table 3 outlines the components of RUDA for intelligent robots.

| Component | Function in Intelligent Robots | Technologies Involved |
|---|---|---|
| iROS Integration | Provides real-time control for intelligent robots | Kinematics algorithms, trajectory planning |
| LLM Brain | Enables natural language understanding for intelligent robots | Transformer models, fine-tuning |
| Multimodal Fusion | Combines sensor data for intelligent robot perception | CNNs, RNNs, attention networks |
| Digital Twin | Simulates intelligent robot behavior for optimization | Physics engines, data analytics |

In practical applications, RobotCPS and embodied intelligent robots demonstrate significant value. For example, in smart manufacturing, an intelligent robot can perform assembly tasks while communicating with other machines via CPS. The control system might use optimal control theory to minimize energy consumption. Consider an intelligent robot with dynamics $\dot{x} = Ax + Bu$. A linear-quadratic regulator (LQR) can be designed to minimize cost:

$$ J = \int_0^\infty (x^T Q x + u^T R u) dt $$

where $Q \succeq 0$ and $R \succ 0$ are weighting matrices that penalize state deviation and control effort, respectively; increasing $R$ favors lower actuator effort, which serves as a proxy for energy use. Additionally, AI modules assist in predictive maintenance for intelligent robots, analyzing sensor data to detect anomalies. A simple anomaly detection model for an intelligent robot could use statistical thresholds:

$$ \text{Alert if } |x - \mu| > k\sigma $$

where $x$ is a sensor reading from the intelligent robot, $\mu$ and $\sigma$ are the mean and standard deviation of that reading under normal operation, and $k$ is a constant (for example, $k = 3$). This proactive approach reduces downtime for intelligent robots.
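The threshold rule can be sketched in a few lines. The `find_anomalies` name is hypothetical, and the sketch assumes that $\mu$ and $\sigma$ are estimated from a baseline window of readings recorded during normal operation.

```python
from statistics import mean, stdev


def find_anomalies(baseline, readings, k=3.0):
    """Return indices of readings with |x - mu| > k * sigma.

    mu and sigma are the sample mean and sample standard deviation of
    `baseline`, which is assumed to contain only normal-operation data.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    return [i for i, x in enumerate(readings) if abs(x - mu) > k * sigma]
```

Given a baseline of motor temperatures clustered around 10.0, a later reading of 12.5 would be flagged while 10.1 would not. The rule is easy to audit, but it assumes roughly stationary, bell-shaped noise, so drifting sensors need the baseline refreshed.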

The fusion of technologies also enables intelligent robots to collaborate with humans. Through natural language interfaces, an intelligent robot can receive verbal commands and provide feedback. The integration of brain-computer interfaces (BCIs) might allow direct neural control of intelligent robots, though this is emerging. In such cases, signal processing algorithms decode neural signals $\mathbf{n}(t)$ into commands for the intelligent robot:

$$ \mathbf{a}(t) = h(\mathbf{n}(t)) $$

where $h$ is a decoding function, often learned via machine learning. This expands the versatility of intelligent robots in assistive scenarios.

From a theoretical perspective, the evolution from CPS to embodied intelligent robots represents a shift from system integration to cognitive empowerment. Intelligent robots are no longer mere tools but active agents that learn and adapt. This is underpinned by advancements in compute power, algorithms, and data availability. For intelligent robots, the scalability of solutions is critical. Cloud-edge computing architectures distribute processing: heavy AI tasks for intelligent robots are handled in the cloud, while real-time control occurs at the edge. This balances latency and computational load for intelligent robots.

Challenges remain in the development of intelligent robots, including explainability of AI decisions and safety assurance. Formal methods can be applied to verify intelligent robot behaviors. For instance, temporal logic can specify safety properties for an intelligent robot: $\square \neg \text{collision}$, meaning “always no collision.” Model checking can then verify whether the intelligent robot’s control algorithms satisfy such specifications. Moreover, energy efficiency is vital for mobile intelligent robots. Battery dynamics can be modeled as:

$$ \frac{dE}{dt} = -P(\mathbf{u}, \mathbf{x}) $$

where $E$ is energy and $P$ power consumption, dependent on the intelligent robot’s actions and state. Optimization techniques help maximize the operational time of intelligent robots.
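A simple way to use the battery model is to integrate it until the stored energy runs out. In the sketch below, the `remaining_runtime` function is a hypothetical helper, and power draw is supplied as a function of time, standing in for $P(\mathbf{u}, \mathbf{x})$ evaluated along a planned trajectory. Battery effects such as voltage sag and temperature dependence are ignored.

```python
def remaining_runtime(energy_wh, power_w, dt_s=1.0, max_s=86_400.0):
    """Integrate dE/dt = -P(t) with forward Euler.

    `energy_wh` is the initial stored energy in watt-hours and `power_w(t)`
    returns the power draw in watts at time t (seconds). Returns the time in
    seconds at which the energy reaches zero, capped at `max_s`.
    """
    energy_j = energy_wh * 3600.0      # convert Wh to joules
    t = 0.0
    while energy_j > 0.0 and t < max_s:
        energy_j -= power_w(t) * dt_s
        t += dt_s
    return t
```

For instance, `remaining_runtime(10.0, lambda t: 36.0)` models a 10 Wh pack under a constant 36 W load and returns 1000 seconds. Comparing the result across candidate trajectories gives a basic way to rank them by endurance.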

In conclusion, the technological fusion from CPS to embodied intelligent robots marks a transformative era in automation. Intelligent robots, equipped with advanced perception, cognition, and execution capabilities, are poised to revolutionize industries and daily life. The integration of control theory, AI, and robotics has enabled intelligent robots to transition from passive executors to active learners. As research progresses, intelligent robots will become more autonomous, collaborative, and ubiquitous, driving forward the vision of a human-robot symbiotic society. This journey underscores the importance of interdisciplinary approaches in shaping the future of intelligent robots.

The mathematical formulations, tables, and discussions presented here illustrate the depth of this fusion. For instance, the synergy between CPS and intelligent robots can be summarized through a unified framework. Let the overall performance metric for an intelligent robot system, written $J_{\text{sys}}$ to distinguish it from the LQR cost $J$, be a weighted sum of multiple factors:

$$ J_{\text{sys}} = \lambda_1 \cdot \text{Accuracy} + \lambda_2 \cdot \text{Efficiency} + \lambda_3 \cdot \text{Adaptability} $$

where $\lambda_i$ are weights reflecting priorities for the intelligent robot. This holistic view guides the design and deployment of intelligent robots in diverse applications. As technology evolves, further innovations will continue to enhance the capabilities of intelligent robots, making them integral to our world.