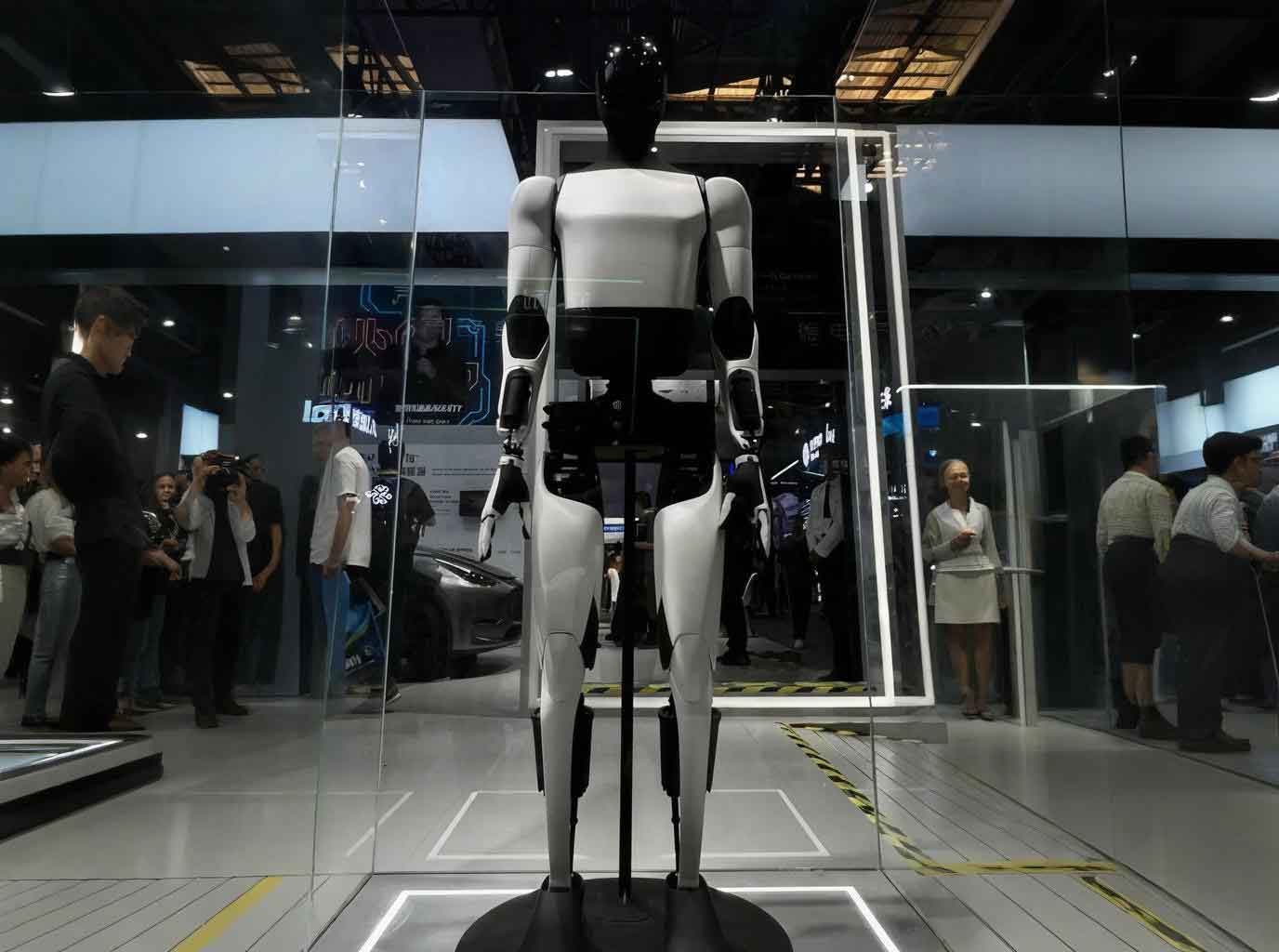

The advent of robotics and artificial intelligence has opened new opportunities for embodied intelligence. Embodied intelligence refers to integrating AI into a physical carrier, such as a robot, so that the agent can interact with its environment, acquire information, understand problems, make decisions, and execute tasks in a human-like manner, thereby exhibiting intelligent behavior and adaptability. This paradigm significantly raises the level of intelligence and the motion performance of robots, and a growing variety of embodied AI robots has emerged. These robots are gradually being deployed in fields including construction, security patrol, emergency rescue, and industrial production, continuously improving the quality of human life. A critical enabler for their autonomous navigation and task execution in real-world scenarios is the ability to build high-precision maps and localize in real time.

Simultaneous Localization and Mapping (SLAM) technology provides the high-precision maps and real-time pose estimates that embodied AI robots need. It enables a robot to move autonomously in an unknown environment without a prior map, estimating its own position and building a map of its surroundings by processing sensor data. Based on the primary sensor, SLAM is broadly categorized into visual SLAM and LiDAR SLAM. Visual SLAM relies on cameras, which are information-rich and compact, but its performance is highly sensitive to lighting conditions. LiDAR SLAM uses laser rangefinders to acquire high-precision distance measurements; it is unaffected by lighting conditions and can achieve high-precision localization and mapping in complex environments such as construction sites.

Most mainstream 3D LiDAR SLAM algorithms assume a static environment. However, real-world scenes such as construction sites, urban roads, and shopping centers are inherently dynamic, containing moving objects such as pedestrians, vehicles, and animals. These dynamic objects introduce “ghosts,” or artifacts, into the 3D point cloud map. The artifacts can be mistaken for obstacles, hindering subsequent path planning for the robot, and can also obscure static structures, degrading map quality. More critically, LiDAR SLAM relies on point cloud registration for pose estimation, so registering point clouds contaminated with dynamic points introduces errors into the pose estimate and reduces the robot's localization accuracy. Effectively removing dynamic points to achieve accurate localization and mapping has therefore become a prominent research direction for embodied AI robots. This review surveys research on 3D LiDAR SLAM for embodied AI robots in dynamic environments.

1. Methods for Dynamic Point Cloud Removal

The core challenge in dynamic LiDAR SLAM is detecting and removing points that belong to moving objects. Existing methods can be categorized by their underlying detection principle: semantic segmentation-based, ray tracing-based, and visibility-based approaches.

1.1 Semantic Segmentation-Based Methods

These methods typically employ clustering or, more recently, deep learning to segment and classify point clouds, identifying and then removing points that belong to known dynamic object categories (e.g., cars, pedestrians). The core idea is that if dynamic objects can be recognized accurately, their points can be eliminated easily. Recent advances in deep learning have been widely applied to this task: networks learn to assign a semantic label to each point, and points labeled as dynamic are filtered out. While effective for object types seen during training, these methods depend heavily on large annotated datasets, struggle with unlabeled or novel dynamic objects, and typically require substantial GPU resources. They may also fail to segment small parts of dynamic objects accurately.

| Year | Author | Key Innovation | Limitation |
|---|---|---|---|
| 2009 | Petrovskaya et al. | 2D bounding box modeling for vehicles. | Poor applicability to pedestrians, cyclists, etc. |
| 2018 | Ruchti et al. | Neural network estimates per-point dynamic probability. | Cannot detect objects not seen during training. |
| 2019 | Liu et al. (FlowNet3D) | End-to-end scene flow estimation from two point clouds. | Does not integrate motion information. |
| 2019 | Milioto et al. (RangeNet++) | Semantic segmentation on range images with efficient post-processing. | Low accuracy for small targets (e.g., pedestrians). |
| 2020 | Zhou et al. (Cylinder3D) | 3D cylinder convolution for point cloud semantic segmentation. | High computational cost, not real-time capable. |
| 2022 | Kim et al. (RVMOS) | Fuses motion and semantic features. | Difficulty detecting small dynamic object boundaries. |
| 2022 | Sun et al. (MotionSeg3D) | Fuses appearance and motion features without semantic supervision. | Performance degrades with additional training data. |
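Once a network has produced per-point labels, the removal step itself is a simple mask. The sketch below assumes NumPy and uses hypothetical label IDs (`DYNAMIC_IDS`); real datasets define their own label maps. It also shows the weakness noted above: a point of a novel moving object that was labeled as background passes straight through.

```python
import numpy as np

# Hypothetical label IDs for illustration; real datasets (e.g. SemanticKITTI) define their own label maps.
DYNAMIC_IDS = {1, 2, 3}  # e.g. car, pedestrian, cyclist

def remove_dynamic_by_label(points, labels, dynamic_ids=DYNAMIC_IDS):
    """Drop points whose predicted semantic label is a dynamic class.

    points: (N, 3) array; labels: (N,) integer labels from a segmentation network.
    Returns the remaining points and the boolean mask of removed points.
    """
    points = np.asarray(points, dtype=float)
    is_dynamic = np.isin(np.asarray(labels), list(dynamic_ids))
    return points[~is_dynamic], is_dynamic

pts = np.array([[1.0, 0.0, 0.0], [2.0, 1.0, 0.0], [5.0, 5.0, 1.0], [0.5, 0.5, 0.2]])
labels = np.array([0, 2, 0, 1])  # 0 = static background in this toy example
static_pts, removed = remove_dynamic_by_label(pts, labels)
print(static_pts.shape)  # (2, 3)
```

Filtering purely by class also removes parked cars, which is one reason methods such as RVMOS and MotionSeg3D add motion cues.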

1.2 Ray Tracing-Based Methods

These methods discretize 3D space into voxels and maintain a probabilistic occupancy state for each voxel based on how often laser rays end in it (hits) versus pass through it (misses). The principle is that dynamic objects are present only temporarily: a voxel occupied by a dynamic object is hit only sporadically, whereas over time most rays pass through it. By analyzing the hit/miss history of each voxel, voxels with a low hit probability are identified as dynamic and the points within them are removed. OctoMap provides a foundational framework for this using octree structures. Although powerful for map cleaning, these approaches require substantial memory and computation, depend on accurate pose estimates, and may struggle with transparent objects.

| Year | Author | Key Innovation | Limitation |
|---|---|---|---|
| 2013 | Hornung et al. (OctoMap) | Probabilistic 3D mapping using octrees. | Trade-off between voxel resolution and computation/memory. |
| 2018 | Schauer et al. (Peopleremover) | Voxel traversal with safe truncation ray for dynamic object removal. | High computational resource consumption. |
| 2020 | Pagad et al. | Octree-based occupancy map building for dynamic point identification. | Not suitable for very large-scale outdoor maps. |
| 2024 | Lyu et al. | Multi-ray tracing with height feature constraints for dynamic ground points. | Performance decreases on uneven or rugged terrain. |
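The hit/miss bookkeeping described above can be sketched with a hash map of per-voxel log-odds. This is a minimal illustration, not OctoMap's implementation: it assumes NumPy, uses a flat dictionary instead of an octree, approximates ray traversal by sampling every quarter voxel rather than an exact voxel walk, and the log-odds constants are illustrative values.

```python
import numpy as np
from collections import defaultdict

# Illustrative log-odds parameters (assumed values, not taken from any cited method).
L_HIT, L_MISS = 0.85, -0.4
L_MIN, L_MAX = -2.0, 3.5

def voxel_of(p, size):
    """Integer index of the voxel containing point p."""
    return tuple(np.floor(np.asarray(p, dtype=float) / size).astype(int))

def integrate_scan(logodds, origin, points, size):
    """Update per-voxel log-odds: the endpoint voxel is a hit, voxels crossed by the ray are misses.

    Ray traversal is approximated by sampling every quarter voxel, not by an exact voxel walk.
    """
    origin = np.asarray(origin, dtype=float)
    for p in np.asarray(points, dtype=float):
        end_vox = voxel_of(p, size)
        n_steps = max(int(np.linalg.norm(p - origin) / (0.25 * size)), 1)
        missed = {voxel_of(origin + (p - origin) * s / n_steps, size) for s in range(n_steps)}
        missed.discard(end_vox)
        for v in missed:
            logodds[v] = max(L_MIN, logodds[v] + L_MISS)
        logodds[end_vox] = min(L_MAX, logodds[end_vox] + L_HIT)

def dynamic_mask(logodds, points, size, threshold=0.0):
    """Points whose voxel ended with log-odds below threshold (mostly passed through) are flagged dynamic."""
    return np.array([logodds[voxel_of(p, size)] < threshold for p in points])

# Toy example: a pedestrian seen once at x = 2.1 m, then a wall at x = 5.1 m seen through the same spot.
size = 0.5
logodds = defaultdict(float)
ped, wall = [2.1, 0.1, 0.1], [5.1, 0.1, 0.1]
integrate_scan(logodds, [0, 0, 0], [ped], size)
for _ in range(4):
    integrate_scan(logodds, [0, 0, 0], [wall], size)
mask = dynamic_mask(logodds, np.array([ped, wall]), size)
print(mask)  # [ True False]
```

Clamping the log-odds keeps voxels able to change state later; the memory cost mentioned above shows up here as one dictionary entry per traversed voxel.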

1.3 Visibility-Based Methods

This approach exploits the fact that a laser ray travels in a straight line. If observations taken at different times return points at different ranges along the same ray, the closer point most likely belongs to a dynamic object: the farther return shows that the ray passed freely through the location the closer point once occupied. By comparing the current scan with a prior map (or previous scans), points that lie closer than the geometry observed along the same ray are flagged as dynamic. This is computationally simpler than ray tracing because no full volumetric map needs to be maintained. However, it tends to falsely remove ground points when the incidence angle approaches 90 degrees, and it cannot detect dynamic points that were completely occluded in the prior map (e.g., a pedestrian hidden behind a large truck).

| Year | Author | Key Innovation | Limitation |
|---|---|---|---|
| 2020 | Kim et al. (Removert) | Iterative static point recovery using multi-resolution range images. | Aggressive removal erroneously deletes static points; ground points are mislabeled. |
| 2021 | Qian et al. (RF-LIO) | Integrates adaptive multi-resolution range images into a LiDAR-Inertial Odometry. | Poor performance in open areas lacking reference structures. |
| 2024 | Jia et al. (BeautyMap) | Binary-encoded matrix for fast dynamic region identification. | Difficulty handling scenes with multiple ground levels. |
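The visibility test reduces to comparing two spherical range images pixel by pixel. The sketch below assumes NumPy, a hypothetical 32 x 360 image with a ±15° vertical field of view, and that the map has already been transformed into the current sensor frame with the estimated pose. Map points are flagged when the scan measures a noticeably larger range in the same pixel, i.e. the current ray passed through them. A single-resolution image is used here; Removert and RF-LIO use multiple or adaptive resolutions.

```python
import numpy as np

def range_image(points, h=32, w=360, fov_up=15.0, fov_down=-15.0):
    """Project sensor-frame points (N, 3) onto a spherical range image keeping the minimum range per pixel.

    Returns the image and the (row, col) pixel of every input point.
    """
    pts = np.asarray(points, dtype=float)
    r = np.linalg.norm(pts, axis=1)
    yaw = np.arctan2(pts[:, 1], pts[:, 0])
    pitch = np.arcsin(np.clip(pts[:, 2] / np.maximum(r, 1e-9), -1.0, 1.0))
    up, down = np.radians(fov_up), np.radians(fov_down)
    col = ((yaw + np.pi) / (2 * np.pi) * w).astype(int) % w
    row = np.clip(((up - pitch) / (up - down) * h).astype(int), 0, h - 1)
    img = np.full((h, w), np.inf)
    np.minimum.at(img, (row, col), r)  # keep the closest return per pixel
    return img, row, col

def visibility_dynamic_mask(map_pts, scan_pts, margin=0.5, **kw):
    """Flag map points that lie in front of what the current scan measures in the same pixel.

    Both clouds must already be in the current sensor frame. `margin` (assumed value)
    tolerates range noise; small values make grazing-angle ground points easy to misflag.
    """
    scan_img, _, _ = range_image(scan_pts, **kw)
    map_r = np.linalg.norm(np.asarray(map_pts, dtype=float), axis=1)
    _, row, col = range_image(map_pts, **kw)
    scan_r = scan_img[row, col]
    return np.isfinite(scan_r) & (scan_r > map_r + margin)

map_pts = np.array([[3.0, 0.0, 0.0], [10.0, 0.0, 0.0], [0.0, 8.0, 0.0]])  # pedestrian, wall, side wall
scan_pts = np.array([[10.0, 0.0, 0.0], [0.0, 8.0, 0.0]])                  # pedestrian has left
print(visibility_dynamic_mask(map_pts, scan_pts))  # [ True False False]
```

Pixels the scan never observed (infinite range) are left untouched, which is why points occluded in every scan cannot be resolved by this test.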

2. Handling Strategies for Dynamic Objects in LiDAR SLAM Frameworks

2.1 Classification of Objects by Dynamism

To apply appropriate strategies, it is useful to classify objects based on their temporal behavior:

- High-Dynamic Objects: Constantly moving (e.g., walking people, driving vehicles).

- Low-Dynamic Objects: Temporarily stationary (e.g., people standing still, vehicles at a traffic light).

- Semi-Dynamic Objects: Stationary within a single SLAM session but movable between sessions (e.g., chairs, parked cars, temporary materials).

- Static Objects: Permanently fixed (e.g., buildings, roads, fixed infrastructure).

The first three categories are collectively referred to as dynamic objects and require different handling strategies within the SLAM pipeline.
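The four categories can be read as a decision rule over what is known about an object's motion, paired with the strategy that primarily targets each class in the following subsections. The sketch below is a hypothetical labelling rule with three boolean inputs chosen for illustration; in a real system these observations would come from tracking and multi-session comparison.

```python
from enum import Enum

class Dynamism(Enum):
    HIGH = "high-dynamic"
    LOW = "low-dynamic"
    SEMI = "semi-dynamic"
    STATIC = "static"

# Strategy that primarily targets each class (offline processing also covers HIGH).
STRATEGY = {
    Dynamism.HIGH: "online",
    Dynamism.LOW: "offline",
    Dynamism.SEMI: "lifelong",
    Dynamism.STATIC: "keep in map",
}

def classify(moving_now, moves_within_session, changed_between_sessions):
    """Hypothetical rule-based labelling of one tracked object from three boolean observations."""
    if moving_now:
        return Dynamism.HIGH
    if moves_within_session:       # temporarily stationary, e.g. waiting at a light
        return Dynamism.LOW
    if changed_between_sessions:   # stationary now, but moved since a previous session
        return Dynamism.SEMI
    return Dynamism.STATIC

label = classify(False, True, False)
print(label.value, STRATEGY[label])
```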

2.2 Online Real-Time Processing Strategy

This strategy focuses on removing dynamic points on-the-fly during the SLAM process, primarily targeting high-dynamic objects. It is crucial for obtaining accurate pose estimates in real-time. Removal can happen in the front-end (during scan matching) or the back-end (during map updating).

Front-End Integration: Many state-of-the-art methods integrate dynamic removal tightly with LiDAR-Inertial Odometry (LIO). For instance, RF-LIO uses visibility-based checks with adaptive resolution range images. The pose estimation can be formulated within an optimization framework where the cost function minimizes errors between static parts of the current scan and the map. If we denote the set of static points in scan $k$ as $\mathcal{P}_k^s$, the robot pose $\mathbf{T}_k$, and the map $\mathcal{M}$, the optimization aims to find:

$$\mathbf{T}_k^* = \arg\min_{\mathbf{T}_k} \sum_{\mathbf{p}_i \in \mathcal{P}_k^s} \rho\left( \| \mathbf{T}_k \cdot \mathbf{p}_i - \pi_{\mathcal{M}}(\mathbf{T}_k \cdot \mathbf{p}_i) \|^2 \right)$$

where $\rho$ is a robust loss function and $\pi_{\mathcal{M}}$ finds the corresponding point or plane in the map. By ensuring $\mathcal{P}_k^s$ is free of dynamic points, the optimization yields a more accurate $\mathbf{T}_k^*$.
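The optimization can be illustrated with a small robust ICP that uses only the static points $\mathcal{P}_k^s$. This is a sketch under simplifying assumptions, not the formulation of any specific LIO system: $\rho$ is taken to be a Huber loss minimized by iteratively reweighted least squares, $\pi_{\mathcal{M}}$ is approximated by the nearest map point (point-to-point, brute-force search), and each weighted step is solved in closed form with the Kabsch/SVD method. Production systems typically use point-to-plane residuals, k-d trees, and IMU priors.

```python
import numpy as np

def huber_weight(r, delta):
    """IRLS weight for a Huber loss on residual norm r: 1 inside delta, delta / r outside."""
    return np.where(r <= delta, 1.0, delta / np.maximum(r, 1e-12))

def weighted_kabsch(src, dst, w):
    """Closed-form rigid (R, t) minimising sum_i w_i * ||R @ src_i + t - dst_i||^2."""
    w = w / w.sum()
    mu_s, mu_d = w @ src, w @ dst
    H = (src - mu_s).T @ (w[:, None] * (dst - mu_d))
    U, _, Vt = np.linalg.svd(H)
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(Vt.T @ U.T))])  # guard against reflections
    R = Vt.T @ D @ U.T
    return R, mu_d - R @ mu_s

def robust_icp(static_pts, map_pts, iters=30, delta=0.5):
    """Estimate T_k from the static points only; pi_M is approximated by the nearest map point."""
    R, t = np.eye(3), np.zeros(3)
    for _ in range(iters):
        moved = static_pts @ R.T + t
        d2 = ((moved[:, None, :] - map_pts[None, :, :]) ** 2).sum(axis=-1)  # brute-force distances
        idx = d2.argmin(axis=1)
        r = np.sqrt(d2[np.arange(len(idx)), idx])
        R, t = weighted_kabsch(static_pts, map_pts[idx], huber_weight(r, delta))
    return R, t

rng = np.random.default_rng(0)
map_pts = rng.uniform(-5.0, 5.0, size=(300, 3))
a = np.radians(1.0)
R_true = np.array([[np.cos(a), -np.sin(a), 0.0], [np.sin(a), np.cos(a), 0.0], [0.0, 0.0, 1.0]])
t_true = np.array([0.05, -0.03, 0.02])
static_scan = (map_pts - t_true) @ R_true  # the map seen from the true pose T_true
R_est, t_est = robust_icp(static_scan, map_pts)
```

The robust loss only down-weights large residuals; it cannot fully compensate for many dynamic points, which is why removing them before registration matters.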

| Location | Algorithm (Example) | Core Idea for Dynamic Handling |
|---|---|---|
| Front-End | RF-LIO | Adaptive multi-resolution range image for visibility check before scan matching. |
| Front-End | ID-LIO | Uses point height information and a delayed removal strategy. |
| Front-End | TRLO | Uses a 3D object detector (PointPillars, CenterPoint) to track and remove dynamic objects. |
| Back-End | Dynamicfilter | Two-stage process: fast visibility-based removal, followed by map-to-map optimization. |
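For detector-based front ends such as TRLO, the final removal step is a point-in-oriented-box test. The sketch below assumes NumPy and a hypothetical box format `(center, (length, width, height), yaw)`; it removes every given box and omits the tracking that a real system would use to decide which detected objects are actually moving.

```python
import numpy as np

def points_in_box(points, center, size, yaw):
    """Mask of points inside an oriented 3D box (length, width, height) rotated by yaw about z."""
    p = np.asarray(points, dtype=float) - np.asarray(center, dtype=float)
    c, s = np.cos(yaw), np.sin(yaw)
    x_box = c * p[:, 0] + s * p[:, 1]   # rotate offsets into the box frame
    y_box = -s * p[:, 0] + c * p[:, 1]
    half = np.asarray(size, dtype=float) / 2.0
    return (np.abs(x_box) <= half[0]) & (np.abs(y_box) <= half[1]) & (np.abs(p[:, 2]) <= half[2])

def remove_detected_objects(points, boxes):
    """Remove points inside any box; boxes is a list of (center, size, yaw) tuples (hypothetical format)."""
    points = np.asarray(points, dtype=float)
    keep = np.ones(len(points), dtype=bool)
    for center, size, yaw in boxes:
        keep &= ~points_in_box(points, center, size, yaw)
    return points[keep]

scan = np.array([[5.0, 1.5, 0.0], [6.5, 0.0, 0.0], [0.0, 0.0, 0.0]])
boxes = [((5.0, 0.0, 0.0), (4.0, 2.0, 2.0), np.pi / 2)]  # a vehicle oriented along the y axis
result = remove_detected_objects(scan, boxes)
print(result)
```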

Challenges: Online methods rely on short-term data and may leave residual dynamic-object artifacts in the map or erroneously remove static points, especially at long ranges or under occlusion.

2.3 Offline Post-Processing Strategy

This strategy is applied after a SLAM trajectory is obtained. It uses the entire sequence of scans and their optimized poses to build a global map and then iteratively refines it to remove both high and low-dynamic objects. It is not constrained by real-time requirements and can leverage global consistency.

Key Algorithms: ERASOR uses region-wise point cloud height differences to segment and remove dynamic objects, assuming a dominant ground plane. DORF combines ray tracing and visibility concepts in a coarse-to-fine manner, using a bird’s-eye view map to better separate ground and dynamic points. The process often involves evaluating a descriptor $d(\mathbf{V})$ for a voxel or region $\mathbf{V}$, such as height variation or temporal occupancy ratio, and classifying it as dynamic if $d(\mathbf{V}) > \tau_{dynamic}$.

| Algorithm | Core Mechanism | Typical Strength |
|---|---|---|
| ERASOR | Region-wise pseudo occupancy ratio based on height differences. | Fast, effective for urban environments. |
| Removert | Multi-resolution range image comparison and static point recovery. | Good at recovering erroneously removed static points. |
| DORF | Coarse visibility removal + fine bird’s-eye view dynamic detection. | Balances removal and preservation well. |
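The descriptor-and-threshold rule $d(\mathbf{V}) > \tau_{dynamic}$ can be sketched with polar regions around the sensor. This is a deliberately simplified variant in the spirit of ERASOR, not ERASOR itself: it assumes NumPy, a map already cropped and expressed in the scan's frame, hypothetical bin counts and threshold, and $d(\mathbf{V})$ defined as the map's height span minus the scan's height span. ERASOR uses a ratio of the two spans and additionally restores ground points in flagged regions, which this sketch omits.

```python
import numpy as np

def region_ids(points, n_rings=20, n_sectors=60, max_range=40.0):
    """Index of the polar (ring, sector) region of each point; far points fall in the last ring."""
    pts = np.asarray(points, dtype=float)
    ring = np.minimum((np.hypot(pts[:, 0], pts[:, 1]) / max_range * n_rings).astype(int), n_rings - 1)
    theta = np.arctan2(pts[:, 1], pts[:, 0]) + np.pi  # in (0, 2*pi]
    sector = np.minimum((theta / (2 * np.pi) * n_sectors).astype(int), n_sectors - 1)
    return ring * n_sectors + sector

def height_span(points, ids, n_regions):
    """max(z) - min(z) per region; 0 for empty regions."""
    z = np.asarray(points, dtype=float)[:, 2]
    lo = np.full(n_regions, np.inf)
    hi = np.full(n_regions, -np.inf)
    np.minimum.at(lo, ids, z)
    np.maximum.at(hi, ids, z)
    return np.where(np.isfinite(lo), hi - lo, 0.0)

def dynamic_map_mask(map_pts, scan_pts, tau=0.5, n_rings=20, n_sectors=60, max_range=40.0):
    """Flag map points in regions where d(V) = span_map - span_scan exceeds tau (region observed by the scan)."""
    n = n_rings * n_sectors
    map_ids = region_ids(map_pts, n_rings, n_sectors, max_range)
    scan_ids = region_ids(scan_pts, n_rings, n_sectors, max_range)
    d = height_span(map_pts, map_ids, n) - height_span(scan_pts, scan_ids, n)
    observed = np.bincount(scan_ids, minlength=n) > 0
    return (observed & (d > tau))[map_ids]

map_pts = np.array([[10.0, 0.0, 0.0], [10.0, 0.1, 1.5],   # ground + top of a car that has since left
                    [20.0, 5.0, 0.0], [20.0, 5.0, 3.0]])  # a static pole
scan_pts = np.array([[10.0, 0.05, 0.0], [20.0, 5.0, 0.0], [20.0, 5.0, 3.0]])
print(dynamic_map_mask(map_pts, scan_pts))  # [ True  True False False]
```

Note that the ground point under the former car is flagged along with it, which illustrates why ground restoration is needed in practice.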

Limitation: Offline processing cannot be used for real-time navigation and may fail in scenes with an extremely high percentage of dynamic objects.

2.4 Lifelong SLAM Strategy

This strategy is essential for handling semi-dynamic objects and long-term operation. It involves continuously updating the map over multiple sessions or the entire lifetime of the embodied AI robot. The system must detect changes, distinguish between temporary and permanent changes, and update the map accordingly without corrupting its consistency.

Approaches: Techniques include maintaining multi-session maps, using frequency-based models (FreMEn) to predict environmental changes, and employing modular frameworks like LT-mapper that use a “remove-then-revert” strategy across sessions. The core is to manage a persistent map representation $\mathcal{M}_t$ that evolves over time $t$. Updates involve change detection: $\Delta \mathcal{M} = \mathcal{F}(\mathcal{M}_{t}, \mathcal{S}_{t+1}, \mathbf{T}_{t+1})$, where $\mathcal{F}$ is a function identifying additions and deletions based on new scan $\mathcal{S}_{t+1}$ and pose $\mathbf{T}_{t+1}$. The map is then updated: $\mathcal{M}_{t+1} = \mathcal{M}_{t} \oplus \Delta \mathcal{M}$, where $\oplus$ denotes a map update operation that preserves global consistency.
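The update $\mathcal{M}_{t+1} = \mathcal{M}_{t} \oplus \Delta \mathcal{M}$ can be sketched with a voxel set as the map. In this minimal illustration (NumPy assumed), the hypothetical `change_detection` plays the role of $\mathcal{F}$: voxels hit by the new scan but absent from the map are additions, and map voxels crossed by the new rays are deletions. Here $\oplus$ is plain set difference and union, so it does not address the harder requirements named above, such as distinguishing temporary from permanent changes or preserving global consistency.

```python
import numpy as np

def voxelize(points, size):
    """Set of integer voxel indices occupied by the points."""
    return {tuple(v) for v in np.floor(np.asarray(points, dtype=float) / size).astype(int)}

def change_detection(map_vox, scan_pts, pose, size):
    """Hypothetical F(M_t, S_{t+1}, T_{t+1}) returning (added, removed) voxel sets.

    pose is a 4x4 sensor-to-world transform; the sensor origin is its translation.
    Voxels crossed by a ray (sampled every half voxel) are observed free.
    """
    R, origin = pose[:3, :3], pose[:3, 3]
    world = np.asarray(scan_pts, dtype=float) @ R.T + origin
    hit = voxelize(world, size)
    free = set()
    for p in world:
        n = max(int(np.linalg.norm(p - origin) / (0.5 * size)), 1)
        samples = origin + np.outer(np.arange(n) / n, p - origin)
        free |= voxelize(samples, size)
    free -= hit
    return hit - map_vox, map_vox & free

def apply_update(map_vox, added, removed):
    """M_{t+1} = M_t (+) Delta M, realised here as plain set difference and union."""
    return (map_vox - removed) | added

map_vox = {(2, 0, 0), (6, 0, 0)}                 # a chair and a wall from an earlier session
scan = np.array([[6.4, 0.4, 0.4], [0.4, 4.4, 0.4]])
added, removed = change_detection(map_vox, scan, np.eye(4), size=1.0)
new_map = apply_update(map_vox, added, removed)
```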

| Approach | Key Concept | Challenge |
|---|---|---|
| Multi-Session Mapping | Store and align maps from different times to query changes. | High memory consumption, data association across time. |
| Spectral Models (FreMEn) | Model environment state changes in the frequency domain. | Assumes periodic changes, high computational cost. |
| Modular Frameworks (LT-mapper) | Separate modules for localization, dynamic removal, and map management. | System complexity, maintaining consistency during updates. |
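The frequency-domain idea behind FreMEn can be sketched for a single binary state, such as whether a voxel is occupied. In this minimal sketch (NumPy assumed; the candidate periods and model order are illustrative), the state's mean $p_0$ and a complex coefficient $\gamma_k$ per candidate frequency $\omega_k$ are computed, the strongest components are kept, and the occupancy probability is predicted as $p(t) = p_0 + \sum_k 2|\gamma_k| \cos(\omega_k t + \arg \gamma_k)$. It also makes the periodicity assumption from the table explicit: non-periodic changes are not captured.

```python
import numpy as np

def fremen_fit(times, states, periods, order=1):
    """Fit a FreMEn-style spectral model to binary observations s(t) in {0, 1}."""
    t = np.asarray(times, dtype=float)
    s = np.asarray(states, dtype=float)
    p0 = s.mean()
    omegas = 2 * np.pi / np.asarray(periods, dtype=float)
    # complex spectral coefficient for each candidate frequency
    gammas = np.array([np.mean((s - p0) * np.exp(-1j * w * t)) for w in omegas])
    top = np.argsort(-np.abs(gammas))[:order]  # keep the strongest periodic components
    return p0, omegas[top], gammas[top]

def fremen_predict(model, t):
    """Predicted probability that the state is 1 at time t, clipped to [0, 1]."""
    p0, omegas, gammas = model
    t = np.asarray(t, dtype=float)
    p = p0 + sum(2 * np.abs(g) * np.cos(w * t + np.angle(g)) for w, g in zip(omegas, gammas))
    return np.clip(p, 0.0, 1.0)

# A doorway-like cell occupied during the first 12 h of each 24 h day, observed for 10 days.
times = np.arange(240)
states = (times % 24 < 12).astype(float)
model = fremen_fit(times, states, periods=[48, 24, 12, 8])
print(fremen_predict(model, 6), fremen_predict(model, 18))
```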

Lifelong SLAM is the most comprehensive strategy but adds significant complexity to system design and maintenance.

3. Evaluation Metrics and Datasets

3.1 Evaluation Metrics

Performance is evaluated based on localization accuracy and static map quality.

Localization Accuracy:

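1. Absolute Trajectory Error (ATE): Measures the global consistency of the estimated trajectory. For a ground-truth pose $\mathbf{x}_i$ and its estimate $\hat{\mathbf{x}}_i$, the error is $\Delta \mathbf{x}_i = \mathbf{x}_i - \mathbf{R}_i \hat{\mathbf{x}}_i$, where $\mathbf{R}_i$ is the transformation that aligns the estimated trajectory with the ground truth (in practice usually a single rigid-body transformation estimated once over the whole trajectory). The RMSE over $M$ poses is: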

$$ATE = \sqrt{\frac{1}{M}\sum_{i=1}^{M} \Vert \Delta \mathbf{x}_i \Vert^2}$$

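2. Relative Pose Error (RPE): Measures the drift over a fixed time interval $\Delta$. For a set of segments $\{(k, s_k, e_k)\}$, where $s_k$ and $e_k$ are the start and end indices of segment $k$ and $N_k$ is the number of poses it contains, the RPE for segment $k$ is: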

$$RPE_k = \sqrt{\frac{1}{N_k}\sum_{i=0}^{N_k-1} \Vert \mathbf{x}_{i,k} - \mathbf{R}_{i,k} \hat{\mathbf{x}}_{i,k} \Vert^2}$$

A lower ATE and RPE indicate better robustness to dynamic interference.
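Both metrics can be computed on positions with a few lines of NumPy. This sketch assumes trajectories given as (M, 3) position arrays with known correspondences, estimates a single rigid alignment per trajectory (Kabsch/Umeyama without scale), and implements one reading of the segment formula in which each segment $[s_k, e_k]$ is aligned separately before taking its RMSE. Many evaluation tools instead compute RPE from relative pose differences over the interval $\Delta$.

```python
import numpy as np

def align_rigid(gt, est):
    """Rigid (R, t) minimising sum ||gt_i - (R @ est_i + t)||^2 (Kabsch / Umeyama without scale)."""
    mu_g, mu_e = gt.mean(axis=0), est.mean(axis=0)
    H = (est - mu_e).T @ (gt - mu_g)
    U, _, Vt = np.linalg.svd(H)
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(Vt.T @ U.T))])
    R = Vt.T @ D @ U.T
    return R, mu_g - R @ mu_e

def ate_rmse(gt, est):
    """ATE as an RMSE over positions after a single rigid alignment of the whole trajectory."""
    gt, est = np.asarray(gt, dtype=float), np.asarray(est, dtype=float)
    R, t = align_rigid(gt, est)
    err = gt - (est @ R.T + t)
    return float(np.sqrt((err ** 2).sum(axis=1).mean()))

def segment_rpe(gt, est, segments):
    """One reading of the segment formula: align each segment [s_k, e_k] separately, then take its RMSE."""
    gt, est = np.asarray(gt, dtype=float), np.asarray(est, dtype=float)
    return [ate_rmse(gt[s:e + 1], est[s:e + 1]) for s, e in segments]

rng = np.random.default_rng(1)
gt = np.cumsum(rng.normal(size=(50, 3)), axis=0)       # synthetic ground-truth positions
a = np.radians(30.0)
Rz = np.array([[np.cos(a), -np.sin(a), 0.0], [np.sin(a), np.cos(a), 0.0], [0.0, 0.0, 1.0]])
est = gt @ Rz.T + np.array([1.0, -2.0, 0.5])            # same trajectory in another frame
print(ate_rmse(gt, est))                                # ~0: a pure frame change is not an error
```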

Static Map Quality:

1. Preservation Rate (PR) & Rejection Rate (RR): More suitable than precision/recall when dynamic points are sparse.

- $PR = \frac{P_{ss}}{P_{is}} \times 100\%$, where $P_{ss}$ is the number of static points kept in the final map and $P_{is}$ is the total number of static points in the original data.

- $RR = \left(1 - \frac{P_{id}}{P_{sd}}\right) \times 100\%$, where $P_{id}$ is the number of dynamic points incorrectly kept and $P_{sd}$ is the total number of dynamic points.

High PR and RR indicate effective dynamic removal with minimal loss of static structure.
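Given per-point ground-truth labels and a record of which points survive in the cleaned map, both rates follow directly from the definitions above. The sketch assumes NumPy and a one-to-one correspondence between original points and the final map (the map is represented as a `kept` mask over the original points); how that correspondence is established in a given benchmark is outside this sketch.

```python
import numpy as np

def preservation_and_rejection(is_dynamic, kept):
    """PR and RR in percent from per-point ground truth and a kept-in-final-map mask."""
    is_dynamic = np.asarray(is_dynamic, dtype=bool)
    kept = np.asarray(kept, dtype=bool)
    p_is = np.sum(~is_dynamic)          # total static points in the original data
    p_ss = np.sum(~is_dynamic & kept)   # static points kept in the final map
    p_sd = np.sum(is_dynamic)           # total dynamic points
    p_id = np.sum(is_dynamic & kept)    # dynamic points incorrectly kept
    pr = 100.0 * p_ss / p_is
    rr = 100.0 * (1.0 - p_id / p_sd)
    return pr, rr

is_dynamic = [False, False, False, False, True, True]
kept = [True, True, True, False, False, True]
pr, rr = preservation_and_rejection(is_dynamic, kept)
print(pr, rr)  # 75.0 50.0
```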

3.2 Public Datasets

| Dataset | Environment | Key Features for Dynamic SLAM |
|---|---|---|
| KITTI | Outdoor (Urban, Rural, Highway) | Standard benchmark with GPS/INS ground truth. Contains moving vehicles. |
| SemanticKITTI | Outdoor | Dense per-point semantic labels (28 classes). Distinguishes moving vs. parked cars. |
| NCLT | Indoor & Outdoor | Long-term, changing environment over seasons. Includes dynamic objects and structural changes. |
| UrbanLoco | Outdoor (Dense Urban) | Focus on challenging urban canyons with rich dynamic traffic. |
| DOALS | Indoor (Crowded) | Specifically captures dense pedestrian crowds for dynamic object evaluation. |
| Dynablox | Indoor & Outdoor | Contains atypical dynamic objects (e.g., thrown balls, rolling luggage). |

4. Future Research Directions

The field of dynamic LiDAR SLAM for embodied AI robots continues to evolve rapidly. Future research is likely to focus on the following key areas:

1. Deeper Integration with Deep Learning: Moving beyond semantic segmentation to end-to-end learning of SLAM components that are inherently robust to dynamics. This includes learning better scene flow estimators, dynamic-aware feature descriptors for odometry, and direct prediction of static scenes from dynamic inputs. A major challenge remains the accurate real-time 3D detection of small, fast-moving objects in cluttered environments relevant to embodied AI robots, such as construction workers on a site.

2. Advanced Multi-Sensor Fusion: While LiDAR-IMU fusion is now common, the integration of cameras is crucial for providing rich texture and color information, aiding in dynamic object segmentation (especially for distinguishing temporarily stationary objects), and enhancing loop closure detection in changing environments. Tightly coupled LiDAR-Visual-Inertial Odometry (LVIO) systems that can robustly handle dynamics will be vital for embodied AI robots operating in complex, unstructured domains.

3. Lightweight and Scalable Architectures: For deployment on resource-constrained embodied AI robot platforms, algorithms must be made more efficient in both computation and memory. This involves developing sparse or adaptive map representations, efficient change detection algorithms, and scalable lifelong mapping frameworks that can operate for extended periods without unbounded memory growth. Techniques like neural implicit representations or hashed data structures show promise for compact and scalable mapping.

5. Conclusion

LiDAR SLAM is a cornerstone technology for enabling autonomy in embodied AI robots. The static-world assumption, however, breaks down in practical applications, degrading both localization and mapping. This review has surveyed the principal methods for dynamic point cloud removal (semantic segmentation-based, ray tracing-based, and visibility-based) and analyzed strategies for integrating them into the SLAM pipeline according to object dynamism: online, offline, and lifelong. Although significant progress has been made, challenges remain, including dependence on labeled data, high computational cost, handling of occlusions and ground points, and the system complexity of lifelong operation. Future progress is likely to come from deeper integration of learning, robust multi-sensor fusion, and efficient, scalable algorithms that give embodied AI robots reliable, long-term autonomy in an ever-changing world.