As I observe the rapid evolution of machines around us, a fundamental shift is becoming clear. We are moving beyond artificial intelligence that merely thinks to intelligence that acts, perceives, and learns through a physical form. This paradigm is known as Embodied Intelligence, and its most compelling avatar is the humanoid robot, or what I prefer to call the embodied AI robot. The core thesis is simple yet profound: true, generalizable intelligence for interacting with our world is not a disembodied algorithm but one that is grounded in sensory experience and physical action. An embodied AI robot is not just a machine that executes pre-programmed tasks; it is a system that uses its body to gather data, form understandings, and generate adaptive behaviors in real-time. This marks a departure from traditional robotics and pure software AI, converging into a discipline where the mind is inseparable from its physical vessel.

The conceptual foundation of Embodied Intelligence, or Embodied AI, can be traced back to mid-20th century cybernetics, but its modern realization is powered by breakthroughs in machine learning and sensor fusion. In my analysis, an embodied AI robot operates on a continuous loop of perception, reasoning, and action. Its “body”—comprising actuators, cameras, force-torque sensors, lidar, and microphones—serves as the primary interface with the physical world. The “intelligence”—increasingly a large, multimodal model—processes this stream of heterogeneous data to build a contextual understanding and decide on actions. The action then changes the state of the robot and its environment, leading to new perceptual data. This cycle, formalized below, is the essence of being embodied:

$$ s_{t+1} = f(s_t, a_t) $$

$$ o_t = g(s_t) $$

$$ a_t = \pi_\theta(o_t, o_{t-1}, \dots) $$

Here, \( s_t \) is the state of the world and robot at time \( t \), \( a_t \) is the action taken, leading to a new state \( s_{t+1} \). The robot does not have direct access to the true state \( s_t \); instead, it receives observations \( o_t \) through its sensors. The policy \( \pi_\theta \), typically parameterized by a neural network with weights \( \theta \), maps the history of observations to actions. The goal of embodied learning is to optimize \( \theta \) so that the cumulative reward over time is maximized. This framing underscores that intelligence is not a static model but a dynamic policy for successful interaction.
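To make the loop concrete, here is a minimal sketch in Python. It assumes a toy one-dimensional world that is not part of the formalism above: the state is a position, the action is a displacement, the sensor adds bounded noise, and the reward is the negative distance to a hypothetical target. The names `f`, `g`, and `policy` mirror the symbols in the equations; `TARGET`, the noise level, and the single gain `theta` are illustrative assumptions rather than a real controller.

```python
import random

TARGET = 1.0  # hypothetical goal position for this toy task


def f(state, action):
    """Transition s_{t+1} = f(s_t, a_t): the action displaces the position."""
    return state + action


def g(state, rng, noise=0.05):
    """Observation o_t = g(s_t): a noisy reading of the hidden true state."""
    return state + rng.uniform(-noise, noise)


def policy(history, theta):
    """pi_theta(o_t, o_{t-1}, ...): step toward the target with gain theta.

    Averages the last two observations to smooth sensor noise, so the
    action depends on the observation history, not on the true state.
    """
    recent = history[-2:]
    estimate = sum(recent) / len(recent)
    return theta * (TARGET - estimate)


def rollout(theta, steps=30, seed=0):
    """Run the perceive-decide-act loop and return (final state, total reward)."""
    rng = random.Random(seed)
    state, history, total_reward = 0.0, [], 0.0
    for _ in range(steps):
        history.append(g(state, rng))         # perceive
        action = policy(history, theta)       # decide
        state = f(state, action)              # act: the world changes
        total_reward += -abs(TARGET - state)  # reward: negative distance
    return state, total_reward


final_state, total_reward = rollout(theta=0.5)
```

Comparing `rollout(0.5)` with `rollout(0.05)` shows what "optimizing \( \theta \)" means in this setting: the same loop accumulates a very different reward depending on the policy parameter.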

Core Technological Pillars of an Embodied AI Robot

Building a capable embodied AI robot requires synergizing several advanced technologies. It is a systems engineering challenge of the highest order, where progress in one domain often bottlenecks the entire system. From my perspective, the key pillars can be summarized as follows:

| Pillar | Description | Key Challenges |
|---|---|---|
| Multimodal Perception & World Modeling | The robot’s ability to fuse visual, auditory, tactile, and proprioceptive data into a coherent, 3D understanding of its environment. This often involves building a “world model”, a neural network that can predict future states from current states and actions. | Sensor calibration, data synchronization, handling occlusion and noise, learning computationally efficient representations. |
| Motor Control & Dexterous Manipulation | Translating high-level goals into stable, precise, and energy-efficient motions. This includes whole-body balancing, walking, and fine manipulation with multi-fingered hands. | Under-actuation, high degrees of freedom (DoF), contact dynamics, ensuring safety during physical interaction. |
| Embodied Learning Frameworks | Algorithms that allow the robot to learn from interaction and data: training in simulation with sim-to-real transfer, reinforcement learning (RL), imitation learning, and large-scale pre-training on video and action data. | Sample inefficiency of RL, reality gap in simulation, scarcity of real-world robot interaction data. |
| AI Infrastructure & Compute | The backbone for training large embodied models. This includes distributed computing clusters for simulation, massive datasets of human and robot activity, and efficient inference engines on the robot itself. | Astronomical compute costs, latency for real-time control, power consumption constraints on-board. |

The interplay between these pillars defines the capabilities of an embodied AI robot. For instance, a sophisticated world model is useless if the motor controller cannot execute the planned motions smoothly. Similarly, brilliant mechanical design cannot compensate for a lack of perceptual understanding. As a conceptual heuristic rather than a measured law, the performance \( P \) of such a system can be viewed as a constrained product of its components:

$$ P = \left( \frac{1}{C_{\text{perception}}} \cdot I_{\text{model}} \right) \times \left( \frac{1}{C_{\text{control}}} \cdot E_{\text{motion}} \right) \times L_{\text{learning}} \times A_{\text{infra}} $$

$$ \text{subject to: } \text{Power, Safety, Cost} \leq \text{Thresholds} $$

Here, the \( C \) terms represent complexity or error in perception and control, \( I \) is inference accuracy, \( E \) is motion efficiency, \( L \) is learning scalability, and \( A \) is an infrastructure scaling factor. Because the terms multiply, a near-zero value in any single pillar drives overall performance toward zero, however strong the others are.
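A few lines of Python show the multiplicative behavior. This is only a sketch: the inputs are treated as unitless scores that I have made up for illustration, and the power, safety, and cost constraints are left out.

```python
def performance(c_perception, i_model, c_control, e_motion, l_learning, a_infra):
    """Heuristic P from the product formula; every input is a unitless score."""
    if c_perception <= 0 or c_control <= 0:
        raise ValueError("complexity terms must be positive")
    return (i_model / c_perception) * (e_motion / c_control) * l_learning * a_infra


# Hypothetical scores, chosen only to illustrate the shape of the formula.
balanced = performance(1.0, 0.9, 1.0, 0.9, 1.0, 1.0)
weak_control = performance(1.0, 0.9, 1.0, 0.0, 1.0, 1.0)       # motion efficiency is zero
harder_perception = performance(2.0, 0.9, 1.0, 0.9, 1.0, 1.0)  # perception complexity doubled
```

Setting motion efficiency to zero collapses the whole product even though every other score is unchanged, while doubling perception complexity halves it.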

From Labs to Factories: The March Towards Utility

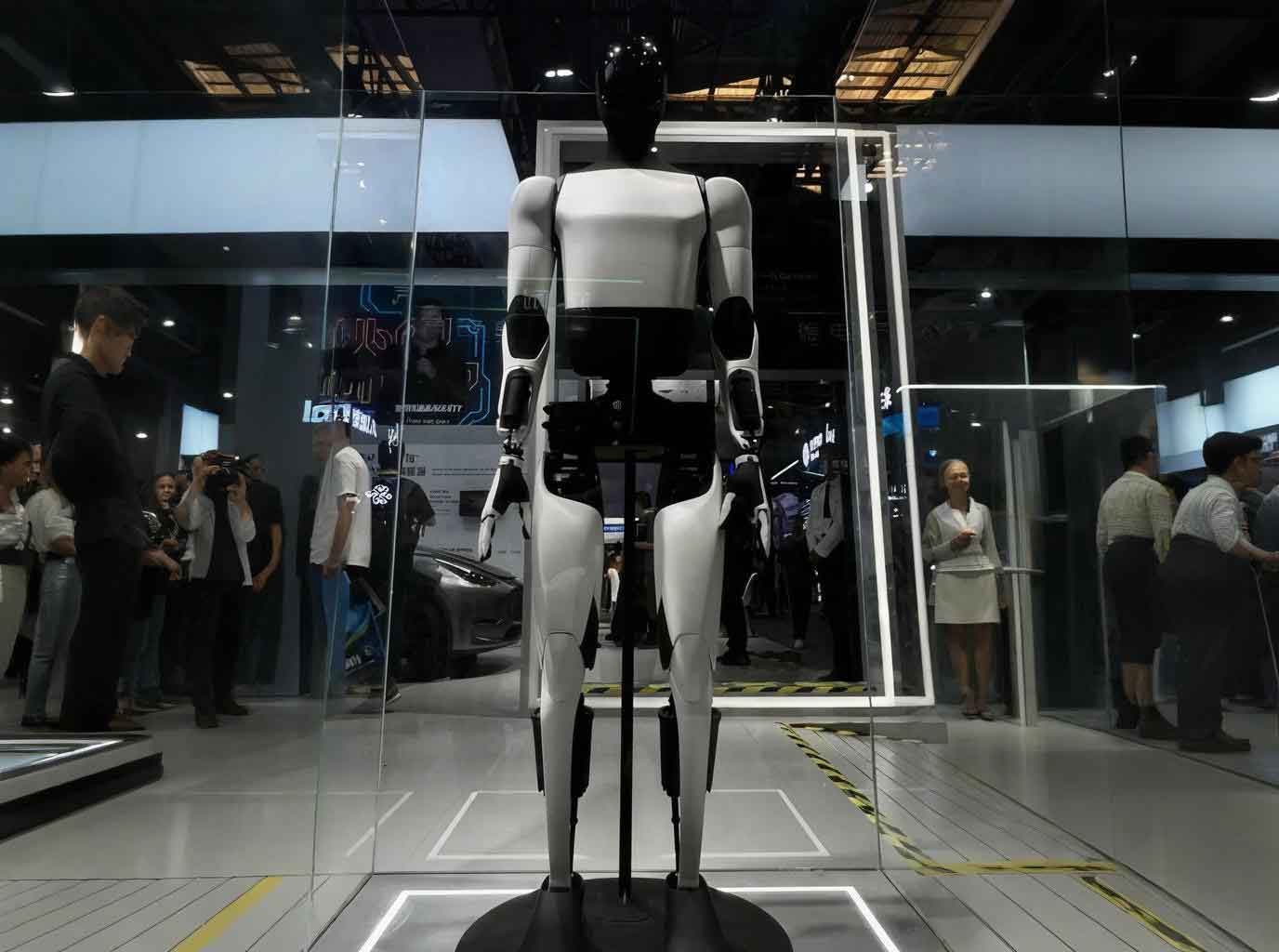

The theoretical promise of Embodied Intelligence is now being stress-tested in real-world environments, most notably in industrial logistics and manufacturing. This is where the embodied AI robot transitions from a research prototype to an economic asset. The value proposition is clear: a general-purpose, humanoid form factor can adapt to workspaces designed for humans without extensive and costly re-engineering of the environment. In warehouses, one can witness such machines performing tasks like loading and unloading, material transfer between stations, and visual inspection. The workflow typically involves:

- Perception: Identifying target objects (e.g., a specific box on a pallet) and environmental landmarks (conveyor belts, automated guided vehicles) using RGB-D cameras.

- Planning: Computing a collision-free trajectory for the arm and base to approach, grasp, and relocate the object.

- Control & Execution: Executing the plan with closed-loop feedback from force/torque sensors to ensure a secure grip and gentle placement.

- Adaptation: Using outcomes (success/failure) to refine the models for future trials, embodying the learning loop.

The economic logic driving adoption can be modeled by comparing total cost of ownership (TCO) against a baseline of fixed automation or human labor. For a task with variable geometry and payload, the flexibility of an embodied AI robot can be decisive.

| Cost Factor | Fixed Automation | Human Labor | Embodied AI Robot |
|---|---|---|---|
| Initial Capex | High (custom tooling) | Very Low | Very High (robot unit) |
| Changeover Cost | Very High (re-tooling) | Low (retraining) | Low (software re-task) |
| Operational Scope | Single, repetitive task | Broad, flexible | Broad, flexible (target) |
| Uptime / Availability | >95% | ~70-80% | Target >90% |
| Long-term Trajectory | Static, depreciates | Wages increase | Cost decreases, capability improves |

The formula for a simplified Return on Investment (ROI) over \( N \) years for deploying an embodied AI robot might look like:

$$ \text{ROI} = \frac{\sum_{t=1}^{N} \left( (L_t - R_t) + \Delta F_t \right)}{C_0 + \sum_{t=1}^{N} M_t} $$

where \( L_t \) is the cost of equivalent human labor in year \( t \), \( R_t \) is the robot's day-to-day operating cost (chiefly energy), \( \Delta F_t \) is the value of increased flexibility and quality, \( C_0 \) is the initial capital cost, and \( M_t \) is the annual maintenance and software cost. Maintenance is counted once, in \( M_t \), so that it is not subtracted in the numerator and then added again in the denominator. The case becomes compelling when the numerator grows over time due to rising labor costs and the robot’s increasing capability through software updates, while the one-time capital cost \( C_0 \) is spread over more years of service.
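As a worked example, the formula can be evaluated directly. The figures below are hypothetical placeholders, not market data, and the function name `simple_roi` is my own.

```python
def simple_roi(labor, operating, flexibility, capex, maintenance):
    """Simplified ROI over N years.

    labor[t], operating[t], flexibility[t], maintenance[t] stand for
    L_t, R_t, Delta F_t, and M_t; capex stands for C_0.
    """
    n = len(labor)
    if not (len(operating) == len(flexibility) == len(maintenance) == n):
        raise ValueError("all yearly series must cover the same N years")
    numerator = sum(l - r + df for l, r, df in zip(labor, operating, flexibility))
    denominator = capex + sum(maintenance)
    return numerator / denominator


# Hypothetical three-year scenario with labor costs rising each year.
roi = simple_roi(
    labor=[60_000, 62_000, 64_000],
    operating=[10_000, 10_000, 10_000],
    flexibility=[5_000, 5_000, 5_000],
    capex=150_000,
    maintenance=[10_000, 10_000, 10_000],
)
```

With these placeholder numbers the numerator is 171,000 and the denominator 180,000, so the ratio is 0.95: after three years the cumulative net savings have not quite covered the total outlay. Extending the horizon with the same yearly trend pushes the ratio above 1.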

Overcoming the Hurdles: Simulation, Data, and Transfer Learning

Training an embodied AI robot entirely in the physical world is prohibitively slow, dangerous, and expensive. A dropped box is inconsequential; a fall that damages a million-dollar prototype is not. Therefore, the primary playground for developing embodied intelligence is high-fidelity simulation. Massively parallel simulations run on GPU clusters allow for the equivalent of centuries of robot experience to be accumulated in days. The central challenge here is the “reality gap”: policies that perform flawlessly in simulation often fail in reality due to unmodeled physics, sensor noise, and actuator dynamics.

The state-of-the-art approach involves a combination of domain randomization and learning residual models. In domain randomization, simulation parameters (e.g., friction coefficients, object masses, visual textures, lighting) are varied widely during training. This forces the policy \( \pi_\theta \) to become robust to a vast family of worlds, increasing the chance it will work in the real world. Formally, we optimize for:

$$ \theta^* = \arg \max_\theta \mathbb{E}_{p \sim P_{\text{rand}}}[ \mathbb{E}_{\tau \sim \pi_\theta, s_{t+1}=f_p(s_t, a_t)}[ \sum_t \gamma^t r_t ] ] $$

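Here, \( p \) represents parameters of the simulator sampled from a broad distribution \( P_{\text{rand}} \), \( f_p \) is the forward dynamics under those parameters, \( \tau \) is a trajectory generated by running the policy in that simulator, \( r_t \) is the reward at step \( t \), and \( \gamma \in (0, 1] \) is a discount factor. The policy learns to succeed across this distribution rather than in one particular simulated world.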
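The objective can be illustrated with a deliberately small sketch. I assume a one-dimensional task in which the simulator parameter \( p \) is an actuator gain, the policy is a single proportional gain \( \theta \), and a grid search stands in for the gradient-based reinforcement learning a real system would use. All names and ranges are hypothetical.

```python
import random

GAMMA = 0.95   # discount factor gamma
TARGET = 1.0   # hypothetical goal position


def discounted_return(theta, p, steps=20):
    """Sum of gamma^t * r_t for the policy a = theta * (TARGET - s) under f_p."""
    s, total = 0.0, 0.0
    for t in range(steps):
        a = theta * (TARGET - s)
        s = s + p * a                             # f_p(s_t, a_t): p scales the actuator
        total += (GAMMA ** t) * -abs(TARGET - s)  # r_t: negative distance to target
    return total


def expected_return(theta, params):
    """Monte Carlo estimate of the outer expectation over sampled p."""
    return sum(discounted_return(theta, p) for p in params) / len(params)


def train(params, grid):
    """Pick the theta on the grid with the highest estimated expected return."""
    return max(grid, key=lambda theta: expected_return(theta, params))


rng = random.Random(0)
grid = [i / 10 for i in range(1, 21)]                       # candidate gains 0.1 .. 2.0
randomized = [rng.uniform(0.5, 1.5) for _ in range(200)]    # p ~ P_rand
theta_rand = train(randomized, grid)     # trained across many simulated worlds
theta_nominal = train([0.5], grid)       # trained in one fixed simulator only

# A "real" world whose actuator is stronger than the nominal simulator assumed.
real_p = 1.5
gap = discounted_return(theta_rand, real_p) - discounted_return(theta_nominal, real_p)
```

The policy tuned to the single nominal simulator is perfect there but overshoots and diverges when the actuator gain is 1.5, while the policy trained across the randomized range gives up a little peak performance in exchange for working across the whole family.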

Furthermore, a major focus is on collecting large-scale datasets of robot interactions, akin to the image-text datasets that powered vision-language models. A unified architecture for an embodied AI robot might involve a foundational “Embodiment Model” pre-trained on millions of video clips of humans and robots performing tasks, paired with language instructions and teleoperation data. This model learns a shared embedding space for perception, language, and action. Fine-tuning for a specific downstream task (e.g., “unload the blue bin from the cart”) then becomes data-efficient. This two-stage process can be summarized as:

$$ \text{Pre-training: } \Phi_{\text{emb}} = \text{Train}(\mathcal{D}_{\text{human}}, \mathcal{D}_{\text{robot-demo}}, \mathcal{D}_{\text{web-video}}) $$

$$ \text{Fine-tuning: } \pi_{\theta} = \text{Adapt}(\Phi_{\text{emb}}, \mathcal{D}_{\text{target-task}}) $$

Where \( \Phi_{\text{emb}} \) is the learned embodiment foundation model, capturing common-sense physics and affordances.

The Future Embodied: From Factories to Homes and Beyond

The trajectory for the embodied AI robot points toward increasing generality and accessibility. The initial phase is undeniably industrial, where the business case is clearest and environments are more structured. Success in these domains will drive down hardware costs, improve reliability, and enrich the software stack. The next frontier is the service sector and, ultimately, the domestic environment—a significantly more complex challenge. A home is unstructured, cluttered, and full of unique objects and social norms. Progress here will require leaps in common-sense reasoning, safe human-robot interaction, and intuitive communication.

I anticipate a convergence where the distinction between different types of embodied agents—humanoid robots, mobile manipulators, autonomous vehicles—blurs at the intelligence level. They will all rely on similar core models for understanding 3D space, predicting physical outcomes, and planning actions. The “body” becomes a peripheral, optimized for a specific niche, while the embodied intelligence core is shared. This leads to a vision of a networked ecosystem of embodied agents. A robot in a factory could query a central “skill library” to learn a new manipulation technique demonstrated by a robot on another continent. A domestic embodied AI robot could receive over-the-air updates that give it new abilities, much like a smartphone today.

The ultimate metric for this technology will be its positive impact. The potential to augment human capabilities, take over dangerous or tedious jobs, provide care and companionship for an aging population, and explore environments hostile to humans is immense. The journey to create a truly intelligent embodied AI robot is perhaps the most ambitious engineering and scientific endeavor of our time, weaving together threads from computer science, mechanical engineering, neuroscience, and cognitive psychology. As the physical and digital worlds continue to fuse, the machines that move among us, learning and adapting, will become the most tangible expression of artificial intelligence.