The core challenge for autonomous intelligent robots operating in real-world scenarios lies in their ability to navigate efficiently and safely through dynamic, unstructured, and partially observable environments. Traditional path planning algorithms, such as A* or Rapidly-exploring Random Trees (RRT), often demonstrate limitations in adaptability and real-time performance when faced with moving obstacles or complex, unknown terrains. To address these challenges, this research formulates the path planning problem as a Markov Decision Process (MDP) and proposes a novel algorithm based on Deep Reinforcement Learning (DRL). The algorithm is designed to enable intelligent robots to learn optimal navigation policies through interaction with simulated environments. Key contributions include the design of a comprehensive state representation method that effectively captures the robot’s perception, the formulation of a finely-tuned reward function to guide policy optimization, and the construction of a DRL framework for policy training. Comparative experiments in various simulated environments validate the algorithm’s effectiveness in terms of success rate, path optimality, and computational efficiency, providing a new paradigm for robust autonomous navigation in intelligent robots.

1. Introduction

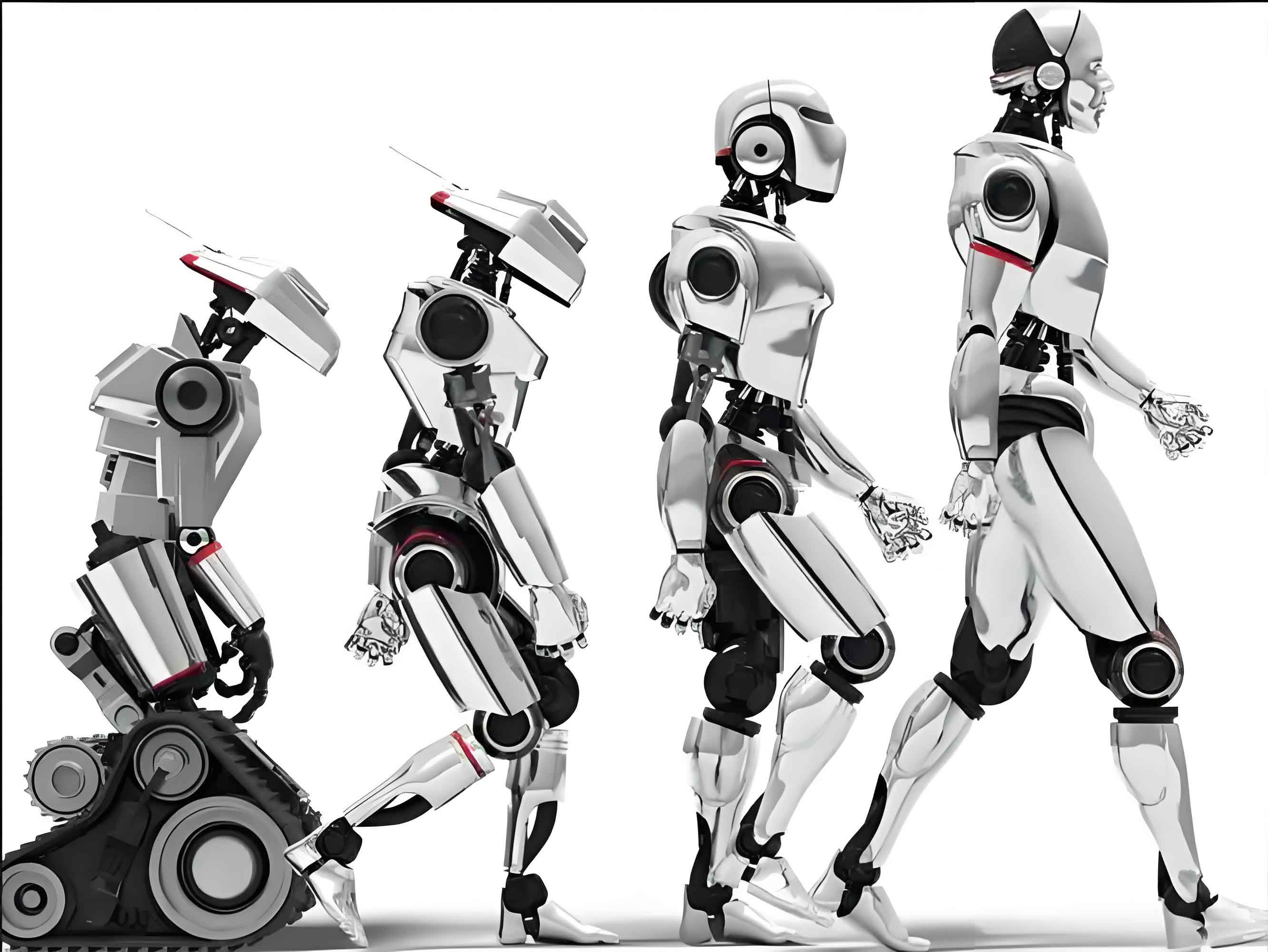

An intelligent robot represents a sophisticated integration of mechanics, electronics, computer science, and artificial intelligence. Equipped with advanced sensing, decision-making, and execution capabilities, these systems are designed to simulate or replace humans in performing complex tasks across diverse fields such as warehouse logistics, disaster rescue, and smart home assistance. The fundamental enabler for such autonomous operation is intelligent navigation, with path planning serving as its critical technical cornerstone. The primary objective of path planning is to compute a collision-free and efficient trajectory from a start point to a goal point within a given environment. While classical algorithms perform well in static, fully-known maps, they struggle with the uncertainties and dynamism inherent in real-world applications. This gap necessitates the exploration of learning-based approaches that can adapt to environmental changes. Reinforcement Learning (RL), a paradigm where an agent learns optimal behavior through trial-and-error interactions with its environment, offers a promising solution for sequential decision-making problems like path planning. By framing navigation as an MDP, an intelligent robot can learn policies that generalize across a variety of scenarios, enhancing robustness and operational intelligence. This paper delves into the design and implementation of a DRL-based path planning algorithm, specifically tailored for the continuous control challenges faced by mobile intelligent robots.

2. Overview of Path Planning Algorithms

Path planning algorithms for intelligent robots can be broadly categorized into several classes, each with distinct characteristics and application domains, as summarized in the table below.

| Algorithm Class | Representative Methods | Key Principle | Strengths | Weaknesses |

|---|---|---|---|---|

| Graph Search & Sampling-Based | A*, Dijkstra, RRT, RRT* | Search discrete graph nodes or sample the configuration space to find a path. | Provable completeness/optimality (for some), effective in static environments. | Poor adaptability to dynamic changes; high computational cost for high-resolution maps; not inherently real-time. |

| Artificial Potential Field (APF) | Classical APF, Improved APF variants | Models goal as an attractive force and obstacles as repulsive forces. | Simple, computationally efficient, generates smooth paths. | Prone to local minima (trapping); oscillations in narrow passages; parameters are hard to tune. |

| Bio-inspired & Heuristic | Genetic Algorithm (GA), Ant Colony Optimization (ACO), Particle Swarm Optimization (PSO) | Simulates natural processes like evolution or swarm behavior to optimize the path. | Can handle complex, non-linear constraints; good global search capability. | High computational load; convergence speed and quality depend heavily on parameters; not strictly real-time. |

| Reinforcement Learning (RL) | Q-learning, DQN, DDPG, PPO | Agent learns a policy by maximizing cumulative reward from environment interaction. | Adapts to dynamic/unknown environments; learns complex strategies; suitable for sequential decisions. | Requires extensive training; sample inefficient; reward function design is critical and non-trivial. |

In practice, hybrid approaches are often employed. For instance, a global path might be generated using A* on a coarse map, while a local planner like APF or, more recently, an RL-based controller handles real-time obstacle avoidance. The inherent adaptability of RL makes it a powerful supplement, particularly for enabling intelligent robots to operate in unpredictable settings where traditional algorithms falter.

3. Reinforcement Learning Foundation and Problem Formulation

3.1 Core Principles of Reinforcement Learning

Reinforcement Learning models an interaction loop between an agent (the intelligent robot) and an environment. At each timestep \( t \), the agent observes a state \( s_t \in \mathcal{S} \), executes an action \( a_t \in \mathcal{A} \), receives a scalar reward \( r_{t+1} \), and transitions to a new state \( s_{t+1} \). The agent’s behavior is defined by a policy \( \pi(a|s) \), which is a probability distribution over actions given a state. The goal is to learn a policy that maximizes the expected cumulative discounted reward, or return \( G_t \):

$$ G_t = \sum_{k=0}^{\infty} \gamma^k r_{t+k+1} $$

where \( \gamma \in [0, 1] \) is the discount factor, balancing immediate and future rewards.

The value function \( V^{\pi}(s) \) represents the expected return starting from state \( s \) and following policy \( \pi \). The action-value function \( Q^{\pi}(s, a) \) represents the expected return after taking action \( a \) in state \( s \) and thereafter following \( \pi \). These functions satisfy the Bellman expectation equations:

$$ V^{\pi}(s) = \mathbb{E}_{a \sim \pi, s’ \sim P}\left[ r + \gamma V^{\pi}(s’) \right] $$

$$ Q^{\pi}(s, a) = \mathbb{E}_{s’ \sim P}\left[ r + \gamma \mathbb{E}_{a’ \sim \pi}\left[ Q^{\pi}(s’, a’) \right] \right] $$

where \( P(s’ | s, a) \) is the state transition probability and \( r = R(s, a, s’) \) is the reward.

3.2 Modeling Path Planning as a Markov Decision Process (MDP)

To apply RL, the path planning task for an intelligent robot must be formalized as an MDP, defined by the tuple \( (\mathcal{S}, \mathcal{A}, P, R, \gamma) \).

- State Space \( \mathcal{S} \): A comprehensive state representation is crucial. For a wheeled mobile intelligent robot, the state typically includes:

- Pose: \((x, y, \theta)\) representing 2D position and heading.

- Local Environment Perception: Often encoded via a lidar scan (distance readings over 360°) or, more effectively for learning, a processed 2D egocentric occupancy grid. This grid, centered on the robot, marks cells as free, occupied, or unknown.

- Goal Information: The relative vector from the robot to the goal \(( \Delta x_g, \Delta y_g )\) or its polar coordinates \(( d_g, \phi_g )\).

- Historical Information: Recent actions or velocities to encourage smooth motion.

- Action Space \( \mathcal{A} \): This can be discrete (e.g., {move forward, turn left, turn right}) or continuous (e.g., linear velocity \( v \) and angular velocity \( \omega \)). Continuous actions are more natural for smooth control of an intelligent robot.

- Transition Dynamics \( P(s’ | s, a) \): This describes the environment’s physics. In simulation, this is determined by the robot’s kinematic/dynamic model and the environment. Model-free RL algorithms learn without explicit knowledge of \( P \).

- Reward Function \( R(s, a, s’) \): This is the most critical design element for guiding the intelligent robot. It must translate the high-level task “reach the goal quickly and safely” into a numerical signal.

The figure above conceptually illustrates an intelligent robot with a localized perception field navigating towards a goal while avoiding obstacles, embodying the core MDP interaction loop.

4. Design of the Reinforcement Learning-Based Path Planning Algorithm

4.1 Algorithm Selection: Deep Deterministic Policy Gradient (DDPG)

Given the need for continuous, smooth velocity control and the high-dimensional nature of perceptual state inputs (e.g., occupancy grids), the Deep Deterministic Policy Gradient (DDPG) algorithm is selected. DDPG is an actor-critic, model-free algorithm designed for continuous action spaces. It maintains four neural networks:

- Actor (Policy) Network \( \mu(s|\theta^\mu) \): Maps state \( s \) directly to a deterministic action \( a \).

- Critic (Q-value) Network \( Q(s, a|\theta^Q) \): Estimates the value of taking action \( a \) in state \( s \).

- Target Networks \( \mu’ \) and \( Q’ \): Time-delayed copies of the actor and critic networks, used to stabilize training.

The critic is updated by minimizing the loss based on the Bellman equation:

$$ L(\theta^Q) = \mathbb{E}_{(s,a,r,s’) \sim \mathcal{D}}\left[ \left( Q(s, a|\theta^Q) – y \right)^2 \right] $$

where \( y = r + \gamma Q'(s’, \mu'(s’|\theta^{\mu’})|\theta^{Q’}) \) and \( \mathcal{D} \) is a replay buffer storing experience tuples \((s, a, r, s’)\).

The actor is updated using the deterministic policy gradient:

$$ \nabla_{\theta^\mu} J \approx \mathbb{E}_{s \sim \mathcal{D}}\left[ \nabla_a Q(s, a|\theta^Q)|_{a=\mu(s)} \nabla_{\theta^\mu} \mu(s|\theta^\mu) \right] $$

This approach allows the intelligent robot to learn precise velocity commands directly from rich sensory data.

4.2 State Representation and Feature Engineering

We design a multi-channel state tensor for the intelligent robot:

$$ \mathbf{s}_t = [ \mathbf{G}_t, \mathbf{g}_t, \mathbf{h}_t ] $$

where:

- \( \mathbf{G}_t \) is a \( 40 \times 40 \) local occupancy grid (5m x 5m, 0.125m resolution). Each cell contains three normalized values: occupancy probability, relative distance to the robot, and relative angle. This provides a structural snapshot.

- \( \mathbf{g}_t = [d_g / d_{max}, \sin(\phi_g), \cos(\phi_g)] \) is the goal vector, normalized for learning stability.

- \( \mathbf{h}_t \) contains the previous two actions \( (v_{t-1}, \omega_{t-1}), (v_{t-2}, \omega_{t-2}) \) to promote motion smoothness.

This representation provides a balance between sufficient environmental context and manageable input dimensionality for the neural networks.

4.3 Fine-Tuned Reward Function Design

A well-shaped reward function is paramount. We design a composite reward \( r_t \) at each step:

$$

r_t = r_{\text{goal}} + r_{\text{collision}} + r_{\text{step}} + r_{\text{progress}} + r_{\text{safety}}

$$

The components are defined as follows:

| Component | Formula | Purpose |

|---|---|---|

| Goal Reward \( r_{\text{goal}} \) | \( +R_{\text{succ}} \) (if \( d_g < \text{threshold} \)) | Sparse, high positive reward (+1000) for task completion. |

| Collision Penalty \( r_{\text{collision}} \) | \( R_{\text{coll}} \) (if collision) | High negative penalty (-500) for safety violation. |

| Step Penalty \( r_{\text{step}} \) | \( c_{\text{time}} \) (e.g., -0.1) | Encourages efficiency by penalizing each time step. |

| Progress Reward \( r_{\text{progress}} \) | \( \alpha \cdot (d_{g, t-1} – d_{g, t}) \) | Dense shaping reward. Positive for moving closer to the goal (\( \alpha=2.0 \)). |

| Safety Penalty \( r_{\text{safety}} \) | \( -\beta \cdot \exp(-\lambda \cdot d_{obs}) \) if \( d_{obs} < D_{\text{thresh}} \) | Creates a repulsive field near obstacles (\( \beta=10, \lambda=2, D_{\text{thresh}}=0.5m \)). |

Here, \( d_g \) is the distance to the goal, and \( d_{obs} \) is the distance to the nearest obstacle. This composite reward explicitly balances the competing objectives of goal-reaching, efficiency, and safety for the intelligent robot.

5. Experimental Simulation and Results Analysis

5.1 Experimental Setup

We utilize the PyBullet physics engine to create high-fidelity simulation environments for training and testing the intelligent robot agent.

- Env1: Static Simple: A 10m x 10m arena with 5 randomly placed cylindrical obstacles.

- Env2: Static Complex Maze: A 20m x 20m maze with structured corridors and a minimum passage width of 0.6m.

- Env3: Dynamic Obstacles: Based on Env2, with 2-3 obstacles moving along predefined linear paths.

The intelligent robot is modeled as a differential-drive platform. The DDPG networks are implemented with PyTorch. The Actor and Critic networks are 3-layer fully connected networks with 256 and 128 hidden units, using ReLU activations. Key hyperparameters are listed below:

| Hyperparameter | Value |

|---|---|

| Discount Factor (\( \gamma \)) | 0.99 |

| Replay Buffer Size | 100,000 |

| Batch Size | 64 |

| Actor Learning Rate | 0.0001 |

| Critic Learning Rate | 0.001 |

| Target Soft Update (\( \tau \)) | 0.005 |

| Exploration Noise | Ornstein-Uhlenbeck (θ=0.15, σ=0.2) |

Each environment is trained for 500 episodes, with each episode having a maximum of 1000 steps. Evaluation is performed over 100 test episodes with exploration noise disabled.

5.2 Performance Metrics

The algorithm’s performance is evaluated using the following metrics:

- Success Rate (SR): Percentage of episodes where the intelligent robot reaches the goal without collision.

- Average Path Length (APL): Mean Euclidean distance of the traversed path in successful episodes.

- Average Time per Step (ATS): Mean computation time for the algorithm to produce an action (in milliseconds).

- Average Cumulative Reward (ACR): Mean total reward per episode, indicating overall policy quality.

- Minimum Obstacle Distance (MOD): The average, over an episode, of the minimum distance to any obstacle during traversal. Higher is safer.

5.3 Comparative Results and Analysis

Our proposed DDPG-based approach is compared against several baseline algorithms: A* (global planner), RRT* (sampling-based), and a discrete-action Deep Q-Network (DQN). The results are presented below.

| Environment | Algorithm | Success Rate (%) | Avg. Path Length (m) | Avg. Step Time (ms) | Avg. Cum. Reward | Avg. MOD (m) |

|---|---|---|---|---|---|---|

| Static Simple (Env1) | DDPG (Ours) | 98.0 | 12.5 | 5.2 | 892.3 | 0.41 |

| A* | 100.0 | 14.1 | 1.1 | N/A | 0.52 | |

| DQN | 90.0 | 13.8 | 4.8 | 805.7 | 0.32 | |

| Static Complex Maze (Env2) | DDPG (Ours) | 94.0 | 29.8 | 5.5 | 778.5 | 0.28 |

| RRT* | 88.0 | 36.7 | 18.3 | N/A | 0.25 | |

| DQN | 70.0 | 34.2 | 4.9 | 621.4 | 0.19 | |

| Dynamic Obstacles (Env3) | DDPG (Ours) | 85.0 | 26.3 | 5.7 | 745.1 | 0.25 |

| DQN | 62.0 | 28.9 | 5.0 | 540.8 | 0.18 | |

| RRT* (Re-planned) | 45.0 | 33.1 | 25.7 | N/A | 0.15 |

Analysis:

- Effectiveness & Optimality: In all environments, the DDPG-based algorithm achieves the highest success rate and the shortest average path length among learning-based methods, demonstrating its effectiveness and ability to learn near-optimal paths. While A* achieves 100% success in the simple static case, it requires a perfect map and cannot handle dynamic environments (Env3).

- Adaptability: The key strength of our approach is evident in Env3 (Dynamic). DDPG maintains an 85% success rate, significantly outperforming DQN (62%) and the frequently re-planned RRT* (45%). This shows the learned policy’s capacity to anticipate and react to moving obstacles.

- Efficiency & Safety: DDPG’s inference time (~5-6 ms) is suitable for real-time control on the intelligent robot. The consistently higher Average MOD compared to DQN confirms that the designed safety penalty \( r_{\text{safety}} \) effectively encourages safer navigation.

- Reward Convergence: The high ACR values for DDPG across environments validate the design of the composite reward function, confirming it successfully guides the intelligent robot toward the desired behavior.

6. Conclusion

This research addresses the critical challenge of path planning for intelligent robots in dynamic and complex environments by developing a Deep Reinforcement Learning-based solution. By meticulously modeling the problem as a Markov Decision Process and employing the DDPG algorithm, we enable an intelligent robot to learn continuous navigation policies directly from sensory inputs. The carefully engineered state representation, which fuses local occupancy information, goal-relative data, and motion history, provides a rich perceptual basis for decision-making. Furthermore, the hierarchically structured reward function, incorporating dense progress shaping and proactive safety penalties, proves essential for training performant and robust policies.

Experimental results across static and dynamic simulated environments demonstrate the superior adaptability, efficiency, and safety of the proposed approach compared to classical and other learning-based baselines. The algorithm equips the intelligent robot with the capability to navigate narrow passages, avoid static and moving obstacles, and generate smooth trajectories. This work provides a solid foundation and a promising new direction for achieving higher levels of autonomy in intelligent robots, with direct applicability to fields such as autonomous logistics, robotic search and rescue, and interactive service robotics. Future work will focus on transferring the simulated policy to a physical intelligent robot platform and investigating meta-RL techniques for rapid adaptation to entirely novel environments.