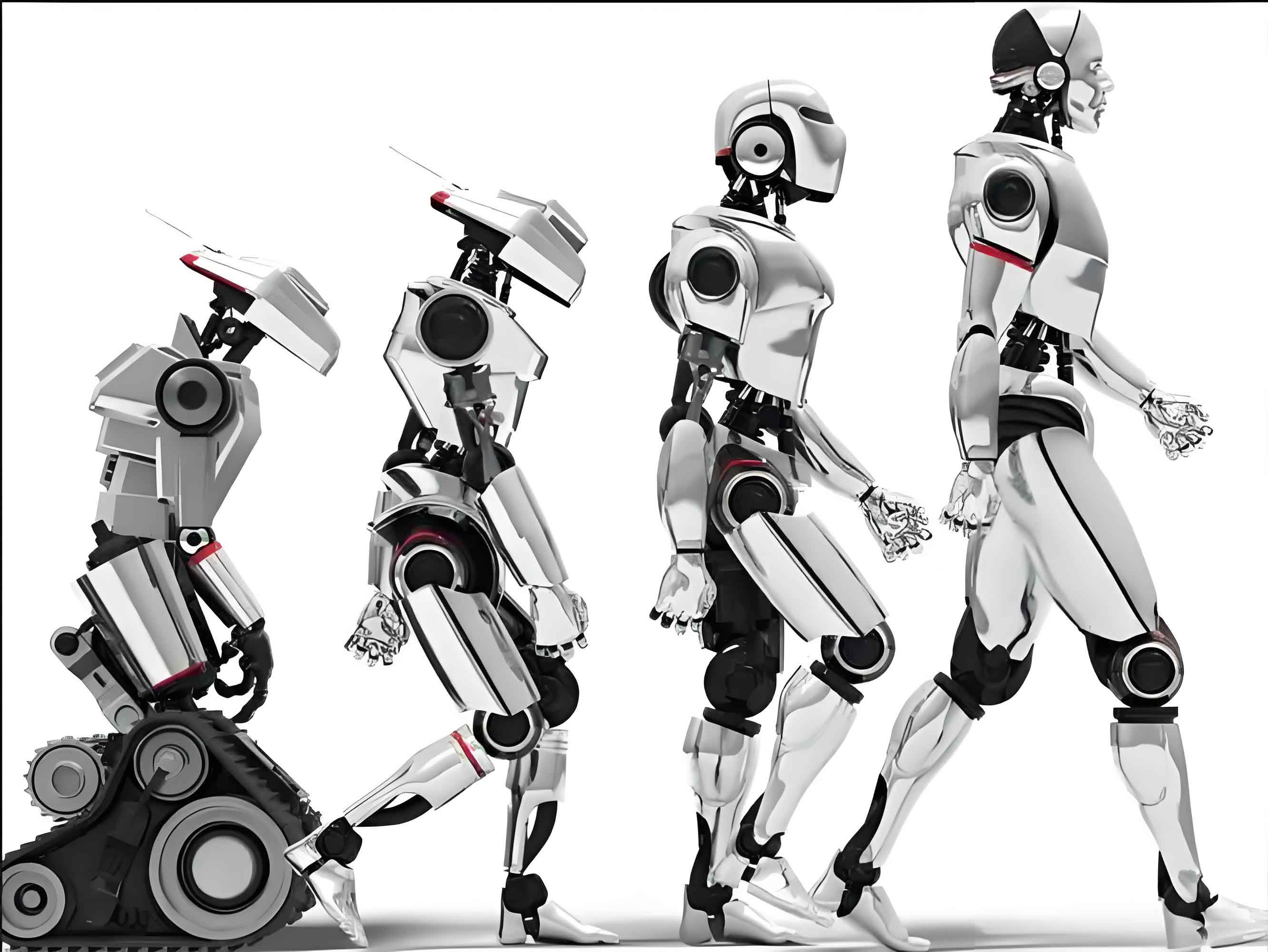

The question of whether “high-intelligence” intelligent robots should be recognized as subjects of criminal liability is a pivotal debate within contemporary legal philosophy and criminal law theory. While some argue for their inclusion based on perceived autonomy and cognitive capacity, I contend that the prevailing affirmative arguments are fundamentally flawed. Their core strategy of progressing from “intelligence → autonomy → free will → criminal subject” rests on mischaracterized premises, commits categorical errors in its reasoning, and ultimately fails to align with the normative structure and practical imperatives of criminal law systems. This article presents an internal critique of the affirmative position, demonstrating why intelligent robots, as currently conceptualized and for the foreseeable future, should not be granted the status of criminal liability subjects.

The central line of argument for the affirmative view often relies on a technologically optimistic reading of capabilities. Proponents suggest that an intelligent robot possessing advanced, human-like cognitive abilities (strong AI) would thereby exhibit autonomy, which is then equated with the free will necessary for moral and legal agency. This chain of reasoning, however, is vulnerable at every link. It misinterprets the nature of both human and machine intelligence, incorrectly reduces the normative concept of free will to a descriptive fact of autonomy, and overlooks the profound institutional gaps within positive criminal law and doctrinal theory that such a recognition would create.

I. The Misinterpreted Premise: A Twofold Misreading of “Intelligence”

The affirmative argument typically begins with a “cognitive supremacy” premise: that what distinguishes human agents is primarily their advanced cognitive or intellectual capacity. Since an intelligent robot can potentially match or exceed human cognitive performance in specific domains, it is argued, it merits similar consideration for agent-status. This stance, however, constitutes a bidirectional misunderstanding.

First, it downgrades human intelligence by reducing it to cognitive processing power. Human consciousness is not merely a high-capacity computation of inputs; it is characterized by a reflexive, self-referential awareness. We do not just think; we know that we think, and we can question the very frameworks of our thinking. This meta-cognitive capacity is intertwined with our embodied existence—our consciousness is shaped by physical, emotional, and social being-in-the-world. An intelligent robot, no matter how sophisticated its pattern recognition, lacks this phenomenological “lived experience” that forms the substrate of human understanding and intentionality. Its “intelligence” is a functional simulation within a human-defined symbolic system.

Second, it overestimates machine intelligence by attributing independence to what is inherently a dependent function. The “intelligence” of any current or foreseeable intelligent robot is not a self-originating capacity but a product of human design, goal-setting, and training data curation. Its cognitive rules are ultimately granted validity by human stipulation. We judge an intelligent robot to be “intelligent” based on its performance against metrics we ourselves have constructed. This is a circular exercise in anthropocentric projection, not a discovery of genuine, other-minded agency. The process can be modeled as the granting of validity:

$$ V_{IR}(P) = H(V_{R}, D, A) $$

where the validity $V_{IR}$ of an intelligent robot’s performance $P$ is a function $H$ of human-granted rule-validity $V_R$, training data $D$, and algorithmic architecture $A$. True agential intelligence would require $V_{IR}$ to be self-grounding, which is not the case.
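The dependence described by this formula can be made concrete with a toy program. The sketch below is only an illustration under stated assumptions: the one-parameter model, the dataset, and the names `train_slope`, `validity`, `lenient`, and `strict` are hypothetical and do not describe any real system. It shows the same performance $P$ being judged valid or invalid depending solely on which human-stipulated rule is applied.

```python
from typing import Callable, Sequence, Tuple

# All names and numbers here are hypothetical, chosen only for illustration.
Dataset = Sequence[Tuple[float, float]]  # D: human-curated (input, target) pairs
Rule = Callable[[Sequence[float], Sequence[float]], bool]  # V_R: human-stipulated rule


def train_slope(data: Dataset, steps: int = 200, lr: float = 0.05) -> float:
    """A: a one-parameter model y = w * x, fitted by gradient descent on squared error."""
    w = 0.0
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def validity(rule: Rule, data: Dataset) -> bool:
    """H(V_R, D, A): the verdict on the machine's performance P."""
    w = train_slope(data)
    performance = [w * x for x, _ in data]  # P: what the machine outputs
    targets = [y for _, y in data]
    return rule(performance, targets)


def lenient(pred: Sequence[float], target: Sequence[float]) -> bool:
    """One possible human rule: every error must stay below 0.5."""
    return all(abs(p - t) < 0.5 for p, t in zip(pred, target))


def strict(pred: Sequence[float], target: Sequence[float]) -> bool:
    """A different human rule: every error must stay below 0.01."""
    return all(abs(p - t) < 0.01 for p, t in zip(pred, target))


data = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2)]
print(validity(lenient, data), validity(strict, data))
```

Nothing in the program changes between the two calls except the rule supplied from outside, which is the sense in which $V_{IR}$ is not self-grounding.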

The following table summarizes the categorical differences between human and machine intelligence that the affirmative view collapses:

| Aspect | Human Intelligence | Machine Intelligence (Intelligent Robot) |
|---|---|---|
| Grounding | Self-conscious, embodied existence. | Human-designed simulation within a formal system. |
| Understanding | Grasps meaning, context, and semantic content. | Processes syntax and correlates symbols based on statistical patterns. |
| Goal Formation | Intrinsic, self-determined, and value-laden. | Extrinsic, programmed, and optimization-based. |
| Learning | Integrates experience, emotion, and social interaction. | Adjusts parameters based on error minimization on a dataset. |
| Meta-Cognition | Capable of self-reflection and critique of its own reasoning. | No genuine self-awareness; can only simulate introspection if designed to do so. |

II. The Misplaced Argument: The Non-Reducibility of Free Will to Factual Autonomy

The second critical error in the affirmative argument is its attempt to derive the normative, practical concept of free will—the cornerstone of culpability in criminal law—from empirical observations of behavioral autonomy. This is a category mistake between the realms of practical reason (the “ought”) and theoretical reason/the empirical sciences (the “is”).

Proponents often adopt one of two problematic approaches:

1. The Scientific Realism Path: This approach cites neuroscience or computer science to argue that since human decision-making has physical correlates and intelligent robots can make complex decisions without direct human input, both exhibit a form of autonomy that suffices for free will. The fallacy here is twofold. Firstly, autonomy in the sense of operational independence (e.g., a self-driving car navigating traffic) is a mechanistic, causal concept. Free will, in the legal and moral sense, is a deontological concept pertaining to the justification of blame and the capacity to grasp and act upon normative reasons. Secondly, science itself operates within a framework of practical reason. The scientist’s choice of hypotheses, interpretation of data, and acceptance of theories are activities presupposing the very freedom and rationality that the scientific worldview, in its objectifying mode, often seeks to explain away. The authority of scientific fact is itself a normatively sustained achievement.

2. The Social Consensus Path: Another argument suggests that free will is a social construct. Since society has “constructed” free will for humans and legal persons like corporations, it could do the same for intelligent robots, especially if they demonstrate “normative communicative competence.” This view mistakes the recognition of free will for its construction. Legal and moral norms presuppose agents capable of free will to whom responsibilities can be imputed. This capacity is a transcendental condition for the possibility of normative systems, not their product. A community’s norms educate and actualize this capacity in human beings; they do not create it ex nihilo in entities that lack the underlying ontological structure of potential moral agents. The “communicative competence” of an intelligent robot would be a sophisticated behavioral output, not evidence of an inner understanding of the binding force of norms.

The relationship can be expressed as a mutual non-derivability:

$$ \text{Free Will (Practical Reason)} \nrightarrow \text{Empirical Autonomy/Social Recognition} $$

$$ \text{Empirical Autonomy/Social Recognition} \nrightarrow \text{Free Will (Practical Reason)} $$

The struck-through arrow indicates that neither side can be reduced to or derived from the other. Free will is instead a necessary presupposition for holding an entity accountable within a normative system like law.

III. The Institutional Gaps: Incompatibility with Criminal Law Systems

Even if one were to grant the problematic premises above, recognizing an intelligent robot as a subject of criminal liability would create insurmountable contradictions within both positive criminal law and criminal law doctrine.

A. Contradictions with Positive Criminal Law

1. Substantive Criminal Law: Affirmative arguments often claim that since criminal liability hinges on “capacity for cognition and control” (e.g., in doctrines of insanity), an intelligent robot possessing these capacities qualifies. This misreads the law. Legal standards of capacity and mental state (such as the insanity rules or *mens rea*) are not positive thresholds of intelligence to be met but normative criteria identifying when blame is excluded because the relevant capacity or state is absent. The law assumes human agents and asks when their capacity is diminished. It does not provide a test to induct non-human entities into the circle of legal persons. Doing so would be a radical, extra-legal act of “law creation,” violating the principle of legality (*nullum crimen, nulla poena sine lege*). Furthermore, analogizing intelligent robots to corporate persons is flawed. Corporate criminal liability is a pragmatic fiction that ultimately traces responsibility back to natural persons (directors, employees). An intelligent robot, in the strong AI vision, is posited as an independent source of action, making this tracing impossible and the analogy void.

2. Criminal Procedure Law: The procedural framework is wholly anthropocentric. How does one arrest, detain, or ensure the right to a fair trial for an intelligent robot? Could it be subject to “coercive interrogation”? Does it have a right against self-incrimination? Can it instruct counsel? The entire edifice of procedural rights and safeguards is built upon the ontology of the human person—a being with physical integrity, psychological states, life projects, and social bonds. Applying this framework to an intelligent robot is nonsensical and would require not just amendment but a complete reinvention of procedural law, undermining its foundational principles.

B. Conflicts with Doctrinal Principles of Criminal Law

1. The Ultima Ratio Principle: Criminal law is the “last resort” of social defense. Before contemplating the monumental step of recognizing a new type of legal person for criminal purposes, all other regulatory, civil, and technical safety measures must be exhausted. The risks posed by intelligent robots are better addressed through stringent product liability, safety regulations, and governance of their human creators and users. Using criminal law preemptively against the machine itself is a disproportionate and ineffective response.

2. The “Wrongdoing – Culpability” Framework: Criminal liability requires a culpable act of wrongdoing. Even if an intelligent robot’s behavior could be externally described as satisfying the *actus reus* of an offense (e.g., causing damage), imputing *culpability* to it is incoherent. Culpability involves a failure to exercise one’s capacity for practical reason in accordance with the law. It presupposes the ability to understand normative reasons and the wrongfulness of an act. An intelligent robot’s “decision” is the output of an algorithm optimizing a given objective. It cannot have “awareness” of legal wrongfulness. Any “mistake” it makes is ultimately a programming or data flaw, the responsibility for which lies upstream with its designers, manufacturers, or operators. The robot itself occupies no point of normative engagement.

3. The Penalty System and Its Purposes: The fundamental purposes of punishment—retribution, deterrence (general and special), rehabilitation, and incapacitation—are inapplicable to an intelligent robot.

| Penal Purpose | Application to Human | (Non-)Application to Intelligent Robot |
|---|---|---|
| Retribution | Just deserts for a guilty mind. | No guilty mind exists. Punishing a machine is meaningless vengeance. |
| Special Deterrence | Fear of future punishment deters the individual. | An intelligent robot has no subjective states like fear. It can be reprogrammed, not deterred. |
| General Deterrence | Deters other potential offenders. | Other robots are not moral agents who can be influenced by example. Human creators may be deterred, but this targets them, not the robot. |
| Rehabilitation | Reform the offender’s character. | No character to reform; only software to correct or update. |
| Incapacitation | Remove the dangerous individual. | This reduces to deactivation, repair, or destruction: a technical safety measure, not a penal one. |

Proposing new “punishments” like data deletion or algorithm modification for intelligent robots simply conflates technical risk management with criminal justice. The former is a matter of product safety and control; the latter is a solemn social ritual of blaming and condemning a moral agent.
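A toy example may help show why such “punishments” amount to technical correction. The sketch below is a deliberately simplified assumption, not a model of any actual robot: the action names, the scores, and the functions `decide`, `delivery_speed`, and `patched` are all hypothetical. The machine’s “decision” is just the highest-scoring action under an objective, and the only way its behavior changes is that a human edits that objective.

```python
from typing import Callable

# Hypothetical actions and scores, invented purely for illustration.
Objective = Callable[[str], float]

ACTIONS = ("take_shortcut_over_private_land", "follow_public_road")


def decide(objective: Objective) -> str:
    """The machine's 'decision': whichever action scores highest."""
    return max(ACTIONS, key=objective)


def delivery_speed(action: str) -> float:
    """Objective as originally written by the designers: faster is better."""
    scores = {"take_shortcut_over_private_land": 10.0, "follow_public_road": 7.0}
    return scores[action]


def patched(action: str) -> float:
    """Objective after a human engineer adds a penalty term for trespass."""
    penalty = 5.0 if action == "take_shortcut_over_private_land" else 0.0
    return delivery_speed(action) - penalty


print(decide(delivery_speed))  # behavior before the human correction
print(decide(patched))         # behavior after the human correction
```

On this picture the change in conduct is produced entirely by the engineer’s patch, not by any response of the machine to blame or threat, which is why the intervention belongs to product safety rather than to punishment.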

Conclusion

The debate on the criminal liability of the intelligent robot is often framed as a forward-looking necessity in the face of rapid technological change. However, the affirmative position fails on its own terms. Its foundation in a “smartness metric” misconstrues intelligence, its reduction of free will to autonomy commits a fundamental philosophical error, and its proposed legal innovation is irreconcilable with the deep structure and purpose of criminal law. The intelligent robot, as a sophisticated tool and potential risk source, demands rigorous governance, safety engineering, and perhaps new forms of civil liability and regulation focused on the human actors in its lifecycle. To elevate it to the status of a criminal subject, however, is not a logical evolution of law but a conceptual category mistake that would undermine the moral and legal foundations of culpability. The criminal law’s subject remains, and should remain, the human person.