In this article, I explore the pivotal role of sensor fusion technology in the development and operation of intelligent robots. As demand for autonomous systems grows across industries, the ability of intelligent robots to perceive, navigate, and interact with dynamic environments has become increasingly critical. Sensor fusion, by integrating data from multiple sensors, enables these robots to achieve higher accuracy, reliability, and robustness. I will cover the fundamental concepts, classifications, advantages, challenges, and applications of sensor fusion, followed by an analysis of the functional requirements and architectural design of intelligent robot control systems. Throughout, I emphasize how sensor fusion underpins the core functionality of intelligent robots, using tables and formulas to summarize key points.

To begin, let me define sensor fusion technology. It refers to the process of combining data from multiple sensors to produce more accurate, reliable, and comprehensive information than what could be obtained from any single sensor. This is essential for intelligent robots, as they operate in complex, unpredictable environments where no single sensor can capture all necessary details. For example, in an industrial setting, an intelligent robot might use laser radar for precise distance measurements, cameras for visual recognition, inertial measurement units (IMUs) for orientation tracking, and ultrasonic sensors for close-range obstacle detection. By fusing these data streams, the intelligent robot can build a holistic model of its surroundings, enabling tasks such as autonomous navigation, object manipulation, and real-time decision-making. I will now break down the classifications of sensor fusion to illustrate its hierarchical nature.

Sensor fusion can be categorized into three levels based on the processing stage at which data are combined: data-level fusion, feature-level fusion, and decision-level fusion. Data-level fusion, also known as low-level fusion, integrates raw sensor data directly. It preserves the most information and, for low-dimensional signals, is cheap enough for real-time applications, but it requires precise time synchronization and is sensitive to noise. A common example is fusing accelerometer and gyroscope data in an IMU to estimate orientation, often with a complementary filter. A simple weighted-average fusion at this level can be expressed as:

$$ \hat{x} = \sum_{i=1}^{n} w_i x_i $$

where $\hat{x}$ is the fused estimate, $x_i$ are the raw measurements of the same quantity from $n$ sensors, and $w_i$ are nonnegative weights, summing to one, that reflect each sensor's reliability. For independent, unbiased sensors, choosing $w_i$ inversely proportional to each sensor's noise variance minimizes the variance of $\hat{x}$. Feature-level fusion, or mid-level fusion, extracts features from sensor data before combining them. This reduces data dimensionality and focuses on relevant information, such as edges from images or keypoints from point clouds. Decision-level fusion, or high-level fusion, merges the decisions or classifications made from individual sensors, such as combining obstacle detections from laser radar and cameras to confirm an object’s presence. The following table summarizes these levels:

| Fusion Level | Description | Advantages | Challenges | Example in Intelligent Robots |
|---|---|---|---|---|
| Data-Level | Direct fusion of raw sensor data | High precision, real-time capability | Requires strict synchronization, noise-sensitive | IMU data fusion for attitude estimation |
| Feature-Level | Fusion of extracted features | Reduced data size, efficient processing | Feature selection complexity | Combining visual features with LiDAR points for mapping |
| Decision-Level | Fusion of sensor-based decisions | Robust to sensor failures, flexible | May lose detailed information | Integrating obstacle alerts from multiple sensors for collision avoidance |
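The weighted average above becomes concrete once the weights $w_i$ are chosen. The Python sketch below uses inverse-variance weights, one common choice when each sensor's noise variance is known, and also shows a single step of the complementary filter mentioned for IMU attitude estimation. It is a minimal sketch under stated assumptions: the function names, the independence of the sensors, and the blend factor `alpha = 0.98` are illustrative, not taken from a specific system.

```python
import math


def weighted_fusion(measurements, variances):
    """Fuse readings of the same scalar quantity with inverse-variance weights.

    w_i = (1 / var_i) / sum_j (1 / var_j), so the weights are nonnegative and
    sum to one. Assumes independent, unbiased sensors with known variances.
    Returns (fused estimate, variance of the fused estimate).
    """
    inverse = [1.0 / v for v in variances]
    total = sum(inverse)
    weights = [iv / total for iv in inverse]
    fused = sum(w * x for w, x in zip(weights, measurements))
    return fused, 1.0 / total  # fused variance <= smallest input variance


def accel_tilt(ax, az):
    """Tilt angle (rad) implied by the gravity components the accelerometer sees."""
    return math.atan2(ax, az)


def complementary_filter(angle, gyro_rate, accel_angle, dt, alpha=0.98):
    """One complementary-filter step for a single tilt angle (rad).

    The integrated gyro rate is trusted over short horizons (high-pass path);
    the accelerometer tilt corrects slow gyro drift (low-pass path).
    """
    return alpha * (angle + gyro_rate * dt) + (1.0 - alpha) * accel_angle
```

For example, `weighted_fusion([2.0, 2.2], [0.04, 0.16])` weights the lower-noise reading four times as heavily as the other (0.8 versus 0.2), giving roughly 2.04 with a fused variance of about 0.032, smaller than either input variance.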

The advantages of sensor fusion in intelligent robots are manifold. Firstly, it enhances data reliability by compensating for individual sensor limitations. For instance, in low-light conditions, a camera may fail, but laser radar can still provide distance data, ensuring the intelligent robot continues to operate safely. Secondly, it improves accuracy through complementary data; laser radar offers precise ranging, while cameras add color and texture context. This synergy is crucial for tasks like 3D environment reconstruction. Thirdly, it boosts system robustness, allowing intelligent robots to adapt to dynamic environments like weather changes or moving obstacles. However, challenges persist, including data synchronization issues, algorithm complexity, and computational demands. To address these, I will later discuss hardware and software optimizations in control system design.

In terms of applications, sensor fusion is integral to navigation, environmental perception, and motion control in intelligent robots. For navigation, fusion of GPS, IMU, and visual data enables precise localization even in GPS-denied areas, using techniques like simultaneous localization and mapping (SLAM). In environmental perception, combining laser radar point clouds with camera images allows intelligent robots to detect and classify objects, such as identifying humans in a crowded space. For motion control, sensor fusion provides real-time feedback on robot pose and velocity, enabling precise trajectory tracking. For path planning, a widely used algorithm is A*, which expands nodes in order of the evaluation function:

$$ f(n) = g(n) + h(n) $$

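where $f(n)$ is the estimated total cost of a path through node $n$, $g(n)$ is the cost from the start node to $n$, and $h(n)$ is a heuristic estimate of the remaining cost from $n$ to the goal. If $h(n)$ never overestimates that remaining cost, A* returns an optimal path whenever one exists. The map and edge costs that A* searches over are built from fused sensor data, so the quality of the plan depends directly on the quality of the fusion. Now, let me turn to the functional requirements of intelligent robot control systems, as these dictate how sensor fusion is implemented.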
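To make the A* evaluation function $f(n) = g(n) + h(n)$ concrete, here is a minimal Python sketch over a 4-connected occupancy grid. It assumes the fused sensor data has already been rasterized into free (0) and occupied (1) cells with unit move cost; the grid encoding, the Manhattan heuristic, and the function name `astar` are illustrative assumptions rather than a prescribed design.

```python
import heapq


def astar(grid, start, goal):
    """A* on a 4-connected occupancy grid (0 = free, 1 = occupied).

    Each move costs 1, so g(n) counts steps from the start, and the Manhattan
    distance h(n) never overestimates the remaining cost (admissible).
    Returns the path as a list of (row, col) cells, or None if unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f, g, cell)
    g_cost = {start: 0}
    came_from = {}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = [cell]
            while cell in came_from:  # walk parent links back to the start
                cell = came_from[cell]
                path.append(cell)
            return path[::-1]
        if g > g_cost[cell]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_g = g + 1
                if new_g < g_cost.get((nr, nc), float("inf")):
                    g_cost[(nr, nc)] = new_g
                    came_from[(nr, nc)] = cell
                    heapq.heappush(open_heap, (new_g + h((nr, nc)), new_g, (nr, nc)))
    return None
```

Re-pushing a cell whenever a cheaper $g$ is found, and skipping stale heap entries, keeps the sketch correct without a separate closed set.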

Intelligent robot control systems must meet several key functional requirements to ensure effective operation. First, environmental perception is foundational, requiring real-time processing of multi-sensor data to detect obstacles, recognize targets, and map surroundings. This demands high data accuracy and low latency, as any delay could lead to collisions or task failures. Second, path planning involves generating optimal routes based on perceived data, balancing factors like distance, time, and energy consumption. Intelligent robots must dynamically adjust paths to avoid moving obstacles, which necessitates efficient algorithms. Third, motion control executes planned actions with precision, such as moving limbs or wheels. This requires robust control algorithms that can handle disturbances, often implemented using proportional-integral-derivative (PID) controllers:

$$ u(t) = K_p e(t) + K_i \int_0^t e(\tau) d\tau + K_d \frac{de(t)}{dt} $$

where $u(t)$ is the control output, $e(t)$ is the error signal, and $K_p$, $K_i$, $K_d$ are tuning parameters. These functions must satisfy performance indicators like real-time responsiveness, robustness to uncertainties, high precision, and fault tolerance. For example, in a logistics intelligent robot, motion control must ensure stable cargo transport while avoiding sudden stops. The challenges include synchronizing multi-sensor data streams and optimizing algorithm complexity for embedded platforms. Below is a table summarizing these requirements:

| Functional Requirement | Description | Key Performance Indicators | Challenges | Role of Sensor Fusion |
|---|---|---|---|---|
| Environmental Perception | Real-time sensing of surroundings via multi-sensor data | Accuracy, latency, completeness | Data synchronization, noise filtering | Integrates LiDAR, camera, etc., for holistic view |
| Path Planning | Generating optimal routes based on environmental data | Efficiency, adaptability, safety | Dynamic obstacle avoidance, computational load | Uses fused data for accurate map building and obstacle detection |
| Motion Control | Executing precise movements using control algorithms | Precision, stability, responsiveness | Disturbance rejection, real-time feedback | Provides fused pose and velocity data for control loops |
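On an embedded controller, the continuous PID law $u(t) = K_p e(t) + K_i \int_0^t e(\tau) d\tau + K_d \frac{de(t)}{dt}$ is usually evaluated in discrete time. The Python sketch below assumes a fixed sample period `dt`, a rectangular approximation of the integral, and a backward difference for the derivative; the class name, the gains, and the first-order velocity plant in the usage section are hypothetical. Production controllers typically add integral anti-windup and derivative filtering, which are omitted here.

```python
class PID:
    """Discrete-time PID controller with a fixed sample period dt."""

    def __init__(self, kp, ki, kd, dt):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measurement):
        """Return the control output u for the current error e = setpoint - measurement."""
        error = setpoint - measurement
        self.integral += error * self.dt  # rectangular approximation of the integral
        if self.prev_error is None:
            derivative = 0.0  # no history on the first sample
        else:
            derivative = (error - self.prev_error) / self.dt  # backward difference
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


if __name__ == "__main__":
    pid = PID(kp=2.0, ki=1.0, kd=0.05, dt=0.01)
    v = 0.0  # measured velocity (m/s), e.g. from fused IMU and encoder data
    for _ in range(3000):
        u = pid.update(1.0, v)
        v += 0.01 * (u - v)  # hypothetical plant: dv/dt = u - v
    print(f"velocity after 30 s: {v:.4f} m/s")
```

The integral term is what removes the steady-state error here: with a proportional term alone, this plant would settle below the 1.0 m/s setpoint.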

Having outlined the functional needs, I will now delve into the overall architectural design of intelligent robot control systems, which encompasses both hardware and software components. This design is crucial for realizing the benefits of sensor fusion in practical intelligent robot applications.

The hardware architecture of an intelligent robot control system forms the physical backbone, enabling data acquisition, processing, and actuation. It primarily consists of multi-sensor integration and controller hardware platforms. Multi-sensor integration involves strategically deploying various sensors to capture diverse environmental cues. For instance, laser radar is often mounted on top for 360-degree scanning, cameras are placed at the front for forward vision, IMUs are embedded internally for orientation tracking, and ultrasonic sensors are positioned at the base for near-ground obstacle detection. This arrangement ensures comprehensive coverage, essential for intelligent robots operating in cluttered spaces. Each sensor has specific characteristics, as summarized in the table below:

| Sensor Type | Key Function | Typical Data Format | Advantages | Limitations | Integration Role in Intelligent Robots |
|---|---|---|---|---|---|
| Laser Radar (LiDAR) | Distance measurement via point clouds | 3D coordinates | High range accuracy, works in darkness | Costly, sensitive to reflective surfaces | Provides precise environmental mapping for navigation |
| Camera | Visual imaging for color and texture | 2D/3D image arrays | Rich detail, object recognition | Affected by lighting, limited depth data | Enables target identification and scene understanding |
| Inertial Measurement Unit (IMU) | Tracking orientation and acceleration | Angular rates, linear accelerations | High short-term precision, compact | Drift over time, cumulative errors | Supports attitude stabilization and motion estimation |
| Ultrasonic Sensor | Short-range distance sensing | Analog/digital distance values | Low cost, reliable for close objects | Limited range, affected by air conditions | Assists in fine obstacle avoidance and docking tasks |

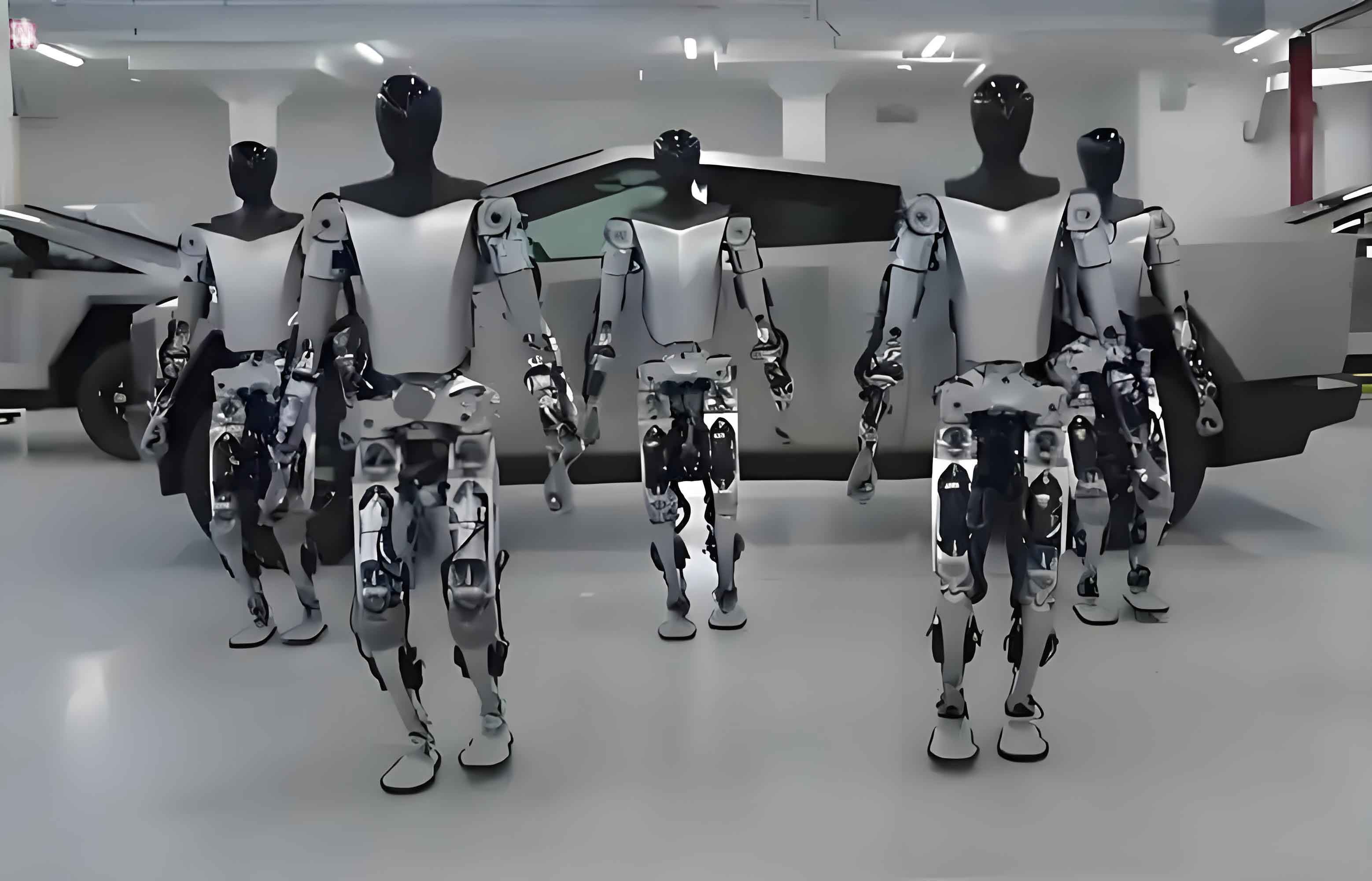

The controller hardware platform processes sensor data and executes control algorithms. Embedded systems, such as ARM-based processors, are popular for their low power consumption and real-time capabilities, which suit lightweight intelligent robots. For more complex tasks, industrial computers with multi-core CPUs and expandable interfaces offer greater computational power, enabling advanced fusion algorithms and machine learning models. These platforms must support fast, reliable communication buses such as Ethernet or CAN to handle data from multiple sensors efficiently. In my design approach, I prioritize modularity to allow easy upgrades, so that the intelligent robot can adapt to evolving requirements. The image above shows an intelligent robot built on such hardware, highlighting how its sensors are integrated in a real-world setup.

Moving to the software architecture, it is the brain of the intelligent robot, orchestrating data flow, fusion, and control actions. I divide it into three main modules: data acquisition and fusion, control algorithms, and communication and interfaces. The data acquisition and fusion module is responsible for collecting raw sensor data, synchronizing it, and applying fusion techniques. This module must handle diverse protocols—for example, LiDAR data over Ethernet, camera streams via USB, IMU data through SPI, and ultrasonic signals via GPIO. Time synchronization is critical here; I often use timestamp-based methods or hardware triggers to align data streams. For fusion, algorithms like Kalman filters are employed to combine data optimally. A simplified version for sensor fusion can be represented as:

$$ \hat{x}_{k|k} = \hat{x}_{k|k-1} + K_k (z_k - H \hat{x}_{k|k-1}) $$

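where $\hat{x}_{k|k-1}$ is the state predicted from the previous estimate by a motion model, $\hat{x}_{k|k}$ is the updated state estimate, $z_k$ is the measurement vector from the sensors, $H$ is the observation matrix that maps the state into measurement space, and $K_k$ is the Kalman gain, which weights the innovation $z_k - H \hat{x}_{k|k-1}$ according to the relative uncertainty of the prediction and the measurement. This is the measurement-update step of the filter; alternating it with the prediction step lets the intelligent robot maintain accurate state estimates despite sensor noise.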
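A scalar version makes the update equation easy to trace. The Python sketch below assumes a constant-position motion model with process noise `Q` and two range sensors (say, a LiDAR and an ultrasonic sensor) observing the same distance with measurement noise `R`; applying the update once per sensor is one straightforward way to fuse them. The function names and all noise values are illustrative assumptions.

```python
def predict(x, P, Q):
    """Prediction for a constant-position model: the state carries over,
    and its variance grows by the process noise Q."""
    return x, P + Q


def update(x_pred, P_pred, z, R, H=1.0):
    """Measurement update: x_{k|k} = x_{k|k-1} + K_k (z_k - H x_{k|k-1})."""
    innovation = z - H * x_pred
    S = H * P_pred * H + R  # innovation variance
    K = P_pred * H / S  # Kalman gain
    x_new = x_pred + K * innovation
    P_new = (1.0 - K * H) * P_pred
    return x_new, P_new


if __name__ == "__main__":
    x, P = 0.0, 1e6  # vague prior on the distance to a wall (m)
    x, P = predict(x, P, Q=1e-4)
    x, P = update(x, P, z=2.0, R=0.01)  # hypothetical LiDAR reading
    x, P = update(x, P, z=2.2, R=0.04)  # hypothetical ultrasonic reading
    print(f"fused distance {x:.3f} m, variance {P:.4f}")
```

With a vague prior, the two sequential updates land on the same inverse-variance weighted average as the data-level fusion formula (about 2.04 m here), which is one way to see the Kalman filter as a recursive generalization of that weighting.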

The control algorithm module encompasses environment perception, path planning, and motion control algorithms. For perception, I implement obstacle detection using fused LiDAR and camera data, often applying clustering techniques on point clouds paired with image segmentation. Path planning leverages algorithms like Rapidly-exploring Random Trees (RRT) for dynamic environments, which require real-time updates based on fused sensor inputs. The cost function for optimization might include terms for smoothness and safety, expressed as:

$$ J = \int (w_1 \| \dot{x} \|^2 + w_2 \| \ddot{x} \|^2 + w_3 \cdot \text{collision\_risk}) dt $$

where $w_1, w_2, w_3$ are weights, and $\dot{x}$ and $\ddot{x}$ are velocity and acceleration. Motion control uses PID or model predictive control (MPC) to track planned trajectories, with feedback from fused IMU and encoder data. This module ensures that the intelligent robot executes tasks efficiently and safely.
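The integral $J$ can be evaluated on a sampled trajectory by replacing the derivatives with finite differences and the integral with a sum. The Python sketch below assumes 2D waypoints sampled every `dt` seconds. The formula does not specify how collision risk is computed, so the sketch uses a hypothetical squared penalty that grows as a waypoint comes within `safe_dist` of a known obstacle; the default weights are likewise arbitrary.

```python
import math


def trajectory_cost(points, dt, obstacles, w1=1.0, w2=0.1, w3=10.0, safe_dist=0.5):
    """Discretized J = sum_k (w1*|v_k|^2 + w2*|a_k|^2 + w3*risk_k) * dt.

    points: list of (x, y) waypoints sampled every dt seconds.
    obstacles: list of (x, y) obstacle positions, e.g. from fused perception.
    risk_k is a hypothetical penalty: sum of max(0, safe_dist - distance)^2.
    """
    cost = 0.0
    for k in range(1, len(points) - 1):
        (x0, y0), (x1, y1), (x2, y2) = points[k - 1], points[k], points[k + 1]
        vx, vy = (x2 - x0) / (2 * dt), (y2 - y0) / (2 * dt)  # central difference
        ax, ay = (x2 - 2 * x1 + x0) / dt**2, (y2 - 2 * y1 + y0) / dt**2
        risk = sum(
            max(0.0, safe_dist - math.hypot(x1 - ox, y1 - oy)) ** 2
            for ox, oy in obstacles
        )
        cost += (w1 * (vx**2 + vy**2) + w2 * (ax**2 + ay**2) + w3 * risk) * dt
    return cost
```

A planner such as RRT can use a function like this to compare candidate paths: smoother, slower paths that keep clear of obstacles score lower.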

The communication and interface module manages data exchange between hardware components and external systems. It relies on middleware such as ROS (Robot Operating System) for inter-process communication, CAN bus for real-time control messages, and Ethernet for high-bandwidth sensor data. In my designs, I configure these links for low latency and reliability. For instance, CAN bus arbitrates bus access by message identifier, with lower identifiers taking priority, so assigning critical control commands high-priority identifiers ensures they are transmitted promptly. The software architecture must also support interoperability, allowing the intelligent robot to integrate with other devices or cloud platforms for advanced analytics. Below is a table summarizing the software modules:

| Software Module | Primary Functions | Key Algorithms/Techniques | Inputs | Outputs | Impact on Intelligent Robot Performance |
|---|---|---|---|---|---|
| Data Acquisition and Fusion | Collect and synchronize multi-sensor data; apply fusion algorithms | Kalman filter, weighted average, timestamp synchronization | Raw sensor data (LiDAR, camera, IMU, etc.) | Fused environmental state estimates | Enhances perception accuracy and reliability for decision-making |
| Control Algorithm | Environment perception, path planning, motion control | Obstacle detection, A*/RRT planning, PID/MPC control | Fused sensor data, task commands | Planned paths, control signals for actuators | Ensures efficient, safe, and precise task execution in dynamic settings |
| Communication and Interface | Manage data protocols and device interoperability | ROS, CAN bus, Ethernet protocols | Internal/external data streams | Formatted messages for hardware or networks | Facilitates real-time coordination and scalability of the intelligent robot system |
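Timestamp synchronization, listed under the data acquisition and fusion module, often comes down to pairing each sample from one stream with the closest sample from another. The Python sketch below assumes all streams are stamped against a common clock, that each timestamp list is sorted, and that pairs farther apart than a chosen `tolerance` (in seconds) should be discarded; the function name and the example rates are hypothetical. When timestamps alone are not precise enough, hardware triggering or interpolation between samples would be needed instead.

```python
import bisect


def match_nearest(reference_stamps, other_stamps, tolerance):
    """Pair each reference timestamp with the nearest timestamp of another stream.

    Both lists must be sorted ascending and share a common clock (seconds).
    Pairs farther apart than `tolerance` are dropped.
    Returns a list of (reference_index, other_index) tuples.
    """
    pairs = []
    for i, t in enumerate(reference_stamps):
        j = bisect.bisect_left(other_stamps, t)  # first index with stamp >= t
        candidates = [k for k in (j - 1, j) if 0 <= k < len(other_stamps)]
        if not candidates:
            continue
        best = min(candidates, key=lambda k: abs(other_stamps[k] - t))
        if abs(other_stamps[best] - t) <= tolerance:
            pairs.append((i, best))
    return pairs
```

For instance, matching a roughly 30 Hz camera stream `[0.0, 0.033, 0.066, 0.100]` against a 10 Hz LiDAR stream `[0.002, 0.101]` with a 10 ms tolerance keeps only the camera frames taken near a LiDAR sweep.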

Throughout this discussion, I have emphasized how sensor fusion technology is woven into every aspect of intelligent robot design. From hardware integration to software algorithms, it enables intelligent robots to overcome the limitations of individual sensors, achieving higher levels of autonomy and efficiency. The synergy between hardware and software architectures is vital; for example, a well-designed controller platform can accelerate fusion computations, while optimized algorithms reduce latency in critical control loops. In practice, this allows intelligent robots to perform complex tasks—such as autonomous navigation in warehouses, precision assembly in factories, or exploration in hazardous environments—with remarkable reliability.

To further illustrate the mathematical underpinnings, let me provide additional formulas commonly used in sensor fusion for intelligent robots. For multi-sensor data fusion, a Bayesian approach can be applied to update beliefs based on new evidence. The posterior probability after fusion is given by:

$$ P(x | z_1, z_2) = \frac{P(z_1, z_2 | x) P(x)}{P(z_1, z_2)} $$

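where $x$ is the state variable and $z_1, z_2$ are measurements from two sensors. If the two measurements are conditionally independent given $x$, the likelihood factorizes as $P(z_1, z_2 | x) = P(z_1 | x) P(z_2 | x)$, so each sensor's model can be evaluated separately. In path planning, Dijkstra's algorithm finds shortest paths over a graph whose edge weights are derived from fused sensor data, repeatedly applying the relaxation step: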

$$ d[v] = \min(d[v], d[u] + w(u,v)) $$

where $d[v]$ is the distance to node $v$, $u$ is the current node, and $w(u,v)$ is the edge weight based on environmental constraints. These formulas highlight how sensor fusion feeds into higher-level decision processes in intelligent robots.
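Both formulas are short to implement. The Python sketch below fuses two sensors' evidence over a discrete state space (for example, whether a map cell is occupied), under the added assumption that the measurements are conditionally independent given the state, and runs Dijkstra's algorithm with the relaxation rule $d[v] = \min(d[v], d[u] + w(u,v))$ on a small weighted graph. The function names, the dictionary-based graph encoding, and all numbers are illustrative; in practice the likelihoods would come from sensor models and the edge weights from a fused map.

```python
import heapq


def bayes_fuse(prior, likelihood_1, likelihood_2):
    """Posterior P(x | z1, z2) over discrete states.

    Assumes z1 and z2 are conditionally independent given x, so
    P(z1, z2 | x) = P(z1 | x) * P(z2 | x). Normalizing by the sum
    plays the role of the evidence P(z1, z2).
    """
    unnormalized = [p * l1 * l2 for p, l1, l2 in zip(prior, likelihood_1, likelihood_2)]
    evidence = sum(unnormalized)
    return [u / evidence for u in unnormalized]


def dijkstra(graph, source):
    """Shortest distances from `source`.

    graph maps every node to a list of (neighbor, nonnegative weight) pairs;
    every neighbor must also appear as a key.
    """
    dist = {node: float("inf") for node in graph}
    dist[source] = 0.0
    heap = [(0.0, source)]
    while heap:
        d_u, u = heapq.heappop(heap)
        if d_u > dist[u]:
            continue  # stale entry; u was already settled with a smaller distance
        for v, w in graph[u]:
            if dist[u] + w < dist[v]:  # relaxation: d[v] = min(d[v], d[u] + w(u, v))
                dist[v] = dist[u] + w
                heapq.heappush(heap, (dist[v], v))
    return dist
```

For example, with a uniform prior over the states (occupied, free) and likelihoods `[0.8, 0.3]` and `[0.6, 0.2]` from two sensors, `bayes_fuse` assigns about 0.89 probability to "occupied", more confident than either sensor alone would suggest.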

In conclusion, the application of sensor fusion technology in intelligent robots is a multifaceted endeavor that requires careful consideration of both hardware and software elements. By designing robust architectures that integrate diverse sensors and efficient fusion algorithms, intelligent robots can achieve enhanced perception, navigation, and control capabilities. The tables and formulas presented here summarize key concepts, from fusion classifications to control system requirements, providing a structured understanding. As technology advances, I anticipate further innovations in sensor fusion, such as deep learning-based fusion methods, which will propel intelligent robots into even more sophisticated roles across industries. This exploration underscores the transformative potential of sensor fusion in making intelligent robots more adaptive, reliable, and intelligent, ultimately driving progress toward fully autonomous systems.