Social and economic development has driven growing demand for intelligent machines that can interact with and augment the human world. The convergence of robotics and artificial intelligence answers this demand and has given rise to the paradigm of embodied intelligence. Its core tenet is that intelligence is not merely a disembodied computational process but is fundamentally shaped by an agent’s physical interactions with its environment. An embodied AI robot, therefore, is not just a machine that “thinks” but one that “perceives, thinks, and acts” within a physical context. It is a natural vessel for embodied intelligence, using its programmability, anthropomorphic potential, and capacity for physical execution to bridge the digital and physical realms. Research in this field promises to address complex societal needs, reshape human-robot collaboration, and drive technological progress in sectors such as healthcare, advanced manufacturing, domestic assistance, and logistics.

This article delves into the conceptual framework, core technological pillars, prevailing challenges, and prospective evolution of the embodied AI robot. We will systematically analyze its architecture, dissect the critical technologies enabling its functionality, confront the significant hurdles impeding its maturation, and propose pathways for future development. The objective is to provide a comprehensive overview that can inform research strategies and contribute to the establishment of a robust industrial and academic ecosystem for embodied intelligence.

Conceptual Overview and Architectural Analogy

At its essence, an embodied AI robot integrates advanced AI capabilities into a physical robotic platform, endowing it with the ability to perceive its surroundings, understand tasks, formulate plans, and execute physical actions. A useful analogy for understanding its architecture is the human neurobiological system. The system can be broadly partitioned into two interdependent segments: a “brain-like” computational core and a “body-like” physical apparatus.

The computational core mirrors higher-order cognitive and regulatory functions:

- The “Cerebrum” (High-Level Cognition & Decision-Making): This corresponds to the central AI processing unit, often powered by large foundation models. It is responsible for task comprehension, strategic planning, abstract reasoning, and interaction with human instructions via natural language. It transforms high-level goals into actionable plans.

- The “Cerebellum” (Motion Coordination & Control): This module focuses on the fine-grained coordination and real-time adjustment of movement. It takes the abstract motion plans from the “cerebrum” and translates them into stable, coordinated, and dynamically balanced trajectories for the robot’s actuators, handling complexities like balance and multi-limb coordination.

- The “Brainstem” (Signal Relay & Basic Functions): This represents the critical low-level interface and communication layer. It manages the routing of sensor data streams to the cognitive modules and transmits refined motor commands from the control modules to the physical actuators, ensuring timely and synchronized operation.

The physical apparatus, or the robot body, constitutes the tangible interface with the world:

- Sensors (Perception): These are the robot’s sensory organs, including but not limited to RGB-D cameras, LiDAR, inertial measurement units (IMUs), tactile sensors, and microphones. They generate multimodal data streams (visual, depth, auditory, haptic) that form the perceptual foundation.

- Actuators (Execution): These are the muscles and joints, such as electric motors, hydraulic pistons, or pneumatic systems. They execute the physical motions dictated by the control system, enabling manipulation, locomotion, and interaction with objects.

The synergistic operation of these components enables the closed perception-cognition-action loop that defines an embodied AI robot. With the advent of powerful large language models (LLMs) and vision-language models (VLMs), these robots are evolving from pre-programmed automata into systems that can “understand” natural language commands, decompose them into sub-tasks, and exhibit remarkable versatility and decision-making autonomy in unstructured environments.
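
The closed perception-cognition-action loop can be illustrated with a deliberately tiny sketch. Everything here is an assumption made for illustration: the one-dimensional `World`, the function names borrowed from the neurobiological analogy, and the gains and limits. It is not an architecture taken from any particular robot.

```python
from dataclasses import dataclass


@dataclass
class World:
    """Toy 1-D world: the robot's position and a fixed goal (illustrative only)."""
    position: float = 0.0
    goal: float = 5.0


def sense(world: World) -> float:
    """Sensors: return the signed distance from the robot to the goal."""
    return world.goal - world.position


def cerebrum(error: float) -> float:
    """High-level decision: request a velocity proportional to the remaining error."""
    return 0.5 * error


def cerebellum(desired_velocity: float, max_speed: float = 1.0) -> float:
    """Motion coordination: clamp the request to what the actuators can deliver."""
    return max(-max_speed, min(max_speed, desired_velocity))


def actuate(world: World, velocity: float, dt: float = 0.1) -> None:
    """Actuators: integrate the commanded velocity over one time step."""
    world.position += velocity * dt


def run(world: World, tolerance: float = 0.01, max_steps: int = 1000) -> int:
    """'Brainstem' role: route data between the modules until the goal is reached."""
    for step in range(max_steps):
        error = sense(world)
        if abs(error) < tolerance:
            return step
        actuate(world, cerebellum(cerebrum(error)))
    return max_steps


if __name__ == "__main__":
    world = World()
    steps = run(world)
    print(f"stopped after {steps} steps at position {world.position:.3f}")
```

Each function stands in for a far richer subsystem. The point of the sketch is only the data flow: sensing feeds decision, decision feeds coordination, and coordination feeds actuation on every tick.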

Core Technological Pillars of the Embodied AI Robot

The realization of a fully functional embodied AI robot rests on the integration and advancement of several interdependent key technologies. The following table summarizes these pillars and their primary objectives:

| Technological Pillar | Primary Objective | Key Enabling Methods & Models |
|---|---|---|
| Multimodal Perception | To construct a comprehensive, unified, and actionable representation of the environment from heterogeneous sensor data. | Sensor Fusion (Kalman/Particle Filters), Transformer-based VLMs (PaLM-E, Florence), 3D Scene Understanding |
| Autonomous Decision & Learning | To generate context-aware, goal-oriented action sequences and policies through learning from interaction and instruction. | Reinforcement Learning (PPO, DDPG), LLM-based Planning, Imitation Learning, World Models |
| Motion Control & Planning | To compute and execute physically feasible, efficient, and safe trajectories for the robot’s body and manipulators. | Path Planning (A*, RRT*, PRM), Kinematic/Dynamic Control, MPC, Imitation Learning |
| Natural Human-Robot Interaction (HRI) | To enable seamless, intuitive, and empathetic communication and collaboration between humans and robots. | Natural Language Processing (ChatGPT, LLaMA), Affective Computing, Gesture Recognition, Voice Synthesis |

1. Multimodal Perception Technology

An embodied AI robot operates in a rich, multisensory world. Multimodal perception technology is tasked with fusing data from disparate sources—cameras, depth sensors, LiDAR, microphones, and tactile arrays—into a coherent environmental model. This involves low-level signal processing, feature extraction, and high-level semantic understanding. Modern approaches heavily leverage transformer-based architectures pre-trained on vast multimodal corpora. For instance, models like Google’s PaLM-E demonstrate “embodiment” by integrating language and visual data to guide robotic tasks directly. The core challenge in fusion can be mathematically framed as estimating a unified state $\mathbf{x}_t$ from multiple noisy observations $\mathbf{z}_t^{(i)}$ from different sensors $i$. A simplified form of a Kalman filter update step for sensor fusion is:

$$ \hat{\mathbf{x}}_t = \hat{\mathbf{x}}_{t|t-1} + \mathbf{K}_t \left( \mathbf{z}_t - \mathbf{H} \hat{\mathbf{x}}_{t|t-1} \right) $$

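where $\hat{\mathbf{x}}_{t|t-1}$ is the predicted state, $\mathbf{z}_t$ is the observation being fused (one sensor’s $\mathbf{z}_t^{(i)}$, or all of them stacked into a single vector), $\mathbf{K}_t$ is the Kalman gain, and $\mathbf{H}$ is the corresponding observation model. Advanced methods use deep neural networks to learn these fusion dynamics directly from data.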
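
As a minimal sketch of the update step above, the scalar case makes the role of the gain easy to see. The example assumes two hypothetical range sensors that both observe the same scalar state directly (so the observation model is 1), with made-up noise variances. The sensors are fused by applying the update once per measurement.

```python
def kalman_update(x_pred, p_pred, z, r, h=1.0):
    """One scalar Kalman measurement update.

    x_pred, p_pred: predicted state and its variance
    z, r:           measurement and its noise variance
    h:              scalar observation model (z = h * x + noise)
    """
    k = p_pred * h / (h * p_pred * h + r)   # Kalman gain
    x = x_pred + k * (z - h * x_pred)       # correct the prediction with the innovation
    p = (1.0 - k * h) * p_pred              # updated (reduced) variance
    return x, p


def fuse(x_pred, p_pred, measurements):
    """Fuse several sensors by applying the update once per (z, r) pair."""
    x, p = x_pred, p_pred
    for z, r in measurements:
        x, p = kalman_update(x, p, z, r)
    return x, p


if __name__ == "__main__":
    # Assumed numbers: a precise sensor (variance 0.04) and a noisier one (variance 0.25).
    x, p = fuse(x_pred=2.0, p_pred=1.0, measurements=[(2.4, 0.04), (2.1, 0.25)])
    print(f"fused estimate {x:.4f}, variance {p:.4f}")
```

With these assumed values the fused estimate lands near 2.347 with variance near 0.033. That is closer to the precise sensor's reading, and the variance is lower than either sensor's alone, which is the behaviour the gain is designed to produce.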

2. Autonomous Decision and Learning Technology

This is the cognitive core of the embodied AI robot. The decision-making system must contextualize perceptual inputs within the framework of a given task or instruction and generate a sequence of actions. A standard formulation is the Markov Decision Process (MDP) defined by the tuple $(\mathcal{S}, \mathcal{A}, \mathcal{P}, \mathcal{R}, \gamma)$, where:

- $\mathcal{S}$ is the state space (from perception).

- $\mathcal{A}$ is the action space (available motions).

- $\mathcal{P}(s_{t+1} | s_t, a_t)$ is the state transition dynamics.

- $\mathcal{R}(s_t, a_t)$ is the reward function encoding the task goal.

- $\gamma$ is a discount factor.

The goal is to learn a policy $\pi(a|s)$ that maximizes the expected cumulative reward: $$ J(\pi) = \mathbb{E}_{\pi}\left[ \sum_{t=0}^{\infty} \gamma^t \mathcal{R}(s_t, a_t) \right] $$
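
To make the objective concrete, the sketch below computes the discounted return of a single finite episode and then averages over episodes as a Monte Carlo estimate of $J(\pi)$. The reward sequences are invented for illustration, and truncating the infinite sum at the end of each episode is an assumption of the example.

```python
def discounted_return(rewards, gamma):
    """Sum of gamma**t * r_t over one episode, accumulated backwards for efficiency."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g


def estimate_objective(episodes, gamma):
    """Monte Carlo estimate of J(pi): the mean discounted return over sampled episodes."""
    returns = [discounted_return(rewards, gamma) for rewards in episodes]
    return sum(returns) / len(returns)


if __name__ == "__main__":
    # A reward of 1 received two steps in the future is worth 0.9**2 = 0.81 now.
    print(discounted_return([0.0, 0.0, 1.0], gamma=0.9))
    print(estimate_objective([[0.0, 0.0, 1.0], [1.0, 1.0, 1.0]], gamma=0.9))
```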

Reinforcement learning (RL) algorithms like Proximal Policy Optimization (PPO) directly optimize this objective through trial and error. A pivotal recent trend is leveraging the commonsense reasoning and planning capabilities of Large Language Models (LLMs). Here, the LLM acts as a high-level planner, decomposing a natural language instruction (“Make me a cup of coffee”) into a symbolic action sequence (“1. Find kettle, 2. Fill with water, 3. …”), which is then translated into low-level robot commands by a separate system.
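
The division of labour between a high-level planner and a separate low-level system can be sketched as follows. No real LLM is called: `stub_planner` is a hypothetical stand-in that returns a fixed plan, and the skill names and command strings are invented for illustration. Only the two plan steps named in the text are included, since the remainder of the plan is elided there.

```python
from typing import Callable, Dict, List


def stub_planner(instruction: str) -> List[str]:
    """Hypothetical stand-in for an LLM call: maps an instruction to symbolic steps."""
    plans = {
        "make me a cup of coffee": ["find kettle", "fill with water"],
    }
    return plans.get(instruction.strip().lower(), [])


# Hypothetical skill library: each symbolic step is grounded in a low-level command.
SKILLS: Dict[str, Callable[[], str]] = {
    "find kettle": lambda: "navigate_to(kettle)",
    "fill with water": lambda: "grasp(kettle); move_to(tap); open(tap)",
}


def execute(instruction: str,
            planner: Callable[[str], List[str]] = stub_planner,
            skills: Dict[str, Callable[[], str]] = SKILLS) -> List[str]:
    """Translate a plan into low-level commands, refusing steps with no grounded skill."""
    commands = []
    for step in planner(instruction):
        if step not in skills:
            raise KeyError(f"no skill grounded for step: {step!r}")
        commands.append(skills[step]())
    return commands


if __name__ == "__main__":
    for command in execute("Make me a cup of coffee"):
        print(command)
```

Rejecting any step that lacks a matching skill is one simple way to keep a language-level plan tied to what the robot can physically do. Real systems use richer feasibility checks.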

3. Motion Control and Planning Technology

This pillar translates abstract decisions into safe and precise physical motion. It is typically hierarchical. Motion Planning finds a collision-free path from the start to the goal configuration in the robot’s configuration space $\mathcal{C}$. Sampling-based algorithms such as the Rapidly-exploring Random Tree (RRT) and its asymptotically optimal variant RRT* search this space probabilistically. Each iteration of the basic tree extension is:

$$ q_{\text{rand}} \leftarrow \text{RandomSample}(\mathcal{C}) $$

$$ q_{\text{near}} \leftarrow \text{NearestNeighbor}(T, q_{\text{rand}}) $$

$$ q_{\text{new}} \leftarrow \text{Steer}(q_{\text{near}}, q_{\text{rand}}) $$

If the path from $q_{\text{near}}$ to $q_{\text{new}}$ is collision-free, it is added to the tree $T$. Motion Control then ensures the robot’s actuators follow the planned trajectory $q_d(t)$ accurately. For a simple robotic joint, a PID controller computes the torque $\tau$:

$$ \tau(t) = K_p e(t) + K_i \int_0^t e(s)\, ds + K_d \frac{de(t)}{dt} $$

where $e(t) = q_d(t) - q(t)$ is the tracking error. For complex whole-body control, Model Predictive Control (MPC) or learned policies are employed to handle dynamics and constraints.
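
Both levels of the hierarchy described in this section can be sketched in a few lines. The planner below is the basic sample, nearest-neighbour, steer loop in a 2-D configuration space, without the rewiring step that RRT* adds. The controller is a discrete-time version of the PID law. The goal-bias heuristic, the disc-shaped obstacle check, and all numeric defaults are assumptions chosen for illustration.

```python
import math
import random


def segment_clear(a, b, center, radius, samples=10):
    """Coarse collision check: test evenly spaced points on segment a-b against one disc."""
    for k in range(samples + 1):
        t = k / samples
        p = (a[0] + t * (b[0] - a[0]), a[1] + t * (b[1] - a[1]))
        if math.dist(p, center) <= radius:
            return False
    return True


def rrt(start, goal, is_free, bounds, step=0.5, goal_bias=0.1, tol=0.5,
        max_iters=2000, rng=None):
    """Basic RRT in 2-D. Returns a list of points from start to near goal, or None."""
    rng = rng or random.Random(0)
    nodes, parent = [start], {0: None}
    (xmin, xmax), (ymin, ymax) = bounds
    for _ in range(max_iters):
        # RandomSample: occasionally sample the goal itself to pull the tree toward it.
        if rng.random() < goal_bias:
            q_rand = goal
        else:
            q_rand = (rng.uniform(xmin, xmax), rng.uniform(ymin, ymax))
        # NearestNeighbor: linear scan over the tree (fine for small trees).
        i_near = min(range(len(nodes)), key=lambda i: math.dist(nodes[i], q_rand))
        q_near = nodes[i_near]
        # Steer: move at most `step` from q_near toward q_rand.
        d = math.dist(q_near, q_rand)
        if d == 0.0:
            continue
        t = min(1.0, step / d)
        q_new = (q_near[0] + t * (q_rand[0] - q_near[0]),
                 q_near[1] + t * (q_rand[1] - q_near[1]))
        if not is_free(q_near, q_new):
            continue
        nodes.append(q_new)
        parent[len(nodes) - 1] = i_near
        if math.dist(q_new, goal) <= tol:
            path, i = [], len(nodes) - 1
            while i is not None:      # walk parent links back to the root
                path.append(nodes[i])
                i = parent[i]
            return path[::-1]
    return None


class PID:
    """Discrete PID controller: tau = Kp*e + Ki*integral(e) + Kd*de/dt."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def torque(self, q_desired, q, dt):
        e = q_desired - q
        self.integral += e * dt                       # rectangle-rule integral
        de = 0.0 if self.prev_error is None else (e - self.prev_error) / dt
        self.prev_error = e
        return self.kp * e + self.ki * self.integral + self.kd * de


if __name__ == "__main__":
    free = lambda a, b: segment_clear(a, b, center=(5.0, 5.0), radius=1.5)
    path = rrt((1.0, 1.0), (9.0, 9.0), free, bounds=((0.0, 10.0), (0.0, 10.0)))
    print("waypoints:", None if path is None else len(path))
    print("first torque:", PID(2.0, 0.5, 0.1).torque(q_desired=1.0, q=0.0, dt=0.1))
```

In a full system the waypoints returned by the planner would be time-parameterized into $q_d(t)$ and tracked joint by joint. The linear nearest-neighbour scan and the point-sampled collision check are the first things to replace at realistic scale.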

4. Natural Human-Robot Interaction (HRI) Technology

For an embodied AI robot to be truly useful and accepted, interaction must be intuitive. Modern HRI leverages the breakthroughs in generative AI. Systems like Microsoft’s integration of ChatGPT can convert conversational language into executable robot code. More advanced frameworks, such as LM-Nav, combine LLMs, VLMs, and navigation models to follow natural language instructions like “Go to the wooden desk near the blue chair.” The underlying process often involves generating a probability distribution over possible actions or responses given the interaction history $h$ and current context $c$. For an LLM-based dialog manager, this is:

$$ P(a | h, c) = \text{Softmax}(\text{LLM}([h; c])) $$

where $a$ is the next action or utterance. Furthermore, affective computing, as seen in models like Hume AI’s EVI, aims to recognize user emotion from vocal tone and facial expression, enabling empathetic responses and building trust—a crucial aspect for collaborative and assistive embodied AI robot applications.
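
The softmax step in the expression for $P(a | h, c)$ can be sketched independently of any particular model. In the example below, `stub_score` is a hypothetical stand-in for the LLM's scoring of a candidate action (it merely counts shared words), and the candidate actions are invented. Only the normalization into a probability distribution reflects the formula.

```python
import math
from typing import Callable, Dict, List


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def stub_score(history: List[str], context: str, action: str) -> float:
    """Hypothetical scorer: number of action words that appear in the history or context."""
    seen = set(" ".join(history + [context]).lower().split())
    return float(sum(word in seen for word in action.lower().split()))


def action_distribution(score_fn: Callable[[List[str], str, str], float],
                        history: List[str], context: str,
                        actions: List[str]) -> Dict[str, float]:
    """P(a | h, c) over a fixed candidate set, obtained by softmax over the scores."""
    scores = [score_fn(history, context, a) for a in actions]
    return dict(zip(actions, softmax(scores)))


if __name__ == "__main__":
    dist = action_distribution(
        stub_score,
        history=[],
        context="Go to the wooden desk near the blue chair",
        actions=["navigate to desk", "pick up cup", "ask for clarification"],
    )
    for action, prob in dist.items():
        print(f"{prob:.3f}  {action}")
```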

Critical Challenges and Research Frontiers

Despite remarkable progress, the development of sophisticated embodied AI robot systems faces profound technical and ethical hurdles. The table below outlines these challenges and associated research directions.

| Challenge Category | Specific Technical Hurdles | Potential Research Directions |
|---|---|---|
| Perception & Understanding | Sensor noise in dynamic environments; Semantic gap in 3D scene parsing; Real-time fusion of heterogeneous data streams. | Neuromorphic sensors; Self-supervised learning for 3D representation; Efficient transformer variants for edge computing. |
| Decision & Learning | Sample inefficiency of RL; Catastrophic forgetting; Poor sim-to-real transfer; Lack of common sense in LLM plans. | World models and offline RL; Modular and compositional learning; Advanced domain randomization; Grounding LLMs in physical cause-effect. |
| Control & Planning | High-dimensional, non-linear dynamics; Real-time planning in cluttered spaces; Guaranteeing safety and stability. | Hybrid learning/model-based control; Reactive and predictive safety filters; Formal verification of control policies. |
| Natural Interaction | Understanding implicit intent and non-verbal cues; Generating expressive and context-aware robot behavior; Long-term personalization. | Multimodal dialog models; Theory of mind modeling for robots; Lifelong adaptation of interaction policies. |
| Ethics & Safety | Data privacy and security; Algorithmic bias and fairness; Accountability for autonomous actions; Human psychological safety. | Federated learning for robot training; Algorithmic auditing frameworks; Legal and technical frameworks for liability; Trust-calibrating interfaces. |

Technical Bottlenecks in Depth

1. Environmental Perception & Understanding: The accuracy of sensors like Time-of-Flight (ToF) cameras degrades under conditions of strong ambient light, specular reflections, or occlusion, with errors potentially reaching several centimeters. Fusing this noisy, asynchronous data from LiDAR, cameras, and IMUs in real-time remains computationally intensive. While deep learning models offer superior performance, their high computational cost ($O(n^2)$ attention complexity in standard Transformers) challenges deployment on resource-constrained embodied AI robot platforms.
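
A quick back-of-the-envelope calculation shows why the quadratic attention cost matters on embedded hardware. The sketch assumes the attention score matrices are fully materialized, 16 heads, and 2-byte (half-precision) values. All of these are illustrative assumptions, and memory-efficient attention implementations avoid storing the full matrix.

```python
def attention_score_bytes(seq_len: int, num_heads: int, bytes_per_value: int = 2) -> int:
    """Memory for one layer's attention scores if every n x n matrix is stored."""
    return seq_len * seq_len * num_heads * bytes_per_value


if __name__ == "__main__":
    for n in (1024, 2048, 4096):
        mib = attention_score_bytes(n, num_heads=16) / 2**20
        print(f"n={n:5d}  scores per layer: {mib:7.1f} MiB")
```

Under these assumptions the per-layer figure grows from 32 MiB at 1024 tokens to 512 MiB at 4096 tokens: doubling the sequence length quadruples the cost.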

2. Performance Limits in Autonomy: Deep reinforcement learning algorithms often require millions of interaction steps to converge, which is prohibitively expensive and time-consuming on physical hardware. Training in simulation instead, even for weeks, often yields policies that fail to transfer to reality because of the “reality gap”, the discrepancy between simulated and real physics. The sample inefficiency stems in part from having to learn a value function $V^\pi(s)$ or policy $\pi(a|s)$ from high-variance trajectory returns, which often calls for variance-reduction techniques such as baselines or advantage estimation.

3. Complexity of Motion Control: The computational complexity of optimal motion planning grows exponentially with the degrees of freedom (DOF) of the robot and the complexity of the environment. Controlling a high-DOF humanoid embodied AI robot for dynamic tasks like walking or running involves solving complex, under-actuated, and non-linear dynamics equations in real-time:

$$ \mathbf{M}(\mathbf{q})\ddot{\mathbf{q}} + \mathbf{C}(\mathbf{q}, \dot{\mathbf{q}}) + \mathbf{g}(\mathbf{q}) = \boldsymbol{\tau} $$

where $\mathbf{M}$ is the inertia matrix, $\mathbf{C}$ captures Coriolis and centrifugal forces, $\mathbf{g}$ is gravity, and $\boldsymbol{\tau}$ are the joint torques. Ensuring sub-millimeter precision for manipulation amidst sensor and actuator noise is a persistent challenge.
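
The simplest instance of the dynamics equation above is a single revolute joint carrying a point mass on a massless link. The inertia is then a constant, the Coriolis and centrifugal term vanishes, and gravity contributes a sine term. The sketch below evaluates the required torque and simulates a gravity-compensated PD controller. The mass, length, gains, and time step are assumed values, and the joint angle is measured from the downward vertical.

```python
import math

MASS, LENGTH, GRAVITY = 1.0, 0.5, 9.81   # assumed: kg, m, m/s^2


def inertia(q: float) -> float:
    """M(q): constant m * l**2 for a point mass on a massless link."""
    return MASS * LENGTH ** 2


def gravity_torque(q: float) -> float:
    """g(q): gravity torque, with q measured from the downward vertical."""
    return MASS * GRAVITY * LENGTH * math.sin(q)


def inverse_dynamics(q: float, q_dot: float, q_ddot: float) -> float:
    """tau = M(q) * q_ddot + C(q, q_dot) + g(q); C is zero for a single joint."""
    return inertia(q) * q_ddot + gravity_torque(q)


def simulate(q_target: float, kp: float = 25.0, kd: float = 10.0,
             dt: float = 0.001, duration: float = 5.0) -> float:
    """PD control with gravity compensation, integrated with semi-implicit Euler."""
    q, q_dot = 0.0, 0.0
    for _ in range(int(duration / dt)):
        tau = gravity_torque(q) + kp * (q_target - q) - kd * q_dot
        q_ddot = (tau - gravity_torque(q)) / inertia(q)   # forward dynamics
        q_dot += q_ddot * dt
        q += q_dot * dt
    return q


if __name__ == "__main__":
    print(f"holding torque at 90 degrees: {inverse_dynamics(math.pi / 2, 0.0, 0.0):.3f} N*m")
    print(f"final angle for a 1.0 rad target: {simulate(1.0):.4f} rad")
```

A humanoid replaces these scalars with configuration-dependent matrices coupling dozens of joints, and gravity compensation is only as good as the model. That is where the real-time difficulty described above comes from.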

4. The “Naturalness” Barrier in HRI: While LLMs excel at syntax, they struggle with true semantic understanding and grounding, especially with prosody, sarcasm, or context-dependent instructions. For an embodied AI robot, the instruction “Can you give me a hand?” could be literal or figurative. Affective recognition from facial expressions or voice is highly sensitive to lighting, cultural differences in expression, and individual idiosyncrasies, making robust generalization difficult.

5. The Looming Specter of Ethical Risks: An embodied AI robot continuously collects sensitive visual, auditory, and spatial data, raising major privacy concerns. Encryption standards (AES-256, etc.) protect data at rest and in transit but cannot fully eliminate risks of side-channel attacks or physical tampering. Furthermore, an autonomous system making decisions in human spaces must be designed with embedded ethical guardrails to avoid biased or harmful outcomes, necessitating interdisciplinary collaboration between engineers, ethicists, and legal scholars to define frameworks for accountability and safety.

Future Trajectories and Strategic Recommendations

The future evolution of the embodied AI robot will be characterized by deeper convergence across disciplines and technology stacks. The following trends are anticipated to shape the next generation of systems.

| Trend Dimension | Expected Development | Potential Impact |
|---|---|---|
| Design & Morphology | Bio-inspired designs; Soft robotics; Modular and reconfigurable bodies; Advanced actuator materials (e.g., artificial muscles). | Enhanced adaptability, safety in human spaces, and efficiency in diverse environments (underwater, rough terrain). |
| AI & Computing Fusion | Neuro-symbolic AI integration; Foundation models specifically for embodiment; Widespread edge-cloud hybrid computing. | Robots with robust reasoning, faster adaptation to novel tasks, and more efficient real-time processing. |
| Learning Paradigms | Large-scale embodied pre-training in simulation; Social and cultural learning from human interaction; Lifelong learning. | Dramatic reduction in deployment time for new skills; More socially intelligent and personalized robots. |
| Ecosystem & Application | Open platforms and standardized interfaces; Proliferation in personalized healthcare, education, and custom manufacturing. | Accelerated innovation through shared resources; Democratization of robot development; New service-based economies. |

To effectively navigate these trajectories and solidify leadership in the field, a multi-pronged strategic approach is recommended:

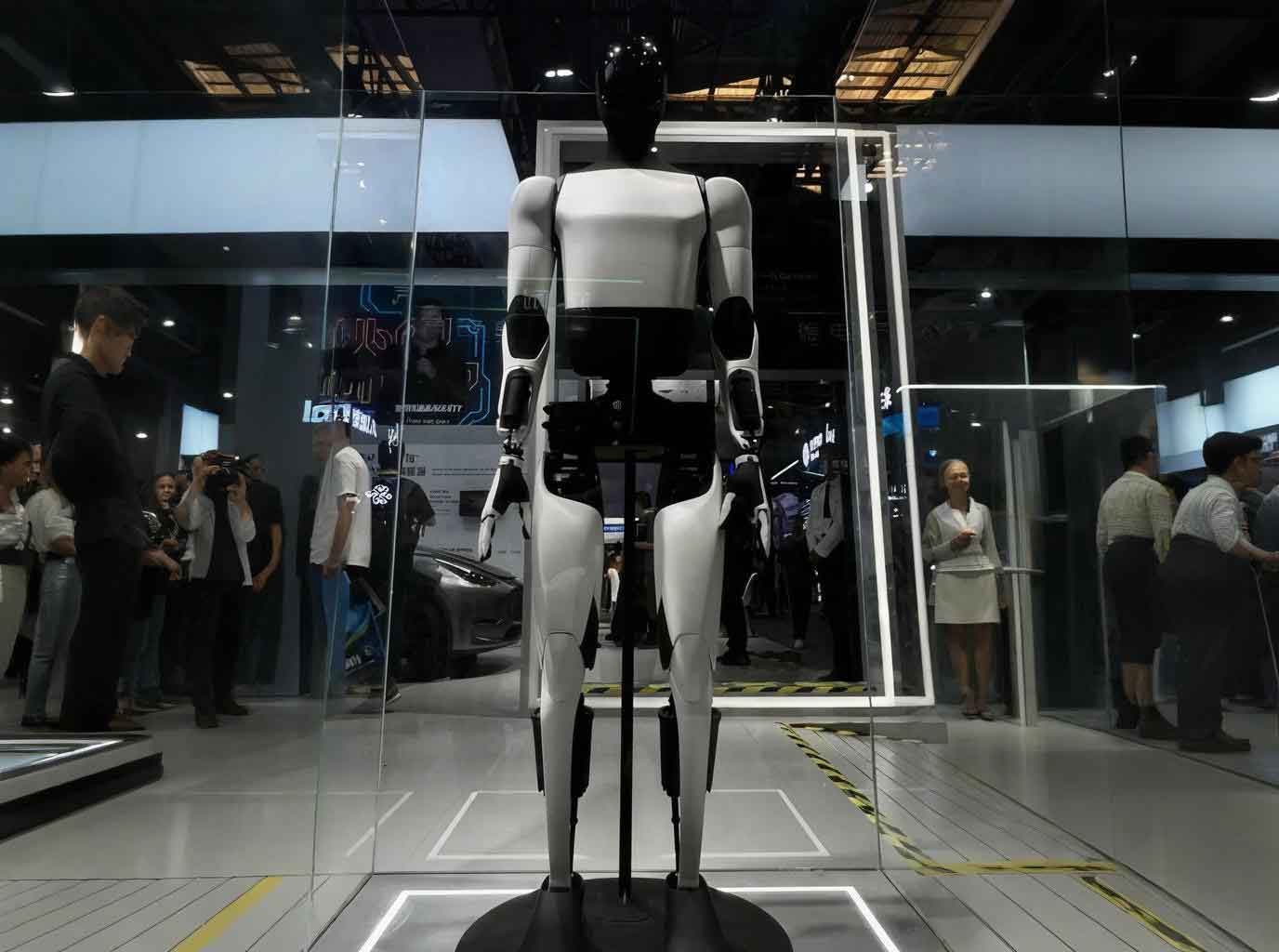

1. Fostering Cross-Disciplinary Innovation in Design: Investment should be directed toward foundational research in bio-inspired mechanics and novel materials. The humanoid form factor, being a natural fit for human-centric environments, warrants focused efforts to overcome its unique challenges in balance and dexterity, making it the premier platform for general-purpose embodied AI robot research.

2. Deepening the AI-Robotics Integration: Research must focus on creating “embodiment-aware” foundation models. These models would be pre-trained not just on text and images, but on physics simulations, robotic sensorimotor data, and action sequences. Furthermore, optimizing these models for deployment on edge devices within the embodied AI robot itself is crucial for real-time responsiveness and privacy.

3. Cultivating a New Generation of Researchers: Academic programs must break down silos between computer science, robotics, mechanical/electrical engineering, cognitive science, and ethics. Hands-on curriculum involving real embodied AI robot platforms is essential to translate theoretical knowledge into practical engineering intuition.

4. Building Collaborative, Open Ecosystems: Progress will be accelerated by shared simulation platforms and benchmarks (such as Meta’s Habitat or NVIDIA’s Isaac Sim), standardized robot middleware (ROS 2), and openly published hardware designs. Governments can play a pivotal role by funding pre-competitive research consortia and creating regulatory sandboxes to safely test and deploy advanced embodied AI robot applications in public domains like smart cities, assisted living, and precision agriculture.

Conclusion

The embodied AI robot stands at the frontier of a transformative shift in artificial intelligence, one where intelligence is inextricably linked to physical presence and interaction. This article has outlined its conceptual architecture, dissected the core technological pillars—multimodal perception, autonomous learning, motion control, and natural interaction—and confronted the significant technical and ethical challenges that lie ahead. The future of this field is undeniably promising, poised to unlock new levels of automation, assistance, and collaboration. However, realizing this potential requires sustained, coordinated efforts in fundamental research, cross-disciplinary education, and the careful construction of ethical and industrial frameworks. By addressing the current limitations and strategically pursuing the outlined trajectories, we can steer the development of embodied AI robot technology toward a future that is not only technologically advanced but also robust, safe, and profoundly beneficial to society.