The convergence of Artificial Intelligence (AI) and robotics is heralding a new era of autonomous agents. Among these, embodied AI robots represent a paradigm shift, distinguished by their fundamental reliance on a physical form to perceive, interact with, and learn from the real world. This deep integration, often termed “AI+”, is not merely an upgrade but a redefinition of robotic capabilities. Moving beyond pre-programmed sequences executed in structured environments, embodied AI robots leverage advanced AI to achieve a “perception-cognition-decision-execution” closed loop. This article, from my perspective as an observer and analyst of this field, systematically explores the conceptual evolution, technological core, practical applications, and future trajectory of embodied AI robots empowered by the “AI+” wave.

The journey from simple automata to intelligent entities began with symbolic AI and connectionism, but these approaches often treated intelligence as a disembodied process. The concept of embodied intelligence emerged as a corrective, positing that true intelligence arises from the dynamic interaction between an agent’s body, its sensors/actuators, and the environment. An embodied AI robot is the engineering manifestation of this philosophy. It is a physical system that acquires information about its surroundings through multimodal sensors, processes and understands this data using cognitive models, makes informed decisions, and finally, executes physical actions that alter its environment, thereby generating new sensory feedback for continuous learning.
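The loop described above can be illustrated with a deliberately tiny sketch. Everything in it is hypothetical and chosen for illustration only: a one-dimensional `World`, a perfect sensor, and a "move halfway" decision rule. It shows the structure of the perception-cognition-decision-execution cycle, not how any real robot implements it.

```python
from dataclasses import dataclass


@dataclass
class World:
    """Toy 1-D environment (hypothetical): a robot and a target on a line."""
    robot_pos: float
    target_pos: float


def perceive(world: World) -> float:
    # Perception: sense the signed offset to the target (assumed noise-free).
    return world.target_pos - world.robot_pos


def understand(offset: float, tolerance: float) -> str:
    # Cognition: turn the raw reading into a symbolic belief.
    return "at_target" if abs(offset) < tolerance else "off_target"


def decide(offset: float, belief: str) -> float:
    # Decision: stay put, or step halfway toward the target.
    return 0.0 if belief == "at_target" else 0.5 * offset


def execute(world: World, action: float) -> None:
    # Execution: acting changes the world, which changes the next reading.
    world.robot_pos += action


def run_loop(world: World, tolerance: float = 0.05, max_steps: int = 50) -> int:
    """Run the closed loop; return the number of actions taken."""
    for step in range(max_steps):
        offset = perceive(world)
        belief = understand(offset, tolerance)
        if belief == "at_target":
            return step
        execute(world, decide(offset, belief))
    return max_steps


world = World(robot_pos=0.0, target_pos=1.0)
steps = run_loop(world)
```

The point of the sketch is that each action produces the next observation, so the four stages form a cycle rather than a pipeline.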

The “AI+” initiative accelerates this development by injecting powerful capabilities into every layer of the robotic stack. To understand the transformative impact, it is useful to contrast an embodied AI robot with other forms of AI-empowered robotics. The distinction lies in the necessity of deep physical interaction and closed-loop learning.

| Feature | Traditional Robot | AI+ Robot (General) | Embodied AI Robot (Subset of AI+ Robot) |

|---|---|---|---|

| Core Driver | Programmed Control | AI Algorithm-Driven | AI Algorithm-Driven |

| Intelligence Level | Low (Executes preset tasks) | Medium-High (Has perception/decision skills) | High (Emphasizes perception-cognition-decision-execution loop) |

| Interaction Depth | Shallow (Limited environmental interaction) | Variable (Depends on application) | Deep (Active interaction & feedback learning via physical body) |

| Physical Embodiment | Required, but passive | May or may not be central | Essential and central to intelligence |

| Learning Capability | None or Weak | Present (Data/Model-based) | Strong (Emphasizes continuous learning from environmental interaction) |

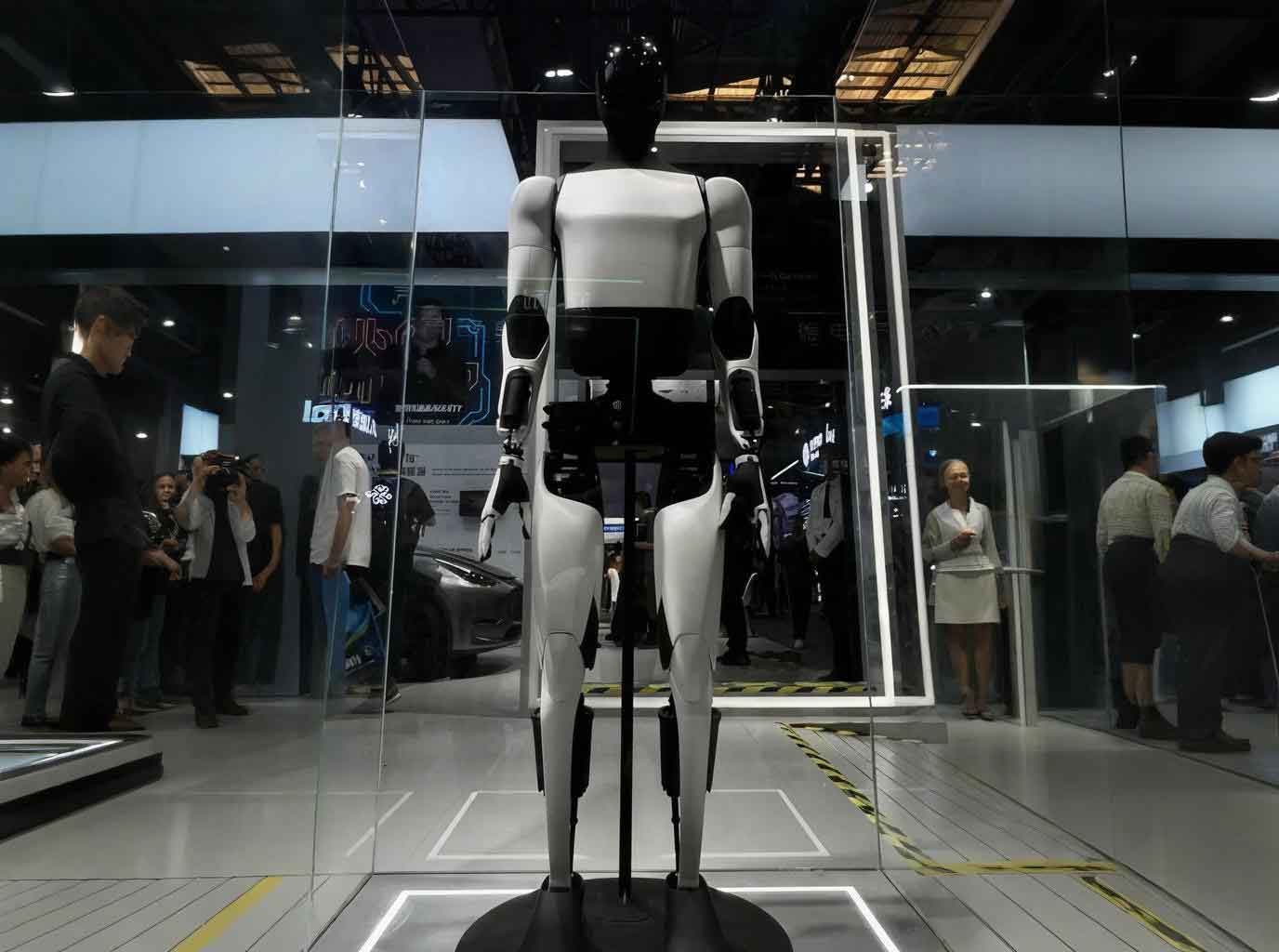

| Typical Example | Industrial Arm (Basic functions) | Smart Vacuum, Chatbot | Humanoid Robot, Advanced Surgical Robot |

The “AI+” Technological Stack for Embodied AI Robots

The empowerment of an embodied AI robot by “AI+” can be deconstructed into a four-layer technological stack that forms a synergistic closed loop. This stack integrates data generation, multimodal perception, cognitive planning, and adaptive control.

1. Multimodal Perception and Scene Understanding

For an embodied AI robot, perception is the gateway to understanding. It goes beyond simple object detection to constructing a rich, semantically annotated model of the world. This is achieved through multimodal fusion and advanced scene modeling.

a) Multimodal Fusion with Foundation Models: Vision-Language Models (VLMs) such as CLIP and GPT-4V let the robot associate visual scenes with linguistic concepts, enabling open-vocabulary recognition and task understanding. For instance, a robot can use a VLM to interpret the command “hand me the metallic tool next to the red cup” by jointly processing visual input and language. In contrastive models such as CLIP, this amounts to learning a joint embedding space:

$$ E_{image} = f_{\theta}(I), \quad E_{text} = g_{\phi}(T) $$

where $f_{\theta}$ and $g_{\phi}$ are the image and text encoders, and the parameters $\theta$ and $\phi$ are trained so that the similarity $sim(E_{image}, E_{text})$ (typically cosine similarity) is high for corresponding image-text pairs and low for mismatched ones. An embodied AI robot uses this to ground language instructions in its visual perception.
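As a minimal sketch of this grounding step, the snippet below ranks candidate image regions against an instruction by cosine similarity. The three-dimensional vectors are invented stand-ins for the encoder outputs $E_{image}$ and $E_{text}$; no real model is called, and the function names are hypothetical.

```python
import math


def cosine_similarity(u, v):
    """sim(u, v) = u.v / (|u| |v|)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def ground_instruction(text_embedding, region_embeddings):
    """Return the region whose embedding is most similar to the text."""
    scores = {
        name: cosine_similarity(text_embedding, emb)
        for name, emb in region_embeddings.items()
    }
    best = max(scores, key=scores.get)
    return best, scores


# Made-up embeddings standing in for f_theta(image crop) per detected region.
region_embeddings = {
    "red cup": [0.9, 0.1, 0.0],
    "metallic wrench": [0.1, 0.9, 0.2],
    "sponge": [0.0, 0.2, 0.9],
}
# Made-up embedding standing in for g_phi("the metallic tool").
text_embedding = [0.2, 0.8, 0.1]

best, scores = ground_instruction(text_embedding, region_embeddings)
```

In a real system the embeddings would have hundreds of dimensions and come from a trained encoder pair; the ranking logic stays the same.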

b) 3D Scene Reconstruction and Semantic Mapping: Navigation and manipulation require 3D spatial understanding. Modern techniques combine geometric mapping with semantic labels. Traditional SLAM (Simultaneous Localization and Mapping) estimates the joint posterior over the robot’s trajectory $x_{1:t}$ and the map $m$, given sensor observations $z_{1:t}$ and controls $u_{1:t}$:

$$ P(x_{1:t}, m | z_{1:t}, u_{1:t}) $$

Semantic SLAM extends this by attaching semantic class labels $l_i$ to map elements (e.g., points, voxels). A more recent approach uses 3D Gaussian Splatting, which represents a scene as a set of anisotropic 3D Gaussians with attributes such as color and opacity and, in semantic extensions, per-Gaussian feature vectors. This supports real-time, photorealistic rendering and efficient querying of semantic information in 3D space, which makes it a well-suited environment model for an embodied AI robot.
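To make the idea of attaching labels $l_i$ to map elements concrete, here is a small sketch of a voxel map with majority-vote labels. It assumes the points are already expressed in the world frame (that is, the pose estimation part of SLAM has been done elsewhere), and the class name `SemanticVoxelMap` and its methods are hypothetical.

```python
import math
from collections import Counter, defaultdict


class SemanticVoxelMap:
    """Voxel grid that stores a vote count per semantic label (illustrative)."""

    def __init__(self, voxel_size=0.1):
        self.voxel_size = voxel_size
        self.votes = defaultdict(Counter)

    def _key(self, point):
        # Map a 3-D point (metres, world frame) to an integer voxel index.
        return tuple(int(math.floor(c / self.voxel_size)) for c in point)

    def integrate(self, point, label):
        # Each labelled observation casts one vote for its voxel.
        self.votes[self._key(point)][label] += 1

    def label_at(self, point):
        # Majority label of the voxel containing the point, or None if unseen.
        counts = self.votes.get(self._key(point))
        return counts.most_common(1)[0][0] if counts else None

    def find(self, label):
        # All voxel indices whose majority label matches the query.
        return sorted(
            key for key, counts in self.votes.items()
            if counts.most_common(1)[0][0] == label
        )


semantic_map = SemanticVoxelMap(voxel_size=0.1)
semantic_map.integrate((0.52, 0.31, 0.75), "cup")
semantic_map.integrate((0.58, 0.33, 0.71), "cup")
semantic_map.integrate((0.51, 0.39, 0.79), "table")  # a noisy label
semantic_map.integrate((1.25, 0.05, 0.02), "table")
```

Voting per voxel is one simple way to absorb occasional mislabelled observations; probabilistic fusion of class scores is a common alternative.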

2. Cognitive Planning and Decision-Making

This layer acts as the “brain” of the embodied AI robot, translating high-level goals and perceptual understanding into actionable plans. Large Language Models (LLMs) and World Models are key enablers.

a) LLM-based Task Decomposition and Planning: LLMs excel at breaking down abstract instructions into sequences of sub-tasks. Given a command like “clean up the spilled coffee,” an LLM can generate a plan: 1) Locate spill, 2) Navigate to kitchen, 3) Find sponge, 4) Pick up sponge, 5) Navigate to spill, 6) Wipe spill. For an embodied AI robot, this plan must be grounded in its specific capabilities and environment. This is often done by mapping sub-tasks to predefined skill primitives or by using the LLM to generate code for control.
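The grounding step can be sketched as a lookup from plan steps to skill primitives. In the snippet below the plan is hard-coded to stand in for LLM output (no model is called), and the skill names and the `ground_plan` helper are hypothetical. The useful property is that a step with no matching primitive is rejected before anything is executed.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, str]  # (skill name, argument)
Skill = Callable[[str, List[str]], None]


def make_skill(verb: str) -> Skill:
    # Stand-in for a real controller: it only records what it would do.
    def skill(target: str, log: List[str]) -> None:
        log.append(f"{verb} {target}")
    return skill


# Hypothetical skill library available on this particular robot.
SKILLS: Dict[str, Skill] = {
    name: make_skill(name)
    for name in ("locate", "navigate_to", "pick_up", "wipe")
}

# Hard-coded stand-in for the plan an LLM might return for
# "clean up the spilled coffee".
LLM_PLAN: List[Step] = [
    ("locate", "spill"),
    ("navigate_to", "kitchen"),
    ("locate", "sponge"),
    ("pick_up", "sponge"),
    ("navigate_to", "spill"),
    ("wipe", "spill"),
]


def ground_plan(plan: List[Step], skills: Dict[str, Skill]):
    """Bind each step to a skill primitive, or fail if none exists."""
    grounded = []
    for name, arg in plan:
        if name not in skills:
            raise ValueError(f"no skill primitive for step: {name}({arg})")
        grounded.append((skills[name], arg))
    return grounded


def run(plan: List[Step], skills: Dict[str, Skill]) -> List[str]:
    log: List[str] = []
    for skill, arg in ground_plan(plan, skills):
        skill(arg, log)
    return log


log = run(LLM_PLAN, SKILLS)
```

Validating the whole plan before executing the first step is a design choice: it avoids leaving the robot halfway through a task it cannot finish.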

b) World Models for Imagination and Prediction: A core challenge is predicting the outcomes of actions. World Models are learned simulators that allow the robot to “imagine” the future. A 3D Vision-Language-Action (VLA) model, for example, can predict the next state $s_{t+1}$ given the current state $s_t$ and an action $a_t$:

$$ \hat{s}_{t+1} = M(s_t, a_t; \Theta) $$

where $M$ is the world model parameterized by $\Theta$. By rolling this model forward from $\hat{s}_t = s_t$, the robot can evaluate candidate action sequences $a_{t:t+H-1}$ and select the one that best achieves a goal $g$, as scored by a reward function $R$ over the $H$ predicted states:

$$ a^*_{t:t+H-1} = \arg\max_{a_{t:t+H-1}} \sum_{k=1}^{H} R(\hat{s}_{t+k}) $$

This enables the embodied AI robot to plan in a mentally simulated world before acting in the real one, improving safety and efficiency.
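One simple way to carry out this search is random shooting: sample candidate action sequences, roll each through the world model, and keep the best. The sketch below uses a hand-written one-dimensional `world_model` as a stand-in for a learned $M$, and a reward equal to the negative distance to the goal; both are assumptions made for illustration.

```python
import random


def world_model(state, action):
    # Hypothetical stand-in for a learned model M(s_t, a_t; Theta):
    # a 1-D point that moves by exactly the commanded amount.
    return state + action


def reward(state, goal):
    # Assumed reward: closer to the goal is better.
    return -abs(state - goal)


def rollout_return(state, actions, goal):
    """Sum of rewards over the states predicted by the world model."""
    total = 0.0
    for action in actions:
        state = world_model(state, action)
        total += reward(state, goal)
    return total


def plan(state, goal, horizon=5, num_candidates=200, seed=0):
    """Random-shooting planner: imagine many futures, keep the best one."""
    rng = random.Random(seed)
    # Include a do-nothing baseline so the result is never worse than idling.
    candidates = [[0.0] * horizon]
    candidates += [
        [rng.uniform(-1.0, 1.0) for _ in range(horizon)]
        for _ in range(num_candidates)
    ]
    best = max(candidates, key=lambda acts: rollout_return(state, acts, goal))
    return best, rollout_return(state, best, goal)


best_actions, best_return = plan(state=0.0, goal=3.0)
baseline_return = rollout_return(0.0, [0.0] * 5, 3.0)
```

In practice only the first action of the best sequence would be executed before replanning from the newly observed state, and more sample-efficient optimizers such as the cross-entropy method are often used in place of uniform sampling.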

3. Adaptive Motion and Force Control

The “brain’s” plans must be executed by the “body” with precision, adaptability, and compliance. The control paradigms for an embodied AI robot have evolved significantly.

| Control Paradigm | Representative Methods | Advantages | Limitations |
|---|---|---|---|
| Rule-Based | ZMP, PID Control | High real-time performance, simple implementation | Poor adaptability, struggles with strong nonlinearities |
| Model-Based | Model Predictive Control (MPC), Whole-Body Control (WBC) | High precision, can incorporate physical constraints | High development cost, sensitive to model accuracy |
| Learning-Based | Deep Reinforcement Learning (DRL), Imitation Learning (IL) | Autonomous exploration, strong generalization | High demand for data and simulation resources |

Model Predictive Control (MPC) is a cornerstone for dynamic stability, especially in legged embodied AI robots. It solves a finite-horizon optimal control problem at each time step:

$$ \begin{aligned}
\min_{u_{t:t+N-1}} & \quad \sum_{k=0}^{N-1} ( x_{t+k}^T Q x_{t+k} + u_{t+k}^T R u_{t+k} ) + x_{t+N}^T P x_{t+N} \\
\text{s.t.} & \quad x_{t+k+1} = f(x_{t+k}, u_{t+k}), \quad k = 0,\dots,N-1 \\
& \quad u_{min} \leq u_{t+k} \leq u_{max} \\
& \quad g(x_{t+k}, u_{t+k}) \leq 0
\end{aligned} $$

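where $x$ is the state, $u$ is the control input, $N$ is the prediction horizon, $f$ is the dynamics model, $g$ collects any additional state and input constraints, and $Q$, $R$, and $P$ are the state, input, and terminal cost matrices. Only the first control input $u_t$ is applied before the problem is re-solved at the next time step, which lets the controller react to disturbances and model error.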
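The receding-horizon pattern can be sketched for a scalar linear system $x_{k+1} = a x_k + b u_k$. This is a simplification, not a full MPC solver: the finite-horizon quadratic problem is solved without constraints by a backward Riccati recursion, and the input bounds are then imposed by clipping, which is only an approximation of the constrained optimum. All numeric values and function names are illustrative assumptions.

```python
def lq_first_gain(a, b, q, r, p, horizon):
    """Backward Riccati recursion for scalar dynamics x' = a*x + b*u.

    Returns the feedback gain K for the first step of the horizon,
    so that the unconstrained optimal first input is u = -K * x.
    """
    P = p  # terminal cost weight
    K = 0.0
    for _ in range(horizon):
        K = (a * b * P) / (r + b * b * P)
        P = q + a * a * P - a * b * P * K
    return K


def mpc_step(x, a, b, q, r, p, horizon, u_min, u_max):
    # Re-solve the horizon problem from the current state, then keep only
    # the first input. Clipping stands in for the input constraint.
    K = lq_first_gain(a, b, q, r, p, horizon)
    u = -K * x
    return min(max(u, u_min), u_max)


def simulate(x0, steps=30, a=1.2, b=1.0, q=1.0, r=0.1, p=1.0,
             horizon=10, u_min=-2.0, u_max=2.0):
    """Closed-loop simulation of an open-loop-unstable plant (a > 1)."""
    xs, us = [x0], []
    x = x0
    for _ in range(steps):
        u = mpc_step(x, a, b, q, r, p, horizon, u_min, u_max)
        x = a * x + b * u  # the real plant is assumed to match the model
        xs.append(x)
        us.append(u)
    return xs, us


xs, us = simulate(5.0)
```

For a legged robot the state is high-dimensional, the dynamics are nonlinear, and the constraints (contact forces, joint limits) enter the optimization directly, so a numerical QP or nonlinear solver replaces the closed-form recursion used here.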

Deep Reinforcement Learning (DRL) complements this by learning control policies $\pi_\theta(a|s)$ that maximize cumulative reward in complex environments where accurate models are hard to obtain. The policy is trained with algorithms such as Proximal Policy Optimization (PPO) to increase the expected discounted return over trajectories $\tau = (s_0, a_0, \dots, s_T, a_T)$, with per-step reward $r$ and discount factor $\gamma \in (0, 1]$:

$$ J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T} \gamma^t r(s_t, a_t) \right] $$
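The objective $J(\theta)$ is an expectation, so in practice it is estimated from sampled trajectories. The sketch below computes the discounted return of one reward sequence and averages over several; it illustrates the quantity being optimized, not PPO itself, and the reward lists are made up.

```python
def discounted_return(rewards, gamma):
    """Compute sum_t gamma^t * r_t by accumulating from the last step back."""
    total = 0.0
    for r in reversed(rewards):
        total = r + gamma * total
    return total


def estimate_objective(reward_sequences, gamma):
    """Monte Carlo estimate of J(theta): mean return over sampled trajectories."""
    returns = [discounted_return(rs, gamma) for rs in reward_sequences]
    return sum(returns) / len(returns)


# Made-up per-step rewards from three rollouts of the same policy.
rollouts = [
    [1.0, 1.0, 1.0],
    [0.0, 0.0, 4.0],
    [2.0, 0.0, 0.0],
]
j_hat = estimate_objective(rollouts, gamma=0.5)
```

With $\gamma = 0.5$ the three returns are 1.75, 1.0, and 2.0, which shows how discounting favors reward that arrives early.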

A promising direction is hybrid control, where a learning-based high-level policy selects goals or parameters for a low-level, robust MPC/WBC controller, combining the flexibility of learning with the safety and precision of model-based control for the embodied AI robot.

4. Multimodal Generative AI for Data and Simulation

Training and validating the aforementioned AI models for an embodied AI robot requires massive, diverse datasets of interactions, which are prohibitively expensive to collect in the real world. Multimodal Generative AI fills this gap by synthesizing high-fidelity training data and simulation environments.

| Paradigm | Key Technologies | Generation Advantage | Primary Application in Embodied AI |
|---|---|---|---|
| Learning-Driven Generation | Diffusion Models, Transformer-based Models (e.g., DALL·E, Sora) | High semantic consistency and visual fidelity, flexible text control | Synthesizing 2D/3D training images/videos for perception models, creating varied scenarios for policy training. |
| Physics-Driven Generation | Physics-informed GANs, VAEs, Neural Radiance Fields (NeRF), 3D Gaussian Splatting | Strong physical consistency, direct 3D scene/object output with material properties | Building high-fidelity, dynamic simulation environments for DRL training and “sim-to-real” transfer. |

For instance, a diffusion model generates an image by iteratively denoising random noise, conditioned on a text description $y$:

$$ p_\theta(x_{0:T}|y) = p(x_T) \prod_{t=1}^{T} p_\theta(x_{t-1}|x_t, y) $$

This can produce large numbers of realistic images of cluttered tables, which are used to train the object detection system of an embodied AI robot. Meanwhile, physics-based generative models can create entire 3D scenes with accurate lighting, object dynamics, and material interactions, forming digital twins for safe and scalable robot training. The dominant paradigm is becoming a hybrid one, where models pre-trained on synthetic data are fine-tuned with targeted real-world data.
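The iterative denoising in the diffusion factorization can be sketched with the standard DDPM ancestral sampling update. The noise predictor below is not a trained network: it is an oracle for a toy case where each text prompt maps to a single known vector in `TARGETS`, so the sampler should land on that vector. The schedule values, names, and the three-element "image" are all assumptions for illustration.

```python
import math
import random

# Hypothetical "dataset": one known clean sample x_0 per text condition y.
TARGETS = {"cluttered table": [0.8, -0.3, 0.5]}


def make_schedule(T, beta_start=1e-4, beta_end=0.02):
    """Linear beta schedule and the cumulative products alpha_bar_t."""
    betas = [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]
    alpha_bars, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        alpha_bars.append(prod)
    return betas, alpha_bars


def oracle_noise(x_t, t, y, alpha_bars):
    # Stand-in for a trained eps_theta(x_t, t, y). Exact when the data
    # distribution for y is the single point TARGETS[y].
    ab = alpha_bars[t - 1]
    return [
        (xi - math.sqrt(ab) * x0i) / math.sqrt(1.0 - ab)
        for xi, x0i in zip(x_t, TARGETS[y])
    ]


def sample(y, T=50, seed=0):
    """Draw x_T from a standard normal, then apply p(x_{t-1} | x_t, y) T times."""
    rng = random.Random(seed)
    betas, alpha_bars = make_schedule(T)
    x = [rng.gauss(0.0, 1.0) for _ in TARGETS[y]]
    for t in range(T, 0, -1):
        beta, ab = betas[t - 1], alpha_bars[t - 1]
        eps = oracle_noise(x, t, y, alpha_bars)
        # Posterior mean of the reverse step (DDPM parameterization).
        mean = [
            (xi - beta / math.sqrt(1.0 - ab) * ei) / math.sqrt(1.0 - beta)
            for xi, ei in zip(x, eps)
        ]
        if t > 1:
            sigma = math.sqrt(beta)
            x = [m + sigma * rng.gauss(0.0, 1.0) for m in mean]
        else:
            x = mean  # no noise is added on the final step
    return x


generated = sample("cluttered table")
```

With a trained network in place of `oracle_noise`, the same loop draws varied samples from the learned distribution for prompt $y$ instead of returning a single fixed vector.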

Application Frontiers for the Embodied AI Robot

The integration of the “AI+” stack is unlocking transformative applications across diverse sectors. The embodied AI robot moves from being a simple tool to an intelligent, adaptive collaborator.

| Domain | Core Challenge | How Embodied AI Robot Addresses It | Exemplary Task |
|---|---|---|---|
| Industrial Manufacturing | High-mix, low-volume production; complex assembly; unsafe environments. | Multimodal perception for part recognition and pose estimation; flexible, learning-based control for dexterous assembly; ability to adapt to new product lines. | Kitting disparate parts from bins, wiring harness assembly, sanding/polishing irregular surfaces, operating in paint booths. |
| Healthcare & Assisted Living | Aging populations, caregiver shortage, need for consistent physical therapy. | Safe human-robot physical interaction (HRI); ability to understand contextual commands; precise, repeatable motion for assistance. | Assisting patients from bed to wheelchair, fetching objects, providing gait support, monitoring vital signs through interaction. |
| Logistics & Warehousing | Demand for flexible automation beyond fixed conveyor belts; unpacking complex shipments. | Mobile manipulation in semi-structured spaces; ability to pick and place a vast array of unknown objects (bin picking). | Unloading trucks, sorting mixed SKUs in a fulfillment center, palletizing irregular boxes. |
| Domestic Service | Unstructured, dynamic environments; diverse, long-horizon tasks. | Comprehensive scene understanding; robust navigation among moving people/pets; long-term task planning and learning from interaction. | Loading/unloading a dishwasher, tidying a child’s room, preparing simple meals, providing companionship. |

Persistent Challenges on the Path to Ubiquity

Despite rapid progress, the widespread deployment of sophisticated embodied AI robots faces significant hurdles.

1. Computational Efficiency and Energy Consumption: The “AI+” stack is computationally voracious. Running large multimodal models for real-time perception and planning, coupled with high-frequency control loops, requires substantial onboard computing power, which conflicts with size, weight, and power (SWaP) constraints, especially for mobile or humanoid platforms. The energy demand limits operational duration. Breakthroughs in efficient model architectures (e.g., Mixture of Experts), neuromorphic computing, and edge-cloud hybrid processing are critical.

2. Generalization and Robustness Under Uncertainty: An embodied AI robot trained in one environment or on a specific set of objects often fails catastrophically when faced with novel situations—a change in lighting, a slightly different object texture, or an unexpected obstacle. The learned policies and perception models suffer from a lack of true out-of-distribution generalization. Enhancing robustness requires not just more data, but more diverse data (often synthetic), and algorithmic advances in causality, continual learning, and uncertainty quantification within AI models.

3. Safety and Trust in Human-Centric Environments: As embodied AI robots move closer to humans, safety is paramount. This includes both physical safety (avoiding collisions, applying appropriate force) and functional safety (ensuring the AI’s decision-making is reliable and predictable). Formal verification of learned controllers is exceedingly difficult. Furthermore, establishing trust requires transparent, explainable actions and intuitive human-robot interaction, which remains a major research frontier.

Future Trajectories: Toward Truly General Embodied Agents

The evolution of the embodied AI robot will be shaped by several converging trends.

1. Foundation Models for Embodiment: The next leap will be the development of true “Embodiment Foundation Models.” These are large, pre-trained models that understand not just language and images, but physical concepts, action spaces, and cause-effect relationships in the real world. A single model could be adapted (via fine-tuning or prompting) to control diverse robotic embodiments for a multitude of tasks, drastically reducing the need for task-specific training.

2. Intrinsic Motivation and Lifelong Learning: Current embodied AI robots are primarily goal-driven. Future systems will exhibit intrinsic motivation—a drive to explore, experiment, and learn new skills without explicit external reward. This, combined with lifelong learning algorithms that accumulate knowledge without catastrophically forgetting previous skills, will enable robots to operate and adapt autonomously over years in dynamic environments like homes or hospitals.

3. Swarm Embodied Intelligence: The coordination of multiple embodied AI robots—a swarm—will solve problems intractable for a single agent. Using decentralized communication and collective intelligence inspired by nature, swarms could perform complex construction, large-scale environmental monitoring, or disaster response. The “AI+” here extends to multi-agent planning and emergent cooperative behaviors.

4. Neuro-Symbolic Integration: Combining the pattern recognition power of neural networks (the “connectionist” sub-symbolic approach) with the logical reasoning, transparency, and knowledge representation of symbolic AI is a promising path. A neuro-symbolic embodied AI robot could use a neural network to perceive a scene, a symbolic reasoner to infer that “a glass near the table edge might fall,” and then plan a safe action using logical constraints, making its behavior more interpretable and reliable.

In conclusion, the “AI+” paradigm is fundamentally transforming robotics, giving rise to the embodied AI robot as a new class of intelligent machine. By integrating multimodal perception, cognitive reasoning, adaptive control, and data generation into a physical form, these systems are transitioning from limited, specialized tools to general-purpose assistants capable of learning and operating in our complex world. While challenges in efficiency, robustness, and safety are substantial, the technological trajectory points toward increasingly capable, autonomous, and collaborative embodied agents. Their successful development and integration will not only redefine industries but also reshape our daily lives, demanding thoughtful collaboration across engineering, ethics, and design to ensure they benefit society as a whole.