The convergence of embodied AI and humanoid robotics represents not merely a technological trend, but a fundamental shift in how artificial intelligence interacts with and understands our physical world. I see this fusion as the critical next step in the evolution of machine intelligence, moving beyond pattern recognition in data to active, physical engagement with complex environments. This transition from virtual to physical embodiment is what will unlock truly adaptive and useful machines.

At its core, embodied AI refers to systems whose cognition is grounded in a physical entity’s sensorimotor experience. The canonical loop is “perception-decision-action,” in which intelligence emerges from continuous interaction rather than from processing static datasets alone. This principle, with roots in early cybernetics and later in Brooks’ “intelligence without representation,” posits that to truly understand the world, an agent must be situated within it. A pure language model can describe an apple, but an embodied AI robot can locate it on a cluttered table, gauge its ripeness through tactile feedback, and grip it gently enough to pick it up without bruising it. This physical grounding is the defining characteristic.
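To make the perception-decision-action loop concrete, here is a minimal sketch in Python. It is a toy, not a real robot interface: the `ToyGripper` class, the `decide` function, the proportional gain of 0.5, and the 2 N target force are all hypothetical choices made for illustration, and the sensor is assumed to be noise-free.

```python
from dataclasses import dataclass


@dataclass
class ToyGripper:
    """Hypothetical 1-D gripper whose only state is the applied grip force."""
    applied_force: float = 0.0  # newtons

    def sense(self) -> float:
        # Perception: read the contact force (assumed noise-free here).
        return self.applied_force

    def act(self, delta: float) -> None:
        # Action: adjust the applied force, never dropping below zero.
        self.applied_force = max(0.0, self.applied_force + delta)


def decide(measured: float, target: float, gain: float = 0.5) -> float:
    # Decision: proportional correction toward the target grip force.
    return gain * (target - measured)


def run_loop(gripper: ToyGripper, target: float, steps: int = 20) -> list[float]:
    """Run the loop for a fixed number of cycles and record the force."""
    history = []
    for _ in range(steps):
        measured = gripper.sense()         # perception
        delta = decide(measured, target)   # decision
        gripper.act(delta)                 # action
        history.append(gripper.applied_force)
    return history


history = run_loop(ToyGripper(), target=2.0)
```

Each pass through `run_loop` senses, decides, and acts once. With these assumed values the applied force approaches the target geometrically, halving the remaining error on every cycle.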

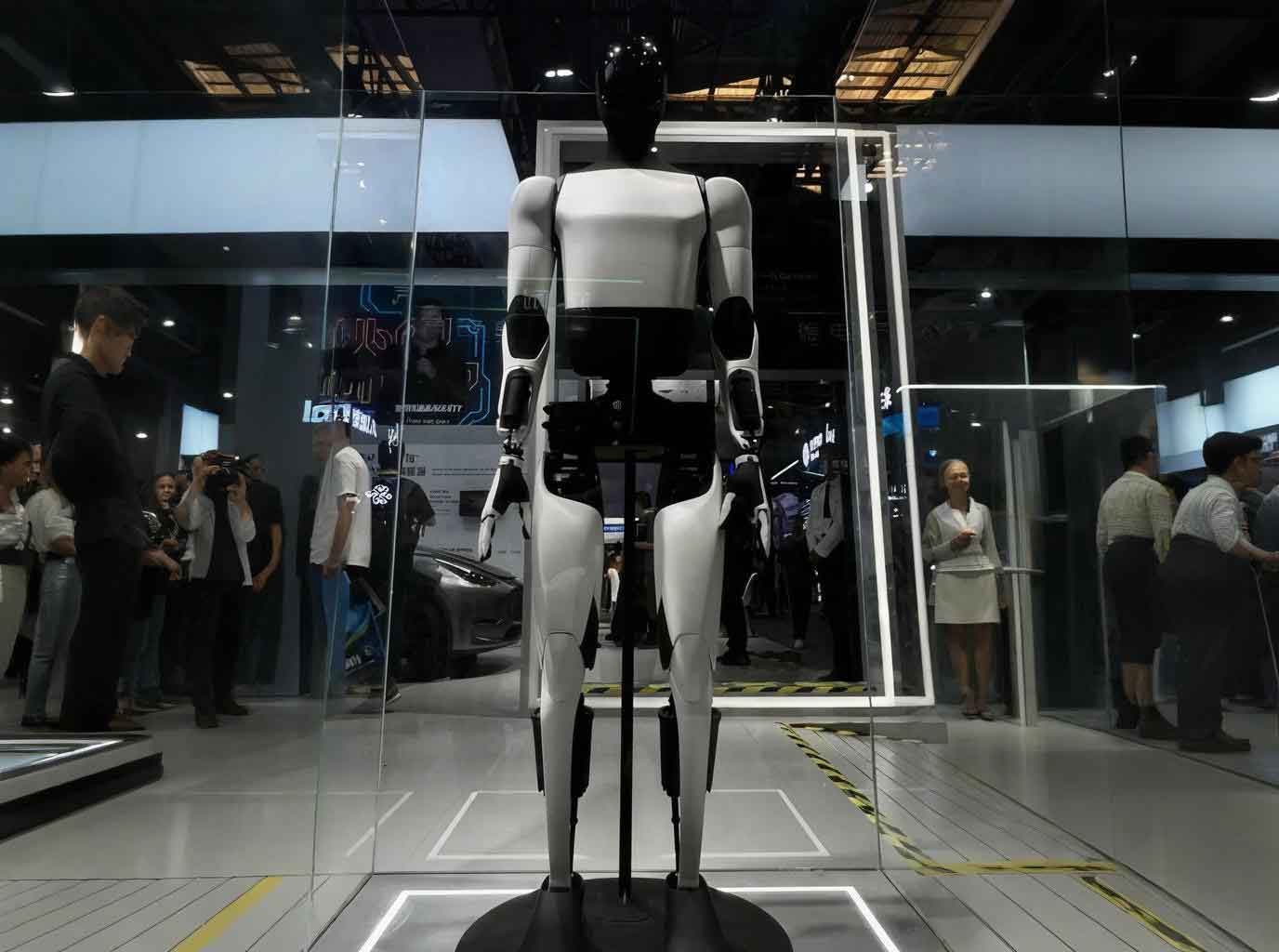

Humanoid robots, in turn, provide the quintessential physical platform for this intelligence. Their anthropomorphic form is not an aesthetic choice but a profound functional one. Our world—its tools, infrastructures, and social interfaces—is built for the human body. A wheeled robot cannot climb standard stairs; a fixed robotic arm cannot navigate a living room. A humanoid form grants an embodied AI robot immediate compatibility with human environments, allowing it to serve as a seamless “interface” between advanced AI and our daily lives. The development of these robots has progressed from rigid, pre-programmed machines to highly dynamic systems like Boston Dynamics’ Atlas, and now to platforms like Tesla’s Optimus, which aim to integrate advanced AI for generalized task execution.

The synergy between the “body” (the humanoid robot) and the “mind” (the embodied AI) creates a virtuous cycle of advancement. The body demands smarter control and perception from the mind, while the mind’s evolving capabilities drive requirements for more sophisticated sensing and actuation in the body. This symbiotic relationship is the engine of the current intelligent revolution in robotics.

Core Technological Architecture of the Embodied AI Robot

The technological stack enabling sophisticated embodied AI robot systems can be deconstructed into three interconnected layers: the Hardware Foundation, the Core Capabilities, and the System Applications. Each layer consists of critical modules that must evolve in concert.

| Layer | Core Modules | Key Technological Components | Function & Current State |
|---|---|---|---|
| Hardware Foundation | Mechanical Body | Lightweight composites (CFRP), bio-inspired design, modular joints. | Provides the physical structure. Weight, durability, and degrees of freedom are key metrics. Current challenge: balancing strength, weight, and cost. |
| | Power & Actuation | Harmonic drives, torque-dense motors, integrated actuators. | Converts energy into motion. Precision, power-to-weight ratio, and reliability are critical. High-precision reducers remain a bottleneck. |
| | Energy & Thermal | High-density batteries, dynamic power management, liquid cooling. | Ensures operational longevity. Balancing energy capacity with discharge rates and managing heat from high-power actuators are ongoing challenges. |
| Core Capabilities | Motion Control | Whole-body dynamics, model predictive control, reinforcement learning. | Enables stable locomotion and dexterous manipulation. The core challenge is real-time adaptation to uncertain dynamics and disturbances. Control can be modeled as $\tau = M(q)\ddot{q} + C(q, \dot{q}) + G(q) + J^T F_{ext}$, where robustly estimating and compensating for external forces $F_{ext}$ is key. |
| | Embodied Perception | Multi-modal sensing (vision, tactile, force), sensor fusion, scene understanding. | Creates a rich, actionable model of the environment. Fusion latency and sensor resolution (e.g., tactile) limit fine-grained interaction. |
| | Embodied Intelligence | Large foundation models (VLMs), reinforcement learning, world models. | Provides high-level reasoning and task planning. Models like RT-2 translate internet-scale knowledge into actionable policies for the embodied AI robot. |
| System Applications | Tooling & OS | Robot OS (ROS 2), simulation platforms (Isaac Sim), development SDKs. | Accelerates development and deployment. Sim-to-real transfer and standardized middleware are vital for ecosystem growth. |
| | Application Layer | Vertical-specific software (logistics, caregiving, manufacturing). | Delivers end-user value. Requires close integration with core capabilities to solve real-world tasks reliably. |

The interdependence is absolute. Breakthroughs in actuator design (Hardware) enable more dynamic motions, which demand more robust control algorithms (Core Capabilities). These advanced algorithms, in turn, require more processing power and efficient thermal designs (Hardware). Similarly, sophisticated application software requires stable APIs provided by a robust operating system and predictable behavior from the core intelligence modules.
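Returning to the rigid-body relation listed under Motion Control in the table above, it can be evaluated by hand for the simplest possible case. The sketch below assumes a single-joint pendulum with a point mass at the tip, an angle `q` measured from the downward vertical, and a tangential contact force at the tip. The function name and all parameter values are hypothetical. It follows the sign convention of the equation as written, in which $J^T F_{ext}$ adds to the required joint torque.

```python
import math


def pendulum_torque(q, q_ddot, f_ext, mass=1.0, length=0.5, g=9.81):
    """Joint torque for a 1-DOF point-mass pendulum (illustrative assumption).

    q      : joint angle in radians, measured from the downward vertical
    q_ddot : desired joint acceleration in rad/s^2
    f_ext  : tangential external force at the tip, in newtons
    """
    inertia = mass * length ** 2                   # M(q): constant for one joint
    coriolis = 0.0                                 # C(q, q_dot): vanishes for one joint
    gravity = mass * g * length * math.sin(q)      # G(q)
    jacobian = length                              # J: maps joint rate to tip speed
    return inertia * q_ddot + coriolis + gravity + jacobian * f_ext


# Holding the link horizontal against gravity, with no contact force.
hold_torque = pendulum_torque(q=math.pi / 2, q_ddot=0.0, f_ext=0.0)
```

For a humanoid the same structure holds, but $M$, $C$, $G$, and $J$ become configuration-dependent matrices and vectors, and $F_{ext}$ must be estimated rather than supplied, which is where the difficulty noted in the table arises.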

The Symbiotic Relationship: A Deeper Analysis

The relationship between the humanoid form and embodied intelligence is not one of simple host and guest; it is a deep, co-evolutionary symbiosis. Each element fundamentally shapes and enables the other, creating a whole that is greater than the sum of its parts.

The Humanoid Robot as the Physical Enabler: The humanoid morphology is the optimal physical substrate for an embodied AI robot intended for human-centric spaces. This form factor provides:

1. Environmental Compatibility: Direct access to environments and tools designed for the human body schema.

2. Natural Interaction: A form that facilitates intuitive human-robot collaboration and social acceptance.

3. General-Purpose Potential: A versatile platform that can be software-updated for a vast array of tasks, from factory maintenance to domestic assistance.

The hardware itself acts as the constraint and the catalyst for intelligence. The resolution of its sensors, the latency of its control loops, and the strength of its joints define the “reality” within which the AI must learn and operate.

The Embodied AI as the Cognitive Engine: The intelligence system is what transforms a sophisticated mechanized puppet into an autonomous agent. It provides:

1. Contextual Understanding: Interpreting natural language commands like “tidy the workshop” into a sequence of actionable steps.

2. Adaptive Control: Modifying grip force based on tactile feedback or adjusting gait for slippery floors.

3. Continual Learning: Improving task performance over time through interaction, using frameworks like reinforcement learning where the agent learns a policy $ \pi(a|s) $ that maximizes the expected cumulative reward:

$$ J(\pi) = \mathbb{E}_{\tau \sim \pi} \left[ \sum_{t=0}^{T} \gamma^t r(s_t, a_t) \right] $$

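Here, $\tau = (s_0, a_0, \dots, s_T, a_T)$ denotes a trajectory generated by following $\pi$ (distinct from the joint torque $\tau$ in the control equation), and $\gamma \in [0, 1]$ is the discount factor. The state $s_t$ is provided by the robot’s sensors, and the action $a_t$ is executed by its motors, closing the physical loop.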
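The objective $J(\pi)$ can be approximated from logged interaction by averaging discounted returns over episodes. The following is a minimal sketch of that Monte Carlo estimate; the function names and the reward sequences are hypothetical, and a real system would collect the rewards from rollouts on the robot or in simulation.

```python
def discounted_return(rewards, gamma):
    """Sum of gamma**t * r_t over one episode, accumulated from the last step."""
    total = 0.0
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


def estimate_objective(episodes, gamma):
    """Monte Carlo estimate of J(pi): the mean discounted return over episodes."""
    returns = [discounted_return(rewards, gamma) for rewards in episodes]
    return sum(returns) / len(returns)


# Two made-up reward sequences standing in for logged robot episodes.
episodes = [[1.0, 1.0, 1.0], [0.0, 0.0, 4.0]]
j_hat = estimate_objective(episodes, gamma=0.5)
```

With a discount factor of 0.5 the late reward in the second episode is heavily discounted, which illustrates why the choice of $\gamma$ shapes whether a policy favors immediate or delayed success.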

The technical synergies can be summarized as follows:

| Synergy Dimension | Contribution of Humanoid Form | Contribution of Embodied AI | Resulting Synergistic Value |
|---|---|---|---|
| Scene Adaptation | Provides native compatibility with human spaces & tools. | Enables autonomous operation via perception-action loops. | Drastically reduces environmental retrofitting costs; enables deployment in homes, factories, hospitals. |
| Perception & Execution | Supplies multi-modal sensory data and high-DOF actuators. | Processes data for decision-making and optimizes motion plans. | Enables complex task execution like delicate assembly and dynamic movement over rough terrain. |
| Autonomous Decision-Making | Offers the computational hardware and real-time control systems. | Generates adaptive strategies in response to dynamic environmental changes. | Transforms robot from pre-programmed tool to adaptive agent capable of handling unstructured scenarios. |
| Evolutionary Mechanism | Iterates hardware (better sensors, actuators) to support smarter AI. | Generates new performance demands and learning paradigms through interaction. | Creates a positive feedback loop (“AI progress drives hardware innovation drives AI progress”) accelerating overall advancement. |

Prevailing Challenges and Bottlenecks

Despite rapid progress, the path to ubiquitous, capable embodied AI robot systems is fraught with significant technical and commercial hurdles. These challenges are interconnected and must be addressed holistically.

1. Core Technological Shortcomings:

* Sensor Limitations: Tactile and force-torque sensors lag far behind vision sensors in fidelity, longevity, and cost. This creates a perceptual gap in fine manipulation.

* Control Reliability: Ensuring stable, robust, and energy-efficient operation over long durations in unpredictable environments remains a grand challenge. Joint overheating, actuator wear, and balance algorithm failures are common.

* The Data Scarcity Problem: Unlike internet-scale text and image data, high-quality, labeled physical interaction data is extremely scarce and expensive to collect. This limits the training and generalization of embodied AI robot models. The cost of data collection $C_{data}$ for robotics is often prohibitive:

$$ C_{data} = N_{episodes} \cdot (T_{human} \cdot C_{human} + T_{robot} \cdot C_{depreciation} + C_{damage}) $$

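where $N_{episodes}$ is the number of trials, $T_{human}$ and $T_{robot}$ are the human and robot time spent per trial, $C_{human}$ and $C_{depreciation}$ are the corresponding per-unit-time labor and equipment-depreciation rates, and $C_{damage}$ is the expected damage cost per trial. Because the total grows linearly with $N_{episodes}$, it is a major barrier to scaling.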

* Lack of Standardization: Proprietary component interfaces, communication protocols, and software frameworks stifle interoperability, increase costs, and slow down ecosystem innovation.
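The data-collection cost model above is simple enough to evaluate directly. The sketch below assumes times are given in hours, rates in currency units per hour, and damage as an expected cost per episode; these units, the function name, and every number in the example are hypothetical and chosen only to show how the terms combine.

```python
def data_collection_cost(n_episodes, t_human, c_human,
                         t_robot, c_depreciation, c_damage):
    """Evaluate C_data = N * (T_human*C_human + T_robot*C_depreciation + C_damage).

    Times are per episode; rates are per unit time (assumed hours here).
    """
    per_episode = t_human * c_human + t_robot * c_depreciation + c_damage
    return n_episodes * per_episode


# Illustrative (made-up) values: 3 minutes of operator and robot time per episode.
total = data_collection_cost(
    n_episodes=10_000,
    t_human=0.05, c_human=40.0,         # operator hours and hourly rate
    t_robot=0.05, c_depreciation=10.0,  # robot hours and hourly depreciation
    c_damage=0.5,                       # expected damage cost per episode
)
```

Under these assumed values each episode costs 3 currency units and the full dataset 30,000. The linear dependence on the number of episodes is the point: reaching web-scale sample counts by physical collection alone multiplies this figure by several orders of magnitude.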

2. Commercialization and Adoption Barriers:

* Scene Complexity: Real-world environments like homes are incredibly messy and variable. Performance often drops significantly from controlled lab demos to actual deployment.

* Ethical and Safety Gray Areas: Clear standards for liability, behavior boundaries, and fail-safe mechanisms in close human-robot interaction are still under development.

* The Cost-Performance Paradox: Industrial users require near-human efficiency to justify high costs, while consumer markets are highly price-sensitive. This tension strains product development roadmaps. The value proposition $V$ must satisfy:

$$ V = \frac{\text{Performance}(\text{Reliability, Speed, Versatility})}{\text{Cost}(\text{BOM, Development, Maintenance})} > \text{Threshold} $$

for widespread adoption, a balance yet to be struck.

| Challenge Category | Specific Issues | Potential Mitigation Pathways |
|---|---|---|
| Technological | Low-resolution tactile sensing. | Investment in novel sensor materials & designs. |
| | Control instability in long-duration, complex tasks. | Advanced model-based & learning-based robust control. |
| | Scarce, costly real-world training data. | Massive-scale simulation & synthetic data generation. |
| | Proprietary standards hindering integration. | Industry consortiums to drive open interfaces. |
| Commercialization | Poor generalization from lab to real homes/factories. | “Focused generalization” in constrained verticals first. |
| | Unclear safety protocols and liability frameworks. | Rapid iteration of safety standards and certification. |
| | High unit cost vs. performance expectations. | Design for manufacturability and supply chain optimization. |

Strategic Recommendations for Accelerated Development

To navigate these challenges and solidify the symbiotic advancement of embodied AI and humanoid robotics, a multi-pronged, coordinated strategy is essential. I believe the following pillars are critical:

1. Deepening Industry-Academia-Research Integration: Establishing long-term collaborative frameworks is vital. Universities should focus on fundamental breakthroughs in materials, sensor principles, and novel AI paradigms. Corporations must articulate clear market-driven technical requirements and provide real-world testing grounds. Joint labs and shared testing facilities can bridge the gap between theoretical research and practical application, creating a virtuous “basic research → applied development → scene validation” innovation cycle specifically for the embodied AI robot domain.

2. Building Efficient Technology Transfer Pipelines: The journey from a laboratory prototype to a reliable product is fraught with “valleys of death.” Professional technology transfer offices are needed to manage the entire pipeline—patent strategy, prototyping, pilot-scale production, and business incubation. Creating shared intellectual property pools for core technologies can lower entry barriers for startups. Furthermore, incentivizing researchers through spin-off ventures and equity stakes can align interests and accelerate commercialization.

3. Fostering a Collaborative Full-Industry-Chain Ecosystem: No single entity can master all layers of the embodied AI robot stack. Leading companies should act as ecosystem anchors, promoting modular design and open standards for key interfaces (mechanical, electrical, communication). This enables specialization: companies excelling in actuator design, sensor fusion, or AI model training can interoperate seamlessly. Such an ecosystem builds resilience against supply chain shocks and pools collective R&D resources to tackle systemic bottlenecks like the mass production of high-performance reducers.

4. Implementing Strategic Policy Guidance and Resource Support: Governments have a pivotal role in de-risking the early, capital-intensive stages of this strategic industry. This includes:

* Directed Funding: Establishing national research programs and venture funds focused on core bottlenecks (e.g., tactile sensing, embodied foundation models).

* Testbed Creation: Opening public infrastructure (hospitals, transportation hubs, government facilities) as real-world testing grounds for embodied AI robot applications.

* Regulatory Sandboxes: Developing adaptive, performance-based regulatory frameworks that ensure safety without stifling innovation, particularly for liability and data privacy in human-in-the-loop systems.

* Talent Development: Reforming educational curricula to produce interdisciplinary talent skilled in robotics, AI, mechanical design, and cognitive science.

In conclusion, the fusion of embodied intelligence with the humanoid form is more than an engineering endeavor; it is the creation of a new kind of interactive, physical intelligence. The symbiosis is fundamental and inescapable. The challenges are substantial, spanning technical feasibility, economic viability, and societal integration. However, by strategically strengthening the bonds between research, industry, and policy, and by consciously nurturing a collaborative ecosystem, we can steer the development of the embodied AI robot towards a future where these machines become safe, reliable, and transformative partners in advancing human productivity and well-being. The intelligent revolution will be embodied, or it will be incomplete.