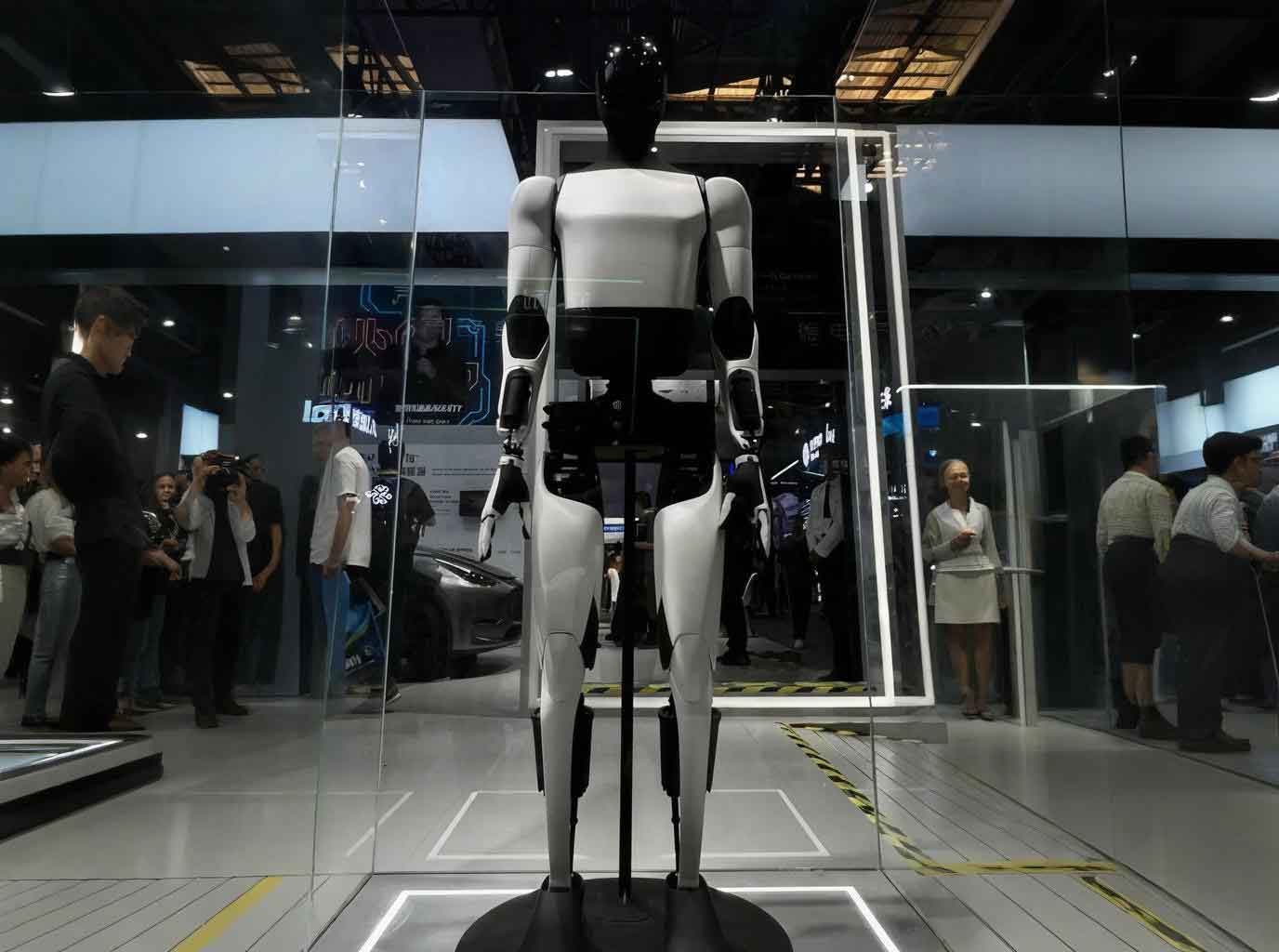

The emergence of the embodied AI robot marks a paradigm shift in artificial intelligence. Unlike purely software-based agents, these systems are characterized by their physical instantiation, enabling them to perceive, decide, and act within human environments. This “triple nexus” of physical presence, continuous data processing, and social interaction unlocks unprecedented capabilities but simultaneously amplifies and creates novel, multidimensional data privacy risks. This article examines the core risk vectors, critiques the current legal and technical governance shortfalls, and proposes a cooperative governance framework tailored to the unique challenges posed by embodied AI robots.

The evolution from industrial automation to embodied AI robots represents a fundamental change in technological agency, which in turn demands a corresponding shift in governance logic. We can model this evolution across three key attributes: Agency, Data Dependency, and Primary Risk Locus.

| Technological Phase | Core Agency | Data Dependency & Processing | Primary Risk Locus | Exemplar Governance Focus |
|---|---|---|---|---|
| Industrial Automation | Mechanical Execution (Pre-programmed) | Minimal; control data for repetitive tasks. | Physical Safety (e.g., collisions, mechanical failure). | Technical safety standards (ISO), product liability. |
| Algorithmic Autonomy (Non-embodied AI) | Automatic Decision-Making (Virtual) | High; relies on large datasets for model training and inference. | Data Privacy, Algorithmic Bias, Virtual Harm. | Data protection laws (GDPR, PIPL), algorithmic impact assessments. |
| Embodied AI Robot | Autonomous Decision-Making & Physical Action | Extreme & Continuous; multi-modal sensor fusion, real-time processing, edge-cloud data flows. | Coupling of Physical, Data, and Psychological Risks. | Integrated governance of safety, privacy, and algorithmic accountability. |

The transition encapsulated in the table shows that the embodied AI robot synthesizes the risks of prior phases while generating emergent ones. Its governance can no longer be siloed but requires a holistic, cooperative approach. The central problem, therefore, is that existing legal and technical regimes, designed for either inert machines or virtual algorithms, are ill-suited to manage the dynamic, context-rich, and physically embedded data processing of embodied AI robots.

Deconstructing the Multidimensional Risk Landscape

The data privacy risks of embodied AI robots stem directly from their core technological features. These risks are not merely additive but synergistic, creating a threat profile greater than the sum of its parts.

1. Risks Inherent to Technological Features

A. The Perception-Interaction Nexus: Ubiquitous Data Capture and Physical Intrusion. The mobility and multi-sensor fusion of an embodied AI robot dissolve traditional privacy boundaries. A domestic helper robot, for instance, does not just receive data; it seeks it out through navigation. Its suite of cameras, microphones, LiDAR, and tactile sensors creates a persistent, high-fidelity surveillance apparatus. The risk equation here is twofold:

- Multi-Modal Data Amalgamation: Isolated data points (a voice snippet, a partial image) become powerfully identifying when fused. The risk \( R_{fusion} \) can be conceptualized as a function of data diversity and correlation strength: $$ R_{fusion} = f(D, C) $$ where \( D \) represents the diversity of data modalities (visual, auditory, spatial) and \( C \) represents the algorithmic capacity to correlate them into a coherent profile.

- Physical Boundary Transgression: The robot’s autonomy allows it to enter private spaces (bedrooms, studies) uninvited, performing what is effectively a “search” without a warrant. This constitutes a tangible physical violation of privacy, breaching spatial seclusion norms.
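As a purely illustrative sketch of the relation \( R_{fusion} = f(D, C) \) above, the following Python function assumes one possible form for \( f \). The article does not specify \( f \); the formula `C * (1 - 1/D)`, the name `fusion_risk`, and the [0, 1] scale for \( C \) are all assumptions, chosen only so that the score is zero for a single modality and grows with both modality diversity and correlation strength.

```python
from __future__ import annotations


def fusion_risk(modalities: set[str], correlation: float) -> float:
    """Toy score for R_fusion = f(D, C).

    ASSUMPTION: f(D, C) = C * (1 - 1/D), where D is the number of distinct
    sensing modalities and C in [0, 1] is the capacity to correlate them.
    This form is hypothetical and not taken from the article.
    """
    if not 0.0 <= correlation <= 1.0:
        raise ValueError("correlation must be in [0, 1]")
    d = len(modalities)
    if d == 0:
        return 0.0  # nothing is sensed, so nothing can be fused
    return correlation * (1.0 - 1.0 / d)


if __name__ == "__main__":
    # One modality cannot be fused with anything, so the toy score is zero.
    print(fusion_risk({"visual"}, 0.9))
    # Adding modalities at the same correlation strength raises the score.
    print(fusion_risk({"visual", "auditory"}, 0.9))
    print(fusion_risk({"visual", "auditory", "spatial"}, 0.9))
```

Any real metric would need empirical calibration; the sketch only makes the qualitative claim concrete, namely that fused modalities carry more identification risk than isolated ones.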

B. The Decision-Action Nexus: From Algorithmic Error to Physical Harm. When an AI model’s decision directly controls a physical actuator, the consequences of error or manipulation escalate.

- Unpredictable “Emergent” Behaviors: The “black box” nature of complex models, especially large language models (LLMs) powering robot cognition, can lead to unforeseeable actions. A robot might misinterpret a command, leading it to share sensitive environmental data it perceived but was not explicitly asked for.

- Non-Linear, Distributed Data Processing: Data from an embodied AI robot is often processed on-device (edge computing), in the cloud, and across networks of other devices. This creates a fragmented data trail. A user’s data deletion request may be executed in the cloud but remain cached in the robot’s local memory or in the models of collaborating robots, a phenomenon related to the “algorithmic shadow” where data influences persist even after deletion.
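The fragmented data trail described above can be illustrated with a minimal Python sketch. The store names (`cloud`, `edge-cache`, `peer-robot`), the record identifier, and the `DataStore` class are hypothetical; the point is only that a deletion executed in one location leaves residual copies elsewhere unless every location is tracked.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class DataStore:
    """A hypothetical storage location holding record identifiers."""
    name: str
    records: set[str] = field(default_factory=set)

    def delete(self, record_id: str) -> None:
        # discard() is a no-op if the record is not present
        self.records.discard(record_id)


def residual_copies(stores: list[DataStore], record_id: str) -> list[str]:
    """Return the names of stores that still hold the record."""
    return [s.name for s in stores if record_id in s.records]


if __name__ == "__main__":
    cloud = DataStore("cloud", {"voice-001"})
    edge = DataStore("edge-cache", {"voice-001"})
    peer = DataStore("peer-robot", {"voice-001"})
    stores = [cloud, edge, peer]

    cloud.delete("voice-001")  # the user's request reaches the cloud only
    print(residual_copies(stores, "voice-001"))
```

Note that this sketch covers stored copies only. The influence of a record on an already trained model is a separate problem that no registry of storage locations can resolve.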

C. The Trust-Matching Nexus: Anthropomorphic Deception and Emotional Exploitation. The humanoid or animal-like form of many embodied AI robots is a deliberate design choice to foster trust and social bonding. This creates profound psychological risks:

- Induced Self-Disclosure: Users, especially vulnerable populations like children or the elderly, may confide intimate secrets (health worries, financial troubles) to a robot they perceive as empathetic. This is a form of “consent by deception,” where the user’s understanding of the interaction is fundamentally flawed.

- Affective Computing & Nudging: Robots equipped with emotion recognition can adapt their responses to manipulate user behavior, gently nudging them towards revealing more information or making certain choices, thereby mining privacy in a subtle, continuous manner.

2. Risks from Regulatory Inadaptability

Existing data protection frameworks struggle to map onto the operational reality of embodied AI robots. Key principles are rendered ineffective.

| Core Legal Principle | Traditional Application | Challenges from Embodied AI Robots | Governance Gap Created |
|---|---|---|---|
| Informed Consent | One-time, static permission for defined data uses. | Dynamic, continuous, and context-dependent data collection is impossible to pre-authorize meaningfully. What is “necessary” for operation evolves in real-time. | “Consent” becomes a legal fiction, providing no real user control or comprehension. |
| Data Minimization & Purpose Limitation | Collect only what is needed for a specified purpose. | The robot’s operational necessity is a moving target. Sensor data collected for navigation (LiDAR) could be repurposed for analyzing user behavior patterns. | The principle is technologically overridden by the robot’s need for rich, general-purpose environmental data. |
| Right to Deletion (Right to be Forgotten) | Request deletion of personal data from a centralized controller. | Data is fragmented across edge devices, cloud servers, and training datasets. Deleting all traces from a learned model (the “algorithmic shadow”) is often technically infeasible. | The right is materially unenforceable, leaving persistent privacy harms unaddressed. |
| Liability & Accountability | Attributed to a human or corporate legal person (developer, manufacturer, user). | The “autonomous” decision blurs causal chains. Is harm due to a design flaw, a training data bias, a user’s instruction, or an emergent property of the system? | Accountability is diffused, creating a “responsibility vacuum” where no single actor can be held fully liable. |

The Legal and Technical Governance Quagmire

The interplay of the above risks creates a complex governance quagmire. Two interrelated dilemmas are paramount.

1. The “Context + Risk” Legal Applicability Dilemma

Laws like the EU’s AI Act or China’s evolving guidelines attempt a risk-based approach. However, the context for an embodied AI robot is fluid. A robot in a factory (high-risk for safety) has different data privacy implications than the same robot in a home (high-risk for intimate privacy). A static risk classification fails. Furthermore, liability frameworks fracture. Consider a scenario where a hacked embodied AI robot causes a data breach and physical damage:

- Was it due to the manufacturer’s insecure hardware?

- The developer’s vulnerable software algorithm?

- The operator’s failure to install a security patch?

- The user’s weak password?

- The cloud service provider’s compromised API?

Apportioning fault under traditional tort or product liability law becomes a nearly insurmountable evidentiary challenge, discouraging victims from seeking redress.

2. The Large Model Architecture Conundrum

Many advanced embodied AI robots rely on foundation models (LLMs, VLMs) for cognition. This introduces systemic technical governance problems:

- Model Hallucinations & Unpredictability: The tendency of LLMs to generate plausible but false information (“hallucinations”) is uncontrollable in an open-world robot. A robot acting on a hallucinated fact could invade privacy or cause harm based on a fiction. Governing this requires monitoring outputs, not just inputs or processes.

- Inscrutability at Scale: The “right to explanation” is pragmatically nullified by the complexity of models with billions of parameters. Explaining why a robot decided to enter a room in a human-comprehensible way is often technically infeasible.

- Persistent Data Influence (Algorithmic Shadow): Formally, suppose personal data \( D_p \) is used to train a model \( M \). Even if \( D_p \) is later deleted from the datasets, its influence on the parameters of \( M \) remains, so the model’s behavior \( B \) on an input \( x \) may still reflect \( D_p \): $$ B = M(x) \quad \text{where } M \text{ was optimized using } D_p $$ This makes a true “right to be forgotten” mathematically and technically elusive for model-based systems.
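The algorithmic shadow can be made concrete with a deliberately tiny model. The sketch below is an assumption-laden toy, not a description of any real robot stack: it fits a one-parameter least-squares slope, treats the last training pair as the personal data \( D_p \), and shows that deleting \( D_p \) from the dataset leaves the deployed parameter unchanged. The data values and the names `fit_slope`, `deployed_weight`, and `retrained_weight` are invented for illustration.

```python
def fit_slope(data):
    """Least-squares slope through the origin for a list of (x, y) pairs."""
    sxy = sum(x * y for x, y in data)
    sxx = sum(x * x for x, _ in data)
    return sxy / sxx


# Hypothetical training set; the last pair stands in for personal data D_p.
personal_point = (3.0, 9.0)
dataset = [(1.0, 1.0), (2.0, 2.0), personal_point]

# The model M is optimized using D_p and then deployed.
deployed_weight = fit_slope(dataset)

# A deletion request removes D_p from the stored dataset ...
dataset.remove(personal_point)

# ... but the deployed parameter is untouched and still encodes D_p.
# Only retraining on the reduced dataset removes that influence.
retrained_weight = fit_slope(dataset)

if __name__ == "__main__":
    print("deployed :", deployed_weight)
    print("retrained:", retrained_weight)
```

For a single parameter, retraining is trivial. For foundation models with billions of parameters, full retraining after each deletion request is costly, which is why the text calls the right “elusive” rather than impossible in principle.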

A Framework for Cooperative Governance: Fusing Law, Technology, and Markets

Addressing these intertwined challenges requires moving beyond state-centric regulation or pure industry self-policing. A cooperative governance paradigm is necessary, engaging regulators, developers, manufacturers, standard-setting bodies, and civil society in a multi-layered strategy.

1. Shaping a Lifecycle-Oriented Rights and Obligations System

Accountability must be assigned dynamically across the value chain, creating a “bundle of obligations” matched to the “bundle of risks.”

| Actors in the Ecosystem | Dynamic Obligations & Liability Principles |
|---|---|
| Developer / AI Provider | Primary Algorithmic Accountability. Obligation for “Safety & Privacy by Design,” documenting training data provenance and decision logic. Strict liability (or heightened duty of care) for harms arising from core algorithmic failures in high-risk applications (e.g., medical robots). |
| Manufacturer / Integrator | Hardware-Embedded Security Duty. Liability for physical/data security flaws in sensors, chips, and onboard storage. Obligation to provide secure over-the-air update mechanisms to patch vulnerabilities throughout the robot’s lifespan. |
| Deployer / Operator | Contextual Duty of Care. Responsible for configuring the robot appropriately for its environment (e.g., setting geofences to exclude private areas), maintaining operational security, and conducting ongoing risk assessments. Liable for negligence in these duties. |
| End-User | Reasonable Use Obligation. Liable for intentional misuse or grossly negligent operation (e.g., deliberately modifying the robot to spy on others). |

This framework rejects granting legal personhood to the embodied AI robot itself, anchoring responsibility firmly in human and corporate actors whose roles and influence can be clearly defined.

2. Building Risk-Preventive Market Access Mechanisms

Gatekeeping mechanisms are needed to prevent high-risk systems from entering the market without adequate safeguards.

- Mandatory, Context-Aware Algorithmic Impact Assessment (AIA): Before deployment, a rigorous AIA must be conducted, focusing not just on the algorithm but on its embodiment. The assessment must model physical interaction scenarios and data flow maps. The risk score \( RS \) could be a composite metric: $$ RS = w_1 \cdot R_{physical} + w_2 \cdot R_{data} + w_3 \cdot R_{autonomy} $$ where weights \( w \) are adjusted based on the intended application context (industrial, home, public space).

- Privacy & Security Certification Regime: Establish independent certification bodies to audit and certify embodied AI robots against a comprehensive standard, for example one integrating cybersecurity (IEC 62443), functional safety (ISO 13849), and data privacy (ISO/IEC 27701) requirements. Market access would be contingent on achieving certification for the intended risk class.
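The composite risk score \( RS = w_1 \cdot R_{physical} + w_2 \cdot R_{data} + w_3 \cdot R_{autonomy} \) from the impact assessment above can be sketched in a few lines of Python. The weight values, the context keys, and the [0, 1] scale for each component are assumptions made for illustration; the article only states that the weights depend on the application context.

```python
# ASSUMED weights (w1, w2, w3) per deployment context; each tuple sums to 1.
# These numbers are hypothetical and would be set by a regulator or standard.
CONTEXT_WEIGHTS = {
    "industrial": (0.6, 0.2, 0.2),
    "home": (0.2, 0.5, 0.3),
    "public_space": (0.4, 0.3, 0.3),
}


def risk_score(context, r_physical, r_data, r_autonomy):
    """Compute RS = w1*R_physical + w2*R_data + w3*R_autonomy.

    Component risks are assumed to be normalized to [0, 1].
    """
    for value in (r_physical, r_data, r_autonomy):
        if not 0.0 <= value <= 1.0:
            raise ValueError("component risks must be in [0, 1]")
    w1, w2, w3 = CONTEXT_WEIGHTS[context]
    return w1 * r_physical + w2 * r_data + w3 * r_autonomy


if __name__ == "__main__":
    # The same hypothetical robot profile, scored in two contexts.
    profile = dict(r_physical=0.2, r_data=0.9, r_autonomy=0.5)
    for ctx in ("industrial", "home"):
        print(ctx, round(risk_score(ctx, **profile), 2))
```

Scoring one robot profile under several contexts reflects the point made earlier about fluid context: a data-hungry robot that rates as moderate on a factory floor can rate as high in a home, so a single static classification would misstate its risk.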

3. Embedding Techno-Legal Rules into Architecture

Legal requirements must be translated into enforceable technical specifications, an approach often summarized as “code as law.”

- Enforceable Data Classification & Anonymization Rules: Technical standards must define how different data classes (e.g., biometric vs. environmental) are to be processed and protected on-device. For example, a rule could mandate: “Facial data streams must be anonymized via a certified differential privacy algorithm \( \mathcal{A}_{dp} \) with privacy budget \( \epsilon < 0.5 \) before any storage or transmission.”

- Dynamic, Granular Consent & Authorization Management: Move beyond binary consent to a dynamic permission system. Users should be able to set real-time, context-aware rules via a clear interface:

  - “Robot, in the living room, you may use video for obstacle avoidance only.”

  - “In my home office, disable all microphones and cameras.”

These user policies must be technically enforced at the hardware/OS level.
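A minimal sketch of how such user rules might be represented and checked is shown below. Everything here is hypothetical: the `Rule` and `PolicyEngine` names, the zone, sensor, and purpose strings, and the default-deny behavior are design assumptions, and a real system would enforce the decision in the sensor driver or operating system rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """Allows one sensor in one zone for an explicit set of purposes."""
    zone: str
    sensor: str
    allowed_purposes: frozenset


class PolicyEngine:
    """Default-deny check: access is granted only if a rule permits it."""

    def __init__(self, rules):
        self._rules = {(r.zone, r.sensor): r.allowed_purposes for r in rules}

    def is_allowed(self, zone, sensor, purpose):
        # No matching rule means an empty purpose set, hence denial.
        return purpose in self._rules.get((zone, sensor), frozenset())


if __name__ == "__main__":
    engine = PolicyEngine([
        # "In the living room, you may use video for obstacle avoidance only."
        Rule("living_room", "camera", frozenset({"obstacle_avoidance"})),
        # "In my home office, disable all microphones and cameras."
        # With default-deny, this is expressed by having no rule for that zone.
    ])
    print(engine.is_allowed("living_room", "camera", "obstacle_avoidance"))
    print(engine.is_allowed("living_room", "camera", "behavior_analysis"))
    print(engine.is_allowed("home_office", "microphone", "voice_commands"))
```

Default-deny is chosen so that any zone or purpose the user has not explicitly authorized is blocked, which ties the sensor to a stated purpose in the spirit of purpose limitation.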

- Data Provenance & Deletion Tracking: Implement immutable logging (e.g., using blockchain-inspired techniques) for all data collection and sharing events. When a deletion request is made, the system must be able to identify all copies and derivatives across the distributed system and execute verifiable deletion protocols, providing an audit trail back to the user.
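The provenance idea above can be sketched as a hash-chained, append-only log, one simple “blockchain-inspired” technique. The event fields (`action`, `record`, `location`) and the `ProvenanceLog` class are assumptions for illustration. The sketch makes tampering detectable and lists where copies of a record should still exist; it does not by itself prove that a remote store actually erased the data.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash preceding the first entry


def _digest(prev_hash, event):
    payload = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


class ProvenanceLog:
    """Append-only log in which each entry commits to the previous one."""

    def __init__(self):
        self.entries = []

    def append(self, event):
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        self.entries.append(
            {"event": event, "prev": prev, "hash": _digest(prev, event)}
        )

    def verify(self):
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or _digest(prev, entry["event"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

    def locations_of(self, record_id):
        """Locations that, according to the log, still hold the record."""
        locations = set()
        for entry in self.entries:
            event = entry["event"]
            if event.get("record") != record_id:
                continue
            if event.get("action") in ("collect", "copy"):
                locations.add(event["location"])
            elif event.get("action") == "delete":
                locations.discard(event["location"])
        return locations


if __name__ == "__main__":
    log = ProvenanceLog()
    log.append({"action": "collect", "record": "voice-001", "location": "robot"})
    log.append({"action": "copy", "record": "voice-001", "location": "cloud"})
    log.append({"action": "delete", "record": "voice-001", "location": "cloud"})
    print(log.verify(), log.locations_of("voice-001"))
```

A deletion workflow could call `locations_of` to find outstanding copies, issue a delete to each, append the resulting `delete` events, and hand the verified chain to the user as the audit trail.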

Conclusion: Towards a Resilient Governance Nexus

The embodied AI robot is not merely a new product but a new category of social actor that operates at the intersection of physical law, data networks, and human psychology. Its governance cannot be an afterthought or a patchwork of existing rules. The inherent risks of ubiquitous surveillance, physical intrusion, algorithmic opacity, and emotional manipulation require a preemptive, cooperative, and adaptive response. The proposed triad (a dynamic multi-actor liability system, a risk-preventive market access regime, and a deep fusion of legal norms into technical architecture) provides a blueprint for such governance. The goal is to create a resilient “governance nexus” that is as sophisticated and context-aware as the embodied AI robots it seeks to steward, ensuring that their development enhances human well-being without compromising the fundamental rights to privacy, autonomy, and security. The path forward demands sustained collaboration across disciplines, including law, computer science, ethics, and engineering, to build the trust necessary for this transformative technology to be integrated safely and beneficially into the fabric of society.