From my vantage point behind the reference desk, I have witnessed the evolution of our library from a quiet repository of printed knowledge to a vibrant, digitally-augmented hub. Yet, a persistent gap remained between our sophisticated digital collections and the physical, often messy, reality of patron interaction. The arrival of embodied AI robot technology marks not merely another upgrade, but a fundamental paradigm shift. It promises to close that gap by embedding intelligence directly into the physical fabric of our library. In this chronicle, I will explore the profound opportunities this fusion presents, confront the tangible challenges we face in its implementation, and outline the strategic pathways we must navigate to realize a truly integrated, intelligent library ecosystem.

Part I: The Dawn of a New Service Era – Opportunities Unveiled

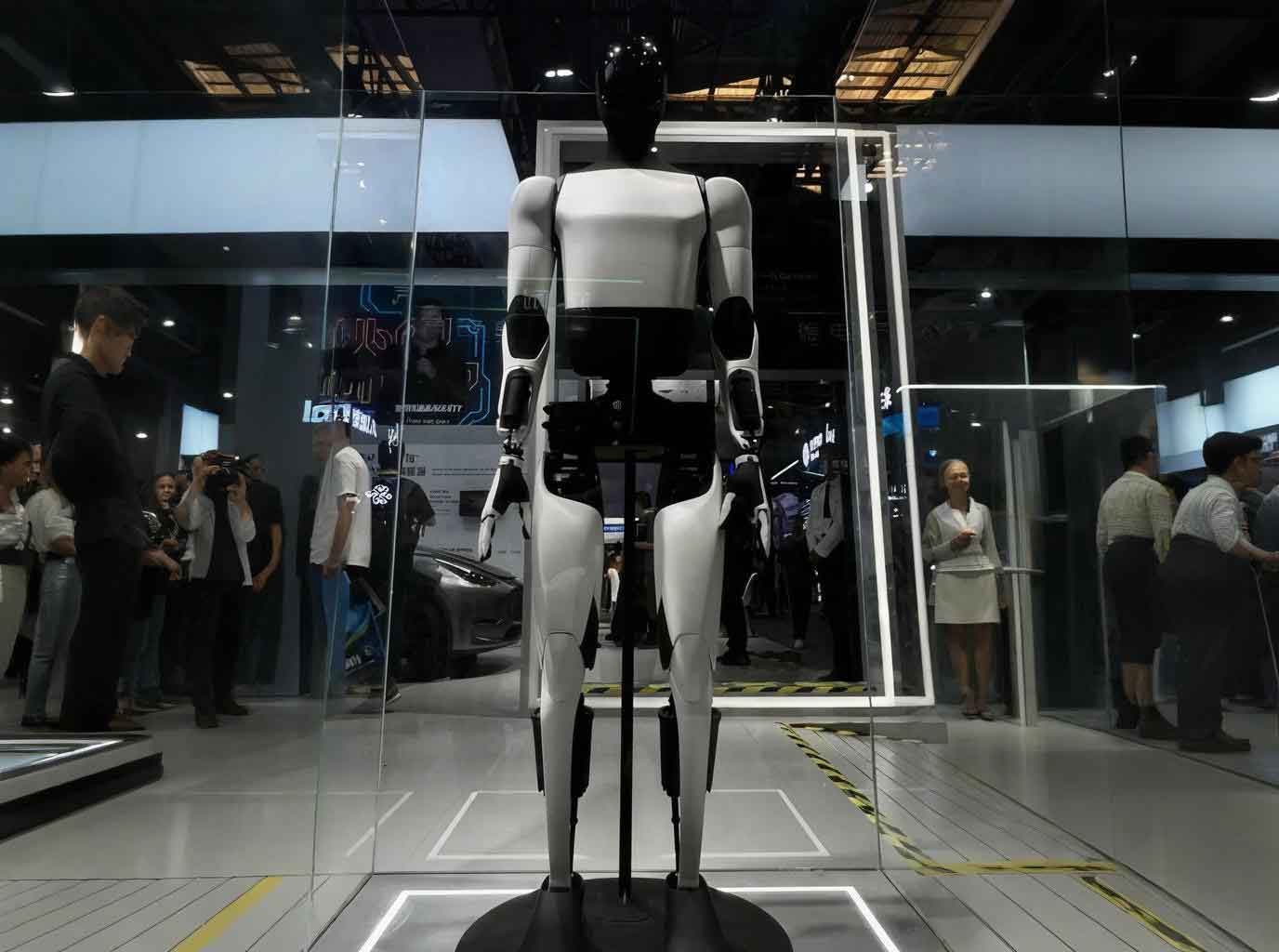

The integration of the embodied AI robot into our library’s ecosystem is not about adding a novelty gadget; it is about architecting a new operational nervous system. Its core capability—closing the perception-action loop within a physical environment—unlocks transformative potential across four key dimensions.

1.1 Deep Technological Symbiosis: From Connected Tools to a Cognitive Organism

Previous technologies were tools we used. The embodied AI robot aspires to be a collaborative partner within our environment. This symbiosis manifests through a layered integration. At the sensory layer, a distributed network of sensors on robots and in the environment creates a real-time, multi-modal feed of data: patron flow, dwell times, ambient sound, and even anonymized thermal signatures indicating crowded zones. This raw data is processed at the edge, where lightweight models perform initial analysis, reducing latency. The true fusion occurs at the cognitive layer, where data from our Integrated Library System (ILS), digital resource platforms, and these physical sensors converge. A patron’s query to a robot assistant is no longer just a text string; it is enriched with context—their location in the stacks, the books they have just browsed, and the time they have spent in a particular section. This creates a rich, contextual understanding previously impossible. We can model this fusion as an enhancement to a standard recommendation function:

$$R_{\text{traditional}} = f(U_i, I_j)$$

$$R_{\text{embodied}} = f(U_i, I_j, L_{t}, B_{[t-\Delta t, t]}, S_{\text{ambient}})$$

where $R$ is the recommendation score, $U_i$ is the user profile, $I_j$ is the item (book or resource), $L_{t}$ is the patron’s real-time location, $B_{[t-\Delta t, t]}$ is the browsing history over the most recent window $\Delta t$, and $S_{\text{ambient}}$ is ambient situational data. This formula, while simplified, captures the enriched decision-making context an embodied AI robot can leverage.
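
To make the difference between the two scoring functions concrete, here is a minimal sketch in Python. It is not our production recommender: the `Context` fields, the additive form, the exponential distance decay, and all weights are assumptions I chose purely for illustration.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Context:
    """Hypothetical stand-ins for the extra terms in R_embodied."""
    distance_m: float                                     # L_t: metres from patron to the item's shelf
    recent_subjects: list = field(default_factory=list)   # B: subjects browsed in the last window
    crowding: float = 0.0                                 # S_ambient: 0.0 (empty aisle) to 1.0 (packed)


def traditional_score(user_affinity: dict, item_subject: str) -> float:
    """R_traditional = f(U_i, I_j): profile affinity for the item's subject."""
    return user_affinity.get(item_subject, 0.0)


def embodied_score(user_affinity, item_subject, ctx,
                   w_near=0.3, w_recent=0.4, w_crowd=0.2):
    """R_embodied: the traditional score adjusted by physical context.

    The weights are illustrative assumptions, not tuned values.
    """
    base = traditional_score(user_affinity, item_subject)
    proximity = math.exp(-ctx.distance_m / 10.0)  # decays with walking distance
    recency = 1.0 if item_subject in ctx.recent_subjects else 0.0
    return base + w_near * proximity + w_recent * recency - w_crowd * ctx.crowding


# Two subjects with identical profile affinity, told apart only by context.
profile = {"climate policy": 0.5, "art history": 0.5}
near = embodied_score(profile, "climate policy",
                      Context(distance_m=0.0, recent_subjects=["climate policy"]))
far = embodied_score(profile, "art history",
                     Context(distance_m=40.0, crowding=0.5))
```

In this toy setup the traditional score cannot separate the two subjects, while the embodied score favors the one the patron is standing next to and has just been browsing.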

1.2 Service Paradigm Shift: From Transactional to Proactive & Personal

The most visible change for our patrons is the service model. We are transitioning from standardized, transactional services (“find this book,” “answer this query”) to proactive, personalized companionship. The embodied AI robot acts as a mobile, perceptive guide. Consider a student beginning research on climate policy. A traditional search yields a list. An embodied AI robot, having identified the topic from an initial conversation, can physically guide the student to the relevant LC classification section, point out seminal texts, and, using its display, project a timeline of key treaties from our digital archive onto a nearby table. It personalizes further by accessing the student’s borrowing history to avoid recommending already-read material and by adjusting its explanation complexity based on perceived engagement levels. This shift is summarized in the table below.

| Aspect | Traditional Service Model | Embodied AI Robot-Enabled Model |
|---|---|---|
| Interaction | Static, station-based (desk, terminal). | Mobile, context-aware, follows the patron’s workflow. |
| Recommendation | Based on aggregate borrowing data (collaborative filtering). | Based on real-time behavior, location, and personal history (contextual & collaborative). |
| Instruction | Scheduled workshops or one-on-one appointments. | Just-in-time, point-of-need assistance embedded in the research process. |
| Accessibility | Requires patron to seek help. | Can proactively offer help based on observed hesitation or prolonged searching. |

1.3 Dynamic Resource Orchestration: The Library as a Living System

Resource management moves from periodic, batch-process updates to a dynamic, continuous orchestration. An embodied AI robot patrolling the shelves does more than inventory; it analyzes. It can detect that books on a certain topic are consistently mis-shelved, indicating high demand or confusing categorization, and flag this for review. It can identify “cold spots” where material never moves, informing weeding decisions. In real-time, it can act as a physical broker for digital assets. If all physical copies of a text are loaned out, a robot near a patron searching for it can immediately offer an interactive terminal to access the e-book or a digital scan, seamlessly bridging the physical-digital divide. This creates a feedback loop where collection usage directly and immediately informs collection management. The efficiency of resource placement can be modeled as an optimization problem, where the embodied AI robot provides the critical real-time data variable $D_t$:

$$\text{Minimize } Z = \sum_{t=1}^{T} (W_{\text{retrieval}} \cdot C_{\text{retrieval}}(D_t) + W_{\text{restock}} \cdot C_{\text{restock}}(D_t))$$

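subject to space and budget constraints, where $C_{\text{retrieval}}$ and $C_{\text{restock}}$ are the retrieval and restocking costs in period $t$, each a function of the real-time demand data $D_t$ gathered by the robots, and $W_{\text{retrieval}}$ and $W_{\text{restock}}$ are their relative weights.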
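
As a rough illustration of how the objective $Z$ could be evaluated, the sketch below compares two candidate shelf locations for one title. The linear cost functions, the walking distances, and the demand figures are all invented for the example, and the space and budget constraints are left out entirely.

```python
def total_cost(demand, c_retrieval, c_restock, w_retrieval=1.0, w_restock=1.0):
    """Z = sum over periods of W_retrieval*C_retrieval(D_t) + W_restock*C_restock(D_t)."""
    return sum(
        w_retrieval * c_retrieval(d) + w_restock * c_restock(d)
        for d in demand
    )


def make_costs(walk_m):
    """Hypothetical linear costs for a shelf that is walk_m metres from the entrance."""
    def c_retrieval(d):
        return d * walk_m            # every request costs one walk to the shelf

    def c_restock(d):
        return 0.5 * d * walk_m      # assume reshelving is batched, so half the cost

    return c_retrieval, c_restock


# D_t: requests per period as a robot might report them (made-up numbers).
demand = [4, 10, 7]
layouts = {"front_display": 5.0, "back_stacks": 20.0}

costs = {name: total_cost(demand, *make_costs(walk)) for name, walk in layouts.items()}
best = min(costs, key=costs.get)
```

A real placement decision would search over many titles and locations at once under the constraints; the point here is only that the robots supply the $D_t$ values that drive every term.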

1.4 Experiential Transformation: Enhancing Cognitive Engagement

Finally, the library space itself becomes an interactive, cognitive partner. An embodied AI robot can transform special collections viewing. Instead of handling a fragile document, a patron can interact with a robot that displays high-resolution scans, lets them “virtually turn pages” via gesture control, and overlays annotations, translations, or related commentary through AR. In learning commons, robots can facilitate group work by acting as a shared interactive display, recording brainstorming sessions, and dynamically calling up relevant reference material. We expect this embodied interaction, in which learning happens through physical navigation and manipulation mediated by an intelligent agent, to promote deeper cognitive processing and retention than screen-based searching alone, though that expectation still needs to be tested with our own patrons.

Part II: The Reality Check – Navigating the Implementation Labyrinth

Despite the compelling vision, the path to integrating embodied AI robot systems is fraught with practical, ethical, and human-centric challenges that we must address with clear eyes.

2.1 Technical Bottlenecks: The Limits of Embodiment in a Complex World

The library is a uniquely challenging environment for any embodied AI robot. Our core technical hurdles are:

- Environmental Adaptation: Dense, dynamically changing bookshelves (from reshelving carts) degrade SLAM (Simultaneous Localization and Mapping) accuracy. The long, narrow aisles create perceptual challenges. A robot’s success hinges on robust algorithms for navigation in such semi-structured chaos, which can be expressed as a constrained pathfinding problem:

$$ \text{Find path } P \text{ minimizing } \int_{P} (c_{\text{distance}} + \lambda \cdot c_{\text{risk}}( \text{perception uncertainty} )) \, ds $$

subject to avoiding dynamic obstacles (patrons, carts), where $\lambda$ sets how heavily perception risk is weighted against distance.

- Multi-Modal Understanding Gaps: A patron’s vague description (“I’m looking for the big red book about art”) or a mumbled query in a noisy atrium pushes the limits of current sensor fusion and natural language understanding. The discrepancy between high-level intent and low-level sensor data is a significant barrier.

- Computational and Power Constraints: Real-time processing of video, LIDAR, and audio streams for multiple robots demands significant edge computing power, conflicting with the need for all-day battery life and quiet operation.
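
The risk-weighted pathfinding objective under Environmental Adaptation can be approximated on a grid, where each step costs one unit of distance plus $\lambda$ times the perception risk of the cell being entered. The sketch below uses Dijkstra's algorithm for this; the grid, the risk values, and the function name `risk_aware_path` are assumptions for illustration, not a description of any vendor's navigation stack.

```python
import heapq


def risk_aware_path(grid_risk, blocked, start, goal, lam=3.0):
    """Dijkstra over a 4-connected grid.

    Step cost = 1 (distance) + lam * risk of the entered cell, a discrete
    version of the integral objective. `blocked` holds cells currently
    occupied by dynamic obstacles. Returns (cost, path) or None.
    """
    rows, cols = len(grid_risk), len(grid_risk[0])
    best = {start: 0.0}
    parent = {}
    frontier = [(0.0, start)]
    while frontier:
        cost, cell = heapq.heappop(frontier)
        if cell == goal:
            path = [cell]
            while cell in parent:
                cell = parent[cell]
                path.append(cell)
            return cost, path[::-1]
        if cost > best.get(cell, float("inf")):
            continue  # stale queue entry
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if not (0 <= nr < rows and 0 <= nc < cols) or nxt in blocked:
                continue
            new_cost = cost + 1.0 + lam * grid_risk[nr][nc]
            if new_cost < best.get(nxt, float("inf")):
                best[nxt] = new_cost
                parent[nxt] = cell
                heapq.heappush(frontier, (new_cost, nxt))
    return None


# Two parallel aisles; the cell at (0, 1) has poor localization (risk 0.9).
risk = [
    [0.0, 0.9, 0.0],
    [0.0, 0.0, 0.0],
]
cautious = risk_aware_path(risk, blocked=set(), start=(0, 0), goal=(0, 2), lam=3.0)
shortest = risk_aware_path(risk, blocked=set(), start=(0, 0), goal=(0, 2), lam=0.0)
```

With $\lambda = 3$ the robot detours through the second aisle; with $\lambda = 0$ it takes the geometrically shortest route straight through the uncertain cell. Choosing $\lambda$ is therefore a policy decision about how much extra travel we accept for safer navigation.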

2.2 Scenario Misalignment: The “Solution in Search of a Problem” Trap

A major risk is deploying embodied AI robot technology for superficial, “showcase” tasks without addressing core library mission needs. A common pitfall is using an expensive, multi-sensor robot as a simple mobile kiosk for direction queries—a task easily handled by static screens. The true value lies in complex, context-rich services like in-stack discovery support or personalized research assistance. Without careful design, robots can create “feature bloat,” increasing cognitive load for patrons who just want a simple answer. The challenge is to map robot capabilities to high-value, high-complexity tasks, as outlined below.

| Low-Value / High-Risk Applications | High-Value / Strategic Applications |
|---|---|
| Simple directional guidance to known locations (restrooms, main desk). | Guided discovery within complex subject stacks, connecting physical and digital resources. |
| Replacing basic check-in/out stations without added functionality. | Proactive assistance for patrons showing signs of confusion or research frustration. |
| Reading out static announcements. | Facilitating immersive experiences with special collections or archival materials. |

2.3 Management and Ethical Quagmires: Governance in a Data-Driven Space

Operating an embodied AI robot fleet introduces novel management complexities:

- Data Ethics and Privacy: These robots are constant data collectors. How do we obtain meaningful consent for capturing video, audio snippets, and location trails within a public yet knowledge-focused space? Patron behavior data—like which controversial topics they browse—is incredibly sensitive. We must implement privacy-by-design principles, ensuring data is anonymized, aggregated, or ephemeral. The algorithm’s decision-making process (“Why did it recommend *this* book?”) must be auditable to prevent hidden biases.

- Operational Silos and Costs: Robot maintenance falls between IT, facilities, and public services departments. Sustainable funding is also critical; beyond the capital purchase, costs for software updates, hardware repairs, and continuous AI model training can be substantial and ongoing.

- Liability and Safety: Who is liable if a robot’s navigation fails and it bumps into a patron or damages a rare book? Clear protocols and insurance models are needed.

2.4 Human Acceptance Barriers: Overcoming Trust and Habit

The most sophisticated embodied AI robot will fail if patrons and staff reject it.

- Patron Skepticism and Trust: Will patrons trust a machine with a complex research query? Initial failures (mishearing, wrong directions) can erode trust rapidly. Some may find persistent robotic presence intrusive or dehumanizing.

- Staff Displacement and Role Evolution: Staff may fear job replacement. The crucial message is that robots handle repetitive, informational, and navigational tasks, freeing librarians for higher-value work: complex research consultations, content curation, instructional design, and community engagement—tasks requiring human empathy, creativity, and deep expertise.

- Breaking Established Habits: Patrons are accustomed to certain workflows. Introducing a new intermediary requires clear communication of benefits and patient onboarding.

Part III: Charting the Course – A Strategy for Symbiotic Integration

To harness the opportunities while mitigating the risks, we are pursuing a multi-pronged strategy focused on pragmatic integration, ethical governance, and human-centered design.

3.1 Phased Technical Architecture: Building a Robust Embodied Nervous System

We are avoiding a monolithic rollout. Instead, we are building a modular, layered architecture:

- Pilot Zone & Specialized Agents: Initial deployment is in a controlled zone (e.g., periodicals or a specific subject floor) with robots dedicated to single, high-value tasks like “in-stack discovery guide” or “special collections docent.” This allows for focused testing and refinement.

- Hybrid Sensor Network: We are augmenting robot sensors with a fixed environmental sensor network (overhead cameras for crowd flow, shelf weight sensors). This shared perception layer improves overall situational awareness and reduces the computational burden on each individual embodied AI robot.

- Federated Learning for Continuous Improvement: To improve without centralizing sensitive data, we are exploring federated learning. Each robot trains its local model on anonymized interaction data. Only model updates (not raw data) are shared and aggregated to create a globally improved model, enhancing performance across the fleet while preserving privacy.

$$ \min_{w} \sum_{k=1}^{K} \frac{n_k}{n} F_k(w) \quad \text{where } F_k(w) = \frac{1}{n_k} \sum_{i \in \mathcal{P}_k} f_i(w) $$

Here, $K$ robots each hold a local dataset $\mathcal{P}_k$ of size $n_k$, with $n = \sum_{k=1}^{K} n_k$, and $f_i(w)$ is the loss on example $i$. The robots collaboratively learn a global model $w$ without sharing their raw data.
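
One common way to approach this objective is federated averaging: each robot refines the current global model on its own data, and the results are combined with weights $n_k / n$. The sketch below shows the idea with a deliberately tiny one-parameter model; the model, learning rate, and datasets are assumptions for illustration, and we have not committed to this particular algorithm.

```python
def local_update(w, data, lr=0.1, epochs=20):
    """Gradient descent on one robot's local loss F_k for the toy model y ~ w * x."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_round(w_global, datasets):
    """One averaging round: only locally trained weights leave each robot."""
    n = sum(len(d) for d in datasets)
    return sum(len(d) / n * local_update(w_global, d) for d in datasets)


# Two robots with private (x, y) observations that both follow y = 2x.
robot_a = [(1.0, 2.0), (2.0, 4.0)]
robot_b = [(1.0, 2.0), (3.0, 6.0), (2.0, 4.0)]

w = 0.0
for _ in range(5):
    w = federated_round(w, [robot_a, robot_b])
```

After a few rounds `w` settles close to 2 even though neither robot's observations were ever pooled. Note that model updates can still leak information about the underlying data, so this technique complements rather than replaces the anonymization step.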

3.2 Purpose-Driven Service Design: Aligning Robots with Core Mission

We use a strict design filter for any embodied AI robot application: does it directly support knowledge discovery, enhance accessibility, or enable a service impossible without physical embodiment? We are developing clear personas and journey maps. For example, the “First-Year Researcher” journey map identifies moments of confusion (deciphering call numbers, evaluating source credibility) as prime intervention points for robot assistance. This ensures technology serves the mission, not vice versa.

3.3 Proactive Governance and Ethical Frameworks

We have established a cross-functional oversight committee (librarians, IT, legal, ethics scholars) to govern our embodied AI robot initiative. Key policies include:

- Transparent Data Charter: Clear signage and digital notices inform patrons about data collection. We implement “data minimization” and set short retention periods for raw sensor data.

- Algorithmic Accountability: We mandate logging for robot decisions in high-stakes contexts (e.g., research recommendations) to allow for audit and bias detection.

- Sustainable Operational Model: We are building total-cost-of-ownership models and exploring “Robot-as-a-Service” subscriptions to smooth out budgeting and ensure access to the latest software and hardware updates.
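
The data-charter and accountability policies above can be sketched in a few lines. This is an illustration under stated assumptions rather than our deployed system: the function names, the 24-hour retention window, the salt value, and the placeholder call number are all hypothetical.

```python
import hashlib
import json
from datetime import datetime, timedelta, timezone


def pseudonymize(patron_id: str, salt: str) -> str:
    """Salted hash so audit entries can be grouped without storing the raw ID.

    This is pseudonymization, not full anonymization.
    """
    return hashlib.sha256((salt + patron_id).encode("utf-8")).hexdigest()[:16]


def log_decision(log: list, patron_id: str, recommended: str, reasons: list,
                 salt: str, now: datetime) -> dict:
    """Append an auditable record of why a recommendation was made."""
    entry = {
        "at": now.isoformat(),
        "patron": pseudonymize(patron_id, salt),
        "recommended": recommended,
        "reasons": reasons,
    }
    log.append(json.dumps(entry, sort_keys=True))
    return entry


def purge_raw_sensor_data(records: list, now: datetime,
                          retention: timedelta = timedelta(hours=24)) -> list:
    """Keep only raw sensor records younger than the retention window (assumed 24 h)."""
    return [r for r in records if now - r["captured_at"] <= retention]


now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
audit_log = []
entry = log_decision(
    audit_log, "patron-0042", "EXAMPLE-CALL-NO",
    ["matches stated topic", "shelved nearby"],
    salt="rotate-me", now=now,
)
raw = [
    {"captured_at": now - timedelta(hours=30), "kind": "video"},
    {"captured_at": now - timedelta(hours=2), "kind": "lidar"},
]
kept = purge_raw_sensor_data(raw, now)
```

The audit log records the reasons behind a recommendation so the oversight committee can review it later, while the raw sensor record older than the retention window is dropped.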

3.4 Cultivating a Culture of Human-Robot Collaboration

Successful integration is a human factor challenge. Our approach includes:

- Staff as Co-Designers and Supervisors: Librarians are involved in training the robots’ knowledge bases and designing interaction scripts. Their role evolves to “robot fleet supervisor” and “complex query escalator,” handling cases the robot cannot.

- Patron Education and Transparent Interaction: Robots are programmed to introduce themselves, explain their capabilities, and gracefully hand off to a human. We run workshops on “Working with Your AI Library Assistant.”

- Designing for Trust: Robot behavior is calibrated to be predictable and non-intrusive. Their physical design and movement patterns (slower speed, clear signaling of intent) are engineered to feel helpful, not threatening.

The journey of integrating the embodied AI robot into our library is ongoing. It is a complex undertaking, rife with both technical hurdles and profound philosophical questions about privacy, employment, and the nature of service. However, the potential reward—a library that is more accessible, more responsive, and more deeply integrated into the cognitive workflows of its community—is a powerful motivator. We are not building a library of robots; we are cultivating a symbiotic ecosystem where human expertise and embodied machine intelligence amplify each other, ensuring that the library remains an indispensable, dynamic, and profoundly human-centered space for discovery in the digital age.