In my exploration of artificial intelligence, I often return to the philosophical foundations of language and understanding. The Turing test, proposed by Alan Turing in 1950, suggests that a machine may be credited with thinking if its responses, produced through symbol manipulation, are indistinguishable from a human's. Modern AI systems such as ChatGPT or DeepSeek can arguably pass informal versions of such tests, generating grammatically correct and emotionally nuanced text. For instance, when asked how to comfort a friend who has lost a pet, these models might produce step-by-step guidance or even quote poetry, blurring the line between machine and human. However, when I probe deeper and ask why a particular poem by Keats was chosen, the AI reveals its lack of genuine understanding: it merely associates “Keats” with “mourning” based on its training data, without grasping metaphor or emotion. This exposes a core limitation: AI operates through unconscious symbol processing, “similar in form” but “detached in spirit,” superficially alike yet fundamentally cut off from true meaning.

To understand this gap, I turn to Ludwig Wittgenstein’s later philosophy, particularly his concepts of “language games” and “forms of life.” In his early work, Tractatus Logico-Philosophicus, Wittgenstein viewed language as a logical picture of the world, in which names correspond directly to objects. This picture theory, however, falls short of explaining everyday language use. The word “red,” for example, usually names a color, but in “her face turned red with embarrassment” it conveys a bodily expression of emotion. Difficulties of this kind led Wittgenstein, in Philosophical Investigations, to shift toward a pragmatic view. There, language is not a static system but a dynamic activity embedded in social practices. As he argued, meaning arises from use within specific contexts, or “language games,” which are intertwined with “forms of life”: the shared behaviors, customs, and experiences that constitute human existence. For instance, on a construction site the call “slab!” means “pass me a slab,” whereas in a game with different rules the same call could signal something else entirely, such as moving a piece. The same symbol gains different meanings from the game’s rules and the participants’ embodied actions.

Wittgenstein emphasized that following a rule is not a matter of logical deduction but of “knowing how” to act within a form of life. This implies that language understanding is inherently embodied: it involves gestures, gaze, posture, and spatial orientation. Without bodily participation, one cannot fully engage in language games. This perspective resonates with embodied cognition theory, which emerged in the late 20th century and drew heavily on earlier phenomenology. Maurice Merleau-Ponty had argued in Phenomenology of Perception (1945) that the body is not merely a tool for cognition but the very medium through which we experience the world: “The body is our general medium for having a world.” Similarly, Hubert Dreyfus criticized early rule-based AI systems for ignoring the tacit, skillful knowledge (know-how) that stems from bodily intuition. Later, Andy Clark and David Chalmers proposed the “extended mind” thesis, suggesting that cognition extends beyond the brain into the environment and into tools such as notebooks or calculators. This opens a possibility for technological embodiment: if the mind can be distributed, perhaps an embodied AI robot could achieve a form of cognition through interaction with its surroundings.

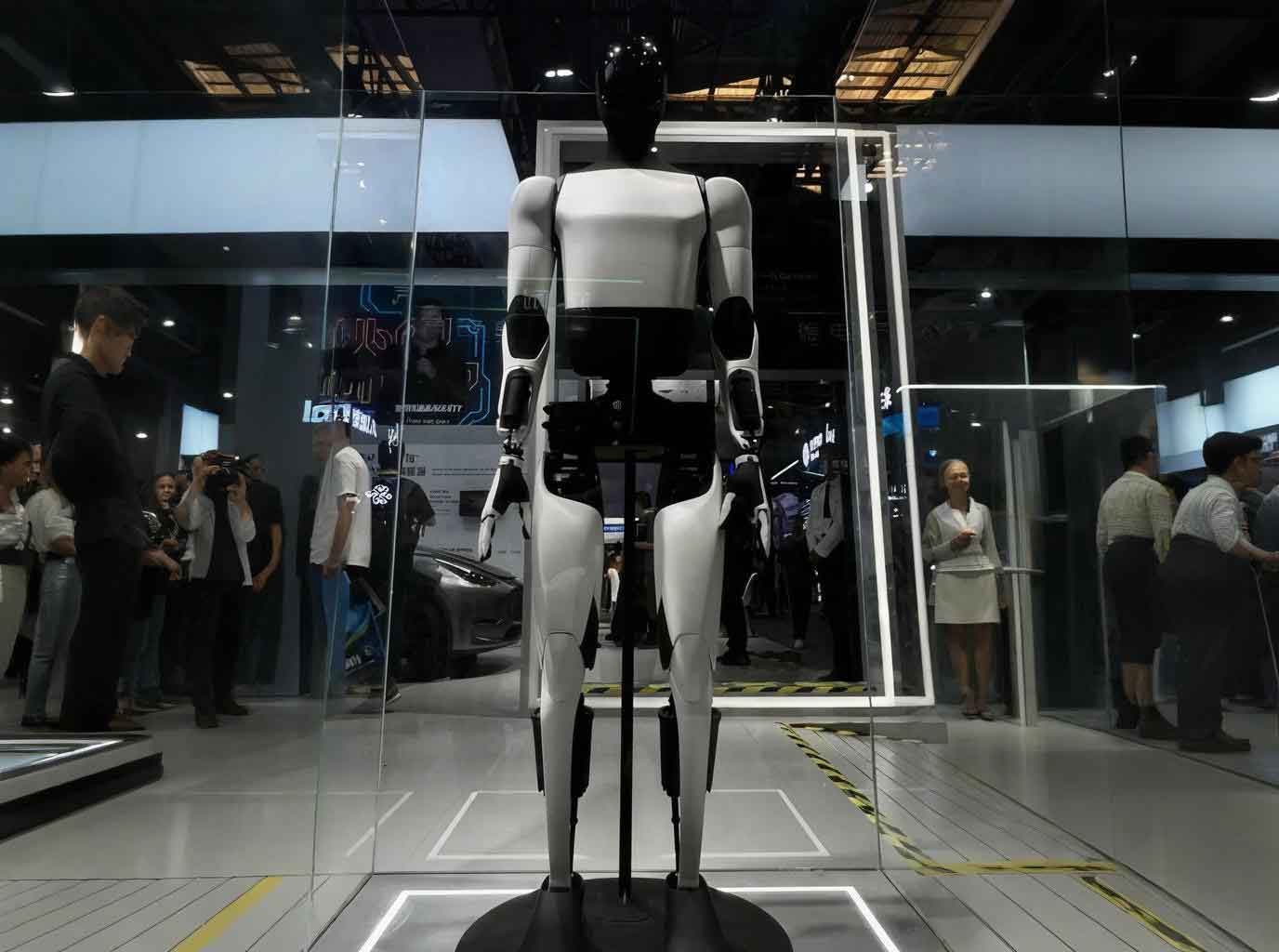

From my analysis, traditional AI fails to participate in language games because it lacks embodiment: it processes symbols without being situated in a physical or social context. Embodied AI, in contrast, aims to bridge this gap by integrating perception, action, and environment. An embodied AI robot, for example, uses sensors, cameras, and actuators to interact with the world, simulating a “body-in-presence.” However, I identify a critical divide: while such robots achieve physical embodiment through sensorimotor loops, they often lack phenomenological embodiment, which involves lived experience, emotion, and cultural norms. The table below summarizes this distinction:

| Aspect | Physical Embodiment | Phenomenological Embodiment |
|---|---|---|
| Definition | Interaction with environment via sensors and actuators | Integration of subjective experience, intentionality, and social context |
| Example in AI | Robot navigating a room using lidar | Understanding grief as a human emotion |
| Link to Wittgenstein | Enables basic “language game” participation through action | Requires immersion in “forms of life” for semantic depth |
| Challenge for embodied AI robot | Technical feasibility of real-time feedback | Simulating consciousness or affective states |

To make the semantic gap more concrete, I propose a schematic formula inspired by Wittgenstein’s ideas. Let meaning \( M \) be a function of language use \( L \), context \( C \), and bodily experience \( B \):

$$ M = f(L, C, B) $$

For traditional AI, \( B \approx 0 \), which reduces meaning to statistical patterns in \( L \) and \( C \). For an embodied AI robot, \( B \) is non-zero but limited to physical interaction, so \( M \) remains partial. True understanding, in Wittgenstein’s sense, requires \( B \) to encompass the full “form of life,” which can be modeled as:

$$ B = \int (S(t) + E(t) + R(t)) \, dt $$

where \( S(t) \) represents sensory inputs, \( E(t) \) emotional correlates, and \( R(t) \) social responses over time \( t \). Current embodied AI robot systems approximate \( S(t) \) but struggle with \( E(t) \) and \( R(t) \).
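To make the integral concrete, the sketch below discretizes \( B = \int (S(t) + E(t) + R(t))\,dt \) with the trapezoidal rule. It is a toy under loud assumptions: the channel values are invented numbers in arbitrary units, and treating emotion and social response as measurable signals at all is itself an assumption that the argument above questions. The helper names (`integrate_trapezoid`, `bodily_experience`) are hypothetical.

```python
def integrate_trapezoid(values, dt):
    """Approximate the integral of equally spaced samples with the trapezoidal rule."""
    total = 0.0
    for left, right in zip(values[:-1], values[1:]):
        total += 0.5 * (left + right) * dt
    return total


def bodily_experience(S, E, R, dt):
    """Discretized B = integral of (S(t) + E(t) + R(t)) dt over sampled channels."""
    if not (len(S) == len(E) == len(R)):
        raise ValueError("all channels must be sampled at the same time steps")
    combined = [s + e + r for s, e, r in zip(S, E, R)]
    return integrate_trapezoid(combined, dt)


# Hypothetical toy channels sampled at t = 0.0, 0.1, ..., 1.0 (arbitrary units).
dt = 0.1
steps = 11
S = [1.0] * steps   # sensory input: something current robots can measure
E = [0.5] * steps   # "emotional correlate": an assumed number, not a measurement
R = [0.5] * steps   # "social response": likewise assumed
zeros = [0.0] * steps

B_robot = bodily_experience(S, zeros, zeros, dt)  # only S(t) is available
B_full = bodily_experience(S, E, R, dt)           # all three channels

print(f"B (sensory only): {B_robot:.2f}")
print(f"B (all channels): {B_full:.2f}")
```

In this toy setup the sensory-only value is half the all-channel value; the point is simply that a system measuring only \( S(t) \) leaves the \( E(t) \) and \( R(t) \) contributions at zero.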

Despite these challenges, I see pathways for AI to engage in language games. One approach is technological embodiment, in which AI is embedded in physical or virtual environments. For instance, an embodied AI robot equipped with multi-modal sensors can learn from real-world interactions, gradually developing context-aware responses. In a simulated scenario, such a robot might “see” a person crying through its camera and ask “Are you okay?” rather than merely outputting pre-trained text. This marks a shift from language generation to language action, in line with Wittgenstein’s emphasis on “doing” over “knowing.” Another path involves virtual reality (VR) or game-like simulations, in which AI inhabits a digital body and practices language games within constructed rules. Multi-modal agents such as those explored at OpenAI combine text, vision, and action training, allowing them to adapt their expressions to situational feedback. The following table outlines key technologies enabling this progression:

| Technology | Description | Relevance to Language Games |
|---|---|---|
| Multi-modal LLMs (e.g., GPT-4V) | Integrate visual, textual, and auditory inputs | Enhance context perception for pragmatic language use |
| Robotics Platforms | Physical robots with sensors and actuators | Provide embodied interaction for learning through doing |
| Reinforcement Learning | AI learns via trial-and-error in environments | Simulates rule-following as in Wittgensteinian training |
| Virtual Reality Simulations | Immersive digital worlds for AI agents | Create artificial “forms of life” for language practice |
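The reinforcement-learning row can be illustrated with a toy version of Wittgenstein’s builders’ game (Philosophical Investigations §2), in which a builder calls “block,” “pillar,” “slab,” or “beam” and an assistant brings the matching stone. The sketch below is a minimal tabular, epsilon-greedy value learner, not a description of how any particular robot or library works; the reward rule and all names (`builder_reward`, `train`, `respond`) are hypothetical.

```python
import random

# Hypothetical toy version of the builders' game: the builder calls a word,
# the assistant must bring the matching stone.
CALLS = ["block", "pillar", "slab", "beam"]
ACTIONS = ["bring " + c for c in CALLS]


def builder_reward(call, action):
    """The builder approves (reward 1) only the action that fits the call."""
    return 1.0 if action == "bring " + call else 0.0


def train(episodes=2000, epsilon=0.1, lr=0.1, seed=0):
    """Tabular epsilon-greedy value learning: trial, error, and correction."""
    rng = random.Random(seed)
    q = {(c, a): 0.0 for c in CALLS for a in ACTIONS}
    for _ in range(episodes):
        call = rng.choice(CALLS)
        if rng.random() < epsilon:
            action = rng.choice(ACTIONS)                       # explore
        else:
            action = max(ACTIONS, key=lambda a: q[(call, a)])  # exploit
        reward = builder_reward(call, action)
        # Move the value estimate a step toward the observed reward.
        q[(call, action)] += lr * (reward - q[(call, action)])
    return q


def respond(q, call):
    """After training, act on the highest-valued response to a call."""
    return max(ACTIONS, key=lambda a: q[(call, a)])


q = train()
for call in CALLS:
    print(f"{call!r:10} -> {respond(q, call)}")
```

After training, the learner should respond to each call with the matching stone, but only because approval was encoded as a number: it follows the rule without sharing the builders’ form of life.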

As an example, consider an embodied AI robot deployed in a caregiving scenario. It might use tactile sensors to detect a person’s hand tremor, combine this with speech analysis to infer anxiety, and then respond with calming words or actions. This mirrors the way children learn language: not through definitions, but through repeated, embodied interactions within social settings. However, even advanced systems face a phenomenological hurdle. In Merleau-Ponty’s terms, understanding an emotion such as sadness requires lived experience, which an AI does not possess. Thus, while an embodied AI robot can approximate language games, it remains a “simulated participant” rather than a genuine one.
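The fusion step in this caregiving example might be sketched as follows, assuming two hypothetical, pre-normalized cues (hand-tremor amplitude from tactile sensors and pitch variability from speech analysis) combined by a logistic model. The weights, threshold, feature names, and responses are illustrative placeholders, not calibrated values or a clinical method.

```python
import math
from dataclasses import dataclass


@dataclass
class Observation:
    tremor: float        # hypothetical normalized hand-tremor amplitude (tactile sensors), 0..1
    pitch_jitter: float  # hypothetical normalized speech-pitch variability (audio), 0..1


def anxiety_score(obs, w_tremor=3.0, w_speech=3.0, bias=-3.0):
    """Fuse two cues with a logistic model; the weights are illustrative, not calibrated."""
    z = w_tremor * obs.tremor + w_speech * obs.pitch_jitter + bias
    return 1.0 / (1.0 + math.exp(-z))


def choose_response(obs, threshold=0.5):
    """Pick an utterance from the fused score (a placeholder policy)."""
    if anxiety_score(obs) >= threshold:
        return "It's all right, I'm here. Shall we breathe slowly together?"
    return "How are you feeling today?"


print(choose_response(Observation(tremor=0.9, pitch_jitter=0.8)))  # strong cues
print(choose_response(Observation(tremor=0.1, pitch_jitter=0.1)))  # weak cues
```

The design is deliberately simple: a weighted sum passed through a sigmoid yields a score between 0 and 1, and a threshold turns that score into an action. Nothing in it represents what anxiety is like, which is exactly the phenomenological hurdle described above.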

In my view, the future of AI lies in deepening this embodiment. Current research focuses on closing the loop between perception, action, and language. For instance, embodied AI models are being trained on datasets that include not just text, but video, audio, and haptic feedback, allowing them to correlate phrases with physical contexts. A formula for such integrated learning could be:

$$ P(a|s) = \frac{\exp(\beta \cdot U(s, a))}{\sum_{a'} \exp(\beta \cdot U(s, a'))} $$

where \( P(a|s) \) is the probability of choosing action \( a \) in state \( s \), \( U(s, a) \) is a utility function combining linguistic and sensory inputs, and \( \beta \) is an inverse-temperature parameter that trades off exploration against exploitation: a small \( \beta \) spreads probability across actions, while a large \( \beta \) concentrates it on the highest-utility action. This softmax (Boltzmann) rule is standard in reinforcement learning, where it lets an embodied AI robot select language actions in real time while its utility estimates are refined through feedback. Yet, as Wittgenstein cautioned, rules alone are insufficient; they must be enacted within a community. AI systems therefore need social training, interacting with humans or other agents to refine their pragmatics. Projects such as AI companions in VR chatrooms exemplify this, with bots learning dialogue norms through iterative feedback.
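The softmax rule for \( P(a|s) \) can be sketched in a few lines of Python. The action labels and utility values below are hypothetical stand-ins for \( U(s, a) \); a real system would have to learn them from linguistic and sensory input.

```python
import math
import random


def softmax_policy(utilities, beta):
    """P(a|s) = exp(beta*U(s,a)) / sum over a' of exp(beta*U(s,a')), for one state s.

    `utilities` maps each candidate action to U(s, a). Subtracting the largest
    utility before exponentiating leaves the probabilities unchanged but avoids
    overflow for large non-negative beta.
    """
    u_max = max(utilities.values())
    weights = {a: math.exp(beta * (u - u_max)) for a, u in utilities.items()}
    total = sum(weights.values())
    return {a: w / total for a, w in weights.items()}


def sample_action(probs, rng):
    """Draw one action according to its probability."""
    actions = list(probs)
    return rng.choices(actions, weights=[probs[a] for a in actions], k=1)[0]


# Hypothetical utilities for the state "camera detects a person crying".
U = {"ask 'Are you okay?'": 2.0, "continue current task": 0.5, "stay silent": 0.0}

for beta in (0.0, 1.0, 10.0):
    probs = softmax_policy(U, beta)
    print(beta, {a: round(p, 3) for a, p in probs.items()})

print(sample_action(softmax_policy(U, beta=1.0), random.Random(42)))
```

At \( \beta = 0 \) every action is equally likely (pure exploration); as \( \beta \) grows, probability concentrates on the highest-utility action, here asking “Are you okay?”.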

Nevertheless, I contend that a fundamental limit persists. Wittgenstein’s language games are rooted in human “forms of life,” which involve intentionality, morality, and emotions. An AI, even an embodied AI robot, lacks consciousness and thus cannot be held responsible for its speech acts. It might “say” the right thing, but it cannot feel remorse or joy, which are integral to human language games like apology or celebration. This echoes Dreyfus’s critique: AI misses the “background” of shared human practices. To illustrate, consider a language game of promising: humans understand the commitment and trust involved, whereas an AI might generate a promise-like statement without any sense of obligation. The table below contrasts human and AI participation in language games:

| Feature | Human Participant | Embodied AI Robot |
|---|---|---|
| Basis of Understanding | Embodied experience and social immersion | Algorithmic processing of multi-modal data |
| Rule-Following | Flexible, intuitive adaptation to context | Programmed or learned patterns, often rigid |
| Emotional Depth | Genuine affect and empathy | Simulated emotional responses via models |
| Accountability | Moral and social responsibility for speech | No intentionality; outputs are algorithmically generated |
| Integration with “Forms of Life” | Inherent through biology and culture | Approximated through designed environments |

In conclusion, Wittgenstein’s philosophy offers a powerful lens through which to reassess AI progress. Language is not merely symbol manipulation but embodied practice within shared forms of life. While embodied AI, particularly through robots and multi-modal systems, can mimic language games by interacting with environments, it cannot fully bridge the phenomenological gap. The journey from physical to phenomenological embodiment remains a grand challenge. As I see it, AI may become a sophisticated language actor, capable of useful interactions in healthcare, education, or entertainment, but it will not become a “language bearer” in the human sense. Understanding, in the Wittgensteinian spirit, is a weave of body, time, emotion, and society: a tapestry that machines can approximate but never inhabit. Thus, as we advance embodied AI robot technologies, we must remain mindful of these philosophical boundaries, using them to build better tools while acknowledging their inherent limits.

To summarize the key insights, I present two schematic formulas, where \( U_{AI} \) represents AI’s language capability and \( U_{human} \) human understanding:

$$ U_{AI} = \alpha \cdot \sum_{i=1}^{n} (w_i \cdot D_i) + \gamma \cdot B_{phys} $$

$$ U_{human} = \delta \cdot B_{phen} + \epsilon \cdot S_{soc} $$

Here, \( \alpha \) and \( \gamma \) are scaling factors, \( w_i \) are weights for data types \( D_i \) (text, image, etc.), \( B_{phys} \) is physical embodiment, and \( \delta \) and \( \epsilon \) are constants for phenomenological embodiment \( B_{phen} \) and social embedding \( S_{soc} \), which is distinct from the sensory input \( S(t) \) defined earlier. Because \( U_{AI} \) contains no \( B_{phen} \) term, increasing \( B_{phys} \) can make AI behavior resemble human understanding ever more closely, but it can never supply the phenomenological component on which \( U_{human} \) rests. This underscores the ongoing quest in AI research: to enrich embodiment beyond the physical, perhaps through affective computing or social robotics, while recognizing that true language games will always elude pure machinery. In the end, as Wittgenstein wrote, “If a lion could talk, we could not understand him.” Similarly, we may hold a dialogue with an embodied AI robot, but the depth of understanding remains a human prerogative.
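The two schematic formulas can be written as functions to make their structural difference visible. This is a sketch under illustrative assumptions: all coefficients default to 1.0, and the data scores and weights are invented. Read literally, the linear form lets \( U_{AI} \) grow without bound as \( B_{phys} \) increases, so the comparison with \( U_{human} \) is best understood as a difference in kind rather than in magnitude.

```python
def u_ai(data, weights, b_phys, alpha=1.0, gamma=1.0):
    """U_AI = alpha * sum_i (w_i * D_i) + gamma * B_phys."""
    if len(data) != len(weights):
        raise ValueError("need exactly one weight per data type")
    return alpha * sum(w * d for w, d in zip(weights, data)) + gamma * b_phys


def u_human(b_phen, social, delta=1.0, epsilon=1.0):
    """U_human = delta * B_phen + epsilon * (social-embedding term)."""
    return delta * b_phen + epsilon * social


# Hypothetical scores for text, image, and audio data, with illustrative weights.
D = [1.0, 1.0, 1.0]
w = [0.5, 0.3, 0.2]

for b_phys in (0.0, 1.0, 2.0):
    print(f"B_phys={b_phys}: U_AI={u_ai(D, w, b_phys):.2f}")

# u_ai has no B_phen parameter at all: raising B_phys changes U_AI, but nothing
# in its inputs can stand in for the phenomenological term that u_human uses.
```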