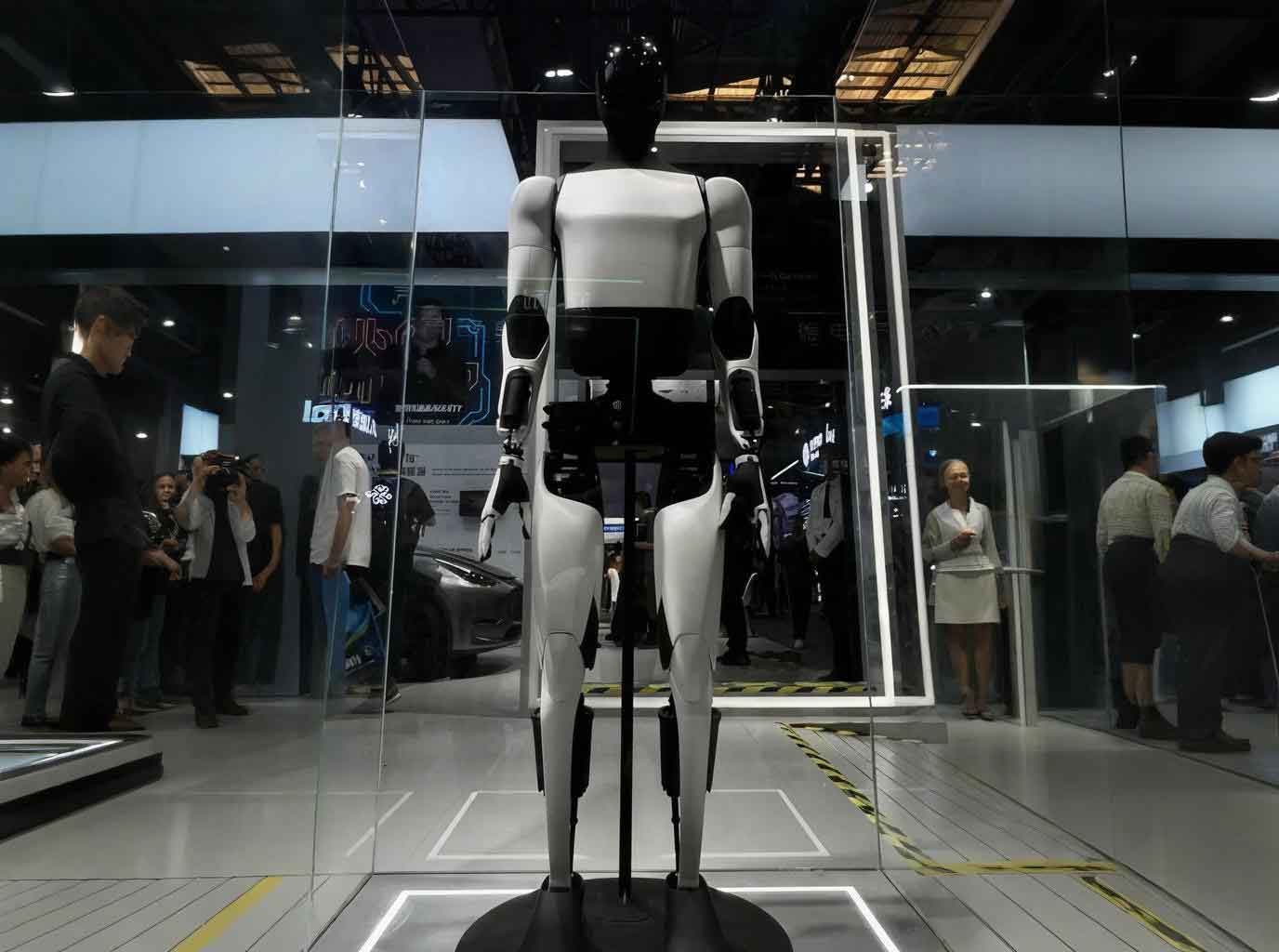

The evolution of intelligent technology is ushering in a paradigm shift in how humans acquire, interact with, and internalize knowledge. Traditional models of knowledge service, characterized by static symbols and passive consumption through reading, listening, or watching, are proving inadequate for the dynamic, experiential, and highly personalized demands of the modern user. There is an urgent need to deepen the supply-side reform of knowledge services and seek new models empowered by digital technologies. In this transformative landscape, the concept of embodied intelligence knowledge service emerges as a groundbreaking frontier. This paradigm leverages the profound integration of a physical or virtual presence within the user’s context to deliver knowledge not as abstract information, but as a lived, interactive experience. At the heart of this revolution is the embodied AI robot, a sophisticated agent that combines physical instantiation, environmental perception, and cognitive reasoning to bridge the gap between digital knowledge and human understanding. This article explores the theoretical foundations, technological architecture, systemic framework, and expansive application prospects of knowledge services delivered through the lens of embodied AI, positioning the embodied AI robot as the central actor in this new ecosystem.

The theoretical underpinnings of this shift are rooted in the convergence of several key philosophies. Embodied Cognition posits that cognitive processes are deeply rooted in the body’s interactions with the world; understanding is not merely a brain-bound computation but is shaped by sensory-motor experiences. This translates directly to knowledge services, emphasizing the presence of the service agent—whether a physical robot or a virtual avatar—within the user’s spatial and situational context. An embodied AI robot operationalizes this theory by being “there,” enabling a shared situational awareness that forms the basis for relevant and timely knowledge intervention.

Building upon this, the concept of Embodied Knowledge highlights knowledge that is tied to action, sensation, and lived experience. It encompasses perceptual, motor, emotional, and experiential knowing. An effective knowledge service must therefore foster active exploration and manipulation, moving beyond passive dissemination. The embodied AI robot acts as a catalyst for this active learning, guiding users through hands-on tasks, simulating complex scenarios, and providing kinetic feedback, thereby transforming inert data into actionable, “felt” understanding.

Finally, Embodied Communication theory broadens the perspective to the dynamics of interaction itself, asserting that meaning is co-constructed through multimodal signals—gesture, posture, gaze, and tone—not just language. This underscores the connectivity between the service provider and recipient, as well as between virtual and physical realms. The embodied AI robot, with its capacity for natural gesture, affective computing, and seamless cross-reality presence, becomes a powerful medium for this rich, connected communication, ensuring knowledge transfer is intuitive, engaging, and contextually resonant.

The realization of this vision rests on a powerful technological bedrock, a synergistic “Four Pillars” architecture where the embodied AI robot serves as the unifying physical or virtual embodiment.

| Pillar | Core Function | Contribution to Embodied AI Robot |
|---|---|---|
| Metaverse & Spatial Computing | Creates persistent, immersive, and interactive 3D environments. | Provides the infinite, scalable “field of operation” and shared contextual space. The robot’s digital twin can operate here, or it can use spatial data to navigate and understand the physical world. |
| AIGC & Large Language Models (LLMs) | Generates, reasons with, and personalizes content and dialogue dynamically. | Serves as the “cognitive brain.” Enables natural language interaction, contextual reasoning, personalized knowledge synthesis, and adaptive response generation for the robot. |
| Digital Humans & Avatars | Offers photorealistic or stylized human-like agents for virtual interaction. | Provides the primary visual and interactive interface in virtual/metaverse settings. It is the virtual instantiation of the service agent, capable of empathetic, face-to-face knowledge delivery. |
| Robotics & Sensor Fusion | Provides physical presence, mobility, and environmental sensing (LiDAR, vision, force/touch). | Constitutes the “physical body.” Enables navigation, object manipulation, haptic interaction, and real-world data collection, grounding knowledge services in tangible reality. |

The embodied AI robot is not merely one of these pillars but the integrative platform that binds them. Its “body” (robotics/avatar) interacts within a “world” (Metaverse/real space), guided by a “mind” (AIGC), to perform knowledge acts. The service framework built upon this integration undergoes a fundamental transformation across four key dimensions, as summarized below:

| Service Dimension | Traditional Paradigm | Embodied AI Robot Paradigm | Enabling Mechanism |
|---|---|---|---|
| Knowledge Production | Digital, static, author-centric. | Humanized & Experiential: Data is transformed into interactive, multi-sensory knowledge scenarios. | AIGC generates narrative-driven simulations; robot sensors capture real-world data to create authentic learning modules. |
| Knowledge Processing | Professional, standardized, one-size-fits-all. | Precise & Personalized: Content is dynamically tailored to user’s cognitive level, learning style, and real-time context. | LLMs analyze user interaction history and physiological feedback (via robot sensors) to adapt content complexity and presentation mode. |
| Knowledge Dissemination | Broadcast, linear, symbolic. | Intuitive & Interactive: Knowledge is conveyed through guided doing, spatial demonstration, and Socratic dialogue. | The robot uses gesture, gaze, movement, and object manipulation to demonstrate procedures or explain spatial concepts within a Metaverse or real environment. |
| Knowledge Feedback & Evolution | Surveys, tests, lagging indicators. | Continuous & Contextual: Real-time assessment through action completion, gaze tracking, and conversational analysis drives immediate service optimization. | Robot vision and dialogue systems assess user confusion or mastery, creating a feedback loop $$F(t)$$ that instantly adjusts the service path $$S(t)$$. |

This framework can be formalized as an adaptive service loop. Let the state of the user-context be represented by a multi-modal vector $$C_t$$ at time $$t$$, encapsulating verbal query, physical environment, user emotional state, and task progress. The embodied AI robot possesses a knowledge model $$K$$ and an action policy $$\pi$$. The service interaction is a sequence in which the robot observes $$C_t$$, uses its AIGC core to reason and retrieve relevant knowledge $$k_t \subset K$$, and then executes an embodied action $$a_t = \pi(k_t, C_t)$$ (e.g., speaking, pointing, manipulating an object, displaying a hologram). The resulting user response and changed context yield $$C_{t+1}$$, closing the loop. The system’s goal is to maximize a utility function $$U$$ representing knowledge assimilation, defined as the negative of the accumulated distance between the user’s mental model $$M_{user}$$ and the target knowledge model $$M_{target}$$ over the service interval $$[t_0, T]$$, so that maximizing $$U$$ means closing the gap between the two models as quickly as possible:

$$ \text{Maximize } U = -\int_{t_0}^{T} \| M_{user}(t) - M_{target} \| \, dt $$

where the evolution of $$M_{user}(t)$$ is directly influenced by the embodied actions $$a_t$$ of the robot. This closed-loop, contextual, and action-oriented model is what distinguishes the embodied AI robot from any previous knowledge delivery system.
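The loop above can be made concrete with a small simulation. The following is a minimal sketch under strong simplifying assumptions that are not part of the framework itself: the mental models are short numeric vectors, the robot can observe the per-topic gap directly, the policy chooses between only two hypothetical actions (`explain` and `demonstrate`), and each action closes a fixed fraction of the gap on one topic. The names `Context`, `retrieve`, `policy`, `GAIN`, and `run_service_loop` are illustrative, and the integral in $$U$$ is approximated by a left Riemann sum with step `dt`.

```python
import math
from dataclasses import dataclass


@dataclass
class Context:
    """Toy stand-in for C_t: what the robot observes at step t."""
    gaps: list[float]  # per-topic gap between target and user understanding
    engaged: bool      # crude proxy for the user's emotional state


def distance(m_user, m_target):
    """Euclidean norm || M_user - M_target ||."""
    return math.sqrt(sum((u - t) ** 2 for u, t in zip(m_user, m_target)))


def retrieve(context: Context) -> int:
    """Select k_t: the topic with the largest observed gap."""
    return max(range(len(context.gaps)), key=lambda i: abs(context.gaps[i]))


def policy(topic: int, context: Context) -> tuple[str, int]:
    """pi(k_t, C_t): explain verbally if the user is engaged, else demonstrate."""
    return ("explain" if context.engaged else "demonstrate", topic)


# Assumed fraction of the gap closed by each action type (illustrative numbers).
GAIN = {"explain": 0.3, "demonstrate": 0.5}


def run_service_loop(m_user, m_target, steps=20, dt=1.0):
    """Simulate observe -> retrieve -> act -> update and accumulate U."""
    m_user = list(m_user)  # copy so the caller's list is not modified
    utility = 0.0
    for _ in range(steps):
        d = distance(m_user, m_target)
        utility -= d * dt  # left Riemann sum of -||M_user(t) - M_target||
        gaps = [t - u for u, t in zip(m_user, m_target)]
        context = Context(gaps=gaps, engaged=d < 1.0)  # observe C_t
        topic = retrieve(context)                      # k_t
        action, topic = policy(topic, context)         # a_t = pi(k_t, C_t)
        m_user[topic] += GAIN[action] * gaps[topic]    # user model evolves
    return m_user, utility


if __name__ == "__main__":
    final_model, u = run_service_loop([0.0, 0.0, 0.0], [1.0, 2.0, 0.5])
    print("final user model:", final_model)
    print("approximate utility U:", u)
```

In this toy setting every action shrinks the gap on one topic, so the distance to the target never increases and $$U$$ is bounded below by the cost of doing nothing. A real system would have to estimate the gap from indirect signals such as dialogue, gaze, or task completion rather than read it off directly.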

The applications of this paradigm are vast and transformative. The following table outlines its impact across major sectors:

| Sector | Traditional Challenge | Embodied AI Robot Solution | Exemplar Scenario |
|---|---|---|---|
| Education & Training | Abstract theory, lack of practice, one-way instruction. | An embodied AI robot acts as a personal lab instructor or historical guide. In a Metaverse chemistry lab, it demonstrates procedures, warns of unsafe virtual actions, and explains outcomes. In vocational training, a physical robot guides a trainee through a physical repair task, offering hands-on correction. | A medical student practices a surgical procedure with a robotic tutor that provides haptic feedback on incision depth and real-time verbal guidance, powered by an AIGC model simulating patient physiology. |
| Public Cultural Heritage | Passive viewing, physical preservation limits access, lack of engagement. | A museum deploys an embodied AI robot guide (physical or as an AR avatar) that doesn’t just recite facts but tells interactive stories, “resurrects” historical figures for Q&A, and allows visitors to virtually handle fragile artifacts through a robotic telepresence interface. | At an ancient ruins site, an AR-enabled robot guide overlays reconstructions onto the physical ruins and narrates the story from the perspective of a former inhabitant, whose persona is generated dynamically by an LLM. |
| Healthcare & Therapy | Generalized advice, poor patient adherence, limited monitoring. | A companion embodied AI robot provides personalized rehabilitation coaching, demonstrating exercises, monitoring form via computer vision, and offering encouragement. It can also serve as an empathetic conduit for mental health first aid, conducting therapeutic conversations and detecting signs of distress. | For post-stroke motor recovery, a robot guides a patient’s limb through prescribed movements, adapts the exercise difficulty in real time based on performance metrics, and maintains a motivational dialogue. |
| Industrial & Technical Support | Complex manuals, expert scarcity, costly downtime. | A field-service embodied AI robot can be deployed to assist a technician. Using augmented reality, it overlays schematic diagrams onto machinery, identifies faulty components through thermal imaging, and provides step-by-step repair instructions verbally and through gesture, accessing a vast AIGC-maintained knowledge base. | A technician repairing a wind turbine is assisted by a drone-based robotic agent that visually inspects hard-to-reach areas, streams video to an LLM for anomaly diagnosis, and projects repair annotations directly onto the equipment in the technician’s AR glasses. |

In conclusion, the fusion of embodied cognition principles with the capabilities of advanced AI and robotics is not merely an incremental improvement but a fundamental redefinition of knowledge services. The embodied AI robot stands as the central, active agent in this new ecosystem: a teacher that demonstrates, a guide that accompanies, a coach that corrects, and a collaborator that reasons within shared physical or virtual contexts. It transforms knowledge from a commodity to be consumed into an experience to be lived and a skill to be practiced. The framework presented here, spanning its theoretical logic, technological pillars, systemic processes, and diverse applications, provides a roadmap for building more comprehensive, intelligent, and human-centric knowledge service networks. As these technologies mature, the focus must remain on seamless integration, robust and ethical feedback mechanisms, and user-centered design in order to realize the potential of the embodied AI robot in driving the high-quality development of knowledge services across all sectors of society. The future of learning, understanding, and expertise will be shaped not just by what we know, but by how our intelligent, embodied partners help us experience and interact with that knowledge.