The evolution of manufacturing towards intelligence and flexibility has exposed the inherent limitations of traditional teach-and-playback robot programming. This method struggles to adapt to “high-mix, low-volume” production and hinders rapid task redeployment. In this context, human-robot interaction is shifting towards more efficient and intuitive paradigms. Learning from Demonstration (LfD) emerges as a pivotal technological framework, enabling robots to acquire executable policies by observing and interpreting human demonstrations. This significantly lowers the programming barrier and enhances the environmental adaptability of robotic systems. By abstracting strategies from human actions, LfD effectively constructs a skill library for robotic agents.

The integration of Internet of Things (IoT) technology presents transformative opportunities for LfD systems. By connecting vision sensors, Augmented Reality (AR) devices, and industrial robots to an IoT platform, we enable real-time acquisition, efficient transmission, and synergistic processing of multi-source data. This convergence facilitates the construction of an embodied intelligent system endowed with perception, decision-making, and execution capabilities. Such a system not only achieves precise imitation of human operations but also possesses a degree of generalization and self-adaptation to cope with dynamically changing industrial environments.

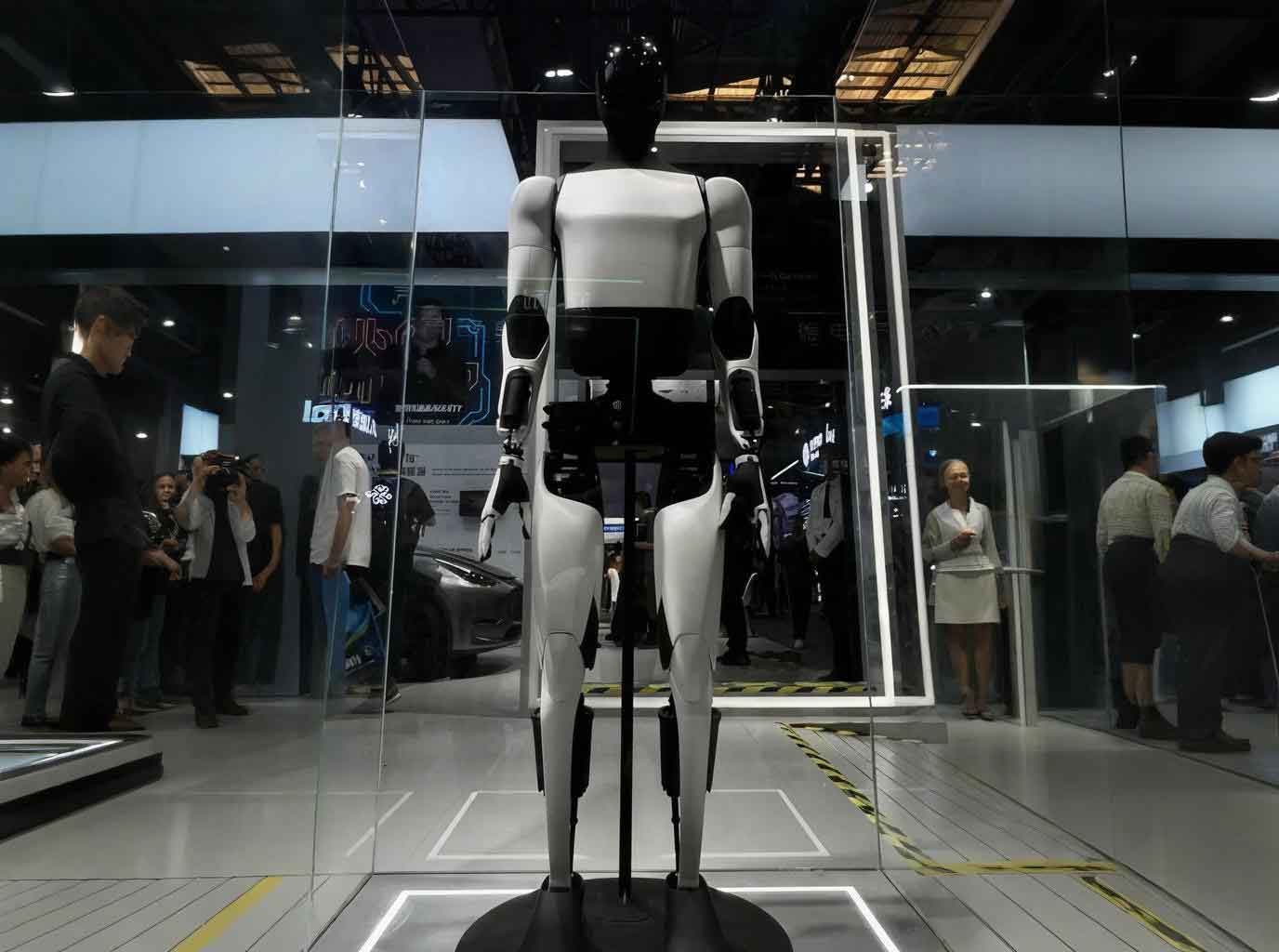

An Industrial Embodied Agent (IEA) represents an intelligent production system that fuses industrial robotics with artificial intelligence. Conceptually, an IEA is a “thinking production line” built upon clusters of robots (e.g., for welding, grinding) overlaid with edge devices and large cloud-based AI models. Within this architecture, the embodied AI robot is responsible for precise execution; edge devices aggregate multi-source data from robots, PLCs, and sensors; and cloud AI models use this data for adaptive process optimization, predictive maintenance, quality traceability, and real-time defect alerts.

Building upon traditional teaching methods, this research integrates AR interaction, visual teaching, and IoT technologies to construct a multi-modal input LfD system. The system leverages IoT for device interconnection and data fusion, employing a combined cloud-training and edge-inference approach to enhance skill learning efficiency, thereby offering a novel technical pathway for intelligent human-robot collaboration in industrial settings.

System Architecture for IoT-Enabled Embodied AI Learning

The proposed IoT-based LfD system for Industrial Embodied Agents analyzes operational demonstration data to construct generalizable policy models. This empowers the embodied AI robot to adapt its actions based on environmental perception to complete specified tasks. By removing much of the manual programming effort, the system supports the intelligent development and scalable application of robotics. The architecture is structured into three layers: the Industrial Field Layer, the Network Layer, and the Platform Service Layer.

System Composition and Deployment

The Industrial Field Layer integrates perception devices such as cameras, AR glasses, and Inertial Measurement Units (IMUs) for data collection. It also deploys edge computing nodes responsible for real-time data preprocessing and model inference, ensuring system responsiveness and reliability. The Network Layer utilizes industrial-standard communication protocols like OPC UA and MQTT to guarantee efficient and reliable data transmission between the field devices and the platform. The Platform Service Layer hosts the AI computing engine, performing in-depth analysis of demonstration data and model training to support intelligent decision-making and optimization functions.
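As a rough illustration of the data path between the field and platform layers, the sketch below serializes one demonstration sample into a JSON payload and derives a topic string for it. The `DemoSample` fields, the `factory/<cell>/<device>/demo` topic layout, and the helper names are assumptions made for this example; the source does not specify a message schema, and no MQTT or OPC UA client library is called here.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class DemoSample:
    """One demonstration sample (hypothetical schema, not from the source)."""
    device_id: str       # identifier of the field device that produced the sample
    timestamp_s: float   # capture time in seconds on the shared clock
    position_m: list     # end-effector position [x, y, z] in metres


def topic_for(cell: str, device_id: str) -> str:
    """Build a hierarchical topic name (assumed naming convention)."""
    return f"factory/{cell}/{device_id}/demo"


def encode(sample: DemoSample) -> bytes:
    """Serialize a sample to UTF-8 JSON bytes, ready to hand to a publisher."""
    return json.dumps(asdict(sample), sort_keys=True).encode("utf-8")


def decode(payload: bytes) -> DemoSample:
    """Inverse of encode(): rebuild the sample on the platform side."""
    return DemoSample(**json.loads(payload.decode("utf-8")))
```

A publisher on the edge node would pass `topic_for(...)` and `encode(...)` to whichever client library the deployment uses; keeping the schema in one dataclass means the field and platform sides cannot drift apart silently.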

In this system, the IoT platform acts as the central data hub and scheduler. It integrates and orchestrates data from heterogeneous field devices through standardized protocols, providing unified access to on-site data. This continuous, high-quality data stream is crucial for the LfD process and enables cross-factory skill model sharing and incremental updates. The system employs a training-inference decoupled deployment strategy. Cloud-based training servers utilize collected demonstration data to train skill recognition and policy generation models, while edge devices are responsible for model deployment and real-time inference. This division both ensures high-quality model training and meets the real-time requirements of task execution.

Methodologies for Robot Learning from Demonstration

Robot LfD can be primarily categorized into two methodological approaches: trajectory-level (based on motion capture) and skill-level (based on demonstration video) learning. Each caters to different task requirements and complexities inherent to the embodied AI robot operation.

Trajectory-Level Demonstration Learning via Motion Capture

This method records the operator’s precise motion trajectories using high-accuracy capture systems. After coordinate transformation and kinematic solving, these trajectories generate control commands for the robotic arm. It is particularly suitable for high-precision tasks like welding or dispensing.
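The coordinate transformation step can be sketched as a rigid-body mapping from the capture frame to the robot base frame, \( \mathbf{p}^{\text{base}} = \mathbf{R}\,\mathbf{p}^{\text{cap}} + \mathbf{t} \). The example below assumes the calibration \( (\mathbf{R}, \mathbf{t}) \) is already known (for instance from a prior registration step) and uses a rotation about the z-axis purely for illustration; the function names are hypothetical.

```python
import math


def rot_z(theta):
    """3x3 rotation matrix for a rotation of theta radians about the z-axis."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0],
            [s, c, 0.0],
            [0.0, 0.0, 1.0]]


def transform_point(R, t, p):
    """Map a point p from the capture frame to the base frame: R @ p + t."""
    return [sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3)]


def transform_trajectory(R, t, points):
    """Apply the same rigid transform to every captured position."""
    return [transform_point(R, t, p) for p in points]


# Assumed calibration: capture frame rotated 90 degrees about z and
# shifted 1 m along x relative to the robot base frame.
R = rot_z(math.pi / 2)
t = [1.0, 0.0, 0.0]
captured = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.5]]
in_base_frame = transform_trajectory(R, t, captured)
```

Orientations would be transformed analogously by left-multiplying each captured rotation matrix with \( \mathbf{R} \); that part is omitted to keep the sketch short.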

The core mechanism of AR technology involves overlaying computer-generated virtual information (e.g., virtual objects, spatial annotations) seamlessly onto the real environment, enhancing the user’s perception of reality while preserving the capacity for real-time interaction with the physical world. Our system integrates AR interaction to construct a multi-modal LfD framework. Leveraging AR’s spatial positioning and visual trajectory recognition capabilities, operators can perform intuitive action demonstrations. The system automatically captures and generates structured demonstration data to form policy models, significantly enhancing programming efficiency and the environmental adaptability of the embodied AI robot.

Multi-modal perception and virtual-real interaction are achieved through AR devices and multi-sensor fusion. In the IoT environment, all sensing devices and the robot system are networked. The operator, wearing an AR device, sees virtual guides and real-time feedback overlaid on the real scene, interacting naturally via gestures or voice. SLAM (Simultaneous Localization and Mapping) technology ensures spatial alignment and registration between virtual and real scenes. Data processing begins at the edge node, which performs cleaning and feature extraction on raw field data before sending it to the IoT platform for further modeling. By learning from multiple demonstrations, the system can extract optimal motion trajectories and perform online adjustments based on real-time sensor feedback to adapt to varying workpiece positions.

This approach excels in scenarios demanding extreme motion precision. The integration of IoT enables multi-device cooperative teaching and allows remote experts to participate in real-time, greatly enhancing flexibility. In robot interactive programming, AR-based spatial planning differs markedly from traditional offline programming. It allows operators to intuitively adjust the optimal pose of a virtual tool within the AR environment. The core mechanism fuses visual data from cameras with spatial depth data from laser rangefinders, superimposing a virtual interface onto the real workspace. The technical architecture involves multi-modal sensor arrays for scene digitization, SLAM for coordinate system alignment, and an AR engine to generate the interactive interface. The system then uses inverse kinematics to convert virtual manipulations into executable joint-space commands for the embodied AI robot.
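To make the inverse-kinematics step concrete, the sketch below solves the textbook closed-form case of a two-link planar arm. This is a deliberately simplified stand-in: an industrial six-axis arm needs a full spatial solver, and the link lengths and function names here are assumptions for illustration only.

```python
import math


def forward_kinematics(l1, l2, q1, q2):
    """Tool position (x, y) of a two-link planar arm with joint angles q1, q2."""
    x = l1 * math.cos(q1) + l2 * math.cos(q1 + q2)
    y = l1 * math.sin(q1) + l2 * math.sin(q1 + q2)
    return x, y


def inverse_kinematics(l1, l2, x, y):
    """Joint angles (q1, q2) that reach (x, y); returns the solution with q2 in [0, pi]."""
    cos_q2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(cos_q2) > 1.0 + 1e-9:
        raise ValueError("target is outside the reachable workspace")
    cos_q2 = max(-1.0, min(1.0, cos_q2))  # guard against rounding at the boundary
    q2 = math.acos(cos_q2)
    q1 = math.atan2(y, x) - math.atan2(l2 * math.sin(q2), l1 + l2 * math.cos(q2))
    return q1, q2


# A virtual tool pose chosen in the AR view (hypothetical values, in metres).
q1, q2 = inverse_kinematics(0.4, 0.3, 0.5, 0.3)
reached = forward_kinematics(0.4, 0.3, q1, q2)
```

Running the forward kinematics on the returned angles is a cheap consistency check before any command is sent to the controller.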

The mathematical representation of a demonstrated trajectory \( \tau \) can be modeled as a time-series of end-effector poses:

$$
\tau = \{ \mathbf{p}_t, \mathbf{R}_t \}_{t=1}^{T}, \quad \text{where } \mathbf{p}_t \in \mathbb{R}^3, \ \mathbf{R}_t \in SO(3)
$$

where \( T \) is the number of time steps in the trajectory, \( \mathbf{p}_t \) is the 3D position, and \( \mathbf{R}_t \) is the rotation matrix at time \( t \). Learning involves finding a policy \( \pi \) that reproduces this trajectory, often through dynamic movement primitives (DMPs) or probabilistic models like Gaussian Mixture Models (GMMs).
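As a much simpler stand-in for DMPs or GMMs, the sketch below combines several demonstrations by resampling each to a common number of steps over normalized time and averaging the positions \( \mathbf{p}_t \). It is not the method used in the system; it only illustrates how demonstrations of different lengths can be brought onto one time base. Rotations \( \mathbf{R}_t \) are left out because they cannot be averaged component-wise, and all names are hypothetical.

```python
def resample(points, n):
    """Linearly resample a list of 3D points to n samples over normalized time."""
    if len(points) < 2 or n < 2:
        raise ValueError("need at least two points and two output samples")
    last = len(points) - 1
    out = []
    for k in range(n):
        u = k * last / (n - 1)       # fractional index into the original samples
        i = min(int(u), last - 1)    # left neighbour, clamped so i + 1 stays valid
        a = u - i                    # interpolation weight of the right neighbour
        p, q = points[i], points[i + 1]
        out.append([(1.0 - a) * p[d] + a * q[d] for d in range(3)])
    return out


def mean_trajectory(demos, n=50):
    """Average several demonstrations point-wise after time normalization."""
    resampled = [resample(demo, n) for demo in demos]
    return [[sum(r[k][d] for r in resampled) / len(resampled) for d in range(3)]
            for k in range(n)]


demo_a = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]]                   # two samples
demo_b = [[0.0, 0.0, 0.0], [0.5, 0.0, 0.0], [1.0, 2.0, 0.0]]  # three samples
average = mean_trajectory([demo_a, demo_b], n=3)
```

Point-wise averaging assumes the demonstrations progress at a similar relative pace; where they do not, a time-alignment step would be needed first.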

Skill-Level Demonstration Learning from Video

Skill-level learning transcends specific trajectories, abstracting demonstrations into sequences of perception-action units. It focuses on extracting transferable, general skills from observations, making it suitable for adaptable tasks like assembly and handling. This method is particularly valuable for complex tasks with high dexterity requirements, such as wire harness assembly or fine adjustment, where it can capture implicit expert knowledge (e.g., force control, subtle manipulations).

The process involves several key steps. First, vision data is acquired and processed. Depth cameras capture demonstration videos, and computer vision algorithms extract key information like hand posture, object state, and action sequences. The IoT platform coordinates multiple visual sensors to ensure spatiotemporal consistency of data. By fusing multi-angle video streams, the system can reconstruct the operator’s 3D action trajectory and object state transitions. Subsequently, skill abstraction and policy generation occur. Deep learning-based action recognition and segmentation algorithms decompose the continuous video into a series of skill units (e.g., “grasp”, “insert”, “rotate”), each corresponding to a perception-action pair. By observing multiple demonstrations, the system builds a skill model and extracts invariant features to form a generalizable policy for the embodied AI robot.
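The segmentation step can be sketched as grouping consecutive frames that share a label into skill units. The per-frame labels are assumed to come from an upstream action-recognition model that is not shown; `segment_skills` is a hypothetical helper name.

```python
from itertools import groupby


def segment_skills(frame_labels):
    """Collapse per-frame labels into (skill, first_frame, last_frame) units."""
    segments = []
    start = 0
    for label, run in groupby(frame_labels):
        length = len(list(run))
        segments.append((label, start, start + length - 1))
        start += length
    return segments


# Hypothetical output of a frame-level classifier for a short video clip.
labels = ["grasp", "grasp", "insert", "insert", "insert", "rotate"]
units = segment_skills(labels)
# units == [("grasp", 0, 1), ("insert", 2, 4), ("rotate", 5, 5)]
```

Real classifier output tends to flicker between labels at skill boundaries, so a smoothing pass over the labels would normally precede this grouping.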

This can be formalized as learning a policy \( \pi_\theta \) parameterized by \( \theta \) that maps observed states \( s_t \) (e.g., from video) to actions \( a_t \):

$$
\pi_\theta(a_t | s_t) = P(a_t | s_t; \theta)
$$

The learning objective is often to maximize the likelihood of demonstrated action sequences \( \mathcal{D} = \{(s_{1:T}^{(i)}, a_{1:T}^{(i)})\}_{i=1}^{N} \):

$$
\theta^* = \arg\max_{\theta} \sum_{i=1}^{N} \sum_{t=1}^{T} \log \pi_\theta(a_t^{(i)} | s_t^{(i)})
$$
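For discrete states and actions, the maximum-likelihood objective has a closed-form solution: \( \pi(a \mid s) \) is the fraction of times action \( a \) was demonstrated in state \( s \). The sketch below illustrates this tabular special case; a deployed system would more likely fit a neural policy by gradient ascent on the same objective. The state and action names are made up for the example.

```python
import math
from collections import Counter, defaultdict


def fit_policy(demos):
    """Maximum-likelihood tabular policy: pi(a | s) = count(s, a) / count(s)."""
    counts = defaultdict(Counter)
    for demo in demos:
        for state, action in demo:
            counts[state][action] += 1
    return {
        state: {a: c / sum(actions.values()) for a, c in actions.items()}
        for state, actions in counts.items()
    }


def log_likelihood(policy, demos):
    """Sum of log pi(a_t | s_t) over all demonstrated state-action pairs."""
    return sum(math.log(policy[s][a]) for demo in demos for s, a in demo)


# Two short demonstrations with hypothetical symbolic states and actions.
demos = [
    [("near_part", "grasp"), ("holding", "insert")],
    [("near_part", "grasp"), ("holding", "rotate")],
]
policy = fit_policy(demos)
score = log_likelihood(policy, demos)
```

Any other assignment of probabilities to the demonstrated actions yields a lower `score` on the same data, which is what the argmax in the objective expresses.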

The following table contrasts the two core LfD methodologies implemented in our system for the embodied AI robot.

| Aspect | Trajectory-Level Learning | Skill-Level Learning |
|---|---|---|
| Primary Input | Precise motion capture data (e.g., from AR, IMUs) | Demonstration videos (2D/3D) |
| Learning Focus | Geometric path and kinematic reproduction | Semantic action segmentation and policy abstraction |
| Key Output | Time-parameterized trajectory \( \tau \) | Policy \( \pi_\theta(s_t) \) or skill sequence graph |
| Adaptation Mechanism | Online trajectory warping based on sensor feedback | Conditional policy execution based on perceived state |
| Typical Use Case | Welding, glue dispensing, precise motion tasks | Assembly, kitting, packing, dexterous manipulation |
| Data Efficiency | Often requires fewer demonstrations for a specific path | May require more data to learn robust, general skills |
| Role of IoT | Synchronizes multi-sensor data for accurate capture; enables remote expert demonstration. | Coordinates multi-camera views; facilitates sharing of skill models across production lines. |

IoT-Enabled Key Technologies for Embodied AI

The IoT platform is not merely a connectivity layer but the enabling foundation that transforms a collection of robots and sensors into a cohesive, intelligent embodied AI robot system. Its key technological contributions are multi-faceted.

Multi-Source Data Fusion and Synchronization

The platform integrates heterogeneous data from visual, inertial, and force-torque sensors. By establishing a unified temporal standard (e.g., via Precision Time Protocol) and performing coordinate transformation into a common reference frame, it achieves robust multi-modal data fusion. This fusion enriches the environmental context for skill learning, providing a more complete representation of the demonstration. The state observation \( s_t \) for the embodied AI robot can thus be a composite vector:

$$
s_t = [ \mathbf{v}_t^{\text{vision}}, \ \mathbf{i}_t^{\text{IMU}}, \ \mathbf{f}_t^{\text{force}}, \ \mathbf{o}_t^{\text{object}} ]^T
$$

where each component originates from a different sensor stream synchronized via the IoT infrastructure.
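One simple way to assemble such a composite state from streams sampled at different rates is nearest-timestamp matching on the shared clock. The sketch below assumes timestamps are already synchronized and sorted, and that each stream supplies a small feature list per sample; the stream names mirror the equation, while the helper names and data layout are hypothetical.

```python
from bisect import bisect_left


def nearest(timestamps, values, t):
    """Return the value whose timestamp is closest to t (timestamps sorted ascending)."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return values[0]
    if i == len(timestamps):
        return values[-1]
    before, after = timestamps[i - 1], timestamps[i]
    return values[i] if after - t < t - before else values[i - 1]


def build_state(t, streams):
    """Concatenate the nearest sample of each stream into one state vector s_t."""
    state = []
    for name in ("vision", "imu", "force", "object"):
        timestamps, values = streams[name]
        state.extend(nearest(timestamps, values, t))
    return state


# Toy streams with different sampling rates: (timestamps in seconds, feature lists).
streams = {
    "vision": ([0.0, 0.1, 0.2], [[1], [2], [3]]),
    "imu": ([0.0, 0.05, 0.1, 0.15], [[10], [11], [12], [13]]),
    "force": ([0.02, 0.12], [[5], [6]]),
    "object": ([0.0], [[7]]),
}
s_t = build_state(0.1, streams)
```

Interpolating between neighbouring samples instead of picking the nearest one is a common refinement for smoothly varying signals such as force or IMU readings.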

Distributed Learning and Knowledge Sharing

The IoT architecture facilitates the secure sharing of demonstration data and skill models across different factories and production lines, fostering a collaborative learning ecosystem. Techniques like Federated Learning allow multiple edge nodes (each with a local embodied AI robot) to collaboratively train a global model \( \theta_G \) without sharing raw local data \( \mathcal{D}_k \). The aggregation on the cloud server can be represented as:

$$
\theta_G^{(n+1)} \leftarrow \sum_{k=1}^{K} \frac{|\mathcal{D}_k|}{|\mathcal{D}|} \theta_k^{(n+1)}, \quad \text{where } \theta_k^{(n+1)} \leftarrow \text{Train}(\theta_G^{(n)}, \mathcal{D}_k)
$$

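Here \( |\mathcal{D}| = \sum_{k=1}^{K} |\mathcal{D}_k| \) is the total number of local samples, so each node's update is weighted by its share of the data. This accelerates the collective skill acquisition process while maintaining data privacy.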
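The aggregation step can be sketched in a few lines, treating each local model as a flat list of parameters. Local training, communication, and any secure-aggregation machinery are outside the scope of this example, and `federated_average` is a hypothetical helper name.

```python
def federated_average(local_params, local_sizes):
    """Weighted average of local parameter vectors, weights |D_k| / |D|."""
    if len(local_params) != len(local_sizes) or not local_params:
        raise ValueError("need one dataset size per local model")
    total = sum(local_sizes)
    dim = len(local_params[0])
    return [
        sum(n / total * theta[j] for theta, n in zip(local_params, local_sizes))
        for j in range(dim)
    ]


# Two edge nodes: the second holds three times as much demonstration data.
theta_1 = [1.0, 2.0]
theta_2 = [3.0, 6.0]
theta_global = federated_average([theta_1, theta_2], [1, 3])
# theta_global == [2.5, 5.0]
```

Weighting by dataset size keeps a node with few demonstrations from pulling the global model as strongly as a node with many.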

Remote Teaching and Collaborative Operation

IoT enables remote experts to connect to a local teaching environment via AR devices, achieving “remote hands-on” instruction. The expert’s actions in a virtual environment are captured in real-time, translated into commands, and executed by the local embodied AI robot. This drastically expands the scope and efficiency of skill transfer, overcoming geographical barriers.

Skill Transfer and Adaptive Execution

Once a skill model is generated and deployed, the embodied AI robot can invoke corresponding skill modules based on real-time perception. The IoT platform continuously collects execution state data and environmental feedback. By comparing this with the demonstration data, it can detect execution deviations and trigger policy adjustment mechanisms. This closed-loop adaptation is crucial for handling natural variances in real-world tasks.
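A minimal form of the deviation check compares executed positions against the demonstrated reference and flags the run when the largest point-wise distance exceeds a tolerance. The 5 mm default and the function names are assumptions for illustration, and both trajectories are assumed to be sampled at the same time steps.

```python
import math


def max_deviation(reference, executed):
    """Largest Euclidean distance between corresponding trajectory points."""
    if len(reference) != len(executed):
        raise ValueError("trajectories must have the same number of samples")
    return max(math.dist(r, e) for r, e in zip(reference, executed))


def needs_adjustment(reference, executed, tolerance_m=0.005):
    """True when execution drifts further from the demonstration than allowed."""
    return max_deviation(reference, executed) > tolerance_m


reference = [[0.0, 0.0, 0.0], [0.1, 0.0, 0.0]]
executed = [[0.0, 0.0, 0.001], [0.1, 0.01, 0.0]]
flag = needs_adjustment(reference, executed)
```

What happens after the flag is raised (re-planning, slowing down, or requesting a new demonstration) depends on the policy adjustment mechanism, which this sketch leaves open.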

The table below summarizes the core IoT-enabled technologies and their impact on the embodied AI robot LfD system.

| Technology | Functional Description | Benefit for Embodied AI Robot |
|---|---|---|
| Unified Data Fusion | Synchronizes and fuses vision, motion, and force data into a coherent state representation \( s_t \). | Provides rich, context-aware perception for robust policy learning and execution. |
| Cloud-Edge Synergy | Deploys heavy model training to the cloud and time-critical inference to the edge. | Balances learning quality with real-time performance, enabling online adaptation. |
| Federated Learning Framework | Enables collaborative model training across multiple robot cells without centralizing raw data. | Accelerates system-wide skill acquisition and improves model generalization while ensuring privacy. |
| Remote AR Gateway | Streams AR context and teleoperation commands between remote experts and local robots. | Allows for instantaneous skill transfer and troubleshooting from anywhere, reducing downtime. |
| Digital Twin Feedback Loop | Maintains a virtual model of the robot and environment, comparing expected vs. actual execution. | Enables predictive adjustment of policies and facilitates what-if analysis for new tasks. |

System Application and Validation

To validate the practical performance of the proposed system, application tests were conducted in several typical industrial scenarios, showcasing the versatility of the embodied AI robot.

In an assembly cell, the system, utilizing AR teaching and visual skill learning modules, enabled robots to autonomously perform various wire harness insertion and fastener assembly tasks. The system demonstrated the ability to recognize different part models and adapt to dimensional variances, adjusting assembly paths and operation forces in real-time. Compared to traditional manual programming methods, this system reduced robot task programming time by approximately 70%, significantly improving production line reconfiguration responsiveness and efficiency for high-mix manufacturing.

In a welding station, the system achieved consistent, high-quality weld reproduction by capturing demonstration data from skilled workers. Leveraging the multi-modal perception system and IoT feedback mechanisms, the embodied AI robot could monitor key parameters such as welding temperature and bead geometry. Based on this perceptual information, it dynamically optimized the welding trajectory and process parameters. This resulted in an increase in product qualification rate by approximately 15%.

These application results demonstrate that the proposed system, through the deep integration of multi-modal perception, IoT coordination, and LfD mechanisms, effectively enhances the task adaptability and operational flexibility of industrial robots in complex environments, providing a validated technical solution for flexible production.

Conclusion and Future Perspectives

The IoT-architected LfD system for Industrial Embodied Agents, by fusing AR interaction, multi-modal perception, and cloud-edge collaborative processing technologies, achieves efficient learning and reproduction of human operational skills. The primary conclusions are as follows:

- IoT technology provides the system with device interconnection, data fusion, and distributed learning capabilities, enabling it to adapt to complex industrial environments and support cross-platform skill sharing and real-time optimization for the embodied AI robot.

- The combination of trajectory-level and skill-level LfD methodologies allows the system to accomplish both high-precision trajectory reproduction and semantic skill abstraction, significantly elevating the intelligence level and application flexibility of industrial robots.

- The system has shown promising results in practical industrial applications such as assembly and welding, validating its effectiveness and practicality in improving programming efficiency, adaptability, and operational quality.

Future work will focus on several key directions to advance the capabilities of the embodied AI robot. First, it will explore more efficient few-shot skill learning methods to reduce dependency on large demonstration datasets. Second, it will investigate cross-task skill transfer mechanisms to enhance the system’s generalization capability. Third, it will strengthen IoT security mechanisms to ensure robust data security and privacy protection in industrial networks. Fourth, it will further refine the AR human-robot interaction experience to minimize cognitive load and make the system more intuitive for operators. The continuous evolution of these areas will further solidify the role of embodied AI robot systems as intelligent, flexible, and collaborative partners in the factory of the future.