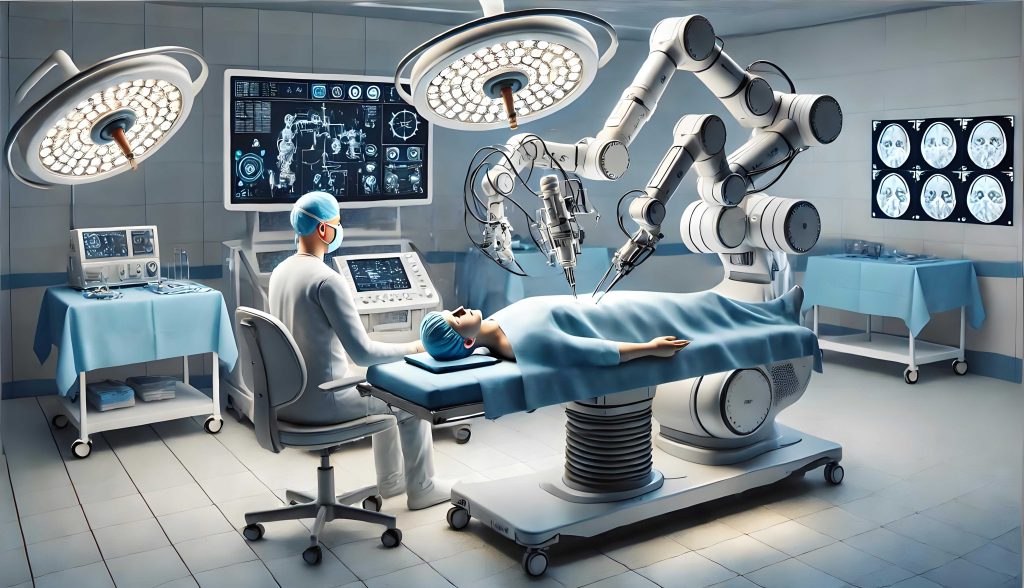

The integration of artificial intelligence into healthcare, particularly through the deployment of medical robots, represents a paradigm shift in diagnostics, surgery, and patient care. This technological evolution, however, introduces profound legal challenges, primarily concerning the fair and efficient allocation of tort liability when a medical robot causes harm. The core of the challenge lies in the dynamic nature of the technology itself; a medical robot’s capacity for autonomy and independent decision-making is not static but evolves through distinct developmental phases. To address liability, we must therefore abandon a one-size-fits-all legal approach and instead construct a framework that corresponds to the robot’s position on the spectrum of AI maturity. This spectrum can be usefully segmented into three stages: the stage of Weak Artificial Intelligence, the transitional stage of Medium Artificial Intelligence, and the prospective stage of Strong Artificial Intelligence. As the medical robot progresses from a sophisticated tool to a potentially autonomous agent, three things change significantly: the legal duties of the healthcare professionals supervising it, the applicability of traditional tort doctrines, and the legal nature of the robot itself. In this article, I explore the relationships between manufacturers, healthcare providers, and the medical robots themselves, and propose a staged model for allocating tort liability that aims to balance innovation with robust patient protection.

The journey of a medical robot begins in the era of Weak AI. Here, the system operates strictly within predefined parameters and algorithms. It possesses no consciousness, no capacity for genuine learning or creativity beyond its initial programming, and its every action is a direct or interpretative response to human input. In this phase, the medical robot functions unequivocally as a “smart” instrument—an advanced surgical arm guided by a surgeon’s console movements or a diagnostic algorithm that processes inputs to suggest outputs. Legally, its characterization aligns perfectly with that of a product, specifically a complex medical device. It is an object, a chattel, without independent legal status. Consequently, the primary legal frameworks for addressing harm are medical malpractice (or organizational liability of the healthcare institution) and product liability law. The central figure in this stage is the human medical professional, who bears a heightened duty of care. Given the medical robot’s complexity and inherent operational risks—such as unexpected mechanical failures or “black box” decision-making processes—the physician or surgeon is obligated to exercise a significant verification obligation. They must scrutinize the robot’s diagnostic suggestions and maintain vigilant oversight during procedures, ready to intervene immediately if the system malfunctions or deviates from the expected path.

The liability scenarios in the Weak AI stage bifurcate based on the source of the error. If the harm stems solely from a flaw in the medical robot (a design defect, manufacturing error, or inadequate warning) and the healthcare professional fulfilled their verification duty, then liability falls squarely under product liability rules. The injured party can seek compensation from the manufacturer, or from the hospital, which may then seek indemnity from the manufacturer. Conversely, if the harm results from the healthcare professional’s negligent use, erroneous command input, or a failure in the hospital’s organizational processes (e.g., poor training, maintenance, or data management), the matter is one of pure medical liability. The most complex case arises from concurrent causation: a defect in the medical robot coincides with a failure in the human verification duty. This constitutes a joint tort, where both the manufacturer (via product liability) and the healthcare provider (via medical liability) can be held jointly and severally liable toward the injured party. The internal apportionment of responsibility between the two leans heavily toward the medical side, reflecting the human’s primary control and heightened duty. We can summarize this allocation with the following inequality, where $L_{MD}$ represents the share of liability assigned to the medical provider and $L_{MR}$ represents the share attributable to the medical robot’s defect, which is borne in practice by the manufacturer; the two shares together make up 100%:

$$L_{MD} > L_{MR} \quad \text{and specifically,} \quad L_{MD} > 50\%, \quad L_{MR} < 50\%$$

| Stage | Legal Nature of Medical Robot | Primary User Duty | Pure Product Fault | Pure User Fault | Concurrent Fault (Joint Tort) |
|---|---|---|---|---|---|
| Weak AI | Medical Device / Tool | High Verification Obligation | 100% Product Liability | 100% Medical Liability | Medical Liability > Product Liability |
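The three Weak AI scenarios summarized in this table can be sketched as a small decision function. This is only an illustration under stated assumptions, not a statutory rule: the function name `allocate_weak_ai`, its parameters, and the default provider share of 60% are hypothetical. The argument here only requires that the provider's share exceed 50% when both faults concur.

```python
from typing import Dict


def allocate_weak_ai(product_defect: bool,
                     provider_fault: bool,
                     provider_share: float = 0.6) -> Dict[str, float]:
    """Return internal liability shares (fractions) for the Weak AI stage.

    provider_share is a hypothetical value used only when both faults
    concur; the article requires nothing more than L_MD > 50%.
    Toward the injured party, concurrent fault remains joint and several.
    """
    if not product_defect and not provider_fault:
        # No fault on either side: nothing to allocate in this sketch.
        return {"medical": 0.0, "product": 0.0}
    if product_defect and not provider_fault:
        # Pure product fault: verification duty was fulfilled.
        return {"medical": 0.0, "product": 1.0}
    if provider_fault and not product_defect:
        # Pure user or organizational fault.
        return {"medical": 1.0, "product": 0.0}
    # Concurrent fault: the provider must carry the larger internal share.
    if not 0.5 < provider_share < 1.0:
        raise ValueError("Weak AI stage requires 0.5 < provider_share < 1.0")
    return {"medical": provider_share, "product": 1.0 - provider_share}


if __name__ == "__main__":
    for defect, fault in [(True, False), (False, True), (True, True)]:
        print(defect, fault, allocate_weak_ai(defect, fault))
```

How far above 50% the provider's share should sit in a given case is left open by the model; a court would presumably set it from the facts, so the sketch exposes it as a parameter rather than fixing it.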

As technology advances, we enter the nebulous but critical realm of Medium AI. In this stage, the medical robot exhibits “semi-autonomy.” It possesses sophisticated machine learning capabilities, allowing it to improve its performance based on new data, make complex inferences, and operate with a significant degree of procedural independence. Its role shifts from “the doctor’s assistant” toward “an assistant doctor.” It may, for instance, autonomously analyze medical images for screening with high reliability, or execute large portions of a surgical plan with minimal real-time guidance. Legally, it remains a product, but its enhanced capabilities fundamentally alter the dynamics of oversight. The previously clear-cut “high verification obligation” becomes blurred. It may be neither practical nor reasonable to expect a human to double-check every output of a system whose diagnostic accuracy in a specific domain has been validated and may even surpass human capability. The key question becomes: When is a healthcare professional obligated to verify the medical robot’s decision?

The answer, I propose, lies in adopting a “Reasonable Physician Standard.” This standard asks whether a responsible body of medical professionals, skilled in the relevant specialty, would consider it appropriate practice to rely on the medical robot’s output in the given clinical context without independent verification. If such reliance constitutes accepted medical practice, the physician’s verification duty is discharged or significantly reduced. If not, the duty persists. This standard introduces a crucial flexibility. Its application leads to two primary liability pathways in the Medium AI stage. First, if the medical robot causes harm due to a product defect and the reasonable physician standard deemed verification unnecessary, liability is solely product-based. Second, if verification was required under the standard and the physician failed to meet this obligation, concurrent with a product defect, a joint tort is established again. However, the internal apportionment of liability now shifts. Because the medical robot is entrusted with a greater share of the primary medical task, and because its advanced, less-predictable “learning” behavior originates from its design and training by the manufacturer, a larger portion of the responsibility is allocated to the product side. The allocation inequality thus reverses compared to the Weak AI stage:

$$L_{MR} > L_{MD} \quad \text{and specifically,} \quad L_{MR} > 50\%, \quad L_{MD} < 50\%$$

| Stage | Legal Nature of Medical Robot | Primary User Duty | Pure Product Fault (No Verification Duty) | Concurrent Fault (Verification Duty Exists) |
|---|---|---|---|---|
| Medium AI | Advanced Product / Semi-Autonomous Agent | Context-Dependent (Reasonable Physician Standard) | 100% Product Liability | Product Liability > Medical Liability |
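The two Medium AI pathways can likewise be sketched in code. The following is a rough illustration only: the function name `allocate_medium_ai`, its parameters, and the default product share of 60% are hypothetical, and whether reliance counts as accepted practice is a legal and clinical judgment that the sketch simply takes as a boolean input. Situations outside the two pathways described here return `None` rather than a guessed allocation.

```python
from typing import Dict, Optional


def allocate_medium_ai(product_defect: bool,
                       reliance_is_accepted_practice: bool,
                       physician_verified: bool,
                       product_share: float = 0.6) -> Optional[Dict[str, float]]:
    """Return internal liability shares (fractions) for the Medium AI stage.

    reliance_is_accepted_practice encodes the outcome of the Reasonable
    Physician Standard: True means no independent verification was owed.
    product_share is a hypothetical value; the article requires only
    L_MR > 50% in the concurrent-fault case.
    """
    if not product_defect:
        # Both pathways presuppose a product defect.
        return None
    if reliance_is_accepted_practice:
        # Pathway 1: no verification duty, so liability is purely product-based.
        return {"product": 1.0, "medical": 0.0}
    if not physician_verified:
        # Pathway 2: duty existed and was breached alongside the defect.
        if not 0.5 < product_share < 1.0:
            raise ValueError("Medium AI stage requires 0.5 < product_share < 1.0")
        return {"product": product_share, "medical": 1.0 - product_share}
    # Duty existed and was met: not one of the two pathways modeled here.
    return None


if __name__ == "__main__":
    print(allocate_medium_ai(True, True, False))
    print(allocate_medium_ai(True, False, False))
```

Compared with the Weak AI stage, the only structural change is that the verification duty is no longer assumed; it is an input determined by the standard, and the larger internal share moves to the product side.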

The prospective stage of Strong AI presents the most profound legal conundrum. Here, the medical robot would achieve or surpass human-level intelligence, possessing full self-awareness, autonomous learning, and creative problem-solving. It could operate completely independently in a clinical setting, making its own judgments and potentially modifying its own operational protocols. This level of autonomy severs the direct chain of command from human to tool, challenging the very foundation of existing liability models. The central debate revolves around legal personhood. Should a Strong AI medical robot be granted a form of limited legal personality, allowing it to hold assets, enter into certain obligations, and be a direct bearer of liability? Or does it remain an extraordinarily complex product, with liability ultimately tracing back to its creators and users?

Granting legal personality, akin to corporate personhood, is a conceptually tidy but practically fraught solution. A responsible medical robot could carry liability insurance or maintain a compensation fund. However, this raises immense philosophical and technical questions about consciousness, intent, and punishment. The alternative—denying personhood—forces us to innovate within the framework of human-centric responsibility. A compelling model for this path is the “Risk-Beneficiary Pooling” principle. Since a Strong AI medical robot would generate value and risk for a broad ecosystem (developers, manufacturers, healthcare systems, insurers, and society at large), a collective compensation mechanism could be established. This could take the form of a mandatory, layered insurance system: a first-layer insurance mandatory for all medical robot operators, supplemented by a central compensation fund financed by levies on the entire industry. The fund $C$ could be modeled as a function of contributions from various stakeholders in the ecosystem:

$$C = \sum_{i=1}^{n} \alpha_i P_i$$

where $P_i$ represents the financial contribution (e.g., levy, premium) from stakeholder group $i$ (manufacturers, software developers, hospitals), and $\alpha_i$ is a weighting factor reflecting their relative benefit and influence over the AI’s risk profile. This model ensures victim compensation while distributing the unassignable risk of truly autonomous machine behavior across those who profit from and enable the technology, without requiring a definitive answer to the metaphysical question of machine personhood.
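A short numerical sketch may make the fund formula concrete. All figures below are invented for illustration: the stakeholder names follow the examples given above, but the weights $\alpha_i$ and contributions $P_i$ are hypothetical and carry no empirical claim. The function name `compensation_fund` is likewise an assumption of this sketch.

```python
from typing import Mapping, Tuple


def compensation_fund(contributions: Mapping[str, Tuple[float, float]]) -> float:
    """Compute C = sum_i alpha_i * P_i.

    Each value is a pair (alpha_i, P_i): the weighting factor and the
    financial contribution of stakeholder group i.
    """
    return sum(alpha * payment for alpha, payment in contributions.values())


# Hypothetical weights and annual contributions (arbitrary currency units).
example = {
    "manufacturers": (1.0, 4_000_000.0),
    "software_developers": (0.5, 2_000_000.0),
    "hospitals": (0.25, 1_000_000.0),
}

fund = compensation_fund(example)

if __name__ == "__main__":
    print(f"Fund size C = {fund:,.0f}")
```

With these invented inputs the weighted terms are 4,000,000, 1,000,000, and 250,000, so the fund totals 5,250,000. The point of the weights is that a stakeholder with more benefit from, or influence over, the AI's risk profile has its contribution counted more heavily.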

| Stage | Legal Nature of Medical Robot | Core Liability Challenge | Proposed Liability Mechanism |
|---|---|---|---|
| Strong AI | Autonomous Agent (Personhood Debated) | Decoupling from human control; identifying a responsible “actor.” | 1. Grant of Limited Legal Personality, or 2. Denial of Personhood + Risk-Beneficiary Pooling (Mandatory Insurance & Central Fund) |

In conclusion, the tort liability for medical robot malfunctions cannot be static. It must evolve in lockstep with the technology’s growing autonomy. Our legal frameworks must be anticipatory and adaptive. For the present and near future (Weak and Medium AI), we can and must refine the application of existing product liability and medical malpractice doctrines, with the “Reasonable Physician Standard” serving as a crucial pivot point for determining human duty. The allocation formulas and tables provided offer a schematic guide for this refinement. As we look toward the horizon of Strong AI, the law must engage in proactive design—whether through creating new categories of electronic personhood or, as I find more pragmatic, through constructing sophisticated, pre-emptive risk-pooling mechanisms. The goal is unwavering: to foster the immense therapeutic potential of the medical robot while constructing a resilient and just system that guarantees redress for harm, regardless of how intelligent the machine causing it may become.