The integration of Artificial Intelligence (AI) with robotics has catalyzed a paradigm shift in modern medicine, finding profound applications in clinical treatment, surgical procedures, and postoperative rehabilitation. This synergy, often embodied in the form of AI-powered medical robots, is a cornerstone of the rapidly advancing field of smart healthcare. However, the increasing autonomy and complexity of these systems introduce significant legal challenges, particularly concerning liability when accidents occur. The traditional legal frameworks for accident liability, predicated on clear human agency and fault, struggle to accommodate incidents involving AI medical robots. The confluence of multiple development entities, opaque algorithmic decision-making, and novel human-machine interaction models renders the identification of accident causes, the determination of wrongful acts, and the allocation of responsibility exceedingly complex. This necessitates a critical examination of the existing legal regime and a forward-looking approach to liability determination.

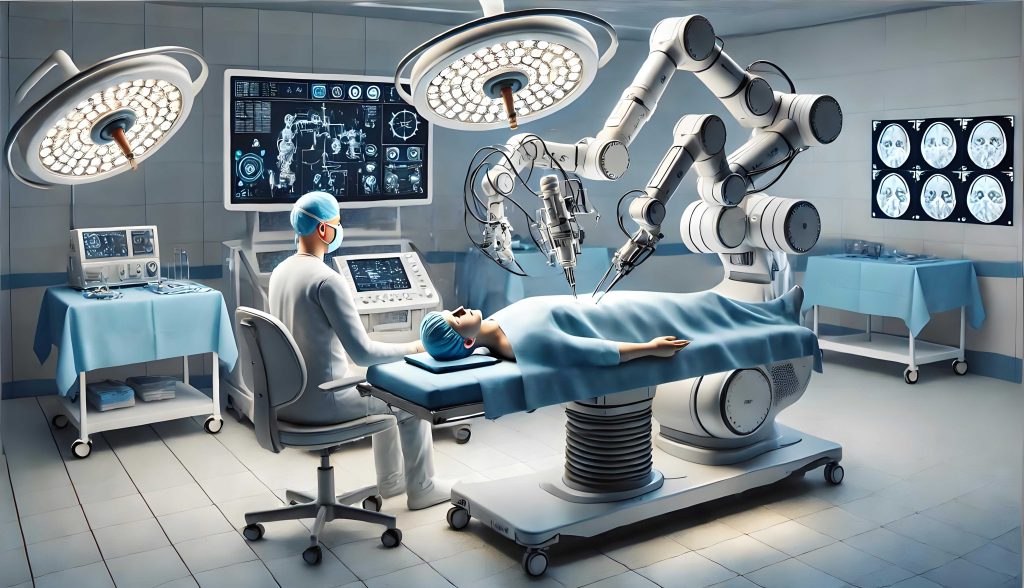

The clinical deployment of AI medical robots is diverse and expanding. Broadly, they can be categorized into three primary types based on application: surgical, rehabilitative, and assistive robots. AI surgical robots, like the widely adopted “Da Vinci” system, enhance precision in minimally invasive procedures. AI rehabilitation robots leverage theories of neuroplasticity to provide adaptive, interactive therapy for functional recovery. AI assistive robots encompass systems for diagnostic imaging analysis, radiotherapy, and logistical support within hospitals. Their technical hallmarks are distinct from traditional medical devices: Artificial Intelligence enables machine learning and algorithmic decision-making; Human-Machine Hybrid Control creates a shared agency where neither human nor machine has absolute control; and Remote Telesurgery capabilities decouple the surgeon’s physical location from the patient. These very features, while driving efficacy, generate novel legal risks that destabilize conventional liability principles centered on clear human error and product malfunction.

The core challenge for traditional tort liability systems lies in their foundational assumptions. They are designed to adjudicate responsibility where a natural person’s fault is identifiable or where a static product has a manufacturing defect. The AI medical robot, with its capacity for autonomous action within a hybrid-control framework, disrupts this logic. The dilemma manifests in three critical dimensions: the ambiguity of the liable subject, the inapplicability of standard liability categories, and the difficulty in apportioning blame.

First, identifying the liable subject is problematic. Should the medical robot itself bear legal personhood? While some argue for a “qualified legal personality” akin to corporations, a more coherent view maintains its status as a legal object—a sophisticated tool. Its lack of independent assets for compensation and its inherent dependence on human design and context support this object-status. More pressingly, the role of the designer—the entity responsible for the AI algorithms and software ecosystem—is legally obscure. Traditional product liability often focuses on the manufacturer, but with AI systems, the designer’s choices in data training and algorithmic logic can be the primary source of failure. Their omission from standard liability frameworks creates a significant accountability gap.

Second, classifying the appropriate type of liability is fraught. Standard options each present major shortcomings when applied to accidents involving an AI medical robot.

| Traditional Liability Type | Application Dilemma for AI Medical Robots |
|---|---|
| Medical Malpractice Liability | Presupposes a human healthcare provider-patient relationship and human fault. It struggles where the medical robot acts with significant autonomy or where the error stems from an opaque algorithm. |
| Product Liability | Requires proving a “defect.” Algorithmic defects, especially those arising from biased training data or unforeseen machine learning outcomes, are difficult to define and evidence against existing “reasonable expectation” or regulatory standards, which are often absent for AI. |
| Liability for Ultrahazardous Activities | Could serve as a residual category but is ambiguous. It is unclear if using an AI medical robot constitutes an “ultrahazardous activity,” and this framework typically exempts producers and designers, focusing only on the user/operator, which may be inequitable. |

Third, apportioning responsibility is complex due to the intermingling of human and machine fault. The cause of an accident is rarely singular. It could be a combination of a surgeon’s misjudgment, a sensor failure, an algorithmic error in interpreting real-time data, and a latency issue in remote transmission. Disentangling the causal contribution of each factor (its “causal potency”) is a formidable, often forensically impossible, task under traditional rules, which assume that degrees of fault and causal contribution can be clearly compared.

A principled response requires a typological analysis of accident causation. We can categorize the proximate causes of failure in AI medical robot operations:

| Causation Type | Description & Examples | Traditional Rule Fit |
|---|---|---|
| Purely Human Fault | Error by the user (e.g., wrong input), maintenance staff, or hospital system failure. Designer/producer negligence in coding or manufacturing also falls here, though they are often not direct “users.” | Fits medical malpractice or standard negligence/product liability, though designer liability is often a gap. |
| Purely Algorithmic Failure (“AI Error”) | 1. Generic Software Bug: Crash, non-response. 2. ML/Algorithmic Defect: Bias in training data leads to discriminatory diagnosis; failure in a novel clinical scenario. 3. Rational “Choice”: Robot executes a pre-programmed harm-minimization protocol causing unavoidable injury. | Poor fit. Product liability is challenging due to defect definition. The “rational choice” scenario has no human mental state to assign fault. |
| Hybrid Human-Machine Fault | The most likely scenario. A chain of events where human error (e.g., inadequate monitoring) and machine error (e.g., misleading sensor output) interact synergistically to cause harm. | Greatest dilemma. Traditional rules require clear apportionment, which is often technologically infeasible, leading to denial of justice or unfair blame-shifting. |

This typology reveals that a significant class of accidents—those involving core AI failures or hybrid causation—lies outside the effective reach of traditional law. Therefore, constructing a bespoke liability framework for AI medical robot accidents is imperative. This framework should be built on three pillars: clarifying legal statuses, defining applicable liability rules, and establishing fair burden-shifting mechanisms.

Pillar 1: Clarify the Legal Status of Involved Parties. The AI medical robot should be conclusively defined as a legal object—a high-risk medical product. Concurrently, the designer must be elevated to a co-responsible position alongside the manufacturer. Their liability should be joint and several for defects originating in the software/algorithmic ecosystem, just as the manufacturer is liable for hardware defects. This can be modeled as:

$$ L_{\text{producer}} + L_{\text{designer}} \rightarrow \text{Liability}_{\text{product}} $$

where a product defect triggers the combined liability of both entities.

Pillar 2: Define a Multi-Track Liability System. Different causation types should trigger different liability rules:

- Medical Malpractice (Organizational Fault): Apply when the healthcare provider’s system of using the medical robot is at fault (e.g., lack of training, failure to supervise). The hospital bears vicarious liability. The standard shifts from individual doctor negligence to organizational failure in integrating the AI tool safely.

- Strict Product Liability for Producers/Designers: Apply for defects. The definition of “defect” must be adapted. A medical robot’s algorithm is defective if it fails to perform with the safety level a “reasonably advanced alternative design” could have achieved, or if it creates an unreasonable, foreseeable risk not outweighed by its utility. The “general rational person” technical safety standard from traffic law can serve as an analogy here.

- Residual Strict Liability for Ultrahazardous Use: For certain high-risk applications (e.g., autonomous robotic brain surgery), using the AI medical robot could be deemed an ultrahazardous activity. This strict liability would fall on the hospital only if the product itself is found non-defective, serving as a final recourse for the injured patient.
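
The three tracks above can be read as a simple routing rule from findings to liable parties. The following Python sketch is illustrative only: the names `Track`, `AccidentFindings`, and `applicable_tracks` are hypothetical, and the code assumes that the residual track is tested solely against the "non-defective product" condition stated above. Whether it should also require the absence of organizational fault is not settled by the text.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Track(Enum):
    """The three liability tracks of the proposed framework (hypothetical names)."""
    ORGANIZATIONAL_MALPRACTICE = auto()
    STRICT_PRODUCT_LIABILITY = auto()
    RESIDUAL_ULTRAHAZARDOUS = auto()


@dataclass(frozen=True)
class AccidentFindings:
    """Findings a court is assumed to have already made about an accident."""
    organizational_fault: bool   # e.g. lack of training, failure to supervise
    product_defect: bool         # hardware or software/algorithmic defect
    high_risk_application: bool  # e.g. autonomous robotic brain surgery


def applicable_tracks(findings: AccidentFindings) -> dict[Track, list[str]]:
    """Map findings to the liability tracks they trigger and the parties liable."""
    tracks: dict[Track, list[str]] = {}

    # Track 1: the hospital answers for organizational failure.
    if findings.organizational_fault:
        tracks[Track.ORGANIZATIONAL_MALPRACTICE] = ["hospital"]

    # Track 2: producer and designer answer jointly for a defective product.
    if findings.product_defect:
        tracks[Track.STRICT_PRODUCT_LIABILITY] = ["producer", "designer"]
    # Track 3: residual strict liability applies only when the product is
    # found non-defective and the application is high-risk (assumption: no
    # further precondition, following the text literally).
    elif findings.high_risk_application:
        tracks[Track.RESIDUAL_ULTRAHAZARDOUS] = ["hospital"]

    return tracks
```

For instance, `applicable_tracks(AccidentFindings(False, False, True))` selects only the residual track against the hospital, whereas a finding of product defect in the same high-risk setting routes the claim to the producer and designer instead.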

Pillar 3: Rebalance the Evidentiary Burden. Given the “black box” nature of AI and the defendant’s superior access to technical data, procedural fairness demands a shift in the burden of proof.

- Rebuttable Presumption of Causation: When a plaintiff demonstrates a “gross malfunction” of the AI medical robot (prima facie evidence of a defect) and a consequent injury, causation should be presumed. The burden then shifts to the producer/designer to prove the malfunction did not cause the harm.

- Res Ipsa Loquitur / Apparent Proof: In medical contexts, if an injury occurs that ordinarily would not happen without negligence during a procedure involving an AI medical robot, negligence can be inferred. The defendant must then prove specific facts absolving them.

- Liability for Evidence Spoliation: If a healthcare provider or manufacturer intentionally withholds or alters log data from the medical robot, courts should infer the facts alleged by the patient regarding the defect or error.

The allocation of final responsibility among multiple liable parties (e.g., hospital and producer) in hybrid-fault cases can be guided by principles of equitable contribution. Where fault cannot be disaggregated, they should be held jointly and severally liable. A right of contribution among them can be based on their settled share of responsibility or, if indeterminable, equally. This can be conceptually framed as finding the proportional responsibility $p_i$ for each party $i$ such that:

$$ \sum_{i=1}^{n} p_i = 1 $$

where $p_i$ is based on comparative fault and causal potency, and total damages $D$ are owed to the plaintiff such that each party is liable for $p_i \cdot D$, with joint and several liability applying where $p_i$ cannot be determined.
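
The arithmetic behind this allocation can be sketched in a few lines of Python. This is a minimal illustration, not a legal formula: the function names, the party labels, and the damages figure are hypothetical, and the fault weights are assumed to have been fixed by a court or expert beforehand. When no weights are available, the sketch falls back to equal shares for the internal right of contribution, as described above; the plaintiff's ability to claim the full amount from any jointly liable party is unaffected by these internal shares.

```python
from fractions import Fraction


def contribution_shares(parties, fault_weights=None):
    """Return the share p_i for each party, with all shares summing to 1.

    fault_weights maps each party to a non-negative weight reflecting
    comparative fault and causal potency. If it is None (shares cannot
    be determined), every party receives an equal share.
    """
    if not parties:
        raise ValueError("at least one liable party is required")
    if fault_weights is None:
        return {p: Fraction(1, len(parties)) for p in parties}

    weights = {p: Fraction(fault_weights[p]) for p in parties}
    if any(w < 0 for w in weights.values()):
        raise ValueError("fault weights must be non-negative")
    total = sum(weights.values())
    if total == 0:
        raise ValueError("at least one fault weight must be positive")
    # Normalize so that the shares sum to exactly 1.
    return {p: w / total for p, w in weights.items()}


def contribution_amounts(shares, damages):
    """Return p_i * D for each party, given total damages D."""
    return {p: share * damages for p, share in shares.items()}


# Hypothetical example: fault is indeterminable among three parties.
equal = contribution_shares(["hospital", "producer", "designer"])

# Hypothetical example: the producer is found twice as responsible.
weighted = contribution_shares(
    ["hospital", "producer"], {"hospital": 1, "producer": 2}
)
amounts = contribution_amounts(weighted, 900_000)
```

Exact fractions are used instead of floating-point numbers so that the shares always sum to exactly 1, mirroring the constraint $\sum_{i=1}^{n} p_i = 1$.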

The future of AI in medicine is inextricably linked to robotics, with humanoid medical robots on the horizon. The legal system must evolve in tandem. A proactive, nuanced liability framework that clearly defines roles, adapts existing categories, and recalibrates procedural burdens is essential. It must balance the imperative to compensate victims fairly with the need to foster responsible innovation. The goal is not to stifle technological progress but to channel it safely and accountably, ensuring that the benefits of AI medical robots are not eclipsed by unaddressed legal risks. The principle must be clear: those who create, deploy, and benefit from the risk must also bear its legal consequences.