The paradigm of scientific research is undergoing a profound transformation driven by artificial intelligence (AI). Technologies such as Large Language Models (LLMs) and embodied AI robot systems are pushing the boundaries of what scientific instrumentation can achieve. In this context, we introduce a novel conceptual framework: the Scientific-Intention Driven Embodied AI Robot Solar Telescope (SIDEST). This system aims to redefine the solar telescope not as a passive data-collection tool, but as an active, intelligent partner in scientific discovery. SIDEST is designed to understand high-level scientific goals expressed in natural language, autonomously plan and execute complex observation campaigns, and iteratively evolve its strategies based on results, thereby closing the loop on the entire research process.

The core innovation of SIDEST lies in its three-layer architecture. Each layer is responsible for a distinct type of intelligent research loop, and together they form a human-machine collaboration framework for heliophysics.

1. Conceptual Framework: The Three-Layer Architecture

The SIDEST system is architected around three synergistic layers that translate abstract scientific curiosity into concrete discovery. This structure facilitates a seamless flow from idea generation to physical observation and, finally, to knowledge synthesis and system evolution.

1.1 The Scientific Intent Research and Argumentation Layer

This layer serves as the interface between the human scientist and the machine. Its primary function is to parse, understand, and deconstruct a natural language research intent (e.g., “Investigate the triggering mechanisms of solar flares” or “Analyze the correlation between active region magnetic field evolution and filament instability”). Leveraging a foundation model fine-tuned on solar physics literature and integrated with a structured knowledge graph, this layer employs a multi-agent debate framework to generate, critique, and refine a set of testable hypotheses.

The process can be formalized. Given a scientific intent \( I \), the layer scores the candidate hypotheses and selects the best-supported one, \( H^* \):

$$ H^* = \arg\max_{H \in \mathcal{H}} \left( \text{Novelty}(H) + \text{Feasibility}(H) + \text{Alignment}(H, I) \right) $$

where \( \mathcal{H} \) is the space of possible hypotheses generated by the AI agents, and the criteria are evaluated through simulated reasoning against physical laws and existing data. The output is not just an observation plan, but a justified research pathway.
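The selection rule above can be sketched in a few lines of Python. This is a minimal illustration, not the SIDEST implementation: the `Hypothesis` class, the `select_hypotheses` helper, and all numeric scores are hypothetical, and the three criteria are assumed to be pre-computed values in [0, 1] that are summed with equal weight, as in the formula. The first candidate text is taken from the example table in this section; the other two are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    """A candidate hypothesis with pre-computed criterion scores (assumed in [0, 1])."""
    text: str
    novelty: float
    feasibility: float
    alignment: float  # alignment with the scientific intent I


def score(h: Hypothesis) -> float:
    # Equal-weight sum, mirroring Novelty(H) + Feasibility(H) + Alignment(H, I).
    return h.novelty + h.feasibility + h.alignment


def select_hypotheses(candidates, k=1):
    """Return the k highest-scoring candidates (k=1 is the arg max)."""
    return sorted(candidates, key=score, reverse=True)[:k]


# Illustrative scores only; they are not results from the system.
candidates = [
    Hypothesis("Flares in this region are preceded by a threshold in magnetic "
               "gradient along the polarity inversion line.", 0.6, 0.9, 0.8),
    Hypothesis("Placeholder hypothesis B", 0.7, 0.4, 0.6),
    Hypothesis("Placeholder hypothesis C", 0.3, 0.8, 0.5),
]

best = select_hypotheses(candidates, k=1)[0]
print(best.text, round(score(best), 2))
```

Keeping `k` as a parameter allows several strong candidates to be passed on for debate rather than a single winner.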

| Agent Type | Primary Function | Example Output |
|---|---|---|
| Intent Parser | Natural Language Understanding of research goals. | Structured task: “Monitor AR 13075 for 48 hours, measuring vector magnetic field shear and H-alpha brightening.” |
| Hypothesis Generator | Generates candidate research hypotheses from intent and knowledge base. | Hypothesis: “Flares in this region are preceded by a threshold in magnetic gradient along the polarity inversion line.” |
| Simulation & Debate Agent | Tests hypothesis feasibility via physics-based simulators and agentic debate. | Validation score: 0.87. Resource estimate: 12 hours of telescope time. |
| Observation Planner | Translates the winning hypothesis into a detailed, executable observation schedule. | Schedule: {Telescope A: Stokes V+I sequence every 90s; Telescope B: H-alpha burst mode on trigger.} |

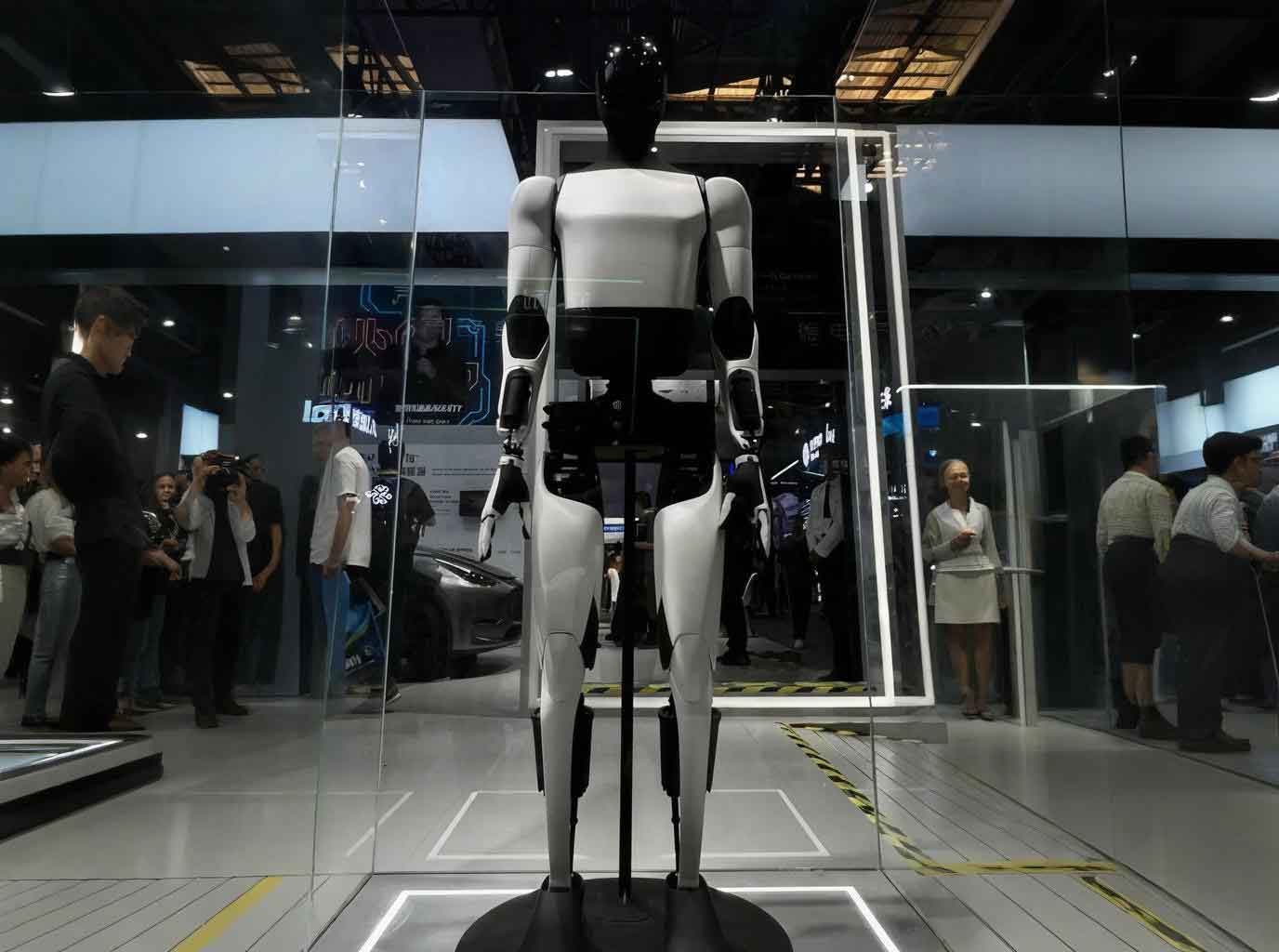

1.2 The Observation Realization Layer: The Embodied AI Robot in Action

This layer is where the abstract plan meets physical reality. It consists of the embodied AI robot telescopes themselves—hardware systems endowed with multi-modal perception, adaptive control, and the ability to execute complex tasks. A key feature is their dual perception: internal (telescope pointing, filter temperature, instrument status) and external (seeing conditions, cloud cover, wind).

The control logic for such an embodied AI robot telescope can be modeled as a constrained optimization problem. At each time step \( t \), the system receives a high-level task \( T_t \) from the upper layer and sensor state \( S_t \). It computes an optimal action \( A_t^* \) (e.g., adjust pointing, change filter, modify exposure):

$$ A_t^* = \pi_\theta(S_t, T_t) $$

where \( \pi_\theta \) is a policy model, potentially a hybrid of classical control and a learned network, trained to maximize data quality \( Q \) and task completion \( C \) while minimizing risk \( R \). With weights \( \alpha, \beta, \rho \) and a discount factor \( \gamma \), the training objective is:

$$ \pi_\theta = \arg\max_{\pi} \mathbb{E} \left[ \sum_{t} \gamma^t \left( \alpha Q(S_t, A_t) + \beta C(S_t, A_t) - \rho R(S_t, A_t) \right) \right] $$

This enables the telescope to dynamically adapt to changing conditions, such as compensating for tracking errors in real-time or re-prioritizing observations based on sudden solar activity.
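To make the trade-off in the objective concrete, the sketch below evaluates the discounted sum for a given trajectory and picks an action by one-step lookahead. It is a simplified stand-in for a learned policy, not the policy itself: the function names, the action names, and the (Q, C, R) estimates are all hypothetical values chosen for illustration.

```python
def step_value(q, c, r, alpha=1.0, beta=1.0, rho=1.0):
    """Per-step term of the objective: alpha*Q + beta*C - rho*R."""
    return alpha * q + beta * c - rho * r


def discounted_objective(steps, alpha=1.0, beta=1.0, rho=1.0, gamma=0.99):
    """Sum of gamma**t * (alpha*Q + beta*C - rho*R) over a trajectory.

    `steps` is a sequence of (Q, C, R) tuples, one per time step.
    """
    return sum(
        gamma ** t * step_value(q, c, r, alpha, beta, rho)
        for t, (q, c, r) in enumerate(steps)
    )


def greedy_action(estimates, alpha=1.0, beta=1.0, rho=1.0):
    """One-step lookahead: choose the action with the best immediate value.

    `estimates` maps an action name to its estimated (Q, C, R).
    This ignores future steps, so it only approximates the full objective.
    """
    return max(estimates, key=lambda a: step_value(*estimates[a], alpha, beta, rho))


# Hypothetical estimates while thin cloud is approaching.
estimates = {
    "keep_observing": (0.8, 0.5, 0.1),
    "observe_through_cloud": (0.3, 0.5, 0.6),
    "close_dome": (0.0, 0.0, 0.0),
}

print(greedy_action(estimates, rho=2.0))   # moderate risk aversion
print(greedy_action(estimates, rho=20.0))  # strong risk aversion
```

Raising \( \rho \) shifts the choice toward the safe action, which is how the risk term lets the same policy structure behave conservatively when hardware is at stake.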

1.3 The Evaluation and Evolution Layer

The final layer closes the research loop. It assesses whether the collected data validates the initial hypothesis and satisfies the original scientific intent. This is performed by analysis agents that process the data, generate findings, and produce a draft research report. Crucially, a meta-review agent critically evaluates the entire process—from hypothesis generation to data quality. Its feedback is used to refine the system’s models and strategies.

The evolution is driven by a form of online reinforcement learning. The system’s performance \( P \) on fulfilling intent \( I \) is evaluated, creating a reward signal \( r \). This reward is used to update the parameters of relevant models (e.g., the hypothesis generator \( \phi \), the observation planner \( \psi \)):

$$ (\phi_{\text{new}}, \psi_{\text{new}}) \leftarrow (\phi_{\text{old}}, \psi_{\text{old}}) + \eta \, \nabla_{(\phi, \psi)} J(r) $$

where \( J \) is an objective function and \( \eta \) is a learning rate. This creates a self-improving system where successful strategies are reinforced, and failures prompt exploration of new approaches, embodying a form of automated scientific reasoning.
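The update rule can be illustrated with a toy gradient-ascent loop. The text does not specify \( J \), so this sketch assumes one: the planner parameters \( \psi \) are logits of a softmax over two hypothetical observation strategies, and \( J \) is the expected reward under that softmax. The reward values are invented for illustration, and the exact gradient is used instead of sampled rewards to keep the example deterministic.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]


def expected_reward(logits, rewards):
    """Assumed objective J: expected reward under the softmax policy."""
    return sum(p * r for p, r in zip(softmax(logits), rewards))


def gradient_step(logits, rewards, eta):
    """One update: psi_new = psi_old + eta * grad J.

    For a softmax policy, dJ/dpsi_j = p_j * (r_j - J).
    """
    probs = softmax(logits)
    j = sum(p * r for p, r in zip(probs, rewards))
    grad = [p * (r - j) for p, r in zip(probs, rewards)]
    return [x + eta * g for x, g in zip(logits, grad)]


psi = [0.0, 0.0]          # start with no preference between strategies
rewards = [0.2, 0.9]      # hypothetical mean reward of each strategy

initial_j = expected_reward(psi, rewards)
for _ in range(200):
    psi = gradient_step(psi, rewards, eta=0.5)

probs = softmax(psi)
print([round(p, 3) for p in probs], round(expected_reward(psi, rewards), 3))
```

In this toy setting the probability mass moves toward the strategy with the higher reward, which is the "successful strategies are reinforced" behavior described above. A real system would estimate the gradient from noisy outcomes and would need safeguards against overfitting to a few observation campaigns.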

2. Core Enabling Technologies and System Integration

The realization of SIDEST hinges on the integration of several advanced technologies, converging around the central theme of an embodied AI robot for science.

2.1 The Embodied AI Robot Telescope Platform

The physical platform is a telescope transformed into an embodied AI robot. This requires:

- Multi-modal Sensing: A sensor suite including guide cameras, position encoders, thermal sensors, spectro-polarimeters, all-sky cameras, and meteorological stations.

- Skill Abstraction: Low-level hardware actions (e.g., `slew_to(ra, dec)`, `set_filter(lambda)`, `acquire_frame(exptime)`) are encapsulated as callable tools or skills via protocols like MCP (Model Context Protocol).

- Hierarchical Control: A high-level LLM-based agent reasons about the task and calls these skills, while a low-level real-time controller ensures precise, stable execution. The control law for a critical subsystem like pointing can be expressed as:

$$ u(t) = K_p e(t) + K_i \int_0^t e(\tau) d\tau + K_d \frac{de(t)}{dt} + f_{NN}(s(t)) $$

where \( e(t) \) is the pointing error and the classic PID terms are augmented by a neural network term \( f_{NN} \), evaluated on the system state \( s(t) \), that compensates for non-linear disturbances and is learned from the embodied AI robot's interaction history.
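A discrete-time version of this control law might look like the sketch below. The class name `AugmentedPID`, the gains, and the compensator are hypothetical; in particular, a plain Python callable stands in for the learned term \( f_{NN} \), since the network itself is not described here.

```python
from typing import Callable, Optional, Sequence


class AugmentedPID:
    """Discrete PID controller plus an additive state-dependent compensator.

    Implements u = Kp*e + Ki*integral(e) + Kd*de/dt + f(s), with the
    integral and derivative approximated at a fixed time step `dt`.
    """

    def __init__(self, kp: float, ki: float, kd: float, dt: float,
                 compensator: Callable[[Sequence[float]], float] = lambda s: 0.0):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.compensator = compensator  # stand-in for the learned f_NN
        self._integral = 0.0
        self._prev_error: Optional[float] = None

    def update(self, error: float, state: Sequence[float]) -> float:
        # Rectangular (backward Euler) approximation of the integral term.
        self._integral += error * self.dt
        # Backward difference for the derivative; zero on the first call.
        if self._prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self._prev_error) / self.dt
        self._prev_error = error
        return (self.kp * error
                + self.ki * self._integral
                + self.kd * derivative
                + self.compensator(state))


# Hypothetical compensator: a linear correction on the first state variable.
ctrl = AugmentedPID(kp=2.0, ki=0.5, kd=0.1, dt=0.1,
                    compensator=lambda s: 0.3 * s[0])
u1 = ctrl.update(error=1.0, state=[1.0])
u2 = ctrl.update(error=0.5, state=[0.0])
print(round(u1, 3), round(u2, 3))
```

Keeping the compensator as an additive term means the PID loop still provides a well-understood baseline if the learned component is disabled or returns zero.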

2.2 The Multi-Agent Cognitive Architecture

The “brain” of SIDEST is a society of specialized AI agents, orchestrated to mimic a research team:

| Agent | Role | Key Technology |
|---|---|---|
| Intent Driver | Interfaces with scientist, clarifies goals. | LLM with Retrieval-Augmented Generation (RAG). |
| Research Scientist Agent | Generates and debates hypotheses. | LLM fine-tuned on solar physics, debate frameworks. |
| Embodied AI Robot Controller | Plans and executes physical observations. | LLM with tool-use, integrated with telescope control API. |
| Data Analyst Agent | Processes data, runs analysis pipelines. | Vision-language models, pre-trained solar feature detectors. |
| Meta-Review & Evolution Agent | Evaluates entire process, updates strategies. | Reinforcement Learning, causal reasoning modules. |

The workflow is not linear but dynamic, with agents collaborating and competing through a structured “scientific debate” to arrive at the most robust research path.

2.3 Knowledge Foundation and Continuous Learning

SIDEST is grounded in domain knowledge and learns continuously:

- Knowledge Base: A RAG system built on solar physics textbooks, research papers, and historical observation logs provides factual grounding and reduces model “hallucination.”

- Foundation Models: Domain-adapted LLMs and vision models (e.g., for flare prediction, sunspot classification) serve as the core perceptual and reasoning engines.

- Evolution Loop: The system employs online learning where new observations and research outcomes are fed back to fine-tune the agents’ policies and the underlying models, as formalized in the update equation in Section 1.3.

3. Proof-of-Concept Validation: An Embodied AI Robot for Precision Control

To validate the core principles of SIDEST—particularly intent understanding, autonomous research, and physical embodiment—we developed a minimal prototype in the domain of precision temperature control, a critical subsystem for solar telescope birefringent filters. This prototype acts as an embodied AI robot engineer.

System Design: The hardware consisted of a thermal stage with a heater and a high-precision temperature sensor. The low-level PID controller was disabled, leaving only basic actuation and sensing. The control was managed by an AI agent system with access to this hardware via tool APIs.

Agent Workflow:

1. Perception Agent: Collected temperature data \( T_{actual}(t) \) and calculated error \( e(t) = T_{setpoint} - T_{actual}(t) \).

2. Research Agent: Queried local and online resources (datasheets, control theory textbooks, academic papers) to understand thermal system dynamics and advanced control strategies such as Model Predictive Control (MPC).

3. Design Agent: Synthesized findings and proposed a control strategy. For example, it might derive a discrete-time control law for a system approximated by a first-order model:

$$ u[k] = K \left( e[k] + \frac{T_s}{T_i} \sum_{j=0}^{k} e[j] + T_d \frac{e[k]-e[k-1]}{T_s} \right) + \delta_{MPC} $$

where \( \delta_{MPC} \) is a compensating term from a simple predictive model.

4. Code Generation & Test Agent: Wrote, simulated, and safely deployed Python code implementing the strategy.

5. Evaluation Agent: Monitored performance (e.g., settling time, overshoot, steady-state error \( \sigma_{T} \)) and triggered iterative re-design if goals were not met.
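The design and evaluation steps above can be sketched as a small simulation. This is not the code the prototype generated: it is a minimal illustration that applies the discrete control law from step 3 to an assumed first-order thermal model and then computes the metrics named in step 5. The plant parameters (`tau`, `gain`, `ambient`), the controller gains, and the tolerance band are all hypothetical, \( \delta_{MPC} \) is set to zero, and actuator limits and anti-windup are omitted.

```python
def simulate_pid(setpoint=32.7, ambient=25.0, tau=60.0, gain=1.0,
                 K=2.0, Ti=60.0, Td=0.0, Ts=1.0, steps=900, delta_mpc=0.0):
    """Simulate u[k] = K*(e[k] + Ts/Ti*sum(e) + Td*(e[k]-e[k-1])/Ts) + delta_mpc
    on the assumed plant tau * dT/dt = -(T - ambient) + gain * u."""
    temp = ambient
    error_sum = 0.0
    prev_error = setpoint - temp  # makes the first derivative term zero
    temps = []
    for _ in range(steps):
        error = setpoint - temp
        error_sum += error
        u = K * (error
                 + (Ts / Ti) * error_sum
                 + Td * (error - prev_error) / Ts) + delta_mpc
        prev_error = error
        # Forward-Euler step of the first-order thermal model.
        temp += (Ts / tau) * (-(temp - ambient) + gain * u)
        temps.append(temp)
    return temps


def evaluate(temps, setpoint, Ts=1.0, band=0.05, tail=100):
    """Compute settling time, overshoot, and late-time peak-to-peak variation."""
    outside = [k for k, t in enumerate(temps) if abs(setpoint - t) > band]
    # Time after which the temperature stays inside the band; equals the
    # full duration if the band is never reached.
    settling_time = (outside[-1] + 1) * Ts if outside else 0.0
    overshoot = max(0.0, max(temps) - setpoint)
    tail_temps = temps[-tail:]
    return {
        "settling_time_s": settling_time,
        "overshoot_C": overshoot,
        "peak_to_peak_C": max(tail_temps) - min(tail_temps),
    }


temps = simulate_pid()
metrics = evaluate(temps, setpoint=32.7)
print(metrics)
```

An evaluation agent could compare such metrics against the stated goal and trigger a redesign when they fall short. The noise-free simulation here says nothing about the stability reachable on real hardware, where sensor noise and ambient drift dominate.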

Result: Starting from only a high-level goal (“Achieve and maintain 32.7°C with stability better than ±0.002°C”), the AI engineer system autonomously researched, designed, and implemented a control solution that achieved a peak-to-peak stability of ±0.0015°C within three weeks. This demonstrates the feasibility of an embodied AI robot system understanding an intent, conducting research, and physically realizing a solution—a microcosm of the SIDEST vision.

4. Discussion and Future Trajectory

SIDEST proposes a fundamental shift from telescopes as instruments to telescopes as embodied AI robot scientists. The three-layer architecture enables a new paradigm of human-machine collaborative research, where scientists focus on posing profound questions and judging the significance of results, while the embodied AI robot system handles the intensive labor of planning, observing, and iterating.

The challenges ahead are significant. They include ensuring the robustness and safety of embodied AI robot control in complex environments, curating high-quality domain-specific datasets for training, mitigating model hallucinations in critical reasoning chains, and addressing the ethical implications of autonomous discovery. However, the prototype demonstrates a viable technical path.

Looking forward, SIDEST paves the way for AI-powered networked observatories, where fleets of embodied AI robot telescopes collaborate globally to respond to transient astrophysical events. It represents a step towards a future where the scientific method itself is augmented, making systematic, data-intensive discovery more accessible and accelerating our understanding of the Sun and the universe beyond.