From my perspective as a researcher and practitioner in the field of library science, I have observed the profound impact of artificial intelligence on public cultural services. In recent years, embodied artificial intelligence (EAI), particularly embodied AI robots, has emerged as a cutting-edge frontier, garnering global attention and experiencing rapid development. The year 2024 marked a pivotal moment, often termed the “first year of embodied intelligence,” with widespread applications across sectors like transportation, manufacturing, healthcare, education, and culture. In public cultural domains, including libraries, embodied AI robots have demonstrated considerable potential for enriching service content and innovating service models. During the 2025 Two Sessions in China, embodied intelligence was formally incorporated into the government work report as a strategic emerging industry, highlighting its national significance. This article explores, from my firsthand experience, the construction of a human-machine collaborative service model in libraries driven by embodied AI robots, aiming to bridge the “last meter” gap between digital and physical spaces.

Embodied intelligence, or “embodied AI,” refers to intelligent agents that generate smart behaviors through their “bodies” interacting with the physical environment. Its core lies in a “perception-reasoning-execution” closed-loop system, enabling real-time dynamic interaction with the physical world to build autonomous cognitive systems. The theoretical foundations date back to Rodney Brooks’ 1991 concept of “intelligence without representation,” which argued that intelligent behavior can arise directly from agent-environment interactions without relying on explicit internal representations of the world. Later, Rolf Pfeifer and Christian Scheier coined “embodied intelligence” in 2001, emphasizing the body’s role in shaping intelligence. Linda Smith’s embodiment hypothesis in 2005 further stressed that cognition emerges from sensorimotor activities during environmental interactions. With breakthroughs in large models, multimodal computing, and simulation training, embodied AI robots have evolved from theoretical research to practical applications, ushering in a new era of deep human-machine collaboration.

In libraries, the past decade has seen smart service research focused primarily on digital resources and virtual services—termed “disembodied intelligence”—leading to a service disconnect between virtual and physical spaces. Embodied AI robots, however, offer a transformative path by integrating physical forms (e.g., robots, smart devices) that perceive and act in the real world. Their value lies in enabling direct interaction with users, resources, and environments, thus resolving the “last meter” issue. By providing continuous perception, decision-making, and creativity, embodied AI robots empower libraries to become dynamic, organic entities. Moreover, they facilitate natural and efficient human-machine collaboration, handling repetitive, high-physical-demand tasks, enabling 24/7 services, and supporting data-driven operations. This allows librarians to shift focus to complex decision-making, in-depth consultations, creative design, and knowledge creation—tasks uniquely suited to human expertise.

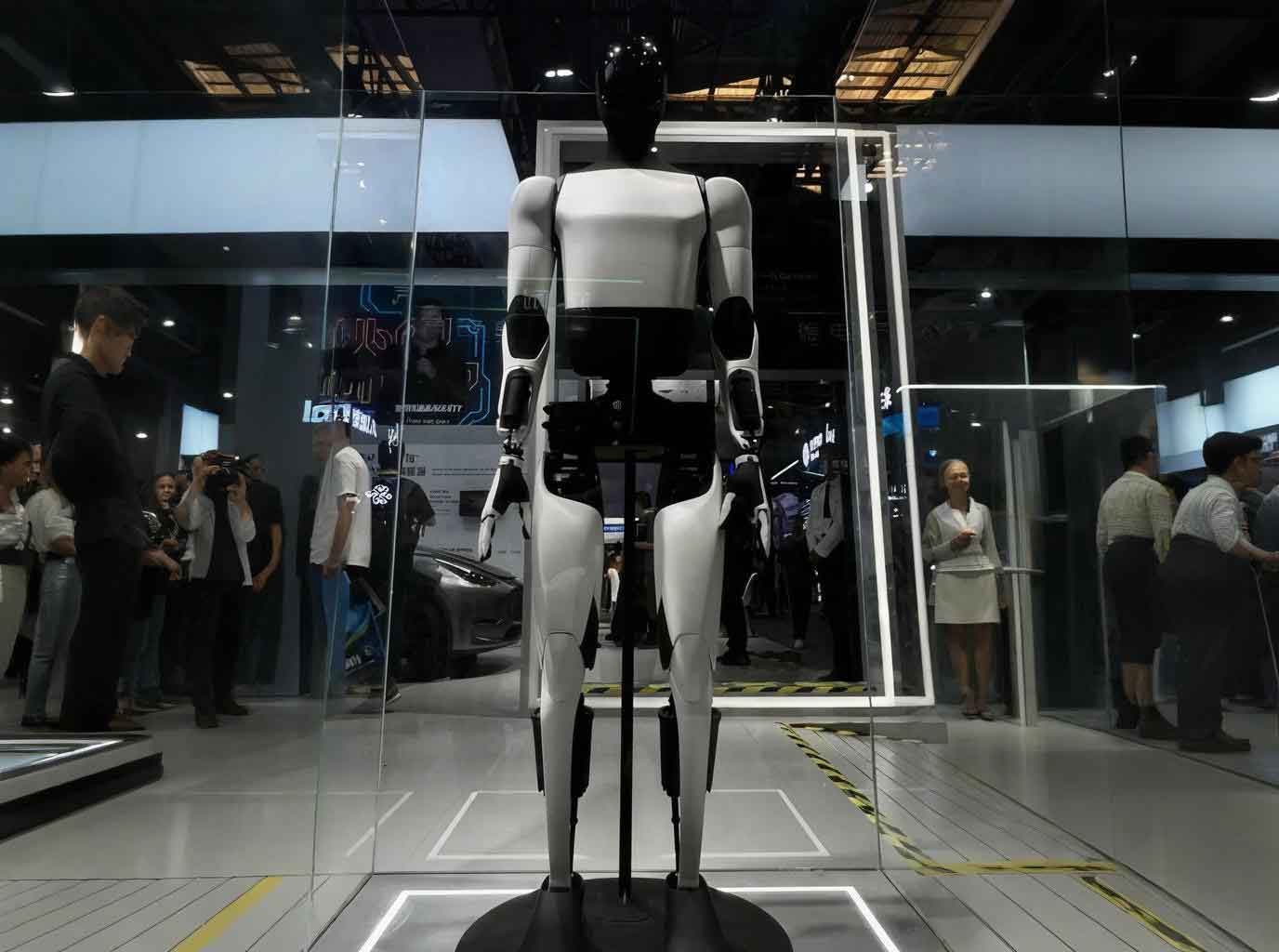

Current applications of embodied AI robots in libraries can be categorized into humanoid (or human-like) robots and non-humanoid robots. Humanoid robots, with their anthropomorphic forms, enhance user acceptance and interaction comfort. For instance, in Shanghai Library, the “图小灵” robot assists with book borrowing, navigation, inquiries, and even delivers books to users’ seats. In the United States, Westport Library uses humanoid robots for programming demonstrations and multilingual interactions. Pepper, an interactive emotional robot, is deployed in various libraries for emotion recognition and engagement, while NAO robots in Germany provide guided explanation services and support cultural activities. Non-humanoid robots include AGV transport robots for automated book handling, such as in Foshan Shunde Library; intelligent inventory robots for stocktaking, like the aerial librarian robot using computer vision; and outdoor circulation robots for mobile lending services, as seen in Beijing City Library. These embodied AI robots are gradually evolving toward humanoid designs, driven by policy support and technological advances.

However, from my observation, these applications remain in an exploratory stage as “intelligent tools,” with limitations hindering their full potential. Embodied AI robots often exhibit weak intelligence, requiring human intervention for complex tasks; human-robot interaction can be rigid, with difficulties in understanding nuanced contexts; and off-the-shelf products may not fully align with library-specific needs. Safety risks, such as physical collisions or data privacy breaches, also pose challenges, as highlighted by incidents like a chess-playing robot injuring a child in Moscow. Thus, a systematic “human-machine collaborative service model” is essential to harness the true potential of embodied AI robots and achieve a harmonious library ecosystem.

I propose a comprehensive model comprising three layers: Technical, Application, and Support, as summarized in the table below.

| Layer | Components | Key Functions |
|---|---|---|
| Technical Layer | Multimodal Perception Fusion, Digital Twin & Knowledge Graphs, Cloud-Edge Collaborative Platform | Foundation for data acquisition, processing, and decision-making |
| Application Layer | Perception-Decision-Action-Feedback Closed Loop, Resource Management, User Services, Activity Implementation, Space Management | Core service operations and human-robot collaboration |
| Support Layer | Librarian Role Transformation, Ethical & Safety Norms, Organizational Restructuring | Ensuring sustainable and responsible implementation |

The Technical Layer forms the backbone of the model. Multimodal perception fusion integrates data from diverse sensors, such as visual cameras for user behavior analysis, auditory microphones for voice interaction, environmental sensors for climate control, and RF/laser devices for book tracking. This fusion enhances the embodied AI robot’s environmental understanding. Mathematically, the perception process can be represented as a feature extraction function: $$ \mathbf{F} = \phi(\mathbf{X}_v, \mathbf{X}_a, \mathbf{X}_e, \mathbf{X}_r) $$ where $\mathbf{F}$ denotes the fused feature vector, and $\mathbf{X}_v, \mathbf{X}_a, \mathbf{X}_e, \mathbf{X}_r$ are inputs from visual, auditory, environmental, and RF/laser modalities, respectively. Digital twin technology creates a dynamic virtual replica of the library, enabling real-time monitoring and simulation. For example, the digital twin updates book locations and user flows based on sensor data, allowing predictive analytics. Knowledge graphs encode semantic relationships among resources, users, and domains, supporting intelligent recommendations. The cloud-edge collaborative platform optimizes computation: edge nodes handle low-latency tasks like robot navigation, while the cloud center manages large-scale data training and global scheduling. This can be modeled as a distributed optimization problem: $$ \min_{\theta} \sum_{i=1}^{N} \mathcal{L}(f(\mathbf{x}_i; \theta)) + \lambda \Omega(\theta) $$ where $\theta$ represents model parameters, $\mathcal{L}$ is the loss function for tasks like book demand prediction, and $\Omega$ regularizes for efficiency across edge and cloud resources.

The Application Layer operates through a “perception-decision-action-feedback” closed loop, driving continuous improvement. In perception, embodied AI robots and IoT devices collect real-time data on users, resources, and environments. Decision-making involves both autonomous robot decisions for local tasks (e.g., path planning using reinforcement learning: $$ \pi^* = \arg\max_\pi \mathbb{E}\left[\sum_{t=0}^{\infty} \gamma^t R(s_t, a_t) \mid \pi \right] $$ where $\pi$ is the policy, $R$ is the reward, and $\gamma$ is the discount factor) and librarian decisions for complex, value-driven scenarios. In action execution, embodied AI robots perform physical tasks (e.g., book delivery), while librarians handle in-depth consultations and creative activities. Feedback loops evaluate outcomes to refine algorithms and rules. This closed loop enables four key human-machine collaborative modes.
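To make the policy objective above concrete, the following is a minimal sketch rather than a description of any deployed system. It assumes a hypothetical one-dimensional corridor of shelf positions, deterministic moves, and a reward of 1 for reaching the goal cell. It uses value iteration, a dynamic-programming method that requires a known environment model, as a stand-in for a learned reinforcement-learning agent; the function name `value_iteration` and all parameters are illustrative.

```python
def value_iteration(n_cells, goal, gamma=0.9, tol=1e-9):
    """Return (values, policy) for a 1-D corridor with moves -1 / +1.

    Assumed (hypothetical) setup: deterministic moves clipped at the
    corridor ends, reward 1.0 on entering the goal cell, 0.0 otherwise.
    """
    actions = (-1, +1)
    values = [0.0] * n_cells

    def step(s, a):
        nxt = min(max(s + a, 0), n_cells - 1)
        reward = 1.0 if nxt == goal else 0.0
        return nxt, reward

    def q(s, a):
        # One-step lookahead: immediate reward plus discounted next value.
        nxt, reward = step(s, a)
        return reward + gamma * values[nxt]

    while True:
        delta = 0.0
        for s in range(n_cells):
            if s == goal:
                continue  # terminal state keeps value 0
            best = max(q(s, a) for a in actions)
            delta = max(delta, abs(best - values[s]))
            values[s] = best
        if delta < tol:
            break

    # Greedy policy with respect to the converged values.
    policy = {s: max(actions, key=lambda a: q(s, a))
              for s in range(n_cells) if s != goal}
    return values, policy


values, policy = value_iteration(n_cells=5, goal=4)
```

In this toy setting every non-goal cell ends up with the action `+1`, and the value of each cell decays by a factor of $\gamma$ per step away from the goal. A real robot would face a far larger state space and would typically learn the policy from interaction instead of enumerating states.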

First, in resource management, embodied AI robots enable holistic smart management. They automate inventory and delivery, linking physical books with digital resources. For instance, when a user borrows a book, the robot can recommend related e-books via knowledge graphs. Librarians oversee collection strategies and layout optimization. Second, user service is reshaped through embodied AI robots as mobile service fronts. They proactively identify needs via multimodal perception and provide basic consultation, with librarians stepping in for complex queries. This tiered network enhances accessibility and satisfaction. Third, activity implementation leverages embodied AI robots as versatile assistants. In storytelling sessions, they engage children as interactive companions; in conferences, they handle logistics like registration and recording. Librarians focus on content creation and community building. Fourth, space management uses digital twins and embodied AI robots for real-time monitoring and adjustment. Sensors track occupancy and environmental conditions, with AI models predicting trends to optimize comfort and safety. Librarians set macro-policies and respond to alerts.

The Support Layer ensures responsible and sustainable operation. Librarian role transformation is critical: they evolve into service designers, robot coordinators, and data analysts. For example, librarians might design workflows where an embodied AI robot triggers specific actions based on user dwell time. Ethical and safety norms address privacy concerns, such as anonymizing data from cameras and microphones, and physical safety protocols to prevent collisions. Organizational restructuring involves flattening hierarchies and creating new roles, like a “Robot Operations Manager,” to align with the collaborative model.

From my analysis, the integration of embodied AI robots in libraries faces challenges but offers promising prospects. Key issues include technical limitations in reasoning, high costs, and ethical dilemmas around data security. However, as AI advances, these hurdles can be progressively addressed. The human-machine collaborative model not only boosts efficiency but also fosters innovation, positioning libraries as dynamic smart spaces. In conclusion, embodied AI robots represent a paradigm shift, connecting physical, human, and virtual worlds. By embracing this technology, libraries can become perceptive, adaptive, and creative hubs, delivering continuous knowledge nourishment to society. As I envision it, the future of libraries lies in harmonious synergy between humans and embodied AI robots, driving service excellence.

To further illustrate the technical aspects, consider the multimodal fusion process in detail. The table below summarizes sensor types and their roles in library embodied AI robot systems.

| Modality Type | Sensor Examples | Data Collected | Library Application | Role in Embodied AI Robot |
|---|---|---|---|---|
| Visual | HD cameras, IR sensors | Images, video streams, thermal maps | User posture detection, book recognition, security surveillance | Enables vision-based navigation and interaction |
| Auditory | Microphone arrays | Audio signals, speech patterns | Voice commands, noise level monitoring, emergency alerts | Facilitates natural language processing and user engagement |
| Environmental | Temperature, humidity, CO₂ sensors | Scalar measurements (e.g., °C, % RH, ppm) | Climate control for preservation and comfort, air quality management | Supports autonomous environment regulation |
| RF/Laser | RFID readers, LiDAR | RF signals, 3D point clouds | Book tracking via RFID, spatial mapping for robot navigation | Ensures accurate localization and item management |

The perception fusion can be mathematically expressed using a weighted integration model: $$ \mathbf{P} = \sum_{i=1}^{M} w_i \cdot \psi_i(\mathbf{D}_i) $$ where $\mathbf{P}$ is the fused perception output, $w_i$ are weights assigned to each modality $i$, $\psi_i$ are processing functions (e.g., CNN for images, RNN for audio), and $\mathbf{D}_i$ is the raw data from modality $i$. This allows the embodied AI robot to construct a comprehensive environmental model.
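The weighted integration model can be sketched in a few lines of Python. This is an illustration only: the per-modality feature vectors below are hypothetical placeholders standing in for the outputs of the processing functions $\psi_i(\mathbf{D}_i)$ (no CNN or RNN is run here), the weights are arbitrary, and the sketch assumes all modalities have already been projected to feature vectors of equal length.

```python
def fuse(features, weights):
    """Weighted sum P = sum_i w_i * psi_i(D_i) over equal-length vectors.

    features: dict mapping modality name -> already-processed feature vector
    weights:  dict mapping modality name -> scalar weight w_i
    """
    if set(features) != set(weights):
        raise ValueError("features and weights must cover the same modalities")
    lengths = {len(vec) for vec in features.values()}
    if len(lengths) != 1:
        raise ValueError("all feature vectors must have the same length")
    fused = [0.0] * lengths.pop()
    for name, vec in features.items():
        for k, x in enumerate(vec):
            fused[k] += weights[name] * x
    return fused


# Hypothetical processed features, one vector per modality.
features = {
    "visual": [1.0, 0.0],
    "auditory": [0.0, 1.0],
    "environmental": [0.5, 0.5],
    "rf_laser": [1.0, 1.0],
}
# Hypothetical weights w_i; here they sum to 1, which the model does not require.
weights = {"visual": 0.4, "auditory": 0.3, "environmental": 0.2, "rf_laser": 0.1}

fused = fuse(features, weights)  # approximately [0.6, 0.5]
```

In practice the weights would more likely be learned or adapted to sensor reliability than fixed by hand.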

In decision-making, the embodied AI robot employs probabilistic reasoning to handle uncertainties. For instance, when determining user intent, it might use Bayesian inference: $$ P(I|O) = \frac{P(O|I) P(I)}{P(O)} $$ where $I$ represents the intent (e.g., “needs help finding a book”), $O$ is the observed data (e.g., user lingering near a shelf), and $P(I|O)$ is the posterior probability guiding the robot’s action. Librarians intervene when $P(I|O)$ falls below a confidence threshold, ensuring robust collaboration.
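As a worked illustration of the Bayesian update and the librarian hand-off, consider the sketch below. The intents, prior, likelihoods, and the 0.7 confidence threshold are all hypothetical values chosen for the example, not measurements from any library.

```python
def posterior(prior, likelihood):
    """Bayes' rule over a discrete set of intents.

    prior:      dict intent -> P(I)
    likelihood: dict intent -> P(O | I) for the single observation O
    """
    evidence = sum(prior[i] * likelihood[i] for i in prior)  # P(O)
    if evidence == 0:
        raise ValueError("observation has zero probability under all intents")
    return {i: prior[i] * likelihood[i] / evidence for i in prior}


def decide(prior, likelihood, threshold=0.7):
    """Pick the most probable intent; escalate to a librarian if unsure."""
    post = posterior(prior, likelihood)
    intent = max(post, key=post.get)
    handler = "robot" if post[intent] >= threshold else "librarian"
    return intent, post[intent], handler


# Hypothetical numbers: O = "user lingering near a shelf".
prior = {"needs_help": 0.3, "browsing": 0.7}
likelihood = {"needs_help": 0.8, "browsing": 0.2}

intent, confidence, handler = decide(prior, likelihood)
```

With these numbers, $P(O) = 0.3 \cdot 0.8 + 0.7 \cdot 0.2 = 0.38$, so $P(\text{needs help} \mid O) = 0.24 / 0.38 \approx 0.63$. That is the most probable intent but falls below the assumed threshold, so the case is routed to a librarian.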

The cloud-edge collaborative platform optimizes resource allocation. Let $C_e$ be the edge computing cost and $C_c$ the cloud cost. The total cost minimization for an embodied AI robot task can be formulated as: $$ \min_{x} (C_e \cdot x_e + C_c \cdot x_c) \quad \text{s.t.} \quad x_e + x_c = T, \quad \text{Latency} \leq L_{\text{max}} $$ where $x_e$ and $x_c$ are processing shares at edge and cloud, $T$ is the total task load, and $L_{\text{max}}$ is the maximum allowed latency. This ensures efficient computation for real-time responses.
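The formulation leaves the latency function unspecified. The sketch below therefore adds an assumption that is not in the text: latency is linear in the shares, $\text{Latency} = t_e x_e + t_c x_c$, with hypothetical per-unit latencies `t_e` and `t_c`. Under that assumption the problem is a one-variable linear program, so the optimum sits at an endpoint of the feasible interval for $x_e$ and can be found in closed form.

```python
def split_task(T, C_e, C_c, t_e, t_c, L_max):
    """Minimize C_e*x_e + C_c*x_c s.t. x_e + x_c = T, x_e, x_c >= 0,
    and (assumed linear) latency t_e*x_e + t_c*x_c <= L_max.

    Returns (x_e, x_c), or None if no split meets the latency bound.
    """
    lo, hi = 0.0, float(T)      # feasible interval for x_e
    base = T * t_c              # latency if everything runs in the cloud
    slope = t_e - t_c           # latency change per unit moved to the edge
    if slope == 0:
        if base > L_max:
            return None
    elif slope < 0:             # edge is faster: need enough work on the edge
        lo = max(lo, (base - L_max) / (-slope))
    else:                       # cloud is faster: cap the work on the edge
        hi = min(hi, (L_max - base) / slope)
    if lo > hi:
        return None
    # Cost is linear in x_e, so push x_e toward the cheaper side.
    x_e = hi if C_e < C_c else lo
    return x_e, T - x_e


# Hypothetical numbers: edge is faster but three times as expensive.
result = split_task(T=100, C_e=3.0, C_c=1.0, t_e=1.0, t_c=5.0, L_max=300.0)
```

With these illustrative values, the cheapest split that still meets the latency bound puts half the load on the edge: 50 units there and 50 in the cloud.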

Regarding safety, embodied AI robots must adhere to constraints. For collision avoidance, a potential field approach can be used: $$ F_{\text{rep}} = \sum_{j} k_r \cdot \frac{1}{d_j^2} $$ where $F_{\text{rep}}$ is the repulsive force from obstacles $j$, $k_r$ is a constant, and $d_j$ is the distance to obstacle $j$. This is integrated into the robot’s motion planning to prevent accidents.
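A minimal sketch of the repulsive term follows. The formula above gives only a magnitude; to use it in motion planning, the sketch assumes a 2-D workspace and directs each obstacle's contribution along the unit vector pointing from the obstacle to the robot. The `min_dist` clamp, which avoids division by zero at contact, is also an added assumption, as are the function and parameter names.

```python
import math


def repulsive_force(robot, obstacles, k_r=1.0, min_dist=1e-3):
    """Sum of 2-D repulsive forces with magnitude k_r / d_j**2 each.

    robot:     (x, y) position of the robot
    obstacles: iterable of (x, y) obstacle positions
    Each force points away from its obstacle (an assumed vector form of
    the scalar formula); distances are clamped at min_dist.
    """
    fx = fy = 0.0
    for ox, oy in obstacles:
        dx, dy = robot[0] - ox, robot[1] - oy
        d = max(math.hypot(dx, dy), min_dist)
        magnitude = k_r / d ** 2
        fx += magnitude * dx / d
        fy += magnitude * dy / d
    return fx, fy


# One obstacle 2 m to the right of the robot pushes it to the left.
force = repulsive_force(robot=(0.0, 0.0), obstacles=[(2.0, 0.0)], k_r=4.0)
```

A full potential-field planner would combine this with an attractive term toward the goal and usually ignores obstacles beyond a cutoff radius. Potential fields can also trap a robot in local minima, so they are generally paired with a higher-level planner.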

In terms of library service metrics, the impact of embodied AI robots can be quantified. For example, service efficiency improvement $\Delta E$ might be modeled as: $$ \Delta E = \alpha \cdot \log(1 + N_r) + \beta \cdot A_c $$ where $N_r$ is the number of embodied AI robots deployed, $A_c$ is the level of human-machine collaboration, and $\alpha, \beta$ are coefficients determined empirically. The logarithmic term implies diminishing returns from adding robots alone, while the collaboration term contributes linearly, which highlights that scaling robot integration pays off most when paired with deeper collaboration.
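The behavior of this model is easy to check numerically. In the sketch below the coefficient values are hypothetical placeholders (the text says they must be determined empirically), and $\log$ is taken to be the natural logarithm, which the formula does not specify.

```python
import math


def efficiency_gain(n_robots, collaboration, alpha, beta):
    """Delta E = alpha * log(1 + N_r) + beta * A_c (natural log assumed)."""
    if n_robots < 0:
        raise ValueError("n_robots must be non-negative")
    return alpha * math.log(1 + n_robots) + beta * collaboration


# Hypothetical coefficients; real values would come from fitting observed data.
ALPHA, BETA = 2.0, 1.0

# Marginal gain from each additional robot at a fixed collaboration level.
gains = [efficiency_gain(n, 0.5, ALPHA, BETA) for n in range(4)]
marginal = [b - a for a, b in zip(gains, gains[1:])]
```

Each successive entry of `marginal` is smaller than the last, reflecting the diminishing return built into the logarithmic term, whereas raising `collaboration` shifts $\Delta E$ by the same amount at every fleet size.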

Looking ahead, I believe embodied AI robots will evolve with more advanced cognitive capabilities, such as affective computing for emotional support and cross-modal learning for deeper environmental understanding. Libraries should invest in pilot projects, staff training, and ethical frameworks to harness this potential. By doing so, they can transform into truly intelligent spaces where embodied AI robots and humans co-create value, fostering a culture of innovation and accessibility for all users.